Type what you want to break: AI-assisted chaos engineering with Krkn

Learn how the Krkn scenario generator, an AI-assisted chaos engineering tool for Kubernetes, addresses the challenge of translating desired chaos tests.

A deep dive into the confidential containers solution on OpenShift bare metal, integrating TEEs into cloud-native platforms to provide hardware-backed workload isolation.

Learn how to deploy Hermes Agent, a self-improving AI agent with a learning loop, on OpenShift AI with GPU-accelerated vLLM model serving.

Explore new features in Red Hat build of Cryostat 4.2, including SQL query support for JFR analytics, async-profiler integration, and remote management for smart triggers.

Develop a Flask application using Red Hat hardened images and Red Hat build of

Prevent OOM crashes in controller-runtime operators. Learn how to filter your Kubernetes informer cache to stop ConfigMap-driven denial-of-service attacks.

Learn how the PTP operator integrates NTP for time synchronization, keeping system clocks accurate to within 1 millisecond even if GNSS fails.

Discover the changes in Red Hat OpenShift Container Platform system resource management, designed to improve node stability.

How to use a split disk configuration to solve disk space management issues, specifically in OpenShift clusters running large AI/ML workloads.

Learn practical troubleshooting steps for common debugging scenarios in OpenShift 4.20's image mode. Understand the three stages of the process: MachineOSConfig creation, MachineOSBuild creation and execution, and application of the new image to nodes. Discover what to watch for in each stage to keep your clusters running smoothly.

Scale agentic AI with Red Hat’s trusted software factory. Use Policy as Code and SBOMs to strengthen your development pipeline and manage software provenance.

Deploy confidential containers and CVMs for AI. Learn how the Red Hat build of Trustee automates attestation to protect patient data on OpenShift and RHEL.

Learn how Red Hat AI 3.4 uses EvalHub to orchestrate AI evaluations on Kubernetes. Scale frameworks like Garak and LightEval with built-in MLflow tracking.

Tekton is now a CNCF incubating project, aligning with the Kubernetes ecosystem to foster deeper collaboration and integration for cloud-native CI/CD.

Learn how to monitor and analyze the costs of your OpenShift clusters using the Red Hat Lightspeed Cost Management API. Retrieve cost data for every cluster, project, node, and more, and use filters to get the exact data you need.

Improve authentication for managing remote Red Hat OpenShift instances using Red Hat OpenShift GitOps and zero trust workload identity manager.

Learn how Red Hat Hybrid Cloud Console uses a single data layer to serve the access management interface at runtime, Storybook mocks during development, and a standalone CLI that seeds and cleanses real test environments.

Learn how the ObservabilityInstaller one-click installation simplifies the deployment of a production-ready distributed tracing stack on OpenShift.

Discover how OpenStack Services on OpenShift distributed zones isolate storage to separate failure domains for business resiliency and continuity.

Deploy multiple Red Hat OpenStack Services on OpenShift clusters using hosted control planes (HCPs) to achieve scalable isolation and efficiency.

Learn about our team's experience implementing a defense-in-depth safety architecture for AI agents using Llama Stack shields.

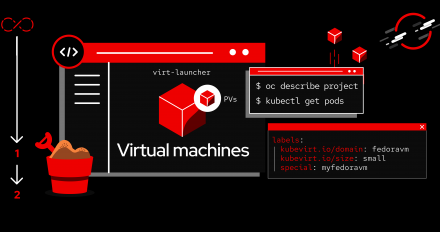

Learn how to use Zabbix integrated with Prometheus/Thanos in an OpenShift Virtualization cluster, using low-level discovery (LLD) to automate VM discovery.

Learn how migrating the controller deployment strategy in OpenShift Pipelines 1.20 from leader election to StatefulSet-based sharding improved performance.

Learn how Red Hat made Storybook a verification engine for the access management interface on Red Hat Hybrid Cloud Console.

Deploy a 5G core testing pipeline to create a continuous quality check for a 5G