The Red Hat OpenShift Serverless Operator provides Knative features directly in Red Hat OpenShift.

Let's start by exploring: What is serverless? Serverless refers to running back-end programs and processes in the cloud. Serverless works on an as-used basis, meaning that companies only pay for what they use. Knative is a platform-agnostic solution for running serverless deployments.

Red Hat OpenShift Serverless is built on top of the Knative project for Kubernetes and supports Red Hat's FaaS environments.

Serverless computing offerings typically fall into two groups:

- Backend-as-a-Service (BaaS): BaaS gives developers access to a variety of third-party services and apps. For instance, a cloud provider may offer authentication services, extra encryption, cloud-accessible databases, and high-fidelity usage data.

- Function-as-a-Service (FaaS): Developers still write custom server-side logic, but it’s run in containers fully managed by a cloud services provider.

Figure 1 depicts the serverless components.

Installation

Selecting Red Hat OpenShift Serverless, as shown in Figure 2, installs the Operator in a new namespace called openshift-serverless.

This Operator will provide three APIs: Knative Serving, Knative Eventing, and Knative Kafka as shown in Figure 3.

After its installation, the following will happen:

- A new namespace called openshift-serverless will be created, and in it, there will be three pods: knative-openshift, knative-openshift-ingress, and knative-operator-webhook.

- A new namespace called knative-serving will be created, without pods.

- A new namespace called knative-eventing will be created, without pods.
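The post-installation state listed above can also be checked from the command line. The following is a minimal sketch, assuming the oc client is installed and logged in with enough privileges to read these namespaces. The loop and the guard around oc are illustrative additions, not part of the original procedure.

```shell
#!/bin/sh
# Namespaces the Operator is expected to create, per the list above.
namespaces="openshift-serverless knative-serving knative-eventing"

for ns in $namespaces; do
  echo "== $ns =="
  # Query the cluster only when the oc client is available.
  if command -v oc >/dev/null 2>&1; then
    oc get namespace "$ns"
    oc get pods -n "$ns"
  fi
done
```

Right after installation, only openshift-serverless should list pods. The other two namespaces stay empty until a Serving or an Eventing is created.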

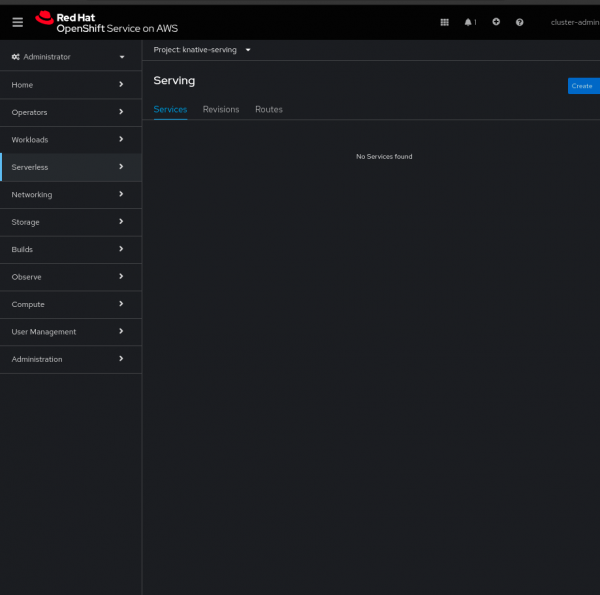

As soon as a Serving or Eventing instance is created, the Serverless menu will appear in the left-hand navigation menu (Figure 4).

Knative Serving

This type of resource allows the user to define and control how the serverless workload behaves on the cluster.

Knative Serving is a platform for streamlined application deployment, traffic-based auto-scaling from zero to N, and traffic-split rollouts, as the documentation explains.
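To make scale-to-zero and traffic-split rollouts more concrete, here is a hedged sketch of a Knative Service manifest that keeps 90% of the traffic on an earlier revision and sends 10% to a new one. Every name in it (example-app, example-project, the image, the revision names) is hypothetical, and it assumes a revision called example-app-v1 already exists on the cluster.

```shell
#!/bin/sh
# Write a Knative Service manifest that splits traffic between two revisions.
# All names (example-app, example-project, the image) are hypothetical.
manifest="traffic-split.yaml"

cat > "$manifest" <<'EOF'
apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: example-app
  namespace: example-project
spec:
  template:
    metadata:
      name: example-app-v2
      annotations:
        # Allow scale to zero when idle and cap the revision at five pods.
        autoscaling.knative.dev/min-scale: "0"
        autoscaling.knative.dev/max-scale: "5"
    spec:
      containers:
        - image: quay.io/example/app:v2
  traffic:
    # Keep most traffic on the previous revision during the rollout.
    - revisionName: example-app-v1
      percent: 90
    - revisionName: example-app-v2
      percent: 10
EOF

# The percentages in the traffic block must add up to 100.
total=$(awk '$1 == "percent:" {sum += $2} END {print sum}' "$manifest")
echo "traffic total: ${total}%"

# On a live cluster, apply it with:
# oc apply -f "$manifest"
```

Shifting the rollout forward is then a matter of editing the two percent values and applying the manifest again.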

The Serving can only be created in the knative-serving namespace; otherwise, it will fail as shown below:

    - lastTransitionTime: '2024-08-01T18:26:56Z'
      message: 'Install failed with message: Knative Serving must be installed into the namespace "knative-serving"'

Knative Eventing

Knative Eventing is a collection of APIs that enable you to use an event-driven architecture with your applications. You can use these APIs to create components that route events from event producers (known as sources) to event consumers (known as sinks) that receive events. Sinks can also be configured to respond to HTTP requests by sending a response event.

In short, it is an event-driven application platform that leverages CloudEvents with a simple HTTP interface.
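The routing described above, from sources through to sinks, can be sketched with a Broker and a Trigger. This is an illustrative example only: the namespace example-project, the event type com.example.order.created, and the sink example-consumer are hypothetical names chosen for the sketch.

```shell
#!/bin/sh
# Write a Broker and a Trigger that forwards matching events to a sink.
# example-project, example-trigger, and example-consumer are hypothetical names.
manifest="broker-trigger.yaml"

cat > "$manifest" <<'EOF'
apiVersion: eventing.knative.dev/v1
kind: Broker
metadata:
  name: default
  namespace: example-project
---
apiVersion: eventing.knative.dev/v1
kind: Trigger
metadata:
  name: example-trigger
  namespace: example-project
spec:
  broker: default
  filter:
    attributes:
      # Deliver only CloudEvents whose "type" attribute matches.
      type: com.example.order.created
  subscriber:
    ref:
      apiVersion: serving.knative.dev/v1
      kind: Service
      name: example-consumer
EOF

# Count the top-level resources written to the file.
resource_count=$(grep -c '^kind:' "$manifest")
echo "resources in $manifest: $resource_count"

# On a live cluster, apply it with:
# oc apply -f "$manifest"
```

Here the Knative Service example-consumer plays the role of the sink, and any source that posts CloudEvents to the Broker plays the role of the producer.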

Knative Kafka

Knative Kafka is an extension to Knative Eventing, merging HTTP accessibility with Apache Kafka's proven efficiency and reliability.
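As a rough illustration, a KnativeKafka custom resource might look like the sketch below. The API group comes from the CRD name knativekafkas.operator.serverless.openshift.io. The v1alpha1 version, the field layout, and the bootstrap server address are assumptions to verify against the documentation for the installed Operator version.

```shell
#!/bin/sh
# Write a KnativeKafka manifest. The API version and field layout are
# assumptions; the bootstrap server address is a hypothetical placeholder.
manifest="knative-kafka.yaml"

cat > "$manifest" <<'EOF'
apiVersion: operator.serverless.openshift.io/v1alpha1
kind: KnativeKafka
metadata:
  name: knative-kafka
  namespace: knative-eventing
spec:
  channel:
    enabled: true
    bootstrapServers: my-cluster-kafka-bootstrap.kafka:9092
  source:
    enabled: true
  broker:
    enabled: true
    defaultConfig:
      bootstrapServers: my-cluster-kafka-bootstrap.kafka:9092
      numPartitions: 10
      replicationFactor: 3
  sink:
    enabled: true
EOF

echo "wrote $manifest"

# On a live cluster with a reachable Kafka cluster, apply it with:
# oc apply -f "$manifest"
```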

Other CustomResources/Definitions

After the Serverless Operator is installed and Knative Serving and Knative Eventing are created, additional APIs (CustomResourceDefinitions) become available. The output below shows that the three main APIs were installed first (at 02:00) and that the others, such as pingsources.sources.knative.dev, followed about a minute later:

    $ oc get crd | grep knative
    apiserversources.sources.knative.dev                  2024-12-10T02:01:39Z
    brokers.eventing.knative.dev                          2024-12-10T02:01:39Z
    certificates.networking.internal.knative.dev          2024-12-10T02:01:33Z
    channels.messaging.knative.dev                        2024-12-10T02:01:39Z
    clusterdomainclaims.networking.internal.knative.dev   2024-12-10T02:01:33Z
    configurations.serving.knative.dev                    2024-12-10T02:01:33Z
    containersources.sources.knative.dev                  2024-12-10T02:01:39Z
    domainmappings.serving.knative.dev                    2024-12-10T02:01:34Z
    eventtypes.eventing.knative.dev                       2024-12-10T02:01:39Z
    images.caching.internal.knative.dev                   2024-12-10T02:01:33Z
    ingresses.networking.internal.knative.dev             2024-12-10T02:01:34Z
    inmemorychannels.messaging.knative.dev                2024-12-10T02:01:45Z
    knativeeventings.operator.knative.dev                 2024-12-10T02:00:22Z
    knativekafkas.operator.serverless.openshift.io        2024-12-10T02:00:22Z
    knativeservings.operator.knative.dev                  2024-12-10T02:00:23Z
    metrics.autoscaling.internal.knative.dev              2024-12-10T02:01:34Z
    parallels.flows.knative.dev                           2024-12-10T02:01:39Z
    pingsources.sources.knative.dev                       2024-12-10T02:01:39Z
    podautoscalers.autoscaling.internal.knative.dev       2024-12-10T02:01:34Z
    revisions.serving.knative.dev                         2024-12-10T02:01:34Z
    routes.serving.knative.dev                            2024-12-10T02:01:34Z
    sequences.flows.knative.dev                           2024-12-10T02:01:40Z
    serverlessservices.networking.internal.knative.dev    2024-12-10T02:01:34Z
    services.serving.knative.dev                          2024-12-10T02:01:34Z
    sinkbindings.sources.knative.dev                      2024-12-10T02:01:40Z
    subscriptions.messaging.knative.dev                   2024-12-10T02:01:40Z
    triggers.eventing.knative.dev                         2024-12-10T02:01:40Z

The new CustomResourceDefinitions (CRDs) build upon the three main CustomResources (CRs: Knative Eventing, Knative Serving, and Knative Kafka) to provide advanced features such as PingSource, an event source that produces events with a fixed payload on a specified cron schedule.
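For illustration, a PingSource that sends a fixed JSON payload every two minutes could be sketched as follows. The names example-ping, example-project, and example-consumer are hypothetical, and the sink is assumed to be an existing Knative Service.

```shell
#!/bin/sh
# Write a PingSource that emits a fixed payload on a cron schedule.
# example-ping, example-project, and example-consumer are hypothetical names.
manifest="pingsource.yaml"

cat > "$manifest" <<'EOF'
apiVersion: sources.knative.dev/v1
kind: PingSource
metadata:
  name: example-ping
  namespace: example-project
spec:
  # Standard five-field cron expression: every two minutes.
  schedule: "*/2 * * * *"
  contentType: "application/json"
  data: '{"message": "Hello from PingSource"}'
  sink:
    ref:
      apiVersion: serving.knative.dev/v1
      kind: Service
      name: example-consumer
EOF

# Sanity check: a cron schedule should have exactly five fields.
fields=$(awk -F'"' '/schedule:/ {n = split($2, parts, " "); print n}' "$manifest")
echo "cron fields: $fields"

# On a live cluster, apply it with:
# oc apply -f "$manifest"
```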

Demo

The steps below follow the learning path Create an OpenShift Serverless function by Don Schenck:

- Install the OpenShift Serverless Operator.

- Verify that the Serverless option appears in the menu (see above).

- Deploy the Photobooth application.

- Create a Serving (verify with $ oc get knativeserving), which can be trivial for this demo:

      apiVersion: operator.knative.dev/v1beta1
      kind: KnativeServing
      metadata:
        name: knative-serving
        namespace: knative-serving
      spec:
        controller-custom-certs:
          name: ''
          type: ''
        registry: {}

- After the Serving creation, several pods will appear in the knative-serving namespace:

      $ oc project knative-serving
      $ oc get pod
      NAME                              READY   STATUS    RESTARTS   AGE
      activator-7b7d4557cc-4rfk9        2/2     Running   0          16m
      activator-7b7d4557cc-jsf5s        2/2     Running   0          17m
      autoscaler-f794648c6-h4p2x        2/2     Running   0          17m
      autoscaler-f794648c6-rknhg        2/2     Running   0          17m
      autoscaler-hpa-659f6f48b7-gm9jx   2/2     Running   0          17m
      autoscaler-hpa-659f6f48b7-z4ddb   2/2     Running   0          17m
      controller-85c7c98dfc-2bgbg       2/2     Running   0          16m
      controller-85c7c98dfc-gp5kp       2/2     Running   0          17m
      webhook-7c4994d865-fqdlr          2/2     Running   0          16m
      webhook-7c4994d865-l2mvt          2/2     Running   0          17m

- Create a Service ($ oc get ksvc). This must be created after the Serving:

      apiVersion: serving.knative.dev/v1
      kind: Service
      metadata:
        name: imageoverlay
        namespace: knative-serving
      spec:
        template:
          spec:
            containers:
              - image: quay.io/rhdevelopers/imageoverlay:latest

- Get the route using $ oc get ksvc:

      $ oc get ksvc
      NAME           URL                                                          LATESTCREATED        LATESTREADY          READY   REASON
      imageoverlay   https://imageoverlay-knative-serving.apps.rosa.example.com   imageoverlay-00001   imageoverlay-00001   True

- Enter the ksvc URL in the Service URL Setting field: https://imageoverlay-knative-serving.apps.rosa.example.com.

- Take the picture (Figure 5).
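When scripting the demo, the service URL can be pulled out of the oc get ksvc output instead of being copied by hand. The sketch below parses the sample output shown above so that it runs without a cluster. The variable names are illustrative.

```shell
#!/bin/sh
# Sample output copied from the step above; on a live cluster, use the
# output of `oc get ksvc` instead.
sample_output='NAME URL LATESTCREATED LATESTREADY READY REASON
imageoverlay https://imageoverlay-knative-serving.apps.rosa.example.com imageoverlay-00001 imageoverlay-00001 True'

# The URL is the second column of the row whose name is imageoverlay.
service_url=$(printf '%s\n' "$sample_output" | awk '$1 == "imageoverlay" {print $2}')
echo "$service_url"
```

On a live cluster, oc get ksvc imageoverlay -n knative-serving -o jsonpath='{.status.url}' returns the same value directly from the Service status.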

Customization

To customize settings, such as the container resource settings, follow the solution Setting Serverless Operator container limits and requests in OCP 4. For example:

    $ oc get kservice -o yaml
    apiVersion: serving.knative.dev/v1
    kind: Service
    metadata:
      name: showcase
      namespace: knative-serving
      annotations:
        serving.knative.dev/creator: cluster-admin
        serving.knative.dev/lastModifier: cluster-admin
    spec:
      template:
        metadata:
          annotations:
            queue.sidecar.serving.knative.dev/resourcePercentage: "40"  # <---- 40% of the user-container will be queue-proxy

Troubleshooting

For troubleshooting, we usually verify:

- The CustomResources for Serving and Eventing ($ oc get kservice and $ oc get kevent).

- The inspect bundle, where the pod YAMLs and pod logs will be.

- In most cases, we also ask for the serverless must-gather, but not in all cases.
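The checks above can be gathered in one pass. The following is a hedged sketch rather than a support procedure: it uses the fully qualified resource names from the CRD listing earlier in the article, and the output directory name serverless-debug is an arbitrary choice.

```shell
#!/bin/sh
# Directory for the collected output; the name is an arbitrary choice.
outdir="serverless-debug"
mkdir -p "$outdir"

# Fully qualified resource names, taken from the CRD listing earlier in the article.
resources="knativeservings.operator.knative.dev knativeeventings.operator.knative.dev"

if command -v oc >/dev/null 2>&1; then
  for res in $resources; do
    # Dump every instance across namespaces as YAML for later review.
    oc get "$res" --all-namespaces -o yaml > "$outdir/$res.yaml"
  done
  # Collect pod YAMLs and logs for the Serving namespace.
  oc adm inspect ns/knative-serving --dest-dir="$outdir/inspect"
else
  echo "oc client not found; skipping cluster queries" >&2
fi
```

The must-gather is collected separately with oc adm must-gather and the Serverless must-gather image for the installed version. That image reference is version-specific, so it is left out of this sketch.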

Learn more

This article covers the introduction, installation, customization, and finally troubleshooting of Red Hat OpenShift Serverless.

Don Schenck's learning path Create an OpenShift Serverless function is an excellent resource for learning more.

Additional resources

For any other specific inquiries, please open a case with Red Hat support. Our global team of experts can help you with any issues.

Special thanks to Jonathan Edwards for the excellent collaboration last year on Knative, Alexander Barbosa for the contribution to this article with the diagrams/reviews, and finally Josh Brandenburg for allocating time for my research on this topic as well.