Deploy Hermes Agent on OpenShift AI with vLLM model serving

Learn how to deploy Hermes Agent, a self-improving AI agent with a learning loop, on OpenShift AI with GPU-accelerated vLLM model serving.

Prevent OOM crashes in controller-runtime operators. Learn how to filter your Kubernetes informer cache to stop ConfigMap-driven denial-of-service attacks.

Discover how NVIDIA confidential computing and OpenShift sandboxed containers work together to secure GPU workloads through hardware-based isolation and attestation.

Learn how to prevent GPU waste and financial loss by implementing just-in-time (JIT) checkpointing with the Kubeflow Training SDK on OpenShift AI.

Learn about a new feature in Red Hat OpenShift GitOps operator 1.20.2 that simplifies the management of trusted TLS certificates for Argo CD.

Learn how to use a split disk configuration to solve disk space management issues in OpenShift clusters running large AI/ML workloads.

Learn practical troubleshooting steps for common debugging scenarios in image mode for OpenShift 4.20. Understand the three stages of the process: MachineOSConfig creation, MachineOSBuild creation and execution, and application of the new image to nodes. Discover what to watch for in each stage to keep your clusters running smoothly.

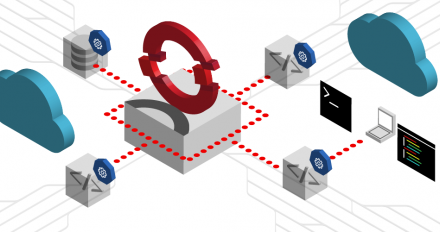

Learn how Red Hat AI 3.4 uses EvalHub to orchestrate AI evaluations on Kubernetes. Scale frameworks like Garak and LightEval with built-in MLflow tracking.

Tekton is now a CNCF incubating project, aligning with the Kubernetes ecosystem to foster deeper collaboration and integration for cloud-native CI/CD.

Learn how the ObservabilityInstaller one-click installation simplifies the deployment of a production-ready distributed tracing stack on OpenShift.

Discover how distributed zones in Red Hat OpenStack Services on OpenShift isolate storage into separate failure domains for business resiliency and continuity.

Deploy multiple Red Hat OpenStack Services on OpenShift clusters using hosted control planes (HCPs) to achieve scalable isolation and efficiency.

Learn how Kagenti ADK, an open source toolkit, handles the complexities of managing production AI agents. It aligns with the Linux Foundation's Agent2Agent (A2A) protocol and provides a set of runtime services for easier deployment and operation.

Discover a three-cluster architecture for deploying hosted control planes with OpenShift Virtualization, suitable for large enterprises requiring maximum isolation and independent scaling.

Learn how to integrate Zabbix with Prometheus/Thanos in an OpenShift Virtualization cluster, using low-level discovery (LLD) to automate VM discovery.

Learn how migrating the controller deployment strategy in OpenShift Pipelines 1.20 from leader election to StatefulSet-based sharding improved performance.

Learn how to build and push .NET container images in Tekton pipelines without a Dockerfile. See how the dotnet-publish-image task simplifies your CI/CD workflow.

This article describes an enterprise-preferred topology that separates fleet management from cluster hosting for independent scaling and clean operational boundaries.

Learn to modernize enterprise Java for Kubernetes using the four-path framework. Explore cloud-native patterns like Sidecars, Sagas, and RAG to build resilient, AI-ready systems.

Learn how to combine KServe and llm-d to optimize generative AI inference, improve performance, and reduce infrastructure costs. This article demonstrates the integration architecture and provides practical guidance for AI platform teams.

Learn how to deploy hosted control planes with OpenShift Virtualization using an all-in-one cluster for lower costs and faster cluster provisioning.

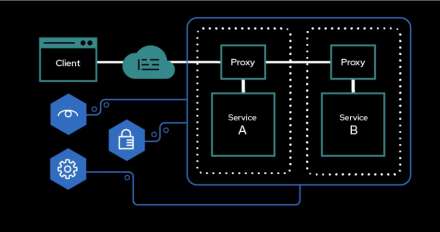

Learn how to connect an external Red Hat Enterprise Linux VM to an OpenShift Service Mesh 3.3 deployment. You'll use the classic Bookinfo sample application to see how the process works.

Explore new features in Red Hat build of Kueue 1.3, including integration with JobSet for efficient batch job scheduling, support for LeaderWorkerSet for distributed ML workloads, and the introduction of v1beta2 APIs. Learn how to get started with the updated Kueue operator.

Discover a practical and reproducible methodology for latency-sensitive DPDK workloads running on bare-metal OpenShift.

Cloud-native traffic bursts can overwhelm default DNS settings and cause silent timeouts. Protect your IdM infrastructure with recursive-client limits and CoreDNS caching.