Microservices

Microservices break down your application architecture into smaller, independent components that communicate through APIs. This approach lets multiple team members work on different parts of the architecture simultaneously, which speeds up development. It's a scalable, flexible, and resilient way to build modern applications.
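This is a minimal sketch of one independent component that keeps its own data and exposes it only through an API. It assumes a single hypothetical "inventory" service built on the JDK's built-in `com.sun.net.httpserver.HttpServer`, which avoids any framework dependency. A production Java microservice would typically use a framework instead. The class name, the `/stock/` path, and the in-memory data are illustrative only.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.net.InetSocketAddress;
import java.nio.charset.StandardCharsets;
import java.util.Map;

// Hypothetical "inventory" microservice: it owns its data and exposes it
// only through a small HTTP API. Other services call the API instead of
// reading the data directly.
public class InventoryService {

    // In-memory stand-in for the service's private datastore (assumption).
    private static final Map<String, Integer> STOCK = Map.of("widget", 12, "gadget", 0);

    // Business logic kept separate from the HTTP wiring so it can be tested alone.
    // Returns a JSON string, or null if the SKU is unknown.
    static String stockJson(String sku) {
        Integer qty = STOCK.get(sku);
        return qty == null
                ? null
                : "{\"sku\":\"" + sku + "\",\"quantity\":" + qty + "}";
    }

    // Starts the service on the given port (0 lets the OS pick a free port).
    static HttpServer start(int port) throws IOException {
        HttpServer server = HttpServer.create(new InetSocketAddress(port), 0);
        // Every path under /stock/ is handled here, e.g. GET /stock/widget.
        server.createContext("/stock/", exchange -> {
            String sku = exchange.getRequestURI().getPath().substring("/stock/".length());
            String body = stockJson(sku);
            int status = body == null ? 404 : 200;
            byte[] bytes = (body == null ? "{\"error\":\"unknown sku\"}" : body)
                    .getBytes(StandardCharsets.UTF_8);
            exchange.getResponseHeaders().set("Content-Type", "application/json");
            exchange.sendResponseHeaders(status, bytes.length);
            // Closing the response body completes the exchange.
            try (OutputStream out = exchange.getResponseBody()) {
                out.write(bytes);
            }
        });
        server.start();
        return server;
    }

    public static void main(String[] args) throws IOException {
        HttpServer server = start(8080);
        System.out.println("Inventory service listening on port " + server.getAddress().getPort());
    }
}
```

With `main` running, a request to `http://localhost:8080/stock/widget` returns `{"sku":"widget","quantity":12}`, and an unknown SKU returns HTTP 404. Because `stockJson` is kept apart from the server wiring, the service's logic can be tested without opening a port.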

Microservices and hybrid cloud: A perfect union

The combination of microservices and hybrid cloud architecture has come to define cutting-edge software design and deployment. Each microservice fulfills a specific function that contributes to the larger application system.

Combining this approach with a hybrid cloud, which blends the advantages of public and private clouds:

- Enhances your application’s flexibility

- Boosts efficiency

- Helps your application scale with your business needs

Red Hat named a Leader in Multicloud Container Platforms

Red Hat was recognized by Forrester as a leader in The Forrester Wave™: Multicloud Container Platforms, Q4 2023.

Red Hat named a Leader for Container Management

Red Hat was recognized by Gartner® as a Leader in the September 2023 Magic Quadrant™.

Microservices for Java Developers

Java microservices help developers build and ship applications faster, improve scalability and security, and adapt quickly to changing business needs.

Learn Microservices

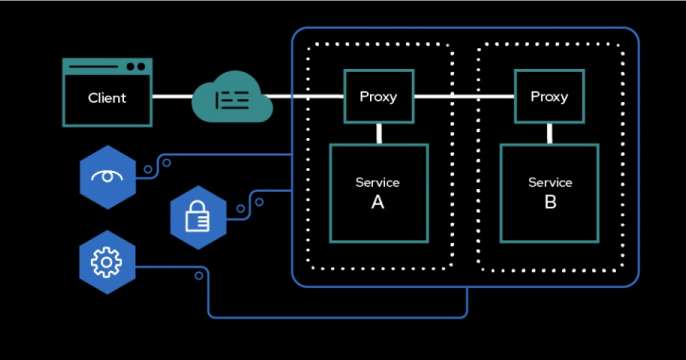

Advanced microservices tracing with Jaeger

One of the greatest challenges of moving from a traditional monolithic application design to a microservices architecture is monitoring your business transaction flow: the flow of events through microservice calls across your entire system.
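The core idea behind distributed tracing is that every hop in one business transaction shares a single trace ID, and each hop records a span that points back at its caller's span. Tracing systems such as Jaeger are built around this trace-and-span model. The sketch below is a hand-rolled, in-process illustration of that model only. It is not the Jaeger or OpenTelemetry API. The service names, the `TraceContext` type, and the in-memory `COLLECTOR` are hypothetical stand-ins for real services, propagated request headers, and a tracing backend.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.UUID;

// Hand-rolled model of distributed tracing (NOT the Jaeger/OpenTelemetry API).
public class TraceSketch {

    // One recorded unit of work in one service; parentSpanId is null for the root.
    record Span(String traceId, String spanId, String parentSpanId, String service) {}

    // Context forwarded with each call, analogous to trace headers on an HTTP request.
    record TraceContext(String traceId, String spanId) {}

    // Stand-in for a tracing backend that collects recorded spans (assumption).
    static final List<Span> COLLECTOR = new ArrayList<>();

    // Records a span for `service` as a child of `parent` (or as the root when
    // parent is null) and returns the context to forward to downstream calls.
    static TraceContext startSpan(String service, TraceContext parent) {
        String traceId = parent == null ? UUID.randomUUID().toString() : parent.traceId();
        String spanId = UUID.randomUUID().toString();
        COLLECTOR.add(new Span(traceId, spanId, parent == null ? null : parent.spanId(), service));
        return new TraceContext(traceId, spanId);
    }

    // Hypothetical services taking part in one transaction.
    static void gateway() {
        TraceContext ctx = startSpan("gateway", null);
        orders(ctx);
    }

    static void orders(TraceContext incoming) {
        TraceContext ctx = startSpan("orders", incoming);
        inventory(ctx);
        payments(ctx);
    }

    static void inventory(TraceContext incoming) { startSpan("inventory", incoming); }

    static void payments(TraceContext incoming) { startSpan("payments", incoming); }

    public static void main(String[] args) {
        gateway();
        // All spans share one trace ID, so the call tree can be reassembled
        // by matching each span's parentSpanId to another span's spanId.
        for (Span s : COLLECTOR) {
            String parent = COLLECTOR.stream()
                    .filter(p -> p.spanId().equals(s.parentSpanId()))
                    .map(Span::service)
                    .findFirst()
                    .orElse("(root)");
            System.out.println(s.service() + " <- " + parent);
        }
    }
}
```

Running `main` prints `gateway <- (root)`, `orders <- gateway`, `inventory <- orders`, and `payments <- orders`, one per line. That reconstructed call tree is the kind of end-to-end view of a transaction that a tracing backend provides across real services.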

Free Microservices Course from Red Hat

Sign up for a free video course on microservices: Developing Cloud-Native Applications with Microservices Architectures (DO092).


Recent Microservices articles

Explore an automated, event-driven solution using streams for Apache Kafka,...

Learn about the Red Hat build of OpenTelemetry and its auto-instrumentation...

Learn how to design agentic workflows, and how the Red Hat AI portfolio...

Explore new features in Red Hat JBoss EAP XP 6, including upgrades to...

Krkn-AI automates AI-assisted, objective-driven chaos testing for Kubernetes....

Learn how to configure, trigger, and monitor a circuit breaker using Red Hat...