The new Open Data Hub (ODH) 0.8 release includes many new features, continuous integration (CI) additions, and documentation updates. For this release, we focused on enhancing JupyterHub image builds, enabling more mixing of Open Data Hub and Kubeflow components, and designing a comprehensive end-to-end continuous integration and continuous deployment and delivery (CI/CD) process. In this article, we introduce the highlights of the release.

Note: Open Data Hub is an open source project and a community Operator for building an AI-as-a-Service (AIaaS) platform on Red Hat OpenShift.

JupyterHub

In an effort to streamline code changes to JupyterHub, we forked two pivotal repositories under the opendatahub-io GitHub project: jupyterhub-quickstart and jupyterhub-odh. Moving forward, all code changes and feature enhancements for JupyterHub will go under these two new repositories.

We also consolidated the images used by JupyterHub, which were previously spread across different private and public repositories, into two repositories on Quay.io: odh-jupyterhub and thoth-station.

Notebook images are now built and maintained by Thoth Station, an artificial intelligence (AI) tool that analyzes and recommends software stacks for AI applications. For more comprehensive information about Thoth and how to use it, visit Thoth Station.

We've also updated Open Data Hub 0.8 with Elyra, an AI toolkit that lets you launch JupyterLab images.

From notebooks to pipelines with Elyra

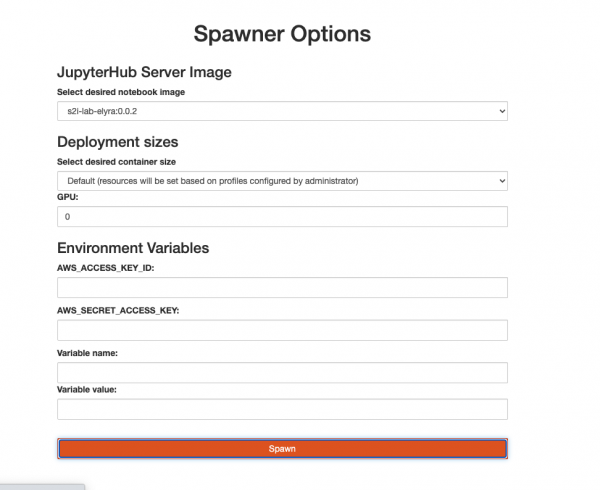

In an effort to allow data scientists to turn their notebooks into Argo Workflows or Kubeflow pipelines, we've added an exciting new tool called Elyra to Open Data Hub 0.8. The process of converting all of the work that a data scientist has created in notebooks to a production-level pipeline is cumbersome and usually manual. Elyra lets you execute this process from the JupyterLab portal with just a few clicks. As shown in Figure 1, Elyra is now included in a JupyterHub notebook image.

The image runs a JupyterLab environment with Elyra tools, as shown in Figure 2.

Look for upcoming articles and documentation about how to use Elyra optimally.

Monitoring Kubeflow

As part of our effort to make Kubeflow and Open Data Hub components interchangeable, we've added monitoring capabilities to Kubeflow. With ODH 0.8, users can add Prometheus and Grafana for Kubeflow component monitoring. Not all Kubeflow components currently expose a Prometheus endpoint. We did, however, enable the Prometheus endpoint in Argo, and we've provided the example dashboard shown in Figure 3, which lets users monitor their pipelines.

Two Kubeflow components that you can use for monitoring are tfjobs and pytorchjobs. To install Kubeflow with monitoring, use the Kubeflow-monitoring example KfDef.
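Once Prometheus is scraping Kubeflow components, one quick way to see which endpoints are actually reachable is to query the built-in `up` metric through the Prometheus HTTP API. The following is a minimal sketch, not part of the ODH release: the Prometheus host name and the job and instance labels in the sample response are hypothetical, and the helper function names are ours.

```python
from urllib.parse import urlencode


def build_instant_query_url(base_url: str, promql: str) -> str:
    """Build the URL for a Prometheus instant query (GET /api/v1/query)."""
    return f"{base_url.rstrip('/')}/api/v1/query?{urlencode({'query': promql})}"


def scrape_status(response: dict) -> dict:
    """Map (job, instance) to True when the target's last scrape succeeded.

    Expects the parsed JSON body of an instant query for the `up` metric.
    """
    if response.get("status") != "success":
        raise ValueError("Prometheus query did not succeed")
    status = {}
    for series in response["data"]["result"]:
        labels = series["metric"]
        key = (labels.get("job", "unknown"), labels.get("instance", "unknown"))
        # Each sample is [timestamp, value]; the value arrives as a string.
        status[key] = series["value"][1] == "1"
    return status


# Hypothetical response body; the job and instance labels are made up.
sample = {
    "status": "success",
    "data": {
        "resultType": "vector",
        "result": [
            {"metric": {"job": "argo", "instance": "10.0.0.5:9090"},
             "value": [1600000000.0, "1"]},
            {"metric": {"job": "tf-job-operator", "instance": "10.0.0.6:8443"},
             "value": [1600000000.0, "0"]},
        ],
    },
}

# Hypothetical Prometheus host; substitute the route exposed in your cluster.
print(build_instant_query_url("http://prometheus.example:9090", "up"))
print(scrape_status(sample))
```

In a real cluster, you would fetch the URL with an HTTP client and pass the decoded JSON to `scrape_status`. A target that reports `False` is configured in Prometheus but is not answering scrapes.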

Distributed machine learning

As a continuation of our previous effort to bring more distributed-learning tools to ODH, the PyTorch Operator now works with ODH components. To install the PyTorch Operator with ODH, use the distributed-training example KfDef. The example also includes the tfjobs Kubeflow component, which was ported in ODH 0.7. See the ODH documentation for more information about mixing components.
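To illustrate what mixing components looks like, here is a rough sketch of how a KfDef can list applications drawn from more than one manifest repository. It is not the distributed-training example itself: the repository names, URIs, application names, and paths below are placeholders we made up, and only the overall shape of the resource (`repos`, plus `applications` with a `kustomizeConfig.repoRef`) follows the KfDef format.

```python
import json


def make_kfdef(name, repos, applications):
    """Assemble a KfDef resource as a plain dictionary.

    repos:        mapping of repository name to tarball URI
    applications: list of (application name, repository name, path) tuples
    """
    known = set(repos)
    for app, repo, _path in applications:
        if repo not in known:
            raise ValueError(f"application {app!r} references unknown repo {repo!r}")
    return {
        "apiVersion": "kfdef.apps.kubeflow.org/v1",
        "kind": "KfDef",
        "metadata": {"name": name},
        "spec": {
            "repos": [{"name": n, "uri": u} for n, u in repos.items()],
            "applications": [
                {
                    "name": app,
                    "kustomizeConfig": {"repoRef": {"name": repo, "path": path}},
                }
                for app, repo, path in applications
            ],
        },
    }


# Placeholder values only; take the real URIs and paths from the example KfDef.
kfdef = make_kfdef(
    name="mixed-components-sketch",
    repos={
        "odh": "<odh-manifests tarball URI>",
        "kubeflow": "<forked Kubeflow manifests tarball URI>",
    },
    applications=[
        ("jupyterhub", "odh", "<path to JupyterHub manifests>"),
        ("pytorch-operator", "kubeflow", "<path to PyTorch Operator manifests>"),
        ("tf-job-operator", "kubeflow", "<path to TFJob Operator manifests>"),
    ],
)

# JSON is also valid input for `oc apply -f` or `kubectl apply -f`.
print(json.dumps(kfdef, indent=2))
```

Each application points at a named repository, so ODH and Kubeflow components can sit side by side in the same resource.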

CI/CD

We added more tests to Open Data Hub components, including Apache Kafka and Superset, and we enhanced JupyterHub testing by adding Selenium for web portal testing. We also added the ability to run these tests in the forked Kubeflow manifest repository in our CI pipeline. At the moment, however, the tests only verify the manifests. We need to expand them to include more comprehensive tests such as the ones that we developed for the odh-manifest repository. The team is also continuing to investigate a continuous deployment and delivery system for a complete and dynamic CI/CD pipeline.

Conclusion

Visit opendatahub.io for the complete Open Data Hub 0.8 documentation. You will find details about specific components in each component's GitHub section. We've also added new guidelines to develop and run test suites locally via a container against an existing cluster, which makes development easier. This method mirrors what is actually running in our CI system, so it is a more useful method for debugging.

Last updated: February 5, 2024