Red Hat Integration

Runtimes, frameworks, and services to build applications natively on Red Hat OpenShift.

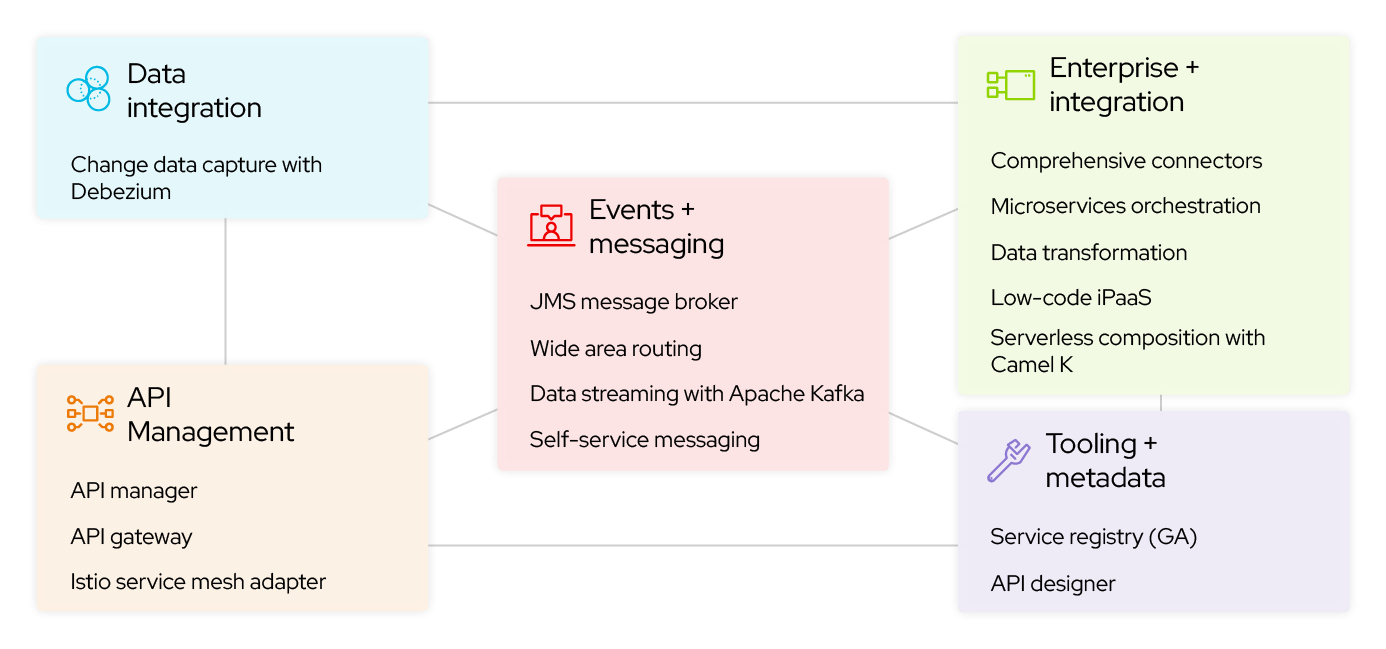

Cloud-native development that connects systems

High-speed, secure messaging with Red Hat AMQ

Red Hat AMQ is a multi-protocol messaging platform with clients for a variety of programming languages, letting developers exchange data with high throughput and low latency.

Latest integration articles

A Llama Stack-dependent backend, or any rapidly evolving upstream project...

Learn how to transform a simple chatbot into an enterprise RAG application by...

Discover how Red Hat OpenShift AI 3.4's Models-as-a-Service (MaaS) capability...

Learn how to prevent GPU waste and financial loss by implementing...

Featured integration blogs

Dive into the Q1’26 edition of the Camel integration quarterly digest, covering...

This post introduces two interactive, sports-themed hands-on labs designed to...

Dive into the Q4’25 edition of the Camel integration quarterly digest, covering...

Dive into the Q3’25 edition of the Camel integration quarterly digest, covering...

Start building your Red Hat Integration toolbox

Access and run the software components you need in your own environment.