DevOps pipelines with Kubernetes

Cloud developers can take advantage of increased speed, decreased risk, and improved collaboration with Kubernetes and DevOps.

Free e-book: DevOps with OpenShift

Experts explain how to configure Docker application containers and the Kubernetes cluster manager with OpenShift’s developer- and operations-centric tools. Discover how this infrastructure-agnostic container management platform can help companies navigate the murky area where infrastructure-as-code ends and application automation begins.

The book covers the following topics:

- Why automation is important

- Patterns and practical examples for managing continuous deployments such as rolling, A/B, blue-green, and canary

- Implementing continuous integration pipelines with OpenShift’s CI/CD capability

- Mechanisms for separating and managing configuration from static runtime software

- Customizing OpenShift’s source-to-image capability

- Considerations when working with OpenShift-based application workloads

- Running self-contained local versions of the OpenShift environment on your computer
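Several of the deployment patterns listed above differ mainly in how user traffic moves from the old version of an application to the new one. The following is a rough illustration only, not taken from the book: the function names and the step percentages are hypothetical. A canary rollout shifts traffic to the new version in small increments, while a blue-green deployment switches all traffic in a single step.

```python
from typing import List, Tuple


def canary_schedule(steps: List[int]) -> List[Tuple[int, int]]:
    """Return (stable %, canary %) traffic splits for each rollout step.

    `steps` is a hypothetical list of canary percentages, e.g. [10, 25, 50, 100].
    A real rollout would pause between steps and check health metrics
    before sending more traffic to the canary.
    """
    schedule = []
    for pct in steps:
        if not 0 <= pct <= 100:
            raise ValueError(f"canary percentage out of range: {pct}")
        # Whatever traffic the canary does not receive stays on the stable version.
        schedule.append((100 - pct, pct))
    return schedule


def blue_green_switch() -> List[Tuple[int, int]]:
    """Return (blue %, green %) splits: all traffic moves in one step."""
    return [(100, 0), (0, 100)]


if __name__ == "__main__":
    print(canary_schedule([10, 25, 50, 100]))
    print(blue_green_switch())
```

With the example steps, `canary_schedule([10, 25, 50, 100])` returns `[(90, 10), (75, 25), (50, 50), (0, 100)]`, which shows the gradual exposure that distinguishes a canary release from the all-at-once cutover of blue-green.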

What is DevOps?

DevOps is a set of tools and techniques for combining the development and deployment of software. DevOps uses source code management systems and automated testing software to simplify the development process, while automated build and deployment tools streamline the work of the operations team. In a sophisticated DevOps environment, the division of labor between people who write software and people who deploy software to production systems is largely artificial. As DevOps continues to mature, the line between development and operations departments will continue to blur.

DevOps All The Things!

Hosts: Natale Vinto and Alex Soto

Guest: Daniel Bryant

Want to know how you can effectively debug your workloads deployed in the cloud? And what are the best DevOps tools around today?

Join us for our bi-weekly hour-long live chat show with Daniel Bryant. Daniel is the Director of DevRel at Ambassador Labs. His technical expertise focuses on ‘DevOps’ tooling, cloud/container platforms, and microservice implementations. Daniel is a Java Champion and contributes to several open source projects.

Getting started with DevOps

Discover how NVIDIA confidential computing and OpenShift sandboxed containers...

Learn how to prevent GPU waste and financial loss by implementing...

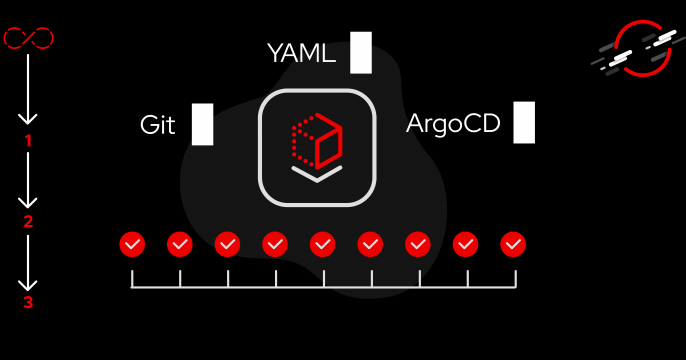

Learn about a new feature in Red Hat OpenShift GitOps operator 1.20.2 that...

Podcast: DevOps - Tear down that wall

Red Hat's Command Line Heroes podcast series covered DevOps in Season 1, Episode 4.

What is DevOps, really? Developer guests, including Scott Hanselman of Microsoft and Cindy Sridharan of Apple, describe how they think about DevOps as a practice from their side of the wall, while members of various operations teams explain what they've been working to defend.

Differences remain, but with DevOps, teams are working better than ever. Learn more in this episode of Command Line Heroes.

More DevOps resources

Scale agentic AI with Red Hat’s trusted software factory. Use Policy as...

Learn how Red Hat Hybrid Cloud Console uses a single data layer to serve the...

Learn how Kagenti ADK, an open source toolkit, handles the complexities of...

Learn how Red Hat made Storybook a verification engine for the access...

Learn how to build and push .NET container images in Tekton pipelines without...

Learn how we replaced an entire React application while keeping the original...

Java & DevOps for supercharged pipelines with containers

This panel brings together developers who understand the benefits of container technologies for DevOps and delivery pipelines.

Edson Yanaga (Director of Developer Experience at Red Hat)

A developer's journey through DevOps

Developers and operators must work hand in hand. Burr walks us through the process and highlights automation, continuous integration (CI) and continuous deployment (CD), containers, and microservices.

Burr Sutter (Director of Developer Experience)