This article introduces Red Hat AI Inference Server, recently announced at Red Hat Summit, and guides you through deploying a Whisper model on Red Hat AI Inference Server within a Red Hat Enterprise Linux (RHEL) environment.

What is Red Hat AI Inference Server?

Red Hat AI Inference Server is designed to optimize serving and inference for large language models (LLMs), with a focus on improving LLM performance while reducing associated costs. It provides enterprise-grade stability and security and is built on open source software, particularly the upstream vLLM project.

Red Hat AI Inference Server integrates innovative features like continuous batching, which processes requests as they arrive, and tensor parallelism, which distributes LLM workloads across multiple GPUs. These features work together to reduce latency and increase throughput, improving overall performance.
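
To make continuous batching more concrete, here is a toy simulation that compares it with static batching. It is only an illustrative sketch, not vLLM's scheduler: the `Request` class, the `simulate` function, and the step-based timing model are hypothetical, and each request is assumed to need a fixed number of decode steps.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Request:
    name: str
    arrival: int  # step at which the request reaches the server
    tokens: int   # number of decode steps the request needs


def simulate(requests, max_batch, continuous):
    """Return {name: finish_step} for a toy decode loop.

    continuous=True admits waiting requests whenever a batch slot is free.
    continuous=False admits a new batch only once the current one is empty.
    """
    pending = deque(sorted(requests, key=lambda r: r.arrival))
    running = {}   # name -> remaining decode steps
    finished = {}  # name -> step at which the last token was produced
    step = 0
    while pending or running:
        can_admit = continuous or not running
        if can_admit:
            while pending and pending[0].arrival <= step and len(running) < max_batch:
                req = pending.popleft()
                running[req.name] = req.tokens
        # One decode step: every running request produces one token.
        for name in list(running):
            running[name] -= 1
            if running[name] == 0:
                del running[name]
                finished[name] = step + 1
        step += 1
    return finished


# Hypothetical workload: one long request, two short ones, two batch slots.
workload = [Request("A", 0, 8), Request("B", 0, 2), Request("C", 1, 2)]
print("continuous:", simulate(workload, max_batch=2, continuous=True))
print("static:    ", simulate(workload, max_batch=2, continuous=False))
```

In this toy run, request C arrives while the batch is full. With continuous batching it takes over B's slot as soon as B finishes and completes at step 4; with static batching it has to wait for the long request A and completes at step 10.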

A key cost-saving feature of Red Hat AI Inference Server is PagedAttention. This technique manages the memory used for the attention key-value (KV) cache in fixed-size blocks, much as an operating system pages virtual memory, which reduces wasted memory and therefore cost.
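
The virtual memory analogy can be sketched with a small block allocator. This is a simplified illustration under stated assumptions, not vLLM's implementation: the `BlockAllocator` class and its method names are hypothetical, and the block size of 16 tokens is chosen only for the example.

```python
class BlockAllocator:
    """Toy KV-cache allocator that hands out fixed-size blocks on demand."""

    def __init__(self, num_blocks, block_size=16):
        self.block_size = block_size        # tokens per block (illustrative value)
        self.free = list(range(num_blocks)) # ids of unused physical blocks
        self.tables = {}                    # seq_id -> list of physical block ids
        self.lengths = {}                   # seq_id -> number of tokens stored

    def append_token(self, seq_id):
        """Reserve space for one more token, allocating a block only when needed."""
        length = self.lengths.get(seq_id, 0)
        table = self.tables.setdefault(seq_id, [])
        if length == len(table) * self.block_size:
            # Every allocated block is full, so map one more physical block.
            if not self.free:
                raise MemoryError("no free KV-cache blocks")
            table.append(self.free.pop())
        self.lengths[seq_id] = length + 1

    def release(self, seq_id):
        """Return all blocks of a finished sequence to the free pool."""
        self.free.extend(self.tables.pop(seq_id, []))
        self.lengths.pop(seq_id, None)


allocator = BlockAllocator(num_blocks=4, block_size=16)
for _ in range(17):
    allocator.append_token("request-a")
print(len(allocator.tables["request-a"]), "blocks in use,", len(allocator.free), "free")
```

Here a 17-token sequence occupies two blocks, and the remaining blocks stay available to other requests. A scheme that reserved contiguous space for the maximum sequence length up front would hold that memory for the lifetime of the request, whether or not it was used.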

What is a Whisper model?

Whisper models are automatic speech recognition (ASR) systems developed by OpenAI. They are designed to convert spoken language into written text and are capable of handling multiple languages, accents, and noisy audio environments.

Running Whisper on Red Hat AI Inference Server with RHEL 9 and Podman

Let's walk through the steps to run OpenAI’s Whisper model using Red Hat AI Inference Server on Red Hat Enterprise Linux 9. This guide is tailored for NVIDIA GPU-enabled setups using Podman containers and was tested on an AWS EC2 instance.

Prerequisites

Before we get started, ensure your environment meets these essential requirements:

Tested environment

This setup was tested in the following environment:

- AWS EC2 instance: g6.6xlarge with 1× NVIDIA L4 GPU (22 GB GPU memory), running RHEL 9

Pre-check

Let's first verify the ability to access GPUs via Podman with this command:

sudo podman run --rm --device nvidia.com/gpu=all nvidia/cuda:11.0.3-base-ubuntu20.04 nvidia-smi

If prompted, select the image from docker.io.

The output should look similar to this, confirming your GPU setup is recognized:

+-----------------------------------------------------------------------------+
| NVIDIA-SMI 535.104.05   Driver Version: 535.104.05   CUDA Version: 12.2     |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|===============================+======================+======================|
|   0  NVIDIA L4            On  | 00000000:00:1E.0 Off |                    0 |
| N/A   35C    P8     9W /  72W |      0MiB / 22528MiB |      0%      Default |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                                  |
|  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
|        ID   ID                                                   Usage      |
|=============================================================================|
|  No running processes found                                                 |
+-----------------------------------------------------------------------------+

If you get an error message at this stage, check the installation of the NVIDIA drivers and the NVIDIA Container Toolkit.
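
If you would rather script this pre-check, the sketch below runs the same container with `nvidia-smi` in CSV query mode and parses the result. It is a minimal example under stated assumptions: the helper names `parse_gpu_csv` and `visible_gpus` are hypothetical, and the image name is written with the `docker.io/` prefix so that Podman does not prompt for a registry.

```python
import subprocess

# Same check as above, but asking nvidia-smi for machine-readable output.
# The docker.io/ prefix is an assumption made to avoid the registry prompt.
PODMAN_CHECK = [
    "sudo", "podman", "run", "--rm", "--device", "nvidia.com/gpu=all",
    "docker.io/nvidia/cuda:11.0.3-base-ubuntu20.04",
    "nvidia-smi", "--query-gpu=name,memory.total", "--format=csv,noheader,nounits",
]


def parse_gpu_csv(text):
    """Parse lines such as 'NVIDIA L4, 22528' into (name, memory_mib) tuples."""
    gpus = []
    for line in text.strip().splitlines():
        name, memory_mib = (field.strip() for field in line.rsplit(",", 1))
        gpus.append((name, int(memory_mib)))
    return gpus


def visible_gpus(command=PODMAN_CHECK):
    """Run the container check and return the GPUs it reports.

    Raises subprocess.CalledProcessError if the container or nvidia-smi fails,
    which usually points at the driver or container toolkit installation.
    """
    result = subprocess.run(command, capture_output=True, text=True, check=True)
    return parse_gpu_csv(result.stdout)
```

Calling `visible_gpus()` on the RHEL host should return one entry per GPU that the container can see; an empty list or an exception means the pre-check failed.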

Running Red Hat AI Inference Server

Now that we've validated we can access the GPUs via Podman, we can download and run the Red Hat AI Inference Server image from the Red Hat registry.

Step 1: Log in to the Red Hat registry

First, get login credentials for the Red Hat registry by following the instructions on this page.

Log in to the Red Hat registry with your credentials:

podman login registry.redhat.io

Step 2: Pull the Red Hat AI Inference Server image

Start by pulling the CUDA-enabled Red Hat AI Inference Server container image:

podman pull registry.redhat.io/rhaiis/vllm-cuda-rhel9:3.0.0

Step 3: Set your Hugging Face token

To authenticate with Hugging Face and download the Whisper model, set your token in the environment. Get your token from https://huggingface.co/settings/tokens.

export HF_TOKEN=your_huggingface_token_here

Step 4: Run the Red Hat AI Inference Server container

Create a src folder in your working directory on the RHEL host. It is mounted into the container and persists the model once it's downloaded from Hugging Face:

mkdir -p src
chmod a+rwX src

Now, launch the Whisper inference service using the following Podman command:

podman run --rm \
  --device nvidia.com/gpu=all \
  --ipc=host \
  -p 8000:8000 \
  --env "HUGGING_FACE_HUB_TOKEN=$HF_TOKEN" \
  --env "HF_HUB_OFFLINE=0" \
  -v ./src:/opt/app-root/src \
  --name=rhaiis \
  registry.redhat.io/rhaiis/vllm-cuda-rhel9:3.0.0 \
  --model openai/whisper-large-v3

Once started, you should see log output confirming the API server is running:

INFO 05-15 13:35:22 [api_server.py:1081] Starting vLLM API server on http://0.0.0.0:8000

INFO 05-15 13:35:22 [launcher.py:26] Available routes are:

INFO 05-15 13:35:22 [launcher.py:34] Route: /openapi.json, Methods: HEAD, GET

INFO 05-15 13:35:22 [launcher.py:34] Route: /docs, Methods: HEAD, GET

INFO 05-15 13:35:22 [launcher.py:34] Route: /docs/oauth2-redirect, Methods: HEAD, GET

INFO 05-15 13:35:22 [launcher.py:34] Route: /redoc, Methods: HEAD, GET

INFO 05-15 13:35:22 [launcher.py:34] Route: /health, Methods: GET

INFO 05-15 13:35:22 [launcher.py:34] Route: /load, Methods: GET

INFO 05-15 13:35:22 [launcher.py:34] Route: /ping, Methods: GET, POST

INFO 05-15 13:35:22 [launcher.py:34] Route: /tokenize, Methods: POST

INFO 05-15 13:35:22 [launcher.py:34] Route: /detokenize, Methods: POST

INFO 05-15 13:35:22 [launcher.py:34] Route: /v1/models, Methods: GET

INFO 05-15 13:35:22 [launcher.py:34] Route: /version, Methods: GET

INFO 05-15 13:35:22 [launcher.py:34] Route: /v1/chat/completions, Methods: POST

INFO 05-15 13:35:22 [launcher.py:34] Route: /v1/completions, Methods: POST

INFO 05-15 13:35:22 [launcher.py:34] Route: /v1/embeddings, Methods: POST

INFO 05-15 13:35:22 [launcher.py:34] Route: /pooling, Methods: POST

INFO 05-15 13:35:22 [launcher.py:34] Route: /score, Methods: POST

INFO 05-15 13:35:22 [launcher.py:34] Route: /v1/score, Methods: POST

INFO 05-15 13:35:22 [launcher.py:34] Route: /v1/audio/transcriptions, Methods: POST

INFO 05-15 13:35:22 [launcher.py:34] Route: /rerank, Methods: POST

INFO 05-15 13:35:22 [launcher.py:34] Route: /v1/rerank, Methods: POST

INFO 05-15 13:35:22 [launcher.py:34] Route: /v2/rerank, Methods: POST

INFO 05-15 13:35:22 [launcher.py:34] Route: /invocations, Methods: POST

INFO 05-15 13:35:22 [launcher.py:34] Route: /metrics, Methods: GET

INFO: Started server process [1]

INFO: Waiting for application startup.

INFO: Application startup complete.

Step 5: Set up an SSH tunnel to the RHEL VM

To access the running API from your local machine, the simplest method is to use SSH port forwarding. From a second terminal session, run:

ssh -i "rhaiis.pem" -L 8000:localhost:8000 username@server.hostname

Step 6: Clone and run the sample UI application

We've provided a sample Whisper UI application to test audio transcription. Start by cloning the repository on your local machine:

git clone https://github.com/rh-aiservices-bu/vllm-whisper
cd vllm-whisper

Set up a virtual Python environment

Ensure Python 3.8+ is installed. Create and activate a virtual environment:

python3 -m venv venv
source venv/bin/activate

Install dependencies

Navigate to the ui directory and install packages:

cd ui
pip install -r requirements.txt

Set Whisper API endpoint

Configure the API endpoint:

export WHISPER_URL=http://localhost:8000

Run the Streamlit application

Launch the UI with:

streamlit run app.py

Testing the transcription

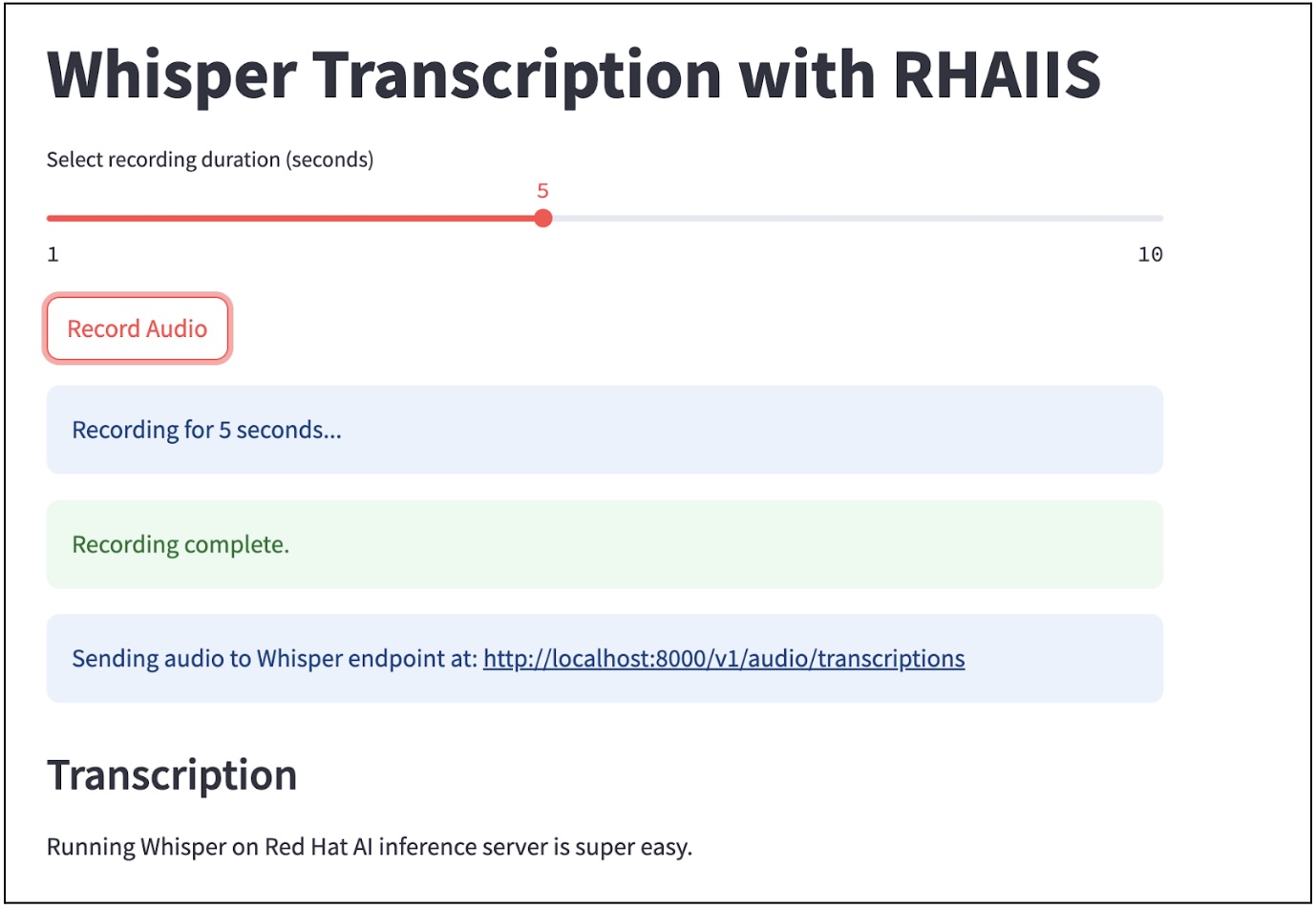

Once the application is running, you can interact with Whisper directly from your browser at http://localhost:8501/ as shown in Figure 1.

When you click the Record Audio button, the application captures a 5-second audio clip using your microphone, sends it as a WAV file to the Whisper server, and displays the transcribed text in the UI.
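
If you want to exercise the endpoint without the UI, the following standard-library sketch performs roughly the same round trip: it checks the `/health` route, builds a WAV clip, and posts it to `/v1/audio/transcriptions`. It is not the sample application's code. The helper names are hypothetical, the form field names `file` and `model` and the `text` response key are assumed to follow the OpenAI-style transcription API, and the generated sine tone is only placeholder audio that you would replace with a real recording.

```python
import io
import json
import math
import struct
import urllib.request
import uuid
import wave


def make_test_wav(seconds=5.0, rate=16000, freq=440.0):
    """Return WAV bytes holding a mono 16-bit sine tone (placeholder audio)."""
    frames = bytearray()
    for n in range(int(seconds * rate)):
        sample = int(0.3 * 32767 * math.sin(2 * math.pi * freq * n / rate))
        frames += struct.pack("<h", sample)
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(rate)
        wav.writeframes(bytes(frames))
    return buf.getvalue()


def encode_multipart(fields, file_field, filename, file_bytes, boundary=None):
    """Encode text fields plus one file as a multipart/form-data body."""
    boundary = boundary or uuid.uuid4().hex
    parts = []
    for name, value in fields.items():
        parts.append(
            f'--{boundary}\r\nContent-Disposition: form-data; name="{name}"'
            f"\r\n\r\n{value}\r\n".encode()
        )
    parts.append(
        f'--{boundary}\r\nContent-Disposition: form-data; name="{file_field}"; '
        f'filename="{filename}"\r\nContent-Type: audio/wav\r\n\r\n'.encode()
        + file_bytes
        + b"\r\n"
    )
    parts.append(f"--{boundary}--\r\n".encode())
    return b"".join(parts), f"multipart/form-data; boundary={boundary}"


def server_ready(base_url="http://localhost:8000"):
    """Return True if the /health route answers with HTTP 200."""
    try:
        with urllib.request.urlopen(f"{base_url}/health", timeout=5) as resp:
            return resp.status == 200
    except OSError:
        return False


def transcribe(wav_bytes, base_url="http://localhost:8000",
               model="openai/whisper-large-v3"):
    """POST a WAV clip to the transcription route and return the text field."""
    # Assumption: the server accepts OpenAI-style "file" and "model" form fields.
    body, content_type = encode_multipart(
        {"model": model}, "file", "clip.wav", wav_bytes
    )
    request = urllib.request.Request(
        f"{base_url}/v1/audio/transcriptions",
        data=body,
        headers={"Content-Type": content_type},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=60) as resp:
        return json.load(resp)["text"]
```

With the SSH tunnel from Step 5 open, you could call `server_ready()` first and then `transcribe(open("recording.wav", "rb").read())`, where `recording.wav` stands for any WAV file you have recorded.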

Conclusion

Running Whisper on Red Hat AI Inference Server with RHEL 9 is straightforward once you've set up the necessary GPU support and container environment. With Podman and the sample Streamlit UI application, you can quickly deploy and test speech recognition on your own infrastructure.

Last updated: June 11, 2025