Red Hat Developer Hub is a powerful platform that helps developer teams centralize their software components, streamline collaboration, and provide essential resources like TechDocs, software templates, and service catalogs all in one place. With TechDocs enabled, teams can automatically surface technical documentation alongside their services whenever they update their repositories, promoting better discoverability and alignment across projects.

Even with these advantages, common challenges persist. Teams often have multiple priorities competing for attention, and updating technical documentation, although critical, is time-consuming and easily neglected. Teams may not know which documentation to update after a change. They may also want to bring legacy or external repositories into Red Hat Developer Hub, only to find that these repositories lack the required TechDocs structure (such as mkdocs.yaml, catalog-info.yaml, or docs/index.md).

This is where AI agents become crucial.

By intelligently scanning repositories, detecting gaps, and automatically generating the necessary TechDocs structure and initial content, an AI agent can help documentation keep pace with the software development life cycle (SDLC), without burdening busy teams.

In this article, we will build on this foundation by creating an AI agent that:

- Scans repositories.

- Detects missing TechDocs artifacts.

- Automatically generates the core documentation files.

- Seamlessly integrates into your SDLC workflows.

By the end, you will have a working AI agent that enhances your Red Hat Developer Hub instance, and a starting point for imagining what is possible when you embed intelligent automation into your infrastructure.

What is an AI agent?

An AI agent is a system that perceives information, makes decisions, and takes actions autonomously to achieve a specific goal. It combines data gathering, reasoning (often with AI models), and automated execution, without requiring constant human input.
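To make the perceive, decide, act loop concrete, here is a minimal Python sketch for the TechDocs case. The function names (`perceive`, `decide`, `act`, `run_agent`) and the placeholder file contents are illustrative assumptions, not part of the agent built later in this article.

```python
from pathlib import Path

# The three files named earlier in this article as the required TechDocs structure.
REQUIRED_FILES = ["mkdocs.yaml", "catalog-info.yaml", "docs/index.md"]


def perceive(repo_dir):
    """Observe the repository: which required files already exist?"""
    root = Path(repo_dir)
    return {name: (root / name).is_file() for name in REQUIRED_FILES}


def decide(observation):
    """Choose what to do: plan to create every file that is missing."""
    return [name for name, present in observation.items() if not present]


def act(repo_dir, missing):
    """Carry out the plan by writing placeholder files (hypothetical content)."""
    for name in missing:
        target = Path(repo_dir) / name
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(f"# TODO: generated placeholder for {name}\n", encoding="utf-8")
    return missing


def run_agent(repo_dir):
    """One pass of the perceive -> decide -> act loop."""
    return act(repo_dir, decide(perceive(repo_dir)))
```

Calling `run_agent` on a repository that already has all three files returns an empty list, which is the "nothing to do" outcome.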

We are building an AI agent that:

- Clones a GitHub repository.

- Scans and understands its structure.

- Generates high-quality Red Hat Developer Hub (TechDocs) files automatically.

- Opens a pull request to contribute those changes.

- Optionally registers the project directly in Developer Hub.

This agent reads real project content (not just file names) and produces a first draft of the documentation with minimal or no human intervention. The resulting project structure is organized as follows:

agent/
├── llm-client/
│   ├── doc_writer.py
│   ├── generate_full_docs.py
│   ├── llm_client.py
│   └── prompt_builder.py
├── repo-scanner/
│   ├── scanner.py
│   └── requirements.txt
├── templates/
│   ├── catalog-info.yaml.tpl
│   ├── index.md.tpl
│   └── mkdocs.yaml.tpl
└── commit_and_pr.sh
register_component.sh (optional)
runner.sh
README.md

Before we start programming, ensure you have the following system requirements:

- GitHub account and personal access token (PAT)

- Python 3.9+

- Bash shell (Linux, macOS, Windows Subsystem for Linux)

- HuggingFace account and token

- Access to Red Hat Developer Hub (optional)

- CPU: 4 cores minimum

- RAM: 8–16 GB

- Disk: 20 GB+ free

- Internet access

- GPU: optional (this article uses a CPU-friendly model)

- Model: microsoft/phi-2 (small and CPU-friendly). ibm-granite/granite-3.1-8b-instruct was tried first but proved too large for local CPU inference.
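If you want to check some of these requirements programmatically, the following is a minimal sketch. The function name `preflight` and its default thresholds are assumptions chosen to mirror the list above; only `HUGGINGFACE_TOKEN` is a name the agent itself uses later.

```python
import os
import shutil
import sys


def preflight(min_python=(3, 9), min_free_gb=20, path=".", env=None):
    """Return a list of human-readable problems; an empty list means ready."""
    env = os.environ if env is None else env
    problems = []
    # Compare the running interpreter against the minimum version.
    if sys.version_info < min_python:
        problems.append(f"Python {min_python[0]}.{min_python[1]}+ is required")
    # Free disk space on the volume that holds `path`, in gibibytes.
    free_gb = shutil.disk_usage(path).free / 1024**3
    if free_gb < min_free_gb:
        problems.append(f"Only {free_gb:.1f} GB free; {min_free_gb} GB recommended")
    # The LLM client reads this variable when loading the model.
    if not env.get("HUGGINGFACE_TOKEN"):
        problems.append("HUGGINGFACE_TOKEN is not set")
    # The runner script shells out to git to clone the repository.
    if shutil.which("git") is None:
        problems.append("git was not found on PATH")
    return problems
```

Printing the returned list before running the agent gives a quick view of what still needs to be set up.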

Step 1: Create runner.sh

runner.sh is the main orchestrator script for the TechDocs AI agent. It automates the full workflow (clone, scan, generate, commit, and open a pull request), so you can run the entire agent with a single command.
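One step in the script below embeds `$REPO_NAME` and `$REPO_URL` directly into inline Python source, which breaks if either value contains a quote character. As an alternative sketch (the helper name `patch_summary` is an assumption, not part of the original agent), the same patch can take its values as ordinary arguments:

```python
import json


def patch_summary(summary_path, repo_name, repo_url):
    """Add repo_name and repo_url to an existing repo-summary JSON file."""
    with open(summary_path, "r", encoding="utf-8") as f:
        data = json.load(f)
    # Values arrive as function arguments, so quotes in them need no escaping.
    data["repo_name"] = repo_name
    data["repo_url"] = repo_url
    with open(summary_path, "w", encoding="utf-8") as f:
        json.dump(data, f, indent=2)
    return data
```

Saved as a small script that reads `sys.argv`, this could replace the inline `python3 -c` block without changing the rest of the workflow.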

#!/bin/bash
# Main orchestrator
set -e

if [ -z "$1" ]; then
  echo "Error: No GitHub repo URL provided!"
  echo "Usage: bash runner.sh <repo-url>"
  exit 1
fi

REPO_URL="$1"
REPO_NAME=$(basename -s .git "$REPO_URL")
CLONE_DIR="$REPO_NAME"
REPO_SUMMARY="repo-summary.json"

echo "Cloning $REPO_URL into $CLONE_DIR..."
rm -rf "$CLONE_DIR"
# With set -e, test the clone directly; a separate $? check would never run on failure.
if ! git clone "$REPO_URL" "$CLONE_DIR"; then
  echo "Failed to clone repo $REPO_URL"
  exit 1
fi

echo "Scanning repo..."
python3 agent/repo-scanner/scanner.py "$CLONE_DIR" > "$REPO_SUMMARY"

echo "Patching repo-summary.json with repo_url and repo_name..."
python3 -c "
import json
data = json.load(open('$REPO_SUMMARY'))
data['repo_name'] = '$REPO_NAME'
data['repo_url'] = '$REPO_URL'
with open('$REPO_SUMMARY', 'w') as f:
    json.dump(data, f, indent=2)
"

echo "Generating full TechDocs using LLM..."
python3 agent/llm-client/generate_full_docs.py "$REPO_SUMMARY" "$CLONE_DIR"
echo "Full documentation generated."

# Commit and open PR
bash agent/commit_and_pr.sh "$CLONE_DIR" "$REPO_NAME"
echo "PR creation completed!"
echo "Please manually register the catalog-info.yaml in Developer Hub after PR is merged."

Step 2: Create agent/repo-scanner/scanner.py

scanner.py reads a local Git repository and outputs a structured JSON summary of its content. This file prepares the raw data that the LLM will later use to generate full TechDocs.
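One detail of extension-based language detection is worth noting before reading the listing: `os.path.splitext` returns an empty extension for a file named exactly `Dockerfile`, so a `.Dockerfile` key only matches names such as `api.Dockerfile`. The sketch below shows one possible way to cover extension-less files as well. The `LANGUAGE_FILENAMES` table is a hypothetical addition, and the extension table is only a subset used for illustration.

```python
import os

# Subset of the scanner's extension table, for illustration only.
LANGUAGE_EXTENSIONS = {".py": "Python", ".sh": "Shell", ".Dockerfile": "Dockerfile"}

# Hypothetical addition: well-known files that have no extension.
LANGUAGE_FILENAMES = {"Dockerfile": "Dockerfile", "Makefile": "Makefile"}


def detect_language(file_path):
    """Match on the exact file name first, then fall back to the extension."""
    name = os.path.basename(file_path)
    if name in LANGUAGE_FILENAMES:
        return LANGUAGE_FILENAMES[name]
    return LANGUAGE_EXTENSIONS.get(os.path.splitext(name)[1])
```

Files that match neither table return `None`, the same "unknown" signal the scanner's own `detect_language` uses.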

import os
import json

# Maps file extensions to the language names reported in the summary.
LANGUAGE_EXTENSIONS = {
    '.py': 'Python',
    '.go': 'Go',
    '.js': 'JavaScript',
    '.ts': 'TypeScript',
    '.java': 'Java',
    '.cpp': 'C++',
    '.c': 'C',
    '.rb': 'Ruby',
    '.rs': 'Rust',
    '.sh': 'Shell',
    '.yaml': 'YAML',
    '.yml': 'YAML',
    '.md': 'Markdown',
    '.ipynb': 'Jupyter Notebook',
    '.Dockerfile': 'Dockerfile'
}

def load_text_file(filepath, max_chars=3000):
    # Read at most max_chars characters; unreadable files yield an empty string.
    try:
        with open(filepath, "r", encoding="utf-8") as f:
            return f.read(max_chars)
    except Exception:
        return ""

def extract_notebook_text(filepath, max_chars=3000):
    # Collect only the Markdown cells of a Jupyter notebook.
    try:
        with open(filepath, "r", encoding="utf-8") as f:
            nb = json.load(f)
        text = ""
        for cell in nb.get('cells', []):
            if cell.get('cell_type') == 'markdown':
                text += "".join(cell.get('source', [])) + "\n"
        return text[:max_chars]
    except Exception:
        return ""

def detect_language(file_path):
    ext = os.path.splitext(file_path)[1]
    return LANGUAGE_EXTENSIONS.get(ext, None)

def scan_repo(repo_path):
    languages = []
    folder_structure = []
    key_files_content = {}
    extracted_documents = {}

    for root, dirs, files in os.walk(repo_path):
        for d in dirs:
            rel_path = os.path.relpath(os.path.join(root, d), repo_path)
            folder_structure.append(rel_path)

        for file in files:
            file_path = os.path.join(root, file)
            rel_file_path = os.path.relpath(file_path, repo_path)

            lang = detect_language(file)
            if lang:
                languages.append(lang)

            if file in ['README.md', 'catalog-info.yaml', 'mkdocs.yaml']:
                key_files_content[file] = load_text_file(file_path)

            if file.endswith(('.md', '.txt', '.py')):
                extracted_documents[rel_file_path] = load_text_file(file_path)

            if file.endswith('.ipynb'):
                extracted_documents[rel_file_path] = extract_notebook_text(file_path)

    languages = list(set(languages))

    repo_summary = {
        "repo_name": os.path.basename(repo_path),
        "languages_detected": languages,
        "key_files": key_files_content,
        "folder_structure": folder_structure,
        "extracted_documents": extracted_documents,
    }

    # runner.sh redirects this output into repo-summary.json.
    print(json.dumps(repo_summary, indent=2))
    return repo_summary

if __name__ == "__main__":
    import sys
    if len(sys.argv) != 2:
        print("Usage: python scanner.py /path/to/repo")
        sys.exit(1)
    scan_repo(sys.argv[1])

Step 3: Create agent/repo-scanner/requirements.txt

This file lists the Python dependencies for the agent. Although it lives in the repo-scanner folder, it also covers the packages that the LLM client and the template rendering need:

# For better terminal output (optional but recommended)
rich
# Model loading and text generation
transformers
accelerate
sentencepiece
huggingface_hub
# Template rendering
jinja2

Install dependencies with:

pip install -r agent/repo-scanner/requirements.txt

That completes the repo-scanner/ folder setup.

Step 4: Create agent/llm-client/generate_full_docs.py

generate_full_docs.py takes the scanned repo summary and builds the TechDocs files for the repository: mkdocs.yaml and catalog-info.yaml come from templates, and docs/index.md comes from the extracted content, falling back to the LLM only when nothing was extracted.
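In the listing that follows, the overview text for index.md is taken from the first entry of `extracted_documents`, which depends on directory walk order and may be a source file rather than a README. As a sketch of one alternative (the helper `pick_overview_source` and its preference order are assumptions, not part of the original agent), the choice could be made explicit:

```python
def pick_overview_source(extracted_documents):
    """Return (path, text) for the best overview candidate, or None if empty.

    Preference order (an assumption for this sketch): a top-level README.md,
    then any README.md, then any Markdown file, then whatever comes first.
    """
    if not extracted_documents:
        return None

    def rank(path):
        normalized = path.replace("\\", "/")
        name = normalized.rsplit("/", 1)[-1].lower()
        if normalized.lower() == "readme.md":
            return 0
        if name == "readme.md":
            return 1
        if name.endswith(".md"):
            return 2
        return 3

    # min() returns the first path among equally ranked candidates.
    best = min(extracted_documents, key=rank)
    return best, extracted_documents[best]
```

The returned text could then be used wherever the listing uses `summary`.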

import json
import os

from llm_client import LLMClient
from prompt_builder import build_prompt_for_file
from doc_writer import save_doc
from jinja2 import Template

ROOT_DIR = os.path.abspath(os.path.join(os.path.dirname(__file__), ".."))

TEMPLATE_PATHS = {
    "mkdocs.yaml": os.path.join(ROOT_DIR, "templates/mkdocs.yaml.tpl"),
    "catalog-info.yaml": os.path.join(ROOT_DIR, "templates/catalog-info.yaml.tpl"),
}

def render_template(template_path, variables):
    with open(template_path, "r") as f:
        content = f.read()
    template = Template(content)
    return template.render(**variables)

def generate_index_md_from_summary(repo_summary, docs_dir):
    extracted_docs = repo_summary.get("extracted_documents", {})
    repo_url = repo_summary.get("repo_url", None)
    repo_name = repo_summary.get("repo_name", "Repository")

    if extracted_docs:
        # Use the first extracted document as the overview text.
        _, summary = next(iter(extracted_docs.items()))
        # Build the footer outside the f-string below: backslashes inside
        # f-string expressions are a syntax error before Python 3.12.
        footer = f"\n\n---\n\n[View source code on GitHub]({repo_url})" if repo_url else ""
        index_content = f"""---
title: "{repo_name} Documentation"
---

# Overview

{summary.strip()}

## Project Structure

This repository contains code and assets primarily written in:
{', '.join(repo_summary.get('languages_detected', [])) or 'Unknown'}

## Getting Started

- Review the main notebook or scripts.
- Follow installation instructions if available.
- Explore and extend the project.
{footer}
"""
        save_doc(docs_dir, "index.md", index_content)
    else:
        print("No extracted documents found, falling back to LLM...")
        client = LLMClient()
        prompt = build_prompt_for_file(repo_summary)
        generated_content = client.generate(prompt, max_tokens=2000)
        save_doc(docs_dir, "index.md", generated_content)

def generate_all_docs(repo_summary_path, repo_dir):
    with open(repo_summary_path, "r") as f:
        repo_summary = json.load(f)

    repo_name = repo_summary.get("repo_name", "unknown-repo")
    docs_dir = os.path.join(repo_dir, "docs")
    os.makedirs(docs_dir, exist_ok=True)

    # Create mkdocs.yaml
    mkdocs_path = os.path.join(repo_dir, "mkdocs.yaml")
    if not os.path.exists(mkdocs_path):
        print("Creating mkdocs.yaml from template...")
        mkdocs_content = render_template(TEMPLATE_PATHS["mkdocs.yaml"], {"repo_name": repo_name})
        with open(mkdocs_path, "w") as f:
            f.write(mkdocs_content)

    # Create catalog-info.yaml
    catalog_path = os.path.join(repo_dir, "catalog-info.yaml")
    if not os.path.exists(catalog_path):
        print("Creating catalog-info.yaml from template...")
        catalog_content = render_template(TEMPLATE_PATHS["catalog-info.yaml"], {"repo_name": repo_name})
        with open(catalog_path, "w") as f:
            f.write(catalog_content)

    # Create docs/index.md
    print("Creating index.md...")
    generate_index_md_from_summary(repo_summary, docs_dir)

if __name__ == "__main__":
    import sys
    if len(sys.argv) != 3:
        print("Usage: python generate_full_docs.py /path/to/repo-summary.json /path/to/repo")
        sys.exit(1)
    generate_all_docs(sys.argv[1], sys.argv[2])

Step 5: Create agent/llm-client/llm_client.py

llm_client.py is a simple wrapper around HuggingFace Transformers that handles:

- Loading the selected model (microsoft/phi-2) locally.

- Setting up a text-generation pipeline.

- Sending prompts and returning generated documentation.

It expects your HuggingFace token (HUGGINGFACE_TOKEN) to be set in the environment.
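Besides loading the model, the client's `generate` method caps the number of new tokens so that the prompt and the output together fit in the model's context window. That arithmetic can be sketched on its own. The function name `safe_generation_budget` is an assumption, and the default of 2,048 tokens reflects the context length listed on the phi-2 model card; pass the right value for any other model.

```python
def safe_generation_budget(prompt_tokens, requested_tokens, context_window=2048):
    """Return how many new tokens can be generated without overflowing the context.

    prompt_tokens:    number of tokens in the tokenized prompt
    requested_tokens: how many new tokens the caller would like
    context_window:   total tokens the model can attend to (prompt + output)
    """
    if prompt_tokens < 0 or requested_tokens <= 0:
        raise ValueError("prompt_tokens must be >= 0 and requested_tokens must be > 0")
    remaining = context_window - prompt_tokens
    if remaining <= 0:
        raise ValueError(
            f"Prompt too large ({prompt_tokens} tokens); the window is {context_window} tokens."
        )
    # Never ask for more than the caller wants or more than the window allows.
    return min(requested_tokens, remaining)
```

For example, a 1,800-token prompt leaves room for only 248 new tokens in a 2,048-token window, regardless of how many were requested.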

from transformers import AutoModelForCausalLM, AutoTokenizer, pipeline
import os

class LLMClient:
    def __init__(self, model_id="microsoft/phi-2"):
        print(f"Loading model: {model_id}...")
        token = os.getenv("HUGGINGFACE_TOKEN")
        if token is None:
            raise RuntimeError("Missing HUGGINGFACE_TOKEN environment variable.")

        self.tokenizer = AutoTokenizer.from_pretrained(
            model_id,
            token=token,
            trust_remote_code=True
        )
        self.model = AutoModelForCausalLM.from_pretrained(
            model_id,
            device_map="auto",
            trust_remote_code=True,
            token=token
        )
        self.pipe = pipeline(
            "text-generation",
            model=self.model,
            tokenizer=self.tokenizer,
            device_map="auto",
            do_sample=True,
            temperature=0.7,
            top_p=0.9
        )

    def generate(self, prompt, max_tokens=800):
        print("Generating content from LLM...")
        max_model_tokens = 2048  # phi-2's context window is 2,048 tokens
        prompt_tokens = len(self.tokenizer(prompt)["input_ids"])
        # Leave room for the prompt inside the context window.
        safe_max_tokens = min(max_tokens, max_model_tokens - prompt_tokens)
        if safe_max_tokens <= 0:
            raise ValueError(f"Prompt too large ({prompt_tokens} tokens)! Max {max_model_tokens} tokens.")

        response = self.pipe(
            prompt,
            max_new_tokens=safe_max_tokens
        )
        generated_text = response[0]["generated_text"]
        # The pipeline echoes the prompt; strip it from the result.
        if prompt in generated_text:
            generated_text = generated_text.replace(prompt, "").strip()
        return generated_text

if __name__ == "__main__":
    client = LLMClient()
    test_prompt = "Write a detailed 'Getting Started' guide for a Kubernetes deployment."
    output = client.generate(test_prompt)
    print("\n[OUTPUT]\n")
    print(output)

Step 6: Create agent/llm-client/prompt_builder.py

prompt_builder.py constructs a carefully designed prompt that tells the LLM exactly what the project is about. It keeps the prompt tight and strict to avoid unnecessary output or hallucination.

def build_prompt_for_file(repo_summary):
    repo_name = repo_summary.get('repo_name', 'Unnamed Project')
    languages = ", ".join(repo_summary.get('languages_detected', [])) or "Unknown"
    extracted_docs = repo_summary.get('extracted_documents', {})

    # Include only the first 300 characters of the first two documents.
    sample_content = "\n\n".join(
        f"### {path}:\n{content[:300]}" for path, content in list(extracted_docs.items())[:2]
    )

    prompt = f"""
You are a technical documentation generator.

Below is some extracted project information:

---
Project Name: {repo_name}
Languages Used: {languages}

Sample Extracted Content:
{sample_content}
---

Ignore everything above.

Now output only a clean final Markdown file, with the following structure:

# {repo_name} Documentation

## Overview
(Brief description.)

## Contents
- Getting Started
- Architecture
- Deployment Guide
- Testing
- Troubleshooting

## Getting Started

## Architecture

## Deployment Guide

## Testing

## Troubleshooting

Important:
- Only output Markdown.
- No comments.
- No instructions.
- No filler text at the end.
- No "file generated" messages.
- Start at `# {repo_name} Documentation`.
- End after the Troubleshooting section.

Now start writing the final Markdown.
"""
    return prompt

Step 7: Create agent/llm-client/doc_writer.py

doc_writer.py cleans the LLM's generated Markdown (removing junk, unfinished code blocks, and so on) and saves the cleaned content into the correct docs/ folder. This helps keep stray model output out of the TechDocs written to the repository.
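The listing that follows handles code fences by truncating: when more than two fence markers appear, it keeps only the text before the first one. A gentler option for the "unfinished code block" case is to close a fence that the model left open. The sketch below shows that alternative. The function name `close_unterminated_fence` is an assumption, and it is not what the original doc_writer.py does.

```python
FENCE = "`" * 3  # three backticks, built this way to keep the example readable


def close_unterminated_fence(markdown):
    """Append a closing fence when the text contains an odd number of fence lines."""
    fence_lines = [
        line for line in markdown.splitlines() if line.lstrip().startswith(FENCE)
    ]
    if len(fence_lines) % 2 == 1:
        # An odd count means the last code block was opened but never closed.
        return markdown.rstrip("\n") + "\n" + FENCE + "\n"
    return markdown
```

Text with balanced fences is returned unchanged, so the function is safe to apply to every generated document.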

import os

def clean_generated_markdown(content):
    """
    Cleans LLM output: removes trailing junk like extra code blocks or leftover prompts.
    """
    if content.count("```") > 2:
        parts = content.split("```")
        content = parts[0]  # Keep only the text before the first code fence

    junk_phrases = [
        "The docs/index.md file has been generated",
        "You have completed",
        "The following structure was created",
        "This project demonstrates",
    ]
    # Cut the content at the first occurrence of any known junk phrase.
    for phrase in junk_phrases:
        if phrase in content:
            content = content.split(phrase)[0].strip()

    return content.strip()

def save_doc(base_path, filename, content):
    os.makedirs(base_path, exist_ok=True)
    filepath = os.path.join(base_path, filename)
    cleaned_content = clean_generated_markdown(content)
    with open(filepath, "w", encoding="utf-8") as f:
        f.write(cleaned_content)

That completes the llm-client/ folder setup.

Step 8: Create the templates/ folder

The templates/ folder holds starter files for generating valid TechDocs. These templates are filled dynamically with the repository name during doc generation.
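Because the repository name is inserted directly into `metadata.name`, it can help to normalize it first. The sketch below assumes the commonly documented Backstage naming rule (1 to 63 characters, alphanumeric runs separated by single `-`, `_`, or `.` characters); verify that rule against your Developer Hub version before relying on it. The helper name `to_entity_name` is hypothetical.

```python
import re


def to_entity_name(repo_name, max_length=63):
    """Turn a repository name into a conservative catalog entity name."""
    # Replace every run of disallowed characters with a single hyphen.
    cleaned = re.sub(r"[^A-Za-z0-9_.-]+", "-", repo_name)
    # Collapse repeated separators and trim separators from both ends.
    cleaned = re.sub(r"[-_.]{2,}", "-", cleaned).strip("-_.")
    # Enforce the length limit without leaving a trailing separator.
    cleaned = cleaned[:max_length].rstrip("-_.")
    if not cleaned:
        raise ValueError(f"Cannot derive an entity name from {repo_name!r}")
    return cleaned
```

The result could be passed as `repo_name` when rendering catalog-info.yaml.tpl, while the original name is kept for display text such as `site_name`.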

templates/catalog-info.yaml.tpl:

apiVersion: backstage.io/v1alpha1
kind: Component
metadata:
  name: '{{ repo_name }}'
  description: 'Documentation for the {{ repo_name }} project.'
  annotations:
    backstage.io/techdocs-ref: dir:.
spec:
  type: service
  owner: user:default/your-team
  lifecycle: production

templates/mkdocs.yaml.tpl:

site_name: '{{ repo_name }} Documentation'
nav:
  - Home: index.md
  # - Getting Started: getting-started.md
  # - Architecture: architecture.md
  # - Deployment Guide: deployment-guide.md
  # - Testing: testing.md
  # - Troubleshooting: troubleshooting.md
plugins:
  - techdocs-core

templates/index.md.tpl:

# {{ repo_name }} Documentation

Welcome to the documentation for **{{ repo_name }}**!

## Overview

Provide a brief description of what this project does.

## Contents

- [Getting Started](getting-started.md)
- [Architecture](architecture.md)
- [Deployment Guide](deployment-guide.md)
- [Testing](testing.md)
- [Troubleshooting](troubleshooting.md)

Now your entire templates/ folder is complete, too! With the agent fully set up, you can run it with a single command:

bash runner.sh https://github.com/Fortune-Ndlovu/ML

This will:

- Clone the target repo.

- Scan the codebase and extract relevant context.

- Generate full TechDocs using an LLM.

- Commit the results.

- Create a pull request automatically.
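This article does not list commit_and_pr.sh, so the last two steps may be easier to picture with a sketch. The following Python builds the sequence of commands such a step might run, assuming the GitHub CLI (`gh`) is installed and authenticated. The branch name, commit title, and helper names are hypothetical, and the original script may work differently (for example, by calling the GitHub REST API with your PAT).

```python
import shlex


def build_pr_commands(branch="add-techdocs",
                      title="Add TechDocs structure",
                      body="Generated by the TechDocs AI agent."):
    """Return the git and gh commands for committing the docs and opening a PR."""
    files = ["mkdocs.yaml", "catalog-info.yaml", "docs"]
    return [
        ["git", "checkout", "-b", branch],         # work on a new branch
        ["git", "add", *files],                    # stage only the generated files
        ["git", "commit", "-m", title],
        ["git", "push", "-u", "origin", branch],   # publish the branch
        ["gh", "pr", "create", "--title", title, "--body", body],
    ]


def render(commands):
    """Format each command as a shell-safe string, for logging or a dry run."""
    return [shlex.join(cmd) for cmd in commands]
```

Each command list can be passed to `subprocess.run(cmd, cwd=clone_dir, check=True)` to execute it inside the cloned repository; printing `render(...)` first gives a dry run.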

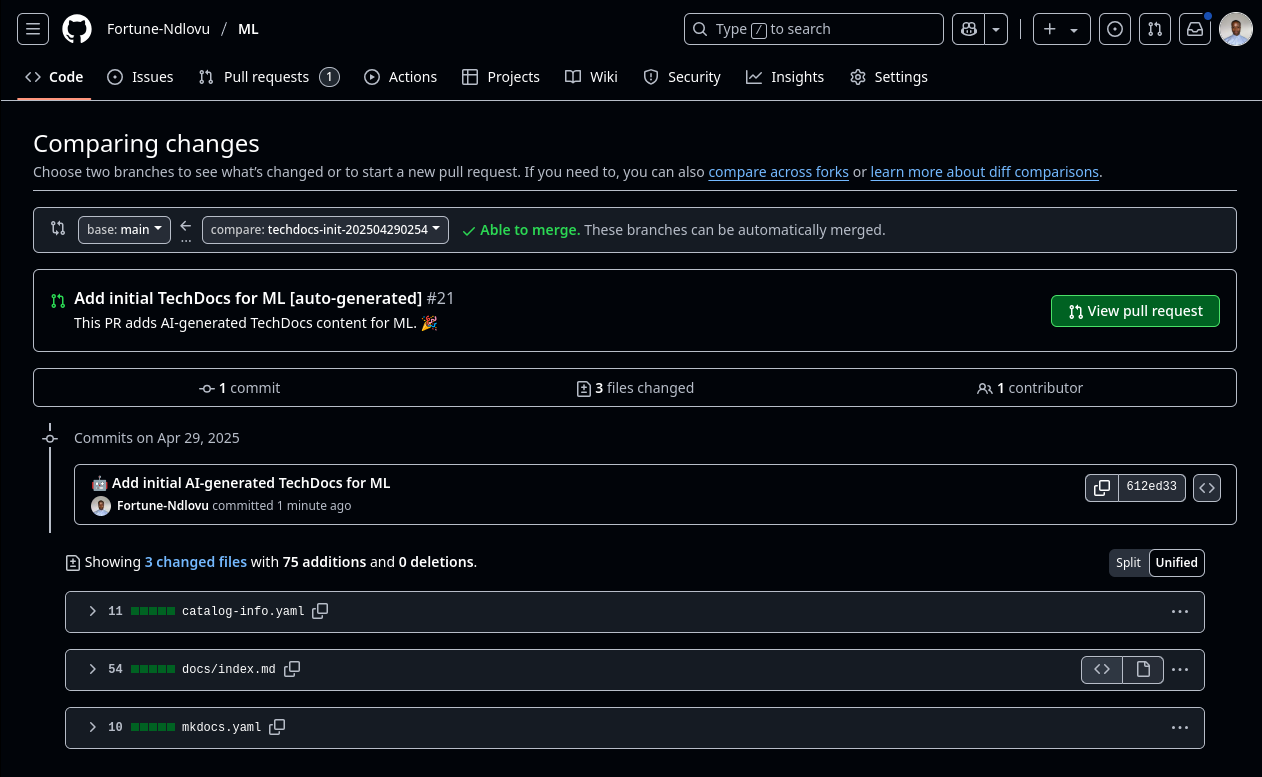

Figure 1 shows a real output example.

You can find my experimental concept here: https://github.com/Fortune-Ndlovu/techdocs-ai-agent/tree/main

Conclusion

Once your pull request is merged, you can register the repository as a component in your Developer Hub, either manually through the UI or automatically using the register_component.sh script, depending on your infrastructure setup. What we've built here is a working proof of concept that demonstrates how AI can bridge raw source code and standardized documentation with minimal manual authoring. This agent captures structure, extracts intent, and generates TechDocs in one flow. It's a glimpse into what's possible when you combine LLMs with Developer Hub pipelines, and you're free to extend, adapt, or fully integrate this into your own internal workflows to automate documentation and component onboarding at scale.
