A foundation for contributor-driven gen AI

Seamlessly and openly develop, test, and run Granite family LLMs to power your apps with Red Hat Enterprise Linux AI.

Discover Red Hat’s latest solutions for building applications with more flexibility, scalability, security, and reliability.

Rapidly build, test, and deploy applications that make work better for your organization.

A stable, proven foundation that’s versatile enough for rolling out new...

Open, hybrid-cloud Kubernetes platform to build, run, and scale...

Red Hat Ansible Automation Platform allows developers to set up automation to...

Learn about Red Hat products and start using them for yourself.

Discover how the RamaLama open source project can help isolate AI models for...

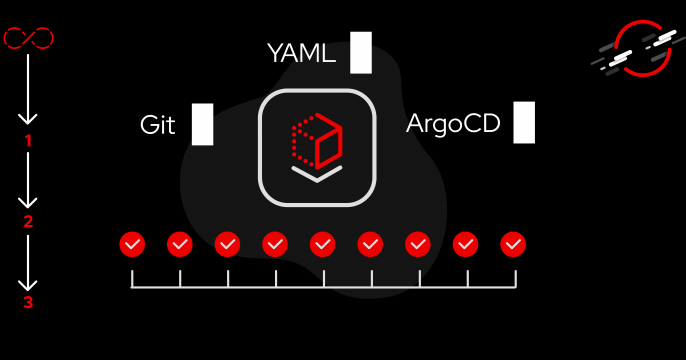

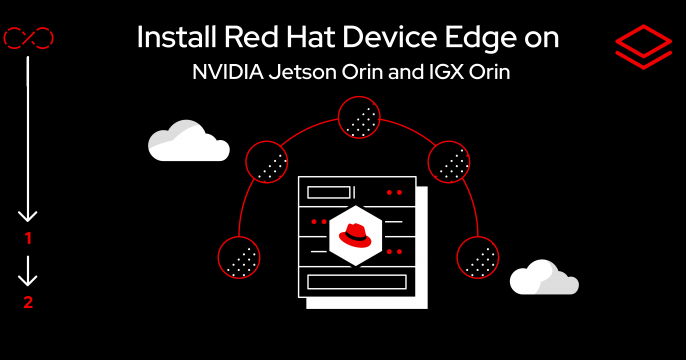

Discover how to install MicroShift and why it’s more compatible with Red...

Learn how to deploy confidential containers on bare metal with the Intel TDX...

Explore multimodal model quantization in LLM Compressor, a unified library...

Boost your technical skills to expert-level with our interactive learning tutorials.

A Red Hat Developer membership comes with a ton of benefits, including no-cost access to products such as Red Hat Enterprise Linux (RHEL), Red Hat OpenShift, and Red Hat Ansible Automation Platform.

1 year of access to all Red Hat products

Developer learning resources

Virtual and in-person tech events

Red Hat Customer Portal access

Exclusive content

Access to the Developer Sandbox, a shared OpenShift and Kubernetes cluster for practicing your skills

Join us May 19-22 in Boston, Massachusetts, to network with product experts and build skills through training, labs, and interactive sessions.

Explore developer resources and tools that help you build, deliver, and manage innovative cloud-native apps and services.

Did you know that Linux users don’t need root privileges to create and...

C2y makes memcpy(NULL, NULL, 0) and other zero-length operations on null...

A look at four use cases where image mode will streamline your OS and its...

This year's top articles on AI include an introduction to GPU programming, a...

Explore insights, news, and tutorials on Red Hat developer tools, platforms, and more.