Red Hat build of Apache Camel

The Red Hat build of Apache Camel is an integration framework available as part of Red Hat Application Foundations and Red Hat Integration, or as a trial in the Developer Sandbox.

Try the Red Hat build of Apache Camel

Spin up our Developer Sandbox to test a selection of Red Hat products—including the Red Hat® build of Apache Camel. Available as a Red Hat OpenShift® Dev Spaces integrated development environment (IDE), our Developer Sandbox doesn't require local installs and lets you follow many of our learning paths, tutorials, and step-by-step guides that cover installation, getting to Hello World, and executing common tasks.

What is Apache Camel?

Apache Camel is an open source integration framework that implements enterprise integration patterns with ready-to-use building blocks so developers can create, test, and maintain data flows.

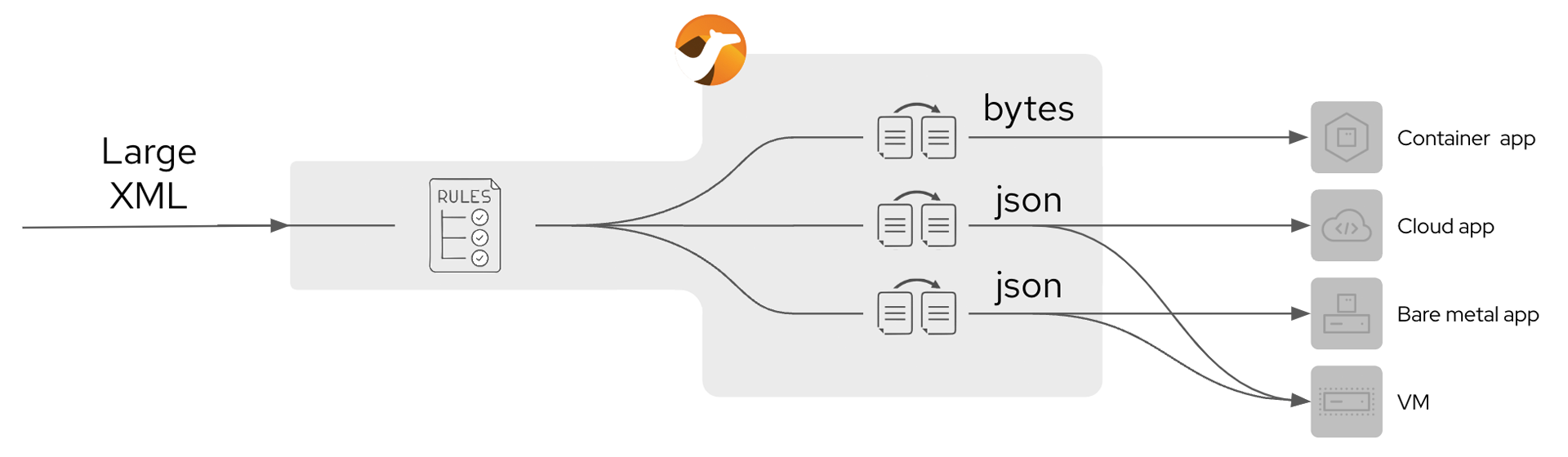

It can help process messages that are semantically equivalent but arrive in different formats. A normalizer uses a message router to send each incoming message to the message translator for its format, and each translator converts the message into a common format.
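The normalizer pattern described above can be sketched in a few lines of plain Java. This is an illustration of the pattern only, not Camel's API: the class name `NormalizerSketch`, the two sample input formats, and the `id`/`qty` field names are all assumptions made for the example. In a Camel route, the routing step would typically be written with the DSL's content-based router (`choice()` and `when()`).

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Function;
import java.util.function.Predicate;

// Hypothetical sketch of the normalizer pattern: a router picks the
// translator for each incoming format, and every translator produces
// the same common format (here, a map with "id" and "qty" keys).
final class NormalizerSketch {

    // Pairs a format check with the translator for that format.
    private static final class Route {
        final Predicate<String> matches;
        final Function<String, Map<String, String>> translator;

        Route(Predicate<String> matches, Function<String, Map<String, String>> translator) {
            this.matches = matches;
            this.translator = translator;
        }
    }

    private final List<Route> routes = new ArrayList<>();

    NormalizerSketch register(Predicate<String> matches,
                              Function<String, Map<String, String>> translator) {
        routes.add(new Route(matches, translator));
        return this;
    }

    // Message router: the first matching route decides which translator runs.
    Map<String, String> normalize(String raw) {
        for (Route route : routes) {
            if (route.matches.test(raw)) {
                return route.translator.apply(raw);
            }
        }
        throw new IllegalArgumentException("No translator for message: " + raw);
    }

    // Translator for an assumed comma-separated format, e.g. "A1,3".
    static Map<String, String> fromCsv(String raw) {
        String[] parts = raw.split(",");
        return Map.of("id", parts[0].trim(), "qty", parts[1].trim());
    }

    // Translator for an assumed key=value format, e.g. "id=A1;qty=3".
    static Map<String, String> fromKeyValue(String raw) {
        Map<String, String> fields = new HashMap<>();
        for (String pair : raw.split(";")) {
            String[] kv = pair.split("=", 2);
            fields.put(kv[0].trim(), kv[1].trim());
        }
        return fields;
    }

    // Wires up both sample formats; key=value is checked first.
    static NormalizerSketch withDefaults() {
        return new NormalizerSketch()
                .register(raw -> raw.contains("="), NormalizerSketch::fromKeyValue)
                .register(raw -> raw.contains(","), NormalizerSketch::fromCsv);
    }
}
```

With this sketch, `NormalizerSketch.withDefaults().normalize("A1,3")` and `normalize("id=A1;qty=3")` yield equal maps, which is the point of the pattern: downstream steps only ever see the common format.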

While all-in-one application server architectures like OSGi (Karaf) or Java Enterprise Edition (JBoss EAP) are no longer supported by the Red Hat build of Apache Camel, Spring Boot and Quarkus remain the standard cloud-native and microservices-based application runtimes.

Get started with the Red Hat build of Apache Camel

We've consolidated our most popular learning paths, labs, tutorials, case studies, walkthroughs, solution patterns, and more to give you a head start on making the Red Hat build of Apache Camel a useful part of your development stack.

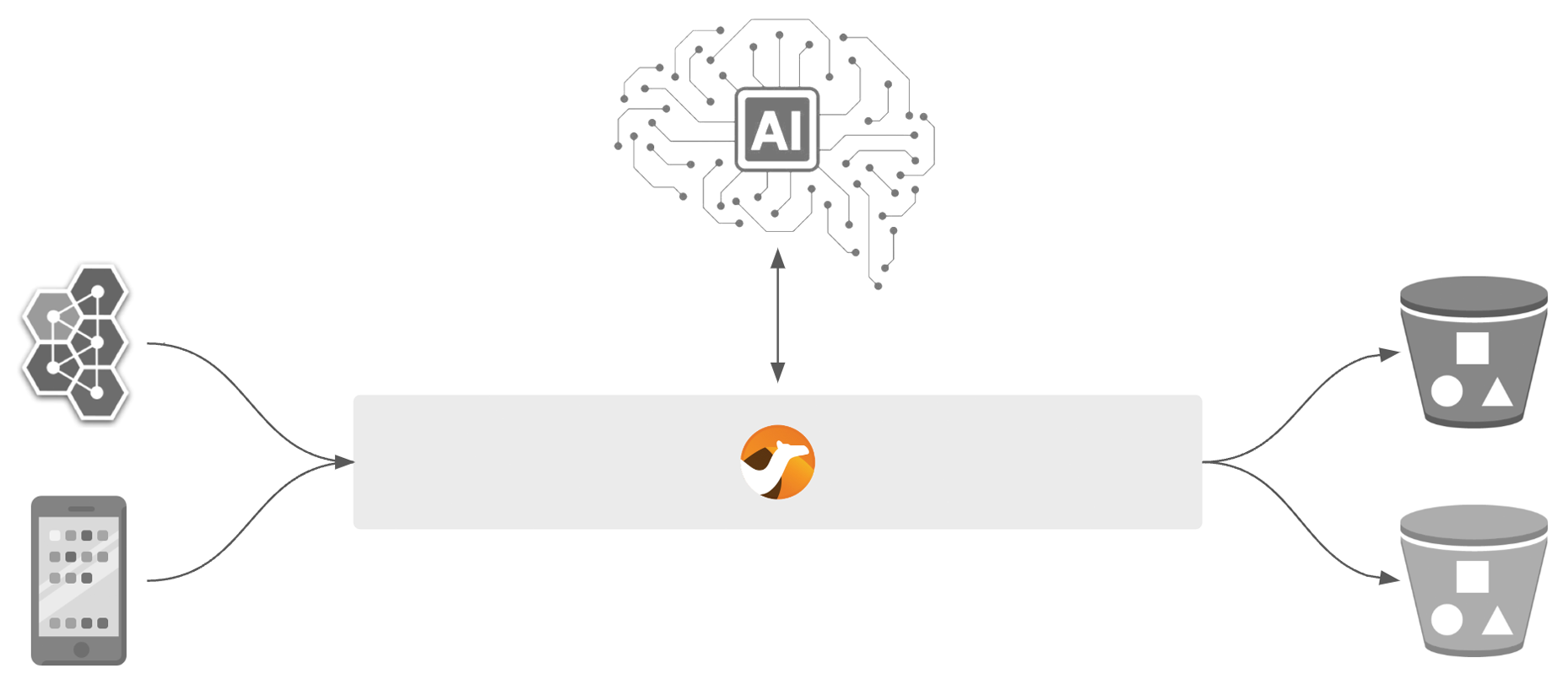

Data ingestion for AI/ML

Camel connectors ingest data from various sources—validating and transforming raw data into AI-ready information that can be routed to data lakes, databases, and S3 buckets.

API-driven processing

Apache Camel is designed with core capabilities to support both API-first and code-first approaches in a range of different protocols and specifications.

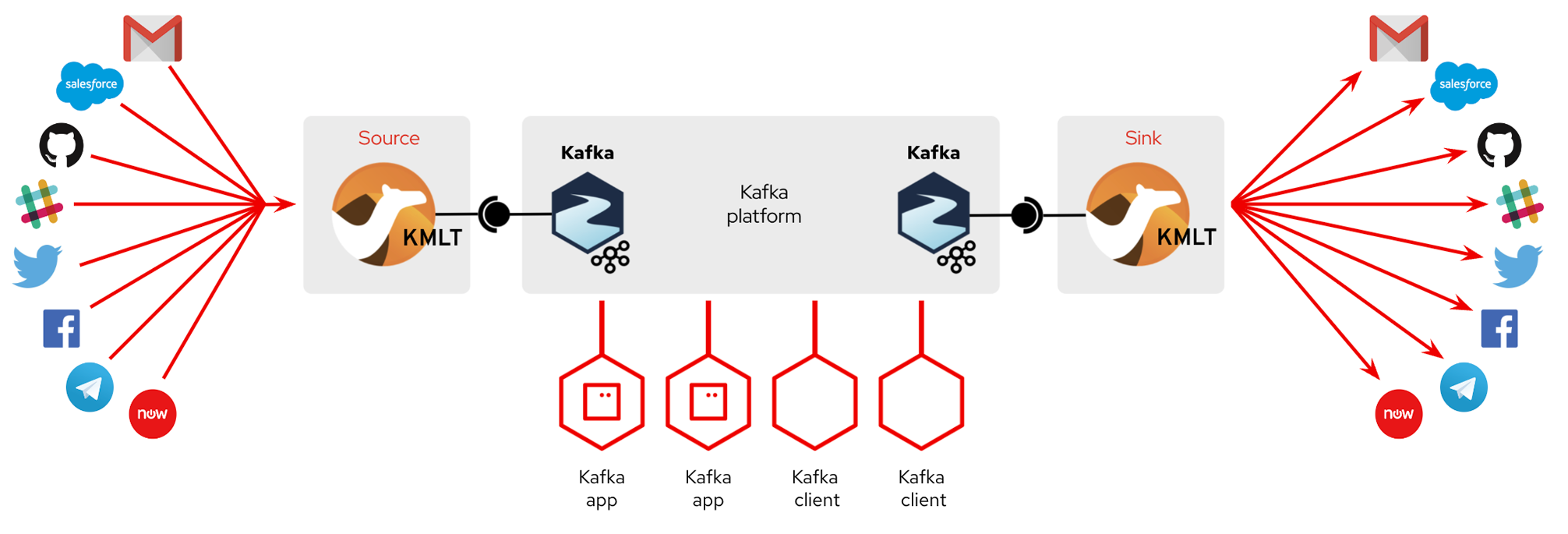

Event-driven processing

The default consumer model in Apache Camel is event based, so the Camel container can manage pooling, threading, and concurrency in a declarative manner.

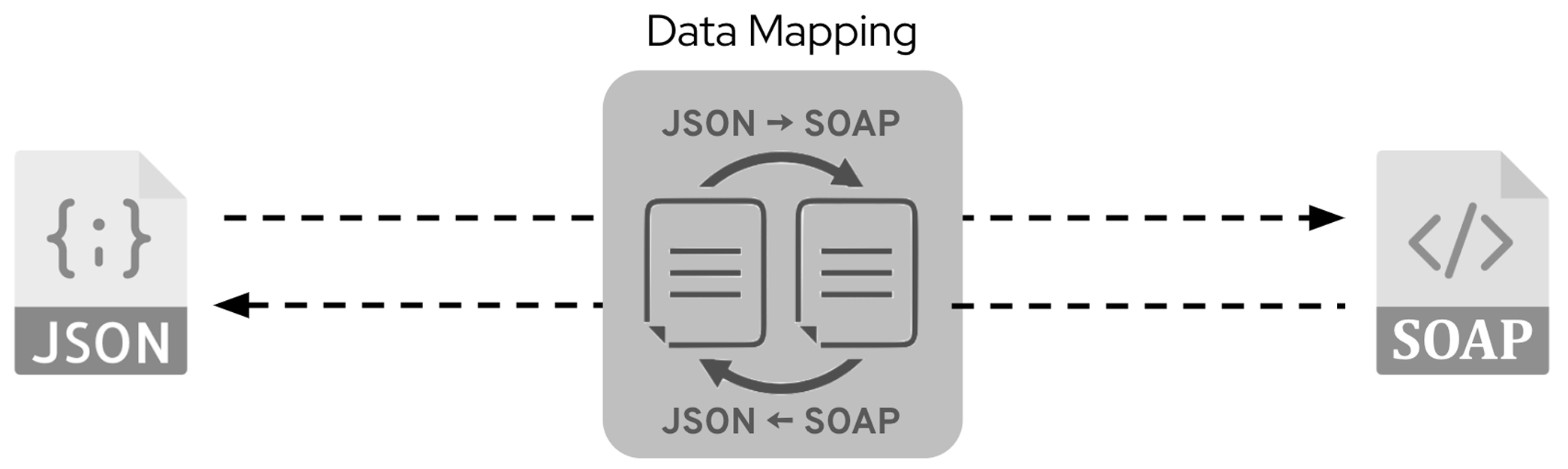

Data mapping and transformation

Convert APIs, data formats, and protocols using data mappers, transformation stylesheets, templates, automatic data converters, type converters, user-defined endpoints, expression languages, custom processors, and more.

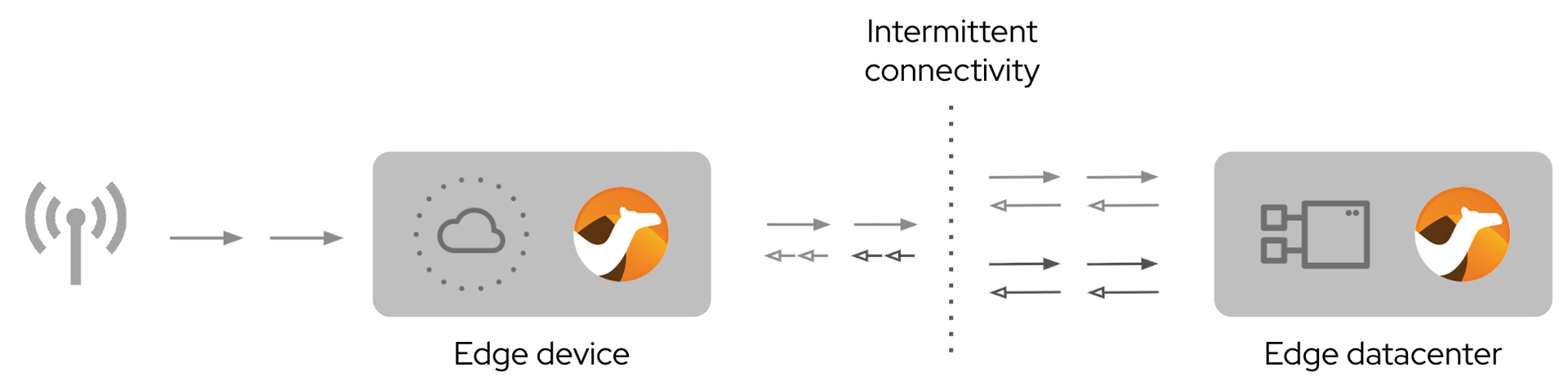

IoT edge framework

Move sensor data and events from the operational/site edge towards core platforms. Camel’s asynchronous components and enterprise integration patterns (EIPs) provide great support for the intermittent connectivity characteristic of remote devices with poor signal coverage.

Large upload/download transfers

Camel’s streaming ability handles raw byte streams, images, video, documents, data structures, and more with high performance and very low memory usage, sending data over HTTP to multiple endpoints or cloud services.

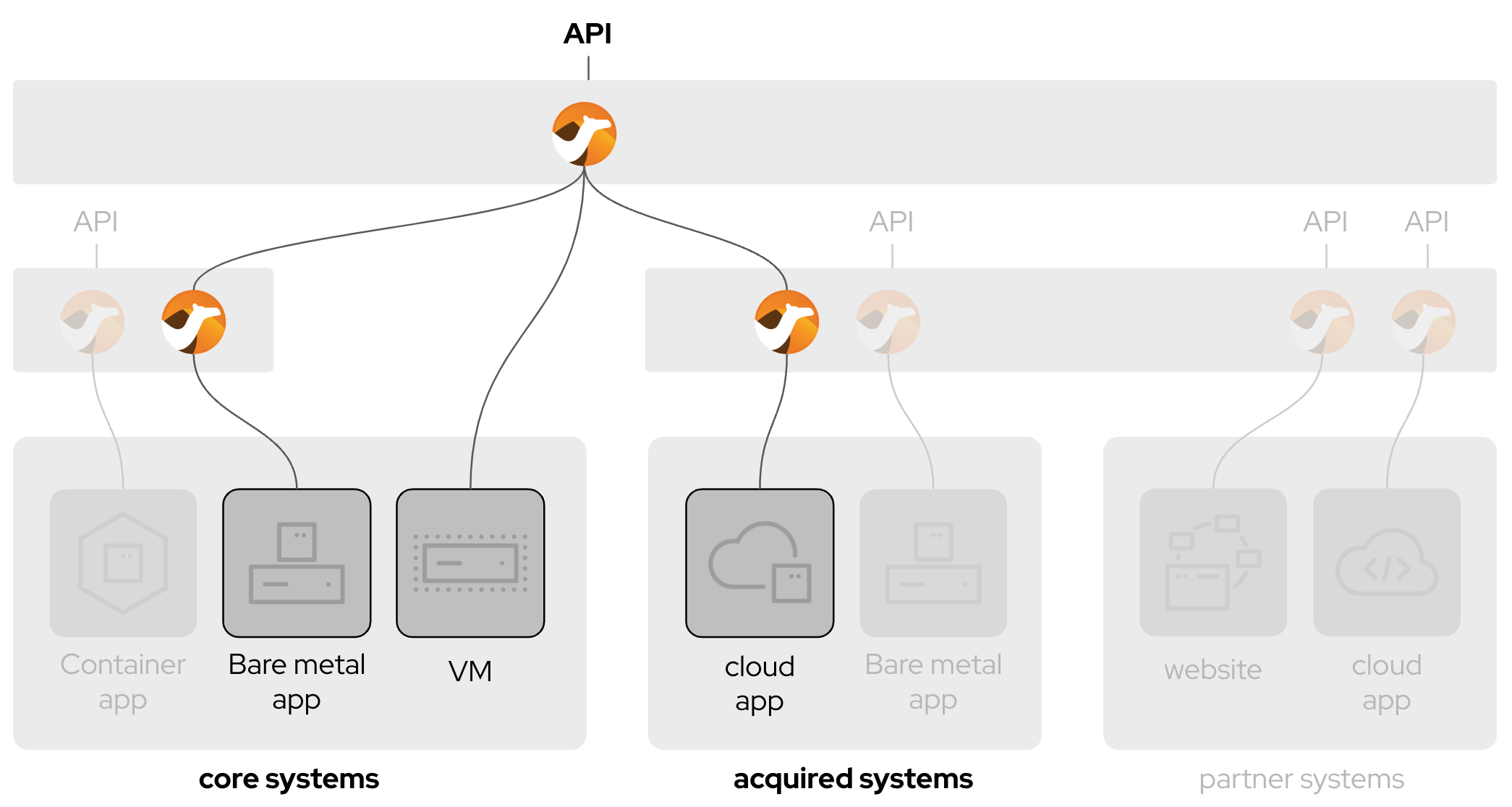

Service composition

Use pattern-based integration to combine many services into a single one. Define business functions by gathering data from multiple endpoints to resolve the most complex integrations.
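As a rough illustration of service composition, the plain-Java sketch below calls two backing services in parallel and merges their results into one response. It does not use Camel's API; the class name `CompositionSketch`, the two service functions, and the `" | "` separator are assumptions made for the example. In Camel, this kind of fan-out and merge is usually expressed with enterprise integration patterns such as multicast and aggregation.

```java
import java.util.concurrent.CompletableFuture;
import java.util.function.Function;

// Hypothetical sketch: expose two services as a single composed function.
final class CompositionSketch {

    // Returns one function that gathers data from both services for the
    // same request key and combines the two answers into a single result.
    static Function<String, String> compose(Function<String, String> customerService,
                                            Function<String, String> orderService) {
        return id -> {
            // Call both services concurrently.
            CompletableFuture<String> customer =
                    CompletableFuture.supplyAsync(() -> customerService.apply(id));
            CompletableFuture<String> orders =
                    CompletableFuture.supplyAsync(() -> orderService.apply(id));

            // Merge the two results once both calls have completed.
            return customer
                    .thenCombine(orders, (c, o) -> c + " | " + o)
                    .join();
        };
    }
}
```

A caller sees a single function: `CompositionSketch.compose(lookupCustomer, lookupOrders).apply("42")` returns one combined string, regardless of how many services were involved behind it.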

Latest articles about the Red Hat build of Apache Camel

Dive into the Q1’26 edition of Camel integration quarterly digest, covering...

Learn how to create a baseline RAG system using Apache Camel, PostgreSQL, and...

This post introduces two interactive, sports-themed hands-on labs designed to...

See how to use Apache Camel to turn LLMs into reliable text-processing...

Ready to use the Red Hat build of Apache Camel in production?

Take your deployment to the next level. Using the Red Hat build of Apache Camel in production requires adopting Red Hat Application Foundations—a unified suite of tools for API management, data streaming, and enterprise integration across cloud-native applications.