Introduction

Hi, I'm Steve, a member of the Inception team at Red Hat. The Inception team was pulled together from different parts of IT to foster a DevOps culture at Red Hat. Though we've only been a team for a little over a month, we've been working on some early projects to make everyone's lives easier.

We spent quite a bit of time in our early meetings identifying pain points in the current processes. We talked with a few developers and ops folks, noted historical issues, and did some general brainstorming. We heard a lot about configuration management, lack of information, redundant and time-consuming tasks, and many of the other complaints one expects when asking tech people what pains them.

Of course, Tim (another Incepticon) and I were itching to write some code, so once the team identified an issue common to both developers and ops engineers, we jumped in head first.

The rest of this post describes our journey: from initially trying to implement a simple solution to improve the day-to-day lives of developers, through the technical limitations we ran into along the way, to the empathy for our developers we gained from that experience. We'll wrap up with a note on how Red Hat Software Collections (announced as GA in September) would have simplified our development process.

What?

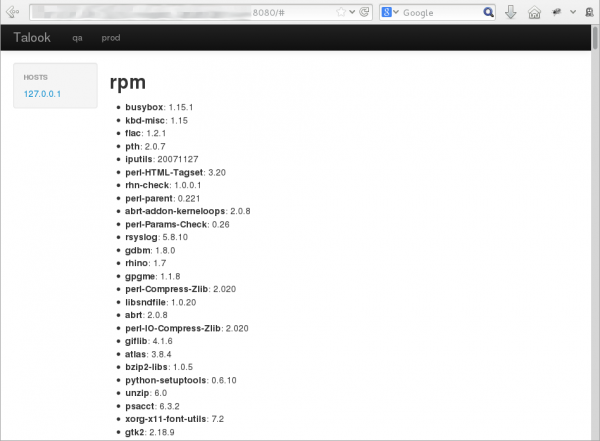

We decided to take on one of the simple pain points we heard from both devs and ops: what's installed on system $FOO in environment $BAR? After spending a bit of time defining the user story, the team rallied around the technical tasks to define what the implementation would look like. For the sake of time, we kept the tasks high level rather than going too deep. In a nutshell, we decided that a simple REST interface showing packages, versions, and facts would be an easy and quick fix for both the developers and ops folks. Right after the definition meeting, Tim created a GitHub repo, we both started writing code, and within a few hours we had a minimum viable product.
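To make "packages, versions, and facts" a little more concrete, here is a minimal sketch of how the data behind such an endpoint could be gathered. It assumes an RPM-based host with the `rpm` CLI on the PATH and uses its `--queryformat` option; the function names (`parse_packages`, `installed_packages`, `build_report`) and the shape of the `facts` dictionary are hypothetical, not taken from the jsonstats code.

```python
import json
import subprocess

# Assumption: one "name version-release" pair per line of rpm output.
RPM_QUERY = ["rpm", "-qa", "--queryformat", "%{NAME} %{VERSION}-%{RELEASE}\n"]


def parse_packages(rpm_output):
    """Turn 'name version-release' lines into a {name: [versions]} dict.

    A list is used because some packages (e.g. kernel) can have
    several versions installed side by side.
    """
    packages = {}
    for line in rpm_output.splitlines():
        line = line.strip()
        if not line:
            continue  # skip blank lines
        name, _, version = line.partition(" ")
        packages.setdefault(name, []).append(version)
    return packages


def installed_packages():
    """Query the local RPM database (requires the rpm CLI)."""
    output = subprocess.check_output(RPM_QUERY, universal_newlines=True)
    return parse_packages(output)


def build_report(packages, facts):
    """Serialize packages and caller-supplied host facts into one JSON document."""
    return json.dumps({"packages": packages, "facts": facts}, sort_keys=True)
```

On a real host, `build_report(installed_packages(), {"hostname": "web01"})` would produce a single JSON document. Keeping the parser separate from the `subprocess` call makes it easy to unit test with canned `rpm` output.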

Based on the minimum viable product, a few members of the team pitched the idea again to a couple of other developers, and the demand for the product was reinforced. We also picked up the idea that a web UI would be helpful for those who are not techies. It's interesting how excited people can get about such a simple tool when it scratches their itch!

Leading by Example

Since one of our longer-term goals is to automate the whole process from code check-in to production, we came up with some basic requirements for developing software. Nothing earth-shattering: we require well-known best practices such as unit testing, packaging, continuous integration, code review, documentation, etc. At this point we had not yet decided how much coverage unit tests must have, what code review looks like, and so on, but that didn't stop us from following these best practices to the best of our ability. Here are some examples of what we followed:

- We wrote unit tests and ran them via Travis-CI (jsonstats, Talook)

- Code conventions were enforced as part of CI

- When tests failed, the code (or the test, if it was incorrect) was fixed

- Tests do not get removed unless the code they test is also removed!

- The code and testing instance were submitted for security testing

- Code had to be installable via RPM (with service scripts, etc.) and runnable from source

- Without documentation our tool would not be considered done

- A video demo was created to show the tools in action

- Tim and I read each other's code and provided patches (example)
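As an illustration of the kind of unit test that can run on every push in CI, the sketch below exercises a WSGI application in-process, with no server or network involved. `wsgiref.util.setup_testing_defaults` is a real standard-library helper that fills in the required WSGI environ keys; the `health_app` application and the test names are hypothetical examples, not code from jsonstats or Talook.

```python
import json
import unittest
from wsgiref.util import setup_testing_defaults


def health_app(environ, start_response):
    """Hypothetical endpoint that reports service status as JSON."""
    body = json.dumps({"status": "ok", "path": environ["PATH_INFO"]}).encode("utf-8")
    start_response("200 OK", [("Content-Type", "application/json"),
                              ("Content-Length", str(len(body)))])
    return [body]


class HealthCheckTest(unittest.TestCase):
    def call(self, path):
        """Invoke the app directly and capture what it passes to start_response."""
        environ = {"PATH_INFO": path}
        setup_testing_defaults(environ)  # adds wsgi.input, REQUEST_METHOD, etc.
        captured = {}

        def start_response(status, headers, exc_info=None):
            captured["status"] = status
            captured["headers"] = dict(headers)

        body = b"".join(health_app(environ, start_response))
        return captured, body

    def test_health_returns_json(self):
        captured, body = self.call("/health")
        self.assertEqual(captured["status"], "200 OK")
        self.assertEqual(captured["headers"]["Content-Type"], "application/json")
        self.assertEqual(json.loads(body.decode("utf-8")),
                         {"status": "ok", "path": "/health"})
```

Saved as, say, `test_health.py`, this runs with `python -m unittest test_health`, which is the kind of command a CI job would invoke.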

Iterative Pain

As I said before, Tim and I like to code, especially when it's in Python. We were able to start on an early coding project to help get information to developers in a quick, self-service way, but not everything was rainbows and ponies during development!

For jsonstats we started off with Tornado as the base web library. We both installed Tornado locally on our Fedora machines and started hacking away at code. After a very short time we had a minimum viable product, but Tim noticed that Tornado was not available on our servers. Worse, it wasn't available unless we added a third-party repository, which would be a pain to get added with the current deployment processes. Even if we did get the repository approved, we'd still be about two years behind the current stable version of Tornado. This means we'd be coding to features that, in some cases, have since been replaced with better packages in the framework (example: tornado.gen). After a quick discussion we decided to remove Tornado and just use the simple wsgiref library. It wouldn't be as scalable and would probably take more code, but it would still be pretty simple and easy to deploy.
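The wsgiref swap looks roughly like the sketch below: a plain WSGI callable that dispatches on `PATH_INFO`, served by `wsgiref.simple_server.make_server`, which has shipped with the standard library since Python 2.5. The routes, the canned data, and the port number are hypothetical placeholders, and the sketch targets Python 3 rather than the older runtimes discussed here.

```python
import json
from wsgiref.simple_server import make_server

# Hypothetical canned data; the real tool collected this from the host.
ROUTES = {
    "/packages": lambda: {"bash": ["4.2.45-5.el7"]},
    "/facts": lambda: {"hostname": "web01"},
}


def application(environ, start_response):
    """Dispatch on PATH_INFO and return JSON, or a JSON 404 for unknown paths."""
    handler = ROUTES.get(environ.get("PATH_INFO", "/"))
    if handler is None:
        status, payload = "404 Not Found", {"error": "not found"}
    else:
        status, payload = "200 OK", handler()
    body = json.dumps(payload).encode("utf-8")
    start_response(status, [("Content-Type", "application/json"),
                            ("Content-Length", str(len(body)))])
    return [body]


def serve(host="127.0.0.1", port=8008):
    """Block and serve requests using only the standard library's wsgiref."""
    httpd = make_server(host, port, application)
    try:
        httpd.serve_forever()
    finally:
        httpd.server_close()
```

Compared with Tornado this gives up asynchronous handling, which matches the trade-off described above: less scalable, but nothing to install beyond Python itself.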

While Tim continued to polish the JSON and plugin parts of the code, I replaced the Tornado code with a standalone wsgiref server, using the library available from Python 2.5 onward. It was at this point that Tim facepalmed and noted that the json module was not available in Python 2.5; it was added in 2.6. Instead of freaking out and complaining, we simply included the old try/except import for json, falling back to requiring simplejson. Happy times are here again! Well, for a short time anyway.
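The fallback import mentioned above is a common idiom. A minimal version, assuming `simplejson` is installed wherever the standard `json` module is missing, looks like this; the standard library's json module was derived from simplejson, so calls such as `dumps` and `loads` match. `encode_report` is a hypothetical helper added only to show that callers don't care which module was imported.

```python
try:
    import json  # in the standard library since Python 2.6
except ImportError:
    # Older runtimes (2.5 and earlier): fall back to the third-party
    # simplejson package, which the stdlib json module was derived from.
    import simplejson as json


def encode_report(data):
    """Hypothetical helper: serialize with whichever module was imported."""
    return json.dumps(data, sort_keys=True)
```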

As you probably guessed, it didn't hit us until after the port to wsgiref that we'd be deploying on Python 2.4 (which was released almost 10 years ago).

This meant there was no built-in WSGI container we could use in the base Python package. Since we wanted to keep dependencies as low as possible, I started writing a translation layer on top of Python's BaseHTTPServer module. I called it WSGILite, and both projects started using it for instances running on Python 2.4.
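WSGILite's code isn't reproduced here, but a minimal sketch of the same idea (running a WSGI application on top of the standard library's HTTP server classes) could look like the following. It is written for Python 3, where BaseHTTPServer became `http.server`; a real Python 2.4 version would also have to avoid `io.BytesIO` and `b""` literals, which arrived in Python 2.6. All names here (`run_app`, `make_handler`, `make_server`) are hypothetical, and the sketch only handles GET requests without a request body.

```python
import sys
from http.server import BaseHTTPRequestHandler, HTTPServer
from io import BytesIO


def run_app(app, method, path, server_address, protocol="HTTP/1.0"):
    """Call a WSGI app and collect (status, headers, body) without socket I/O."""
    path_info, _, query = path.partition("?")
    environ = {
        "REQUEST_METHOD": method,
        "SCRIPT_NAME": "",
        "PATH_INFO": path_info,
        "QUERY_STRING": query,
        "SERVER_NAME": server_address[0],
        "SERVER_PORT": str(server_address[1]),
        "SERVER_PROTOCOL": protocol,
        "wsgi.version": (1, 0),
        "wsgi.url_scheme": "http",
        "wsgi.input": BytesIO(b""),  # GET-only sketch: no request body
        "wsgi.errors": sys.stderr,
        "wsgi.multithread": False,
        "wsgi.multiprocess": False,
        "wsgi.run_once": False,
    }
    response = {}
    chunks = []

    def start_response(status, headers, exc_info=None):
        # Headers are only sent after the app returns, so a second call
        # (e.g. with exc_info) simply replaces the earlier values.
        response["status"] = status
        response["headers"] = headers
        return chunks.append  # legacy write() callable just buffers output

    result = app(environ, start_response)
    try:
        chunks.extend(result)
    finally:
        if hasattr(result, "close"):
            result.close()
    return response["status"], response["headers"], b"".join(chunks)


def make_handler(app):
    """Build a BaseHTTPRequestHandler subclass that serves GETs through app."""

    class WSGIAdapterHandler(BaseHTTPRequestHandler):
        def do_GET(self):
            status, headers, body = run_app(
                app, self.command, self.path,
                self.server.server_address, self.request_version)
            code, _, reason = status.partition(" ")
            self.send_response(int(code), reason)
            for name, value in headers:
                self.send_header(name, value)
            self.end_headers()
            self.wfile.write(body)

    return WSGIAdapterHandler


def make_server(host, port, app):
    """Same argument order as wsgiref.simple_server.make_server."""
    return HTTPServer((host, port), make_handler(app))
```

Separating `run_app` from the handler keeps the WSGI plumbing testable without opening a socket; `make_server("127.0.0.1", 8008, app).serve_forever()` would then serve the app much as wsgiref does.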

To briefly recap (tl;dr): we started by building on the Tornado framework, discovered it wasn't available without pulling in several external dependencies, fell back to wsgiref, had to detect whether the runtime was modern enough to provide json functionality, discovered that our older systems didn't have wsgiref available either, and finally resorted to writing our own pseudo-implementation of what's now considered a standard library.

While both Tim and I have been in release engineering/platform ops roles in the past, most of the development pain we've known about was by proxy. We heard the complaints and frustrations and even understood why they were being aired, but they were not things we had dealt with directly from a developer's point of view.

After going through this initial tool development, we both have more empathy for the pain our developers go through when dealing with code deployments. If we hadn't both been familiar with Python, it would have been a nightmare getting help from other developers. Ask yourself this question: who do you know that is actively using Python 2.4 as their development target? Heck, even Python 2.5 was just removed from Travis-CI! There are so many unknowns and hurdles just to write a small tool, and there is no business value in dealing with technical hurdles imposed by old stuff.

Conclusions

While stability is a very good thing, there has to be a risk versus reward analysis. No one wants to use ancient libraries and, generally speaking, the business has to wait longer for fewer features when developers can't use modern libraries and tools. In our initial tool development, what should have been a very small Python server with a single dependency became more complex with code to support the old runtime. Roughly 33% (47 out of 143 "lines") of the jsonstats runnable server code was due to backporting the application to run on Python 2.4.

Writing these tools while bound by our current systems gave us some extra street cred. It brought both Tim and me into the sphere of developer pain, making us want to help fix it all the more. This experience also brought Software Collections into our view. Pulling in a newer version of Python, with the libraries we expect, via Software Collections would have helped us a whole lot, and I'm sure it will help many fellow developers in the future.

And we are only scratching the surface. We still have so much to do to move people toward self-service, automation, and pure awesomeness. We'll get there, one iteration at a time.


Last updated: February 26, 2024