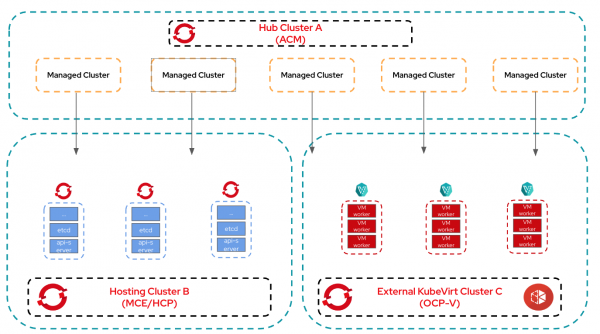

In Parts 1 and 2 of this series, we covered an all-in-one deployment and the common two-cluster hub/management split. In this final installment, we discuss a stronger separation suited to large enterprises, in which each of three clusters has a single, clear responsibility:

- Cluster A: Red Hat Advanced Cluster Management for Kubernetes (hub) for fleet management, policy, placement, and Day-2 operations.

- Cluster B: Multicluster engine for Kubernetes with hosted control planes (the control-plane hosting cluster), which runs the HostedCluster control plane pods. The multicluster engine for Kubernetes provides provisioning and lifecycle orchestration.

- Cluster C: Red Hat OpenShift Virtualization (worker virtual machines), a dedicated virtualization cluster that provides the VMs used as worker nodes by hosted cluster NodePools.

This topology provides maximum isolation, operational separation, and capacity scaling. Control planes and workers live on different clusters tuned for their roles, and the fleet/hub cluster remains lightweight and focused on governance.

Architecture

This pattern offers the following benefits:

- Strong separation of concerns: Red Hat Advanced Cluster Management remains a true management plane without hosting control planes or VMs. Cluster B focuses on control-plane hosting; Cluster C is optimized for VM density and storage.

- Operational safety: High load on, or an upgrade of, Cluster B or the Red Hat Advanced Cluster Management hub on Cluster A won't affect the end-user applications running in the data plane on Cluster C.

- Independent scaling: Add compute nodes (or tune storage) on Cluster C without touching Cluster B or A. Scale control-plane resources independently on Cluster B.

- Security zoning and compliance: You can place Cluster C in a different security or perimeter zone (for example, an edge data center) while keeping Red Hat Advanced Cluster Management in a central, tightly controlled zone.

- Multi-tenant / multi-region compute: Multiple worker hosting clusters (Cluster C variants) can be used across regions while control planes remain centralized or regionalized on Cluster B.

The architecture shown in Figure 1 gives each layer (governance, control planes, and worker compute) its own cluster, so each layer can be operated, upgraded, and scaled independently.

Prerequisites

You must meet the following prerequisites.

Cluster A (Red Hat Advanced Cluster Management Hub):

- OpenShift and Red Hat Advanced Cluster Management installed

- Network routes to control plane endpoints (Cluster B-hosted APIs)

Cluster B (multicluster engine for Kubernetes and hosted control plane):

- Multicluster engine for Kubernetes installed and configured to run hosted control planes

- Adequate node resources (masters and workers) to host many hosted control plane pods

- Ability to create and manage HostedCluster CRs

- Network access to Cluster C endpoints and ability to consume infra kubeconfigs

Cluster C (OpenShift Virtualization):

- OpenShift Virtualization (HCO) installed and healthy

- Storage classes and DataVolume support for VM disks

- Sufficient CPU/RAM/storage to host VMs at target density

- API reachable from Cluster B (for NodePool provisioning via infra kubeconfig)

Operators / Components to install

- Cluster A: Red Hat Advanced Cluster Management and any required add-ons (GitOps, observability)

- Cluster B: Multicluster engine for Kubernetes, hosted control plane (deployed by the multicluster engine for Kubernetes), MetalLB, and a storage operator for hosted cluster etcd volumes (LVM Storage in this demo)

- Cluster C: OpenShift Virtualization and CSI/storage operator

Common prerequisites

Create DNS entries for each cluster that point to IP addresses in the same subnet as that cluster's nodes:

- API: api.cluster.example.com

- Ingress: *.apps.cluster.example.com

Plan firewall rules, load balancers, and DNS entries across all three clusters.
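Before installing, you can confirm from a host on the same network that the API and wildcard ingress records resolve. The sketch below uses a hypothetical helper, check_dns, which is not part of any product tooling. It assumes getent(1) is available, as on most glibc-based Linux hosts, and it resolves a throwaway probe-<pid> host name to exercise the *.apps wildcard record.

```shell
#!/bin/sh
# Sketch (hypothetical helper): check that api.<domain> and a name under the
# *.apps.<domain> wildcard both resolve. Assumes getent(1) is installed.
check_dns() {
  local domain="$1" name
  for name in "api.${domain}" "probe-$$.apps.${domain}"; do
    if getent hosts "$name" >/dev/null; then
      echo "ok: $name"
    else
      echo "unresolved: $name" >&2
      return 1
    fi
  done
}

# Example: check_dns cluster.example.com
```

Run it once per cluster domain. A failure on the probe name usually means the wildcard record is missing.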

Tools/credentials:

- OpenShift installer

- oc CLI

- Pull secret

- SSH keys

- hcp CLI

- clusteradm CLI plug-in
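The following is a minimal sketch for confirming that these tools are on your PATH before you start, so that a missing binary is reported up front rather than midway through the walkthrough. The helper name require_tools is hypothetical. The binary names are also assumptions: openshift-install for the OpenShift installer, and clusteradm installed as a standalone binary. Adjust them to how you installed each tool.

```shell
#!/bin/sh
# Sketch (hypothetical helper): report every missing CLI at once and return
# non-zero if any of them is not found on PATH.
require_tools() {
  local tool missing=0
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "missing: $tool" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Example: require_tools openshift-install oc hcp clusteradm
```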

Section 1

For demonstration purposes, we tested this on VMs running on vSphere using nested virtualization.

Prerequisites for Cluster A (Red Hat Advanced Cluster Management):

Node sizing (compact 3-node cluster)

3 x Master/Worker Nodes - 8 vCPU / 32 GB Memory / 1 x 125 GB disk

Once all the prerequisites are met, install the OpenShift cluster using your preferred installation method.

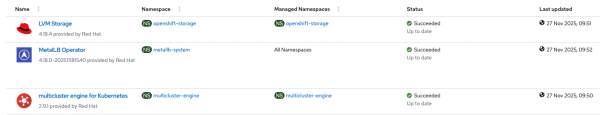

Install the Red Hat Advanced Cluster Management operator, and then check that all the required operators are installed on Cluster A (Figure 2).
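Installing the operator does not by itself deploy the hub. In Red Hat Advanced Cluster Management, that happens when you create a MultiClusterHub resource. The sketch below makes these assumptions:

- The operator runs in the open-cluster-management namespace.
- Readiness is judged by the MultiClusterHub status.phase reaching Running.
- The 20-minute timeout and the helper name create_multiclusterhub are arbitrary.

```shell
#!/bin/sh
# Sketch (hypothetical helper): create the MultiClusterHub CR, then wait until
# its status.phase reports Running. Assumes the operator namespace below.
create_multiclusterhub() {
  local ns="${1:-open-cluster-management}"
  cat <<EOF | oc apply -f -
apiVersion: operator.open-cluster-management.io/v1
kind: MultiClusterHub
metadata:
  name: multiclusterhub
  namespace: ${ns}
spec: {}
EOF
  # Hub installation usually takes several minutes; adjust the timeout as needed.
  oc wait multiclusterhub/multiclusterhub -n "$ns" \
    --for=jsonpath='{.status.phase}'=Running --timeout=20m
}

# Example (with KUBECONFIG pointing at Cluster A): create_multiclusterhub
```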

Section 2

For demonstration purposes, we tested this on VMs running on vSphere using nested virtualization.

Prerequisites for Cluster B (multicluster engine for Kubernetes and hosted control plane):

3 x Master Nodes - 8 vCPU / 32 GB Memory / 1 x 125 GB disk

3 x Worker Nodes - 16 vCPU / 48 GB Memory / 1 x 125 GB disk + 1 x 500 GB disk

Install the multicluster engine for Kubernetes cluster: once all the prerequisites are met, install the OpenShift cluster using your preferred installation method.

To keep things simple, we use an LVM Storage class for the etcd persistent volumes (PVs) of the hosted clusters.

Install the LVM Storage (LVMS) operator. Once it is installed, create the following LVMCluster CR:

```shell
$ cat <<EOF | oc apply -f -
apiVersion: lvm.topolvm.io/v1alpha1
kind: LVMCluster
metadata:
  name: lvmcluster-sample
  namespace: openshift-storage
spec:
  storage:
    deviceClasses:
    - fstype: xfs
      thinPoolConfig:
        chunkSizeCalculationPolicy: Static
        metadataSizeCalculationPolicy: Host
        sizePercent: 90
        name: thin-pool-1
        overprovisionRatio: 10
      default: true
      name: vg1
EOF
```

Once the LVMCluster is available, you should see a new StorageClass named lvms-vg1. However, we need to create another StorageClass with volumeBindingMode: Immediate.

Note: This step is required only when you use the LVM StorageClass for OpenShift Virtualization and for testing purposes. In production, you will likely choose a different storage solution that supports RWX, and volumeBindingMode: WaitForFirstConsumer is recommended.
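Before you create the additional class, you can wait for the LVMCluster to report Ready and then inspect the binding mode of the generated lvms-vg1 class. That mode is expected to be WaitForFirstConsumer, which is why the Immediate class below is created. This sketch assumes three things:

- The LVMCluster name and namespace match the CR above.
- LVMS reports readiness in status.state.
- wait_for_lvms is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch (hypothetical helper): wait for the LVMCluster to become Ready, then
# print the volumeBindingMode of the StorageClass that LVMS generated.
wait_for_lvms() {
  oc wait lvmcluster/lvmcluster-sample -n openshift-storage \
    --for=jsonpath='{.status.state}'=Ready --timeout=10m &&
  oc get storageclass lvms-vg1 -o jsonpath='{.volumeBindingMode}{"\n"}'
}

# Example (with KUBECONFIG pointing at Cluster B): wait_for_lvms
```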

```shell
$ cat <<EOF | oc apply -f -
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: lvm-immediate
  annotations:
    description: Provides RWO and RWOP Filesystem & Block volumes
    storageclass.kubernetes.io/is-default-class: "true"
  labels:
    owned-by.topolvm.io/group: lvm.topolvm.io
    owned-by.topolvm.io/kind: LVMCluster
    owned-by.topolvm.io/name: lvmcluster-sample
    owned-by.topolvm.io/namespace: openshift-storage
    owned-by.topolvm.io/version: v1alpha1
provisioner: topolvm.io
parameters:
  csi.storage.k8s.io/fstype: xfs
  topolvm.io/device-class: vg1
reclaimPolicy: Delete
allowVolumeExpansion: true
volumeBindingMode: Immediate
EOF
```

Make sure to remove the default SC annotation from lvms-vg1.

```shell
$ oc patch storageclass lvms-vg1 \
    -p '{"metadata": {"annotations": {"storageclass.kubernetes.io/is-default-class": null}}}'
```

Install the MetalLB operator.

Once the MetalLB operator is installed, create the MetalLB instance, and then configure the IPAddressPool and L2Advertisement as described in the official documentation:

```shell
$ cat <<EOF | oc apply -f -
apiVersion: metallb.io/v1beta1
kind: MetalLB
metadata:
  name: metallb
  namespace: metallb-system
EOF
```

```shell
$ cat <<EOF | oc apply -f -
apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: metallb
  namespace: metallb-system
spec:
  addresses:
  - 192.168.34.205-192.168.34.215
EOF
```
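In this walkthrough, the hosted cluster API server is published through a MetalLB LoadBalancer service and receives 192.168.34.205, as the outputs later in this article show. If each additional hosted cluster also takes one address, the pool size roughly bounds how many hosted clusters Cluster B can expose. The following small POSIX shell sketch counts the addresses in a start-end range. The helper names are hypothetical.

```shell
#!/bin/sh
# Sketch (hypothetical helpers): count the IPv4 addresses in an inclusive
# MetalLB "start-end" range, e.g. to size the pool for N hosted cluster APIs.
ip_to_int() {
  local oldifs="$IFS"
  IFS=.
  set -- $1          # split a.b.c.d into $1..$4
  IFS="$oldifs"
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

pool_size() {
  echo $(( $(ip_to_int "$2") - $(ip_to_int "$1") + 1 ))
}

# Example: pool_size 192.168.34.205 192.168.34.215
```

For the range above, pool_size 192.168.34.205 192.168.34.215 prints 11.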

```shell
$ cat <<EOF | oc apply -f -
apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: l2advertisement
  namespace: metallb-system
spec:
  ipAddressPools:
  - metallb
EOF
```

Install the multicluster engine for Kubernetes operator. Once it is installed successfully, make sure the cluster registers itself as the local-cluster managed cluster:

```shell
$ oc get managedclusters local-cluster
```

Check the installation of all the required operators (Figure 3).

Before importing the multicluster engine for Kubernetes cluster into the Red Hat Advanced Cluster Management hub, prepare it by completing all the steps in the official documentation. These steps let Red Hat Advanced Cluster Management manage the multicluster engine for Kubernetes cluster and automatically discover and import its hosted clusters through Red Hat Advanced Cluster Management policies.

Do not proceed to the next section until you have completed this one.

Section 3

For demonstration purposes, we tested this on VMs running on vSphere using nested virtualization.

Prerequisites for Cluster C (OpenShift Virtualization):

Node sizing (compact 3-node cluster)

3 x Master + Worker Nodes - 16 vCPU / 64 GB Memory / 1 x 125 GB disk + 1 x 500 GB disk

Install the OpenShift Virtualization cluster. Once all the prerequisites are met, install the cluster using your preferred installation method.

Make sure you have RWX-capable storage for live migration of VMs. For demonstration purposes, we use LVM Storage on Cluster C. LVM Storage provides only RWO and RWOP volumes, so live migration is not available in this demo setup.

Patch the default IngressController to allow wildcard routes. The hosted cluster's default ingress is exposed through a wildcard route on Cluster C (for example, *.apps.hc1.apps.ocpv.example.com), and the default route admission policy rejects wildcard routes.

```shell
$ oc patch ingresscontroller -n openshift-ingress-operator default \
    --type=json \
    -p '[{ "op": "add", "path": "/spec/routeAdmission", "value": {"wildcardPolicy": "WildcardsAllowed"}}]'
```

Install the OpenShift Virtualization operator and create the HyperConverged CR. Once all the operators are installed successfully, test OpenShift Virtualization by creating a simple VM. Once VM validation succeeds, proceed with the next steps to create a hosted cluster on Cluster B.
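To script the readiness check that precedes the test VM, you can wait on the Available condition of the HyperConverged resource. The sketch assumes the default resource name kubevirt-hyperconverged in the openshift-cnv namespace and a 15-minute timeout. wait_for_cnv is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch (hypothetical helper): block until OpenShift Virtualization reports
# Available on Cluster C. Assumes the default HyperConverged name/namespace.
wait_for_cnv() {
  local kubeconfig="$1"
  if [ -z "$kubeconfig" ]; then
    echo "usage: wait_for_cnv KUBECONFIG" >&2
    return 2
  fi
  oc --kubeconfig "$kubeconfig" wait hyperconverged/kubevirt-hyperconverged \
    -n openshift-cnv --for=condition=Available --timeout=15m
}

# Example: wait_for_cnv kubeconfig-ocpv
```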

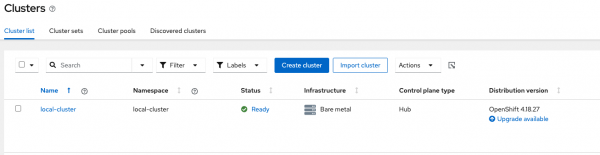

Make sure all the required operators are installed (Figure 4).

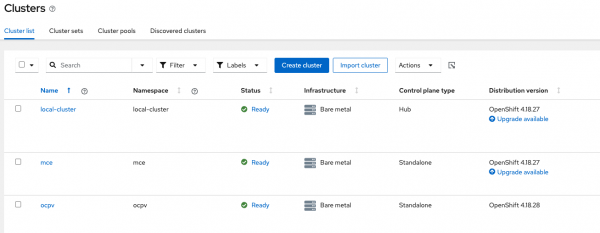

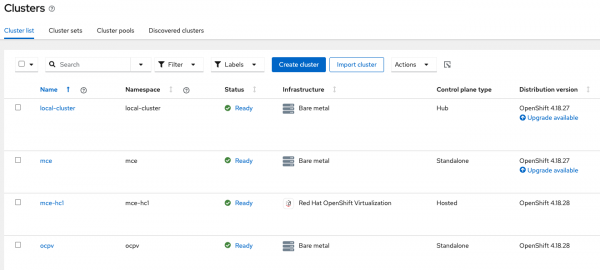

Import Cluster C into Red Hat Advanced Cluster Management to manage it centrally. Once it is imported, Red Hat Advanced Cluster Management shows the following (Figure 5).
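If you prefer the CLI to the console for this import, one approach is to create the ManagedCluster on the hub. You then apply the klusterlet manifests from the generated <name>-import secret on the cluster being imported. This is a sketch under the following assumptions:

- The kubeconfig file names match those used elsewhere in this article (kubeconfig-acm, kubeconfig-ocpv).
- The managed cluster is named ocpv.
- The import secret exposes crds.yaml and import.yaml keys.
- import_cluster is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch (hypothetical helper): import a cluster into the hub from the CLI.
# Assumes the hub creates a secret <name>-import in namespace <name> holding
# crds.yaml and import.yaml (klusterlet manifests for the managed cluster).
import_cluster() {
  local hub_kc="$1" managed_kc="$2" name="$3" key i=0

  # The namespace may already exist; ignore that error.
  oc --kubeconfig "$hub_kc" create namespace "$name" >/dev/null 2>&1 || true
  oc --kubeconfig "$hub_kc" label namespace "$name" \
    "cluster.open-cluster-management.io/managedCluster=$name" --overwrite
  cat <<EOF | oc --kubeconfig "$hub_kc" apply -f -
apiVersion: cluster.open-cluster-management.io/v1
kind: ManagedCluster
metadata:
  name: ${name}
  labels:
    cloud: auto-detect
    vendor: auto-detect
spec:
  hubAcceptsClient: true
EOF

  # Wait up to about 5 minutes for the import secret to appear on the hub.
  until oc --kubeconfig "$hub_kc" get secret "${name}-import" -n "$name" >/dev/null 2>&1; do
    i=$((i + 1))
    [ "$i" -ge 60 ] && { echo "import secret not found" >&2; return 1; }
    sleep 5
  done

  # Apply the klusterlet CRDs first, then the import manifests, on the managed cluster.
  for key in crds import; do
    oc --kubeconfig "$hub_kc" get secret "${name}-import" -n "$name" \
      -o jsonpath="{.data.${key}\\.yaml}" | base64 -d |
      oc --kubeconfig "$managed_kc" apply -f -
  done
}

# Example: import_cluster kubeconfig-acm kubeconfig-ocpv ocpv
```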

On the multicluster engine for Kubernetes cluster, only the local-cluster is listed at this point (Figure 6).

Create a hosted cluster on Cluster B

Before creating a hosted cluster on Cluster B, create a project on Cluster C where the NodePool VMs will be deployed:

```shell
$ export KUBECONFIG=kubeconfig-ocpv
$ oc new-project hc1
```

You cannot create a hosted cluster from the Red Hat Advanced Cluster Management hub cluster. Instead, connect to the multicluster engine for Kubernetes cluster and run the hcp create cluster command there to create the hosted cluster.

This single hcp create cluster command provisions the hosted cluster on the multicluster engine for Kubernetes cluster (Cluster B), with its NodePool VMs deployed on Cluster C.

```shell
$ export KUBECONFIG=kubeconfig-mce
$ hcp create cluster kubevirt \
    --name hc1 \
    --pull-secret pull-secret.txt \
    --node-pool-replicas 2 \
    --memory 8Gi \
    --cores 2 \
    --etcd-storage-class=lvm-immediate \
    --namespace clusters \
    --infra-namespace=hc1 \
    --infra-kubeconfig-file=kubeconfig-ocpv \
    --release-image quay.io/openshift-release-dev/ocp-release:4.18.28-multi
```

Notice the two additional flags. --infra-namespace refers to the project created on Cluster C, and --infra-kubeconfig-file must point to the kubeconfig of Cluster C. If these two flags are omitted, the hosted cluster, including its NodePool VMs, is deployed on the multicluster engine for Kubernetes cluster instead.
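Omitting those two flags quietly changes where the VMs land, so you may want a guard around the command. The hypothetical helper below refuses to call hcp unless two conditions hold: the infra kubeconfig is readable, and the target namespace exists on Cluster C. All other flags are copied from the command above.

```shell
#!/bin/sh
# Sketch (hypothetical helper): run "hcp create cluster kubevirt" only when the
# infrastructure cluster (Cluster C) is reachable and the infra namespace
# exists, so NodePool VMs cannot silently land on the hosting cluster.
create_hosted_cluster() {
  local name="$1" infra_ns="$2" infra_kc="$3"
  if [ -z "$name" ] || [ -z "$infra_ns" ] || [ ! -r "$infra_kc" ]; then
    echo "usage: create_hosted_cluster NAME INFRA_NAMESPACE INFRA_KUBECONFIG" >&2
    return 2
  fi
  if ! oc --kubeconfig "$infra_kc" get namespace "$infra_ns" >/dev/null 2>&1; then
    echo "namespace $infra_ns not found on the infrastructure cluster" >&2
    return 1
  fi
  hcp create cluster kubevirt \
    --name "$name" \
    --pull-secret pull-secret.txt \
    --node-pool-replicas 2 \
    --memory 8Gi \
    --cores 2 \
    --etcd-storage-class=lvm-immediate \
    --namespace clusters \
    --infra-namespace="$infra_ns" \
    --infra-kubeconfig-file="$infra_kc" \
    --release-image quay.io/openshift-release-dev/ocp-release:4.18.28-multi
}

# Example (with KUBECONFIG pointing at Cluster B):
# create_hosted_cluster hc1 hc1 kubeconfig-ocpv
```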

Wait 10 to 15 minutes for the hosted cluster to be created. While waiting, you can check its status in the console of the multicluster engine for Kubernetes (hosting) cluster. Once the hosted cluster is created successfully, it is automatically imported into the Red Hat Advanced Cluster Management hub (Cluster A).
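Instead of watching the console, you can block on the HostedCluster Available condition from Cluster B. This is the same condition reported in the AVAILABLE column of the oc get hostedcluster output. The following is a minimal sketch. The helper name and the 30-minute default timeout are assumptions.

```shell
#!/bin/sh
# Sketch (hypothetical helper): wait for a HostedCluster to report Available.
# Defaults match the hcp command above (name hc1, namespace clusters).
wait_for_hosted_cluster() {
  local name="${1:-hc1}" ns="${2:-clusters}" timeout="${3:-30m}"
  oc wait "hostedcluster/$name" -n "$ns" \
    --for=condition=Available --timeout="$timeout"
}

# Example:
# export KUBECONFIG=kubeconfig-mce
# wait_for_hosted_cluster hc1 clusters 30m
```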

We can check the status of the hosted cluster from Red Hat Advanced Cluster Management and the multicluster engine for Kubernetes, and the NodePool status from the OpenShift Virtualization cluster, as follows:

```shell
$ oc --kubeconfig=kubeconfig-acm get managedcluster
NAME            HUB ACCEPTED   MANAGED CLUSTER URLS                JOINED   AVAILABLE   AGE
local-cluster   true           https://api.acm.example.com:6443    True     True        39h
mce             true           https://api.mce.example.com:6443    True     True        20h
mce-hc1         true           https://192.168.34.205:6443         True     True        29m
ocpv            true           https://api.ocpv.example.com:6443   True     True        53m
```

Notice that the hosted cluster hc1 is prefixed with mce-, the name of the managed cluster that hosts it, because automatic import was configured to use that prefix.

```shell
$ oc --kubeconfig=kubeconfig-mce get managedcluster
NAME            HUB ACCEPTED   MANAGED CLUSTER URLS               JOINED   AVAILABLE   AGE
hc1             true           https://192.168.34.205:6443        True     True        29m
local-cluster   true           https://api.mce.example.com:6443   True     True        20h

$ oc --kubeconfig=kubeconfig-mce get hostedcluster -n clusters
NAME   VERSION   KUBECONFIG             PROGRESS    AVAILABLE   PROGRESSING   MESSAGE
hc1    4.18.28   hc1-admin-kubeconfig   Completed   True        False         The hosted control plane is available

$ oc --kubeconfig=kubeconfig-ocpv get vmi -n hc1
NAME              AGE     PHASE     IP             NODENAME       READY
hc1-bq8cm-fp4ng   2m35s   Running   10.129.0.138   ocpv-worker1   True
hc1-bq8cm-nmnjc   3m35s   Running   10.128.0.156   ocpv-worker2   True
```

We can also see this in Figure 7 from Red Hat Advanced Cluster Management (Cluster A).

Finally, generate a kubeconfig for the hosted cluster and run commands against it:

```shell
$ export KUBECONFIG=kubeconfig-mce; hcp create kubeconfig --name hc1 --namespace clusters > kubeconfig-hc1

$ oc --kubeconfig=kubeconfig-hc1 get nodes
NAME              STATUS   ROLES    AGE   VERSION
hc1-bq8cm-fp4ng   Ready    worker   34m   v1.31.13
hc1-bq8cm-nmnjc   Ready    worker   36m   v1.31.13

$ oc --kubeconfig=kubeconfig-hc1 whoami --show-console
https://console-openshift-console.apps.hc1.apps.ocpv.example.com
```

Notice that the console URL falls under the wildcard DNS domain of Cluster C.

```shell
$ oc --kubeconfig=kubeconfig-hc1 whoami --show-server
https://192.168.34.205:6443
```

Notice that the API address is an IP from the MetalLB range configured in Section 2.

Validation and troubleshooting

To validate and troubleshoot the deployment, check the control plane pods on Cluster B. They run in the hosted control plane namespace, which by default is named <HostedCluster namespace>-<name> (clusters-hc1 in this example). Verify the NodePool VM and VMI objects on Cluster C. Use the hosted cluster kubeconfig to run oc get nodes and confirm that the worker nodes are in the Ready state. Finally, check the ManagedCluster status on Cluster A in the Red Hat Advanced Cluster Management console.
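The four checks above can be combined into one pass. This sketch makes the following assumptions:

- The kubeconfig file names match those used in this article.
- The hosted control plane uses the default namespace clusters-hc1.
- The managed cluster on the hub is named mce-hc1.

The sketch waits up to five minutes for the hosted cluster nodes to become Ready and returns non-zero if any check fails. validate_topology is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch (hypothetical helper): run the Cluster B, Cluster C, hosted cluster,
# and Cluster A checks in sequence; report all of them, fail if any fails.
validate_topology() {
  local rc=0 status

  echo "== Cluster B: hosted control plane pods in clusters-hc1"
  oc --kubeconfig kubeconfig-mce get pods -n clusters-hc1 || rc=1

  echo "== Cluster C: NodePool VMs and VMIs in hc1"
  oc --kubeconfig kubeconfig-ocpv get vm,vmi -n hc1 || rc=1

  echo "== Hosted cluster: worker nodes Ready"
  oc --kubeconfig kubeconfig-hc1 wait node --all \
    --for=condition=Ready --timeout=5m || rc=1

  echo "== Cluster A: ManagedCluster mce-hc1"
  status=$(oc --kubeconfig kubeconfig-acm get managedcluster mce-hc1 \
    -o jsonpath='{.status.conditions[?(@.type=="ManagedClusterConditionAvailable")].status}')
  echo "available: ${status:-unknown}"
  [ "$status" = "True" ] || rc=1

  return "$rc"
}

# Example: validate_topology
```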

For most organizations, the architecture in Part 2 provides sufficient isolation and production capabilities. This three-cluster model is for specific advanced requirements where:

- Your organization needs strong separation between fleet management, control-plane hosting, and high-density VM compute.

- You operate across multiple security domains, and worker VMs must be placed in different network/physical zones.

- You need to scale nodepool density aggressively without impacting control-plane performance.

- You want to support many heterogeneous compute clusters (multiple Cluster C instances) served by a single control-plane hosting Cluster B.

Wrap up

This three-cluster model is the most enterprise-grade topology we cover in this series. Red Hat Advanced Cluster Management remains the lightweight governance hub, the multicluster engine for Kubernetes cluster hosts the control planes at scale, and OpenShift Virtualization clusters deliver dense, optimized VM compute for NodePools. It is the best fit when you need maximum isolation, flexibility, and independent scaling. It also means managing three production clusters instead of two, with the associated operational overhead for networking, observability, and credential management. The trade-off is worthwhile when your organization's security, compliance, or geographic distribution requirements mandate this level of separation, but it is not for everyone.

This concludes our article series. You now have technical recipes for lab proof-of-concept deployments through to production-grade, highly distributed hosting models.