You made an agent that works on your laptop. It summarizes documents, calls APIs, and chains tools together to deliver the results you intended. Then someone asks, "Can we run this in production?" and the next three weeks disappear into auth configuration, observability wiring, and a crash course in Kubernetes networking.

Managing everything around the agent is often the hardest part, even though those concerns are largely the same for every agent.

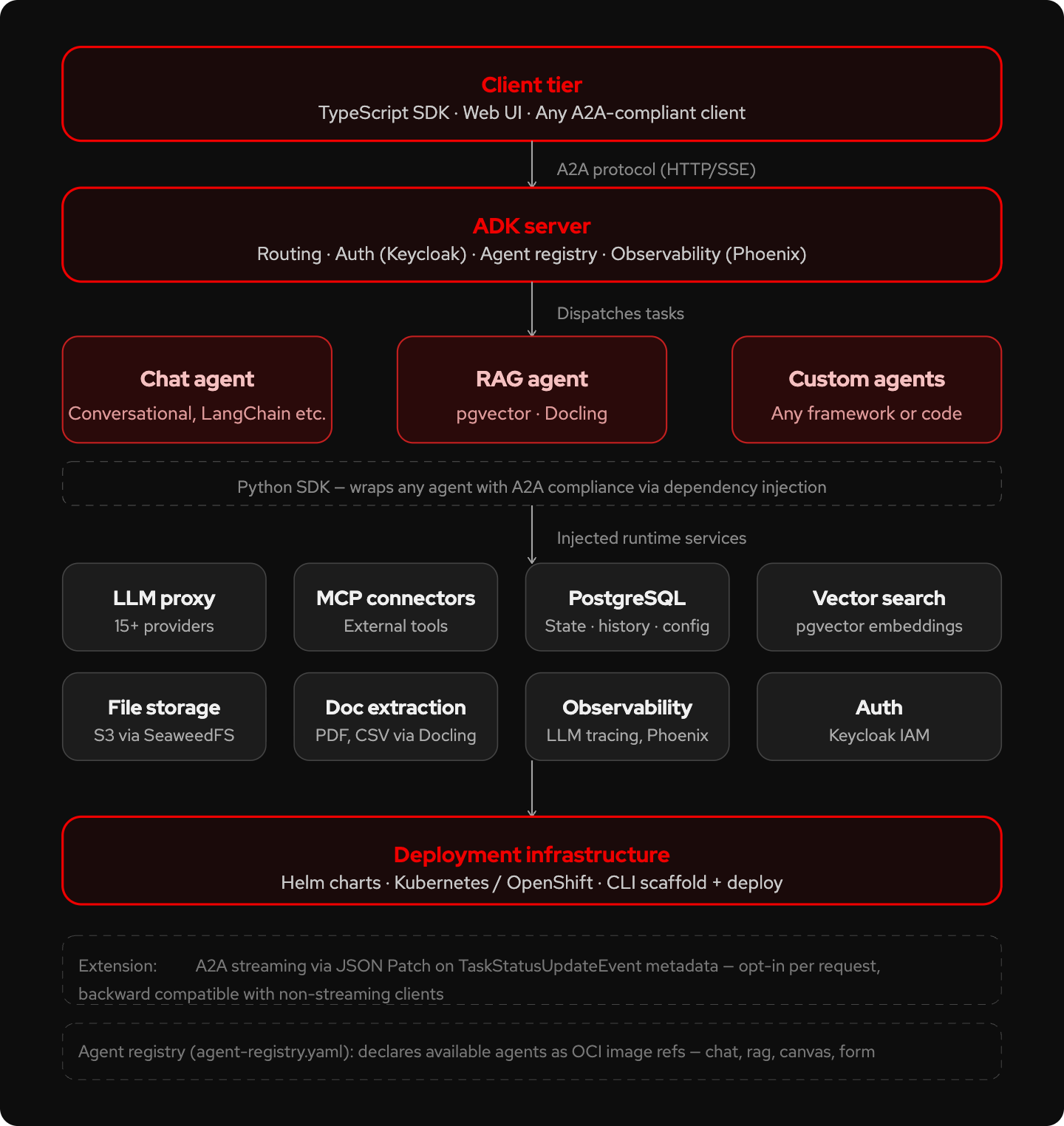

Kagenti ADK is an open source toolkit that takes the agent you built, regardless of the framework you used, and handles those surrounding concerns for you. It aligns with the Agent2Agent (A2A) protocol, which is now hosted by the Linux Foundation.

Production agents have unique needs

A production AI agent functions as a network service. It receives requests, calls a large language model (LLM), invokes tools, and returns structured responses. Because agents can be unpredictable, they require operational support like workload identity and audit trails. You need just-in-time authorization and detailed logs to understand not just what happened during a failure, but why it happened.

Many agent frameworks help you write logic but provide little guidance on managing the operational layer. You still need to manage sessions, rotate certificates, exchange tokens, implement distributed tracing, and prevent credential sprawl.

What A2A compliance requires

The A2A protocol defines a standard communication layer for AI agents, which makes them discoverable and interoperable regardless of the framework you used to build them. Google originally developed the protocol and donated it to the Linux Foundation. More than 150 organizations now support it.

A2A compliance comes down to three requirements.

Agent card

Every A2A-compliant service publishes a JSON metadata document at /.well-known/agent-card.json that describes its identity, capabilities, and authentication requirements. This is how agents discover each other. The specification also calls for access control on the endpoint and, if you implement card signing, canonicalization of the card according to RFC 8785 (JSON Canonicalization Scheme) before it is signed. Kagenti automatically generates and hosts the agent card so you don't have to write JSON by hand.
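To illustrate, here is a minimal Python sketch of a card and of why canonicalization matters for signing: two dictionaries with the same content but different key order must serialize to identical bytes. The fields shown are a representative subset of the A2A AgentCard schema, and all values are hypothetical. The `canonicalize` helper only approximates RFC 8785 (sorted keys, no insignificant whitespace, UTF-8 output). It does not implement the RFC's number formatting or UTF-16 key ordering, so a dedicated JCS implementation is the safer choice for real signatures.

```python
import json

# Hypothetical card: a representative subset of A2A AgentCard fields.
agent_card = {
    "name": "website_summarizer",
    "description": "Summarizes the content of a web page.",
    "url": "https://agents.example.com/summarizer",
    "version": "1.0.0",
    "capabilities": {"streaming": True},
    "defaultInputModes": ["text/plain"],
    "defaultOutputModes": ["text/plain"],
    "skills": [
        {
            "id": "summarize",
            "name": "Summarize",
            "description": "Produce a short summary of a URL.",
            "tags": ["summarization"],
        }
    ],
}


def canonicalize(obj) -> bytes:
    """Approximate RFC 8785: sorted keys, no whitespace, UTF-8 output.

    Not a full JCS implementation: number formatting and UTF-16 key
    ordering are not handled, so use a dedicated library before signing.
    """
    return json.dumps(
        obj, sort_keys=True, separators=(",", ":"), ensure_ascii=False
    ).encode("utf-8")


# Key order in the source dict does not affect the canonical bytes.
reordered = dict(reversed(list(agent_card.items())))
assert canonicalize(reordered) == canonicalize(agent_card)
```

Without a canonical form, a verifier that re-serializes the card with different key order or spacing would compute different bytes and reject a valid signature.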

JSON-RPC 2.0 message handling

All A2A communication uses JSON-RPC 2.0. Your service receives task requests and returns structured responses in this format. One important detail: errors are reported inside the JSON-RPC response body rather than through the HTTP status code. Authentication and authorization failures are the exception and are signaled with HTTP status codes such as 401 and 403. The Kagenti SDK handles this protocol layer so your agent code doesn't need to.
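The following standard-library Python sketch shows the shape of that rule: a well-formed JSON-RPC 2.0 error still travels with HTTP 200, while a missing credential short-circuits to HTTP 401 before any JSON-RPC processing. The handler, the single-entry method table, and the bearer-token check are hypothetical simplifications, not the Kagenti SDK's internals. The error codes -32700, -32600, and -32601 are the standard JSON-RPC 2.0 codes, and `message/send` is used as an illustrative A2A method name.

```python
import json
from typing import Optional, Tuple

# Standard JSON-RPC 2.0 error codes.
PARSE_ERROR = -32700
INVALID_REQUEST = -32600
METHOD_NOT_FOUND = -32601


def handle_message_send(params: dict) -> dict:
    """Hypothetical handler: echo the request's text parts back."""
    parts = params.get("message", {}).get("parts", [])
    text = " ".join(p.get("text", "") for p in parts if p.get("kind") == "text")
    # Simplified A2A-style agent message (real messages carry more fields).
    return {"kind": "message", "role": "agent",
            "parts": [{"kind": "text", "text": f"echo: {text}"}]}


METHODS = {"message/send": handle_message_send}


def rpc_error(req_id, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": req_id,
            "error": {"code": code, "message": message}}


def handle_http(body: str, authorization: Optional[str]) -> Tuple[int, Optional[dict]]:
    """Return (HTTP status, JSON-RPC response body or None)."""
    # Auth failures are the one case signaled by the HTTP status code.
    # A real server validates the token; this only checks that one is present.
    if not authorization or not authorization.startswith("Bearer "):
        return 401, None
    try:
        request = json.loads(body)
    except json.JSONDecodeError:
        return 200, rpc_error(None, PARSE_ERROR, "Parse error")
    if not isinstance(request, dict):
        return 200, rpc_error(None, INVALID_REQUEST, "Invalid Request")
    req_id = request.get("id")
    if request.get("jsonrpc") != "2.0" or not isinstance(request.get("method"), str):
        return 200, rpc_error(req_id, INVALID_REQUEST, "Invalid Request")
    handler = METHODS.get(request["method"])
    if handler is None:
        # Protocol-level errors travel in the body with HTTP 200.
        return 200, rpc_error(req_id, METHOD_NOT_FOUND, "Method not found")
    return 200, {"jsonrpc": "2.0", "id": req_id,
                 "result": handler(request.get("params", {}))}
```

A client therefore has to inspect the `error` member of every 200 response instead of relying on the status code alone.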

HTTPS/TLS in production

In production, all A2A traffic must use HTTPS, and the specification recommends TLS 1.3 or later with strong cipher suites. Kagenti uses Istio Ambient Mesh to handle mutual TLS (mTLS) between agents at the network layer. This approach avoids injecting sidecar proxies into your agent pods, which is useful when those pods already run LLM inference containers.
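Inside the mesh, Istio provides mTLS, so agent code typically doesn't manage certificates for in-mesh calls. For an agent that calls a remote A2A endpoint outside the mesh, a minimal Python sketch of a client-side policy that refuses anything older than TLS 1.3 might look like this. It uses only the standard `ssl` module. The endpoint in the usage comment is hypothetical.

```python
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Client context that verifies certificates and requires TLS 1.3+."""
    # create_default_context() enables certificate and hostname verification.
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    # TLS 1.3 cipher suites are all AEAD, so no set_ciphers() call is needed.
    return ctx


ctx = strict_tls_context()
# Usage (hypothetical endpoint):
# urllib.request.urlopen(
#     "https://agents.example.com/.well-known/agent-card.json", context=ctx)
```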

Features provided by Kagenti

Beyond A2A compliance, the ADK ships a set of runtime services that are supplied to your agent through dependency injection rather than hardcoded into your code.

- LLM proxy: Access more than 15 providers (including OpenAI, Anthropic, watsonx.ai, Amazon Bedrock, Ollama) through a single API. This allows you to switch models without rewriting your agent.

- PostgreSQL: Manage agent state, conversation history, and configuration.

- Vector search: Use pgvector for embeddings and similarity search within the built-in retrieval-augmented generation (RAG) agent.

- MCP connectors: Connect to external tools using the Model Context Protocol (MCP).

- Authentication: Keycloak supports OAuth 2.0 token exchange (RFC 8693). An agent can present its SPIFFE workload identity, a JWT-SVID (a JSON Web Token that attests to the workload's SPIFFE ID), and receive a short-lived access token in exchange, so it doesn't need static API keys.

- Observability: Use Phoenix for LLM tracing with an OpenTelemetry collector.

- File storage: Access S3-compatible object storage through SeaweedFS.

- Document extraction: Extract text from PDFs and CSV files using Docling.
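The token exchange in the Authentication item can be sketched as a single form-encoded POST. This minimal Python example builds the request with the standard library but does not send it. The form parameters come from RFC 8693, and the endpoint path follows Keycloak's usual `/realms/{realm}/protocol/openid-connect/token` layout. The host, realm, audience, and token value are hypothetical. Depending on your Keycloak version and client configuration, token exchange may need to be enabled explicitly, and the client may also have to authenticate itself.

```python
import urllib.parse
import urllib.request

# Identifiers defined by RFC 8693.
TOKEN_EXCHANGE = "urn:ietf:params:oauth:grant-type:token-exchange"
JWT_TYPE = "urn:ietf:params:oauth:token-type:jwt"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"


def build_token_exchange(keycloak_url: str, realm: str,
                         jwt_svid: str, audience: str) -> urllib.request.Request:
    """Build (but do not send) an RFC 8693 token-exchange request."""
    endpoint = f"{keycloak_url}/realms/{realm}/protocol/openid-connect/token"
    form = {
        "grant_type": TOKEN_EXCHANGE,
        "subject_token": jwt_svid,           # the workload's JWT-SVID
        "subject_token_type": JWT_TYPE,
        "requested_token_type": ACCESS_TOKEN_TYPE,
        "audience": audience,                # downstream service the token is for
    }
    return urllib.request.Request(
        endpoint,
        data=urllib.parse.urlencode(form).encode("ascii"),
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


# Hypothetical values throughout.
req = build_token_exchange("https://keycloak.example.com", "agents",
                           "eyJhbGciOi...", "mcp-tools")
# Sending it with urllib.request.urlopen(req) would return JSON that
# includes an "access_token" field on success.
```

Because the returned token is short-lived and scoped to one audience, a leaked token is far less damaging than a leaked static API key.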

Quick start

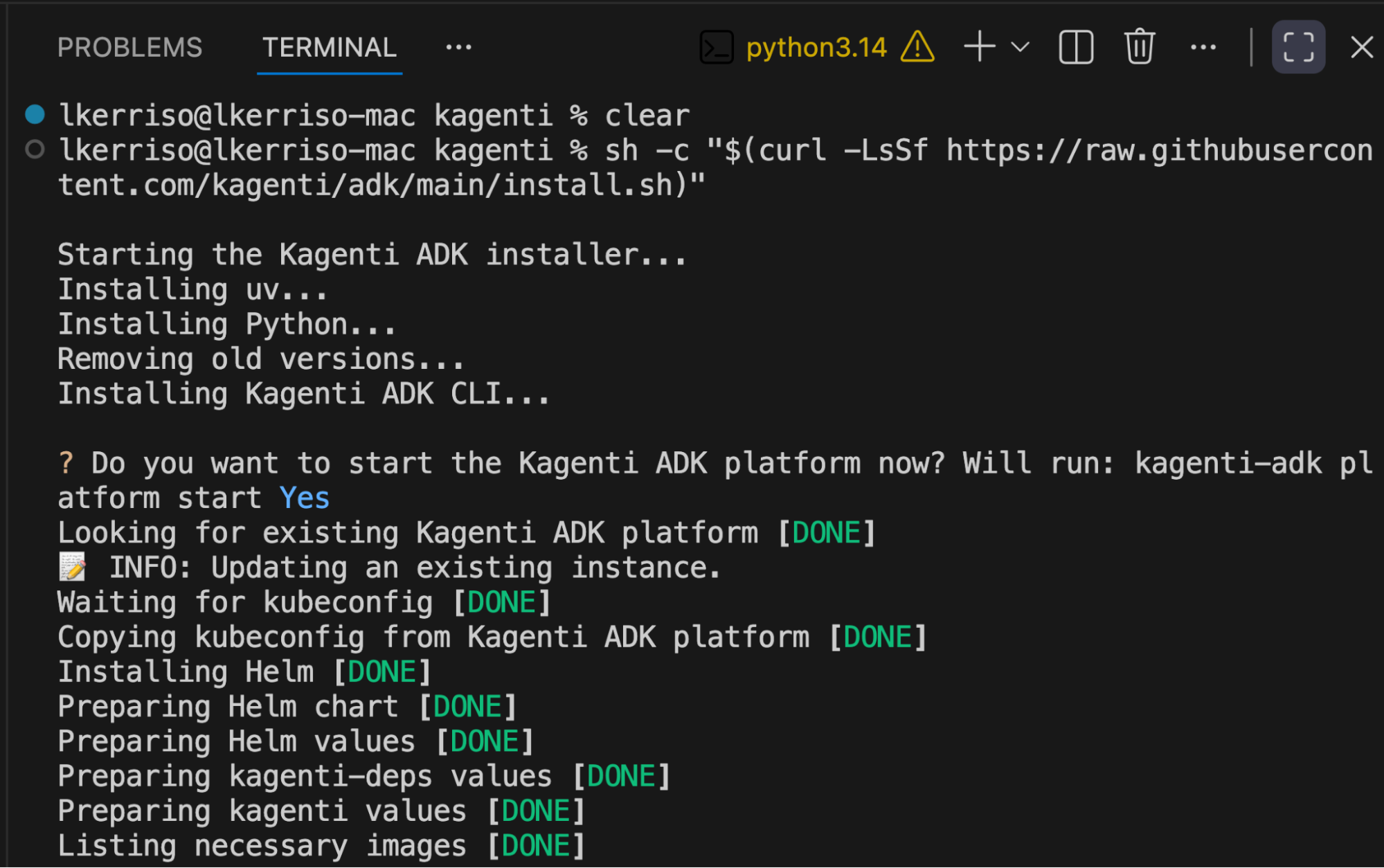

To get started with Kagenti, run the install command to download the Python packages and select your model.

```shell
# One-liner install: handles the CLI, server, and all dependencies
sh -c "$(curl -LsSf https://raw.githubusercontent.com/kagenti/adk/main/install.sh)"
```

After installation, use the Kagenti ADK CLI as your primary interface. You can use the CLI to scaffold a new agent project, run agents locally, and deploy them to a cluster.

```shell
# Start the local platform
kagenti-adk platform start

# List available agents
kagenti-adk agent list

# Run a specific agent
kagenti-adk agent run website_summarizer "summarize kagenti.github.io"

# Open the built-in Web UI (open is macOS; use xdg-open on Linux)
open http://adk.localtest.me:8080
```

Try a pre-built agent

The ADK includes reference agents that you can deploy immediately to test the platform and understand integration patterns. The platform automatically indexes these agents using AgentCard metadata, which removes the need for a manual registry file. You can use this same pattern to register your own custom agents.
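Card-driven discovery can be sketched in a few lines of Python: derive each agent's well-known card URL from its base URL, fetch the card, and index agents by the skills they advertise. This is a hypothetical illustration of the idea, not the Kagenti platform's indexer. The `fetch_json` callable, the base URLs, the `adk_support` agent name, and the skill-based index are all assumptions. The stub fetcher keeps the example offline.

```python
from typing import Callable, Dict, List
from urllib.parse import urljoin

WELL_KNOWN_CARD = "/.well-known/agent-card.json"


def card_url(base_url: str) -> str:
    # Well-known URIs live at the root of the origin (RFC 8615),
    # so any path on the base URL is replaced.
    return urljoin(base_url, WELL_KNOWN_CARD)


def index_agents(base_urls: List[str],
                 fetch_json: Callable[[str], dict]) -> Dict[str, List[str]]:
    """Map each advertised skill id to the names of agents that offer it."""
    index: Dict[str, List[str]] = {}
    for base in base_urls:
        card = fetch_json(card_url(base))
        for skill in card.get("skills", []):
            index.setdefault(skill["id"], []).append(card["name"])
    return index


# Usage with a stub fetcher (hypothetical cards; a real fetcher would do an HTTPS GET).
cards = {
    "https://a.example.com/.well-known/agent-card.json":
        {"name": "website_summarizer", "skills": [{"id": "summarize"}]},
    "https://b.example.com/.well-known/agent-card.json":
        {"name": "adk_support",
         "skills": [{"id": "answer_docs"}, {"id": "summarize"}]},
}
skills = index_agents(["https://a.example.com", "https://b.example.com/v1/"],
                      cards.__getitem__)
```

The index is rebuilt from whatever the cards say, which is why no separately maintained registry file is needed.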

You can try the ADK support agent, a conversational agent that answers questions about Kagenti ADK by searching official documentation. It demonstrates several of the project's capabilities.

- LLM proxy: Model configuration is injected via `LLMServiceExtension`. You can switch providers by changing a platform setting rather than modifying agent code.

- Session memory: Conversation history is loaded and stored through `RunContext`, which is backed by PostgreSQL.

- A2A compliance: The `@server.agent()` decorator handles the agent card, JSON-RPC transport, and task lifecycle.

- Trajectory tracing: The agent emits `trajectory_metadata` events at each stage, giving the platform visibility into reasoning steps.

- Citations: Documentation references are returned as structured `Citation` objects so the UI can render source links.

- Dependency injection: Runtime services are injected as typed function parameters rather than hardcoded imports.
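The general technique behind the dependency injection item, supplying services based on a function's type annotations, fits in a few lines of plain Python. This is a generic sketch, not Kagenti ADK's implementation: `LLMService`, `SessionStore`, `Registry`, `call_with_injection`, and `my_agent` are hypothetical names.

```python
import inspect
from typing import Any, Callable, Dict, get_type_hints


class LLMService:          # hypothetical stand-in for an injected LLM client
    def __init__(self, model: str) -> None:
        self.model = model


class SessionStore:        # hypothetical stand-in for conversation storage
    def __init__(self) -> None:
        self.history: list = []


class Registry:
    """Holds one service instance per type and injects them by annotation."""

    def __init__(self) -> None:
        self._services: Dict[type, Any] = {}

    def provide(self, service: Any) -> None:
        self._services[type(service)] = service

    def call_with_injection(self, func: Callable, **explicit: Any) -> Any:
        hints = get_type_hints(func)
        kwargs = dict(explicit)
        # Fill every parameter whose annotated type has a registered service.
        for name in inspect.signature(func).parameters:
            if name not in kwargs and hints.get(name) in self._services:
                kwargs[name] = self._services[hints[name]]
        return func(**kwargs)


def my_agent(question: str, llm: LLMService, memory: SessionStore) -> str:
    # The agent only declares what it needs; it never constructs services.
    memory.history.append(question)
    return f"[{llm.model}] would answer: {question}"


registry = Registry()
registry.provide(LLMService(model="example-model"))
registry.provide(SessionStore())
reply = registry.call_with_injection(my_agent, question="What is A2A?")
```

Switching providers in this sketch means registering a different `LLMService` instance; `my_agent` itself does not change.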

The agent's core logic uses a two-stage retrieval pattern: an LLM selects relevant documentation pages, and then a second call generates an answer based on those pages. The platform handles all other requirements, including A2A protocol handling, authentication, observability, and session persistence.
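That two-stage pattern can be sketched in Python with the LLM abstracted as a plain callable from prompt to text. The prompts, the `docs` dictionary, and `fake_llm` are hypothetical. A real agent would call the model through the injected LLM service and read from the platform's own documentation source.

```python
from typing import Callable, Dict, List, Tuple

LLM = Callable[[str], str]  # hypothetical: prompt in, completion text out


def select_pages(llm: LLM, question: str, docs: Dict[str, str]) -> List[str]:
    """Stage 1: ask the model which page titles are relevant."""
    listing = "\n".join(f"- {title}" for title in docs)
    reply = llm(f"Question: {question}\n"
                f"List the relevant pages, one title per line:\n{listing}")
    titles = [line.strip().removeprefix("- ").strip() for line in reply.splitlines()]
    # Keep only titles that exist (drops hallucinated ones) and dedupe in order.
    return list(dict.fromkeys(t for t in titles if t in docs))


def answer_question(llm: LLM, question: str,
                    docs: Dict[str, str]) -> Tuple[str, List[str]]:
    """Stage 2: answer using only the selected pages; return answer and sources."""
    pages = select_pages(llm, question, docs)
    context = "\n\n".join(f"## {t}\n{docs[t]}" for t in pages)
    answer = llm(f"Answer using only these pages:\n{context}\n\nQuestion: {question}")
    return answer, pages


docs = {
    "Install": "Run the install script, then `kagenti-adk platform start`.",
    "Agent cards": "Cards are served at /.well-known/agent-card.json.",
}


def fake_llm(prompt: str) -> str:
    # Deterministic stand-in for a model, for demonstration only.
    if prompt.startswith("Question:"):
        return "- Install\n- Nonexistent page"
    return "Run the install script."


answer, sources = answer_question(fake_llm, "How do I install?", docs)
```

Returning the selected titles alongside the answer is what makes structured citations possible: the UI can link each source that actually informed the reply. The sketch requires Python 3.9 or later for `str.removeprefix`.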

Try wrapping your own agent

Kagenti ADK is available on GitHub under the Apache 2.0 license.

The project is under active development. The team is moving fast, and the Discord community can help if you run into any issues.

Ready to try it? Spin up Red Hat OpenShift AI in the Developer Sandbox and deploy your first A2A-compliant agent without needing your own cluster. Kagenti is built to deploy on Kubernetes and OpenShift.

For the A2A protocol spec and broader ecosystem context, visit a2a-protocol.org.