Remote attestation has a fundamental tension at its core. The whole point is to verify that a system is trustworthy, but in the traditional model every machine being verified runs a network server that accepts inbound connections and exposes ports to the world. You're asking an untrusted machine to act as a service endpoint before you've even verified it.

Keylime's new push model eliminates this contradiction. Instead of the verifier polling agents, agents drive the attestation process themselves by initiating connections, submitting evidence, and never exposing a single port. This feature is supported in Red Hat Enterprise Linux (RHEL) 10.2 and is available upstream in the Rust agent and Python verifier.

This article is for platform engineers, security architects, and anyone running infrastructure where the adage "trust but verify" isn't good enough. You don't need prior Keylime experience to follow along. We explain the concepts as we go.

The problem with pull

In Keylime's traditional pull model, the verifier is the active party. It maintains a list of enrolled agents, periodically connects to each one over HTTPS, requests a TPM quote and measurement logs, then validates the response. The agent runs an HTTP server and waits.

This works, but it creates real problems:

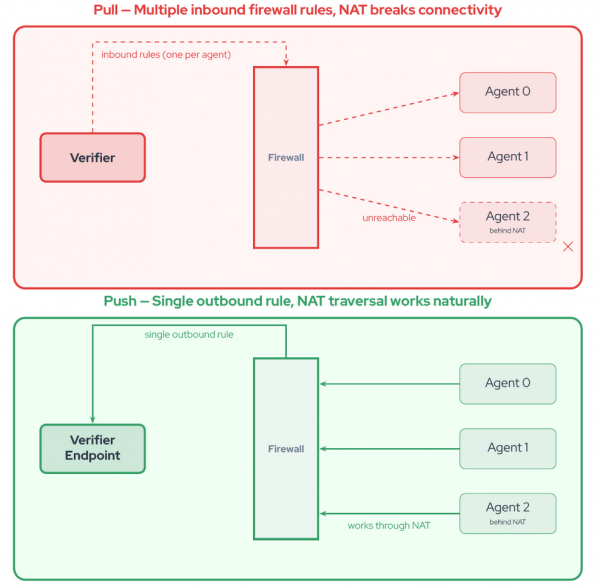

- Attack surface: Every agent exposes an HTTP server to the network. That's a listening port on every machine you're trying to protect — exactly the kind of surface area security teams spend their careers minimizing.

- Network complexity: The verifier needs direct connectivity to every agent. In enterprise environments with NATs, firewalls, and segmented networks, this means punching holes in exactly the places you don't want them.

- Scaling: The verifier must maintain O(n) active connections, one for every enrolled agent, with polling loops consuming resources whether or not there's anything new to verify.

- Rigidity: Attestation frequency is controlled by the verifier's polling interval, not by the workload's actual security needs.

The different approaches are illustrated in Figure 1.

Inverting the relationship

The push model flips the architecture, as illustrated in Figure 2. Agents become HTTP clients, connecting outbound to the verifier to submit their attestation evidence, and then closing the connection. The verifier is a service endpoint that processes evidence as it arrives.

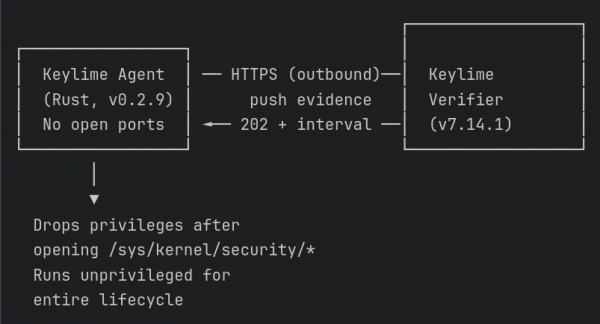

No agent ports. No inbound firewall rules. No NAT traversal. Agents only need outbound HTTPS to a single verifier endpoint, as shown in Figure 3.

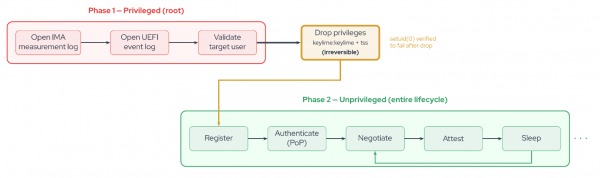

The Rust agent goes further. It opens privileged resources (IMA measurement logs at /sys/kernel/security/ima/ascii_runtime_measurements, UEFI event logs at /sys/kernel/security/tpm0/binary_bios_measurements) while still running as root, then irreversibly drops privileges to an unprivileged user. The privilege drop follows the CERT C guidelines for relinquishing privileges (POS36-C and POS37-C): After calling setuid(), the agent verifies it cannot regain root by confirming that setuid(0) fails. For the rest of its lifetime, the agent operates unprivileged, accessing those security-sensitive files through the file descriptors it opened before the drop. This is summarized in Figure 4.

Payload delivery (the pull model's mechanism for pushing encrypted secrets and scripts to agents) is deliberately absent from the push model. Removing the ability for server components to execute arbitrary code on attested nodes is a security-first design choice, not a missing feature.

How it works

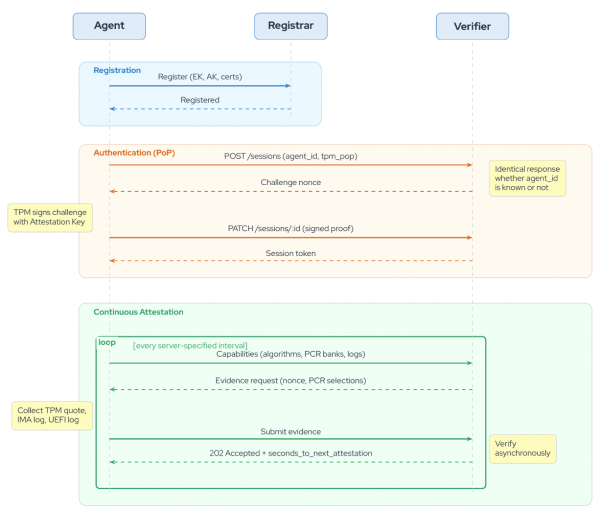

The push model attestation lifecycle has four phases (shown in Figure 5):

- Register: Prove your TPM identity

- Authenticate: Get a session token through a cryptographic challenge

- Negotiate: Agree on what evidence to collect

- Submit: Send that evidence on a recurring schedule

Registration

Key terms: The endorsement key (EK) is a unique key burned into the TPM at manufacturing. The attestation key (AK) is a separate signing key created inside the same TPM under the EK, and it is used to sign attestation evidence.

The agent starts by registering with the Keylime registrar, providing its identity: The TPM's endorsement key, attestation key, and any available certificates. The registrar makes the trust decisions. It verifies the EK against a certificate trust store, invokes webhooks for custom logic when certificates aren't available (common with cloud vTPMs), and binds identities to the node's logical identifier.

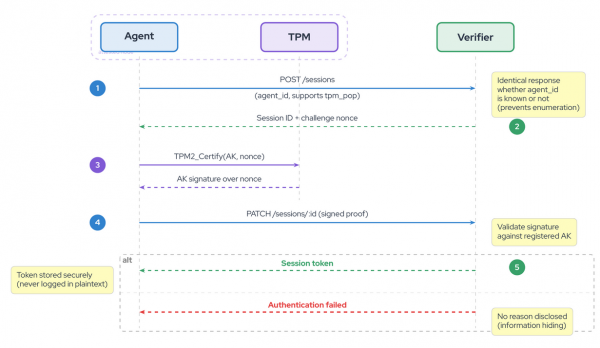

Authentication

What is proof of possession (PoP)? Instead of using pre-provisioned certificates, the agent proves it controls the TPM-resident private portion of the attestation key by signing a challenge from the verifier. This avoids the operational overhead of distributing and managing client certificates.

Once registered, the agent needs to authenticate to the verifier before submitting evidence. The push model uses challenge-response proof of possession (PoP), authenticating directly through the TPM with no need for pre-provisioned client certificates. The agent connects over standard TLS (server-verified only, no mTLS), which keeps certificate management simple (as shown in Figure 6). The protocol is a three-step exchange:

- The agent sends POST sessions with its agent_id and declares that it supports tpm_pop authentication.

- The verifier responds with a challenge nonce. Critically, it produces an identical response structure regardless of whether the agent_id is known. This information-hiding design prevents attackers from enumerating valid agent identifiers.

- The agent uses TPM2_Certify to produce a proof of possession. The AK certifies itself, signing the challenge nonce. The agent sends this proof using PATCH sessions:id. If the signature validates against the AK on file, then the verifier issues a session token.
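To make the information-hiding property concrete, here is a minimal Python sketch of the verifier side of this exchange. It is not Keylime's implementation: the class, method, and field names (SessionService, session_id, nonce, expires_in) are hypothetical, and an HMAC over the nonce stands in for the AK signature that TPM2_Certify actually produces with the AK's asymmetric key.

```python
import hashlib
import hmac
import secrets
import time

CHALLENGE_LIFETIME = 300  # seconds (assumed unit for challenge_lifetime)


def agent_prove(ak_key: bytes, nonce: str) -> str:
    """Stand-in for TPM2_Certify. ASSUMPTION: HMAC replaces the AK's asymmetric signature."""
    return hmac.new(ak_key, nonce.encode(), hashlib.sha256).hexdigest()


class SessionService:
    """Hypothetical verifier-side session endpoint, not Keylime's actual code."""

    def __init__(self, ak_keys: dict):
        self.ak_keys = ak_keys  # agent_id -> AK key material on file (an HMAC key here)
        self.pending = {}       # session_id -> (agent_id, nonce, expiry)

    def create_session(self, agent_id: str) -> dict:
        """POST sessions: same response shape whether or not agent_id is enrolled."""
        session_id = secrets.token_hex(8)
        nonce = secrets.token_hex(16)
        # No lookup in ak_keys here, so nothing observable depends on enrollment.
        self.pending[session_id] = (agent_id, nonce, time.monotonic() + CHALLENGE_LIFETIME)
        return {"session_id": session_id, "nonce": nonce, "expires_in": CHALLENGE_LIFETIME}

    def complete_session(self, session_id: str, proof: str):
        """PATCH sessions:id: return a bearer token if the proof checks out, else None."""
        entry = self.pending.pop(session_id, None)  # each challenge is single-use
        if entry is None:
            return None
        agent_id, nonce, expiry = entry
        key = self.ak_keys.get(agent_id)
        if key is None or time.monotonic() > expiry:
            return None
        if not hmac.compare_digest(agent_prove(key, nonce), proof):
            return None
        return secrets.token_urlsafe(32)
```

Because create_session never consults the agent table, a probe for an unenrolled agent gets a response of exactly the same shape. The difference only surfaces at the PATCH step, which requires a valid proof anyway.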

The token is wrapped in a SecretToken type that prevents accidental leakage. Debug and display implementations show only a truncated SHA-256 hash prefix (SecretToken(<hash:a1b2c3d4>)), and the raw value is accessible only through an explicit reveal() call used solely for Authorization: Bearer headers.
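A Python analogue of the wrapper might look like the following. This is an illustrative sketch, not the agent's Rust type; the 8-character hash prefix and the authorization_header helper are assumptions made for the example.

```python
import hashlib


class SecretToken:
    """Illustrative Python analogue of the agent's SecretToken wrapper."""

    __slots__ = ("_value",)

    def __init__(self, value: str):
        self._value = value

    def _fingerprint(self) -> str:
        # Only a short SHA-256 prefix is ever rendered (8 hex chars is an assumption).
        return hashlib.sha256(self._value.encode()).hexdigest()[:8]

    def __repr__(self) -> str:
        return f"SecretToken(<hash:{self._fingerprint()}>)"

    __str__ = __repr__  # print() and %s formatting also show only the hash

    def __format__(self, spec: str) -> str:
        return repr(self)  # f-strings and str.format() never leak the value

    def reveal(self) -> str:
        """The one explicit escape hatch, meant only for the Authorization header."""
        return self._value


def authorization_header(token: SecretToken) -> dict:
    """Hypothetical helper: the single place that calls reveal()."""
    return {"Authorization": f"Bearer {token.reveal()}"}
```

Python can't enforce this boundary the way Rust's type system does (the raw value is still reachable through the private attribute), but the wrapper does stop the common accident: a token that lands in a log line or an f-string renders only as its hash prefix.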

Capabilities negotiation

What are PCRs? Platform configuration registers (PCRs) are tamper-evident registers inside the TPM that record measurements of boot and runtime software. They can only be extended, never written directly: Each new measurement is hashed together with the register's current value, so the final value commits to the entire ordered chain of measurements, providing a cryptographic record of the system's software state.
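The extend operation is easy to model. The sketch below assumes a SHA-256 bank and a register that starts at all zeros, as the boot and IMA registers do after a reset; the helper names are illustrative.

```python
import hashlib


def pcr_extend(pcr: bytes, measurement: bytes) -> bytes:
    """TPM extend for a SHA-256 bank: new_value = SHA256(old_value || measurement)."""
    return hashlib.sha256(pcr + measurement).digest()


def replay(measurements) -> bytes:
    """Recompute the final PCR value from an ordered log of measurement digests."""
    pcr = bytes(32)  # starts at all zeros after reset (assumption stated above)
    for m in measurements:
        pcr = pcr_extend(pcr, m)
    return pcr
```

This is why a verifier can replay a measurement log, recompute the expected PCR value, and compare it with the value in a signed TPM quote: inserting, removing, or reordering a single entry changes the result.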

Before collecting evidence, the agent tells the verifier what it can produce. This isn't a static declaration: The Rust agent's StructureFiller queries the actual TPM hardware at runtime to discover:

- Supported hash algorithms: SHA-1, SHA-256, SHA-384, SHA-512, SM3-256 (whatever the TPM reports)

- PCR banks: Which PCRs are available under each algorithm

- AK certification data: The public portion of the attestation key and its properties

- Supported signing schemes: What the TPM can use to sign quotes

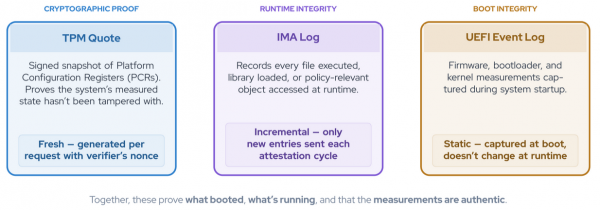

It also reports IMA and UEFI log capabilities. The IMA log is declared as appendable with supports_partial_access: true (the agent can send incremental updates), while the UEFI log is appendable: false (it's static after boot).

The verifier responds with what evidence it wants, including a challenge nonce for the TPM quote and the specific PCR selections.
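As an illustration of the negotiation step, the sketch below models one plausible verifier policy: pick the strongest hash bank that exposes every PCR the policy needs. The Capabilities shape, the preference order, and the request fields are hypothetical; only the appendable and supports_partial_access log properties come from the description above.

```python
from dataclasses import dataclass, field

# Hypothetical preference order, strongest first.
PREFERRED_ALGS = ["sha512", "sha384", "sha256", "sm3_256", "sha1"]


@dataclass
class Capabilities:
    """Hypothetical shape of an agent's capability report."""
    pcr_banks: dict                           # hash algorithm -> available PCR indices
    logs: dict = field(default_factory=dict)  # log name -> properties


def choose_evidence(caps: Capabilities, wanted_pcrs, nonce: str) -> dict:
    """Pick the strongest bank covering every wanted PCR and build an evidence request."""
    for alg in PREFERRED_ALGS:
        if set(wanted_pcrs) <= set(caps.pcr_banks.get(alg, [])):
            return {
                "nonce": nonce,            # fresh challenge for the TPM quote
                "hash_alg": alg,
                "pcrs": sorted(wanted_pcrs),
                "logs": sorted(caps.logs),  # request every log the agent offered
            }
    raise ValueError("no PCR bank offers all required PCRs")
```

A real verifier would derive wanted_pcrs from the enrolled policy; the point here is only that the request is computed from what the TPM actually reported rather than assumed up front.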

Evidence submission

IMA and UEFI logs at a glance: The integrity measurement architecture (IMA) log records a hash of each file the kernel measures at runtime, with the IMA policy determining which files are measured. The UEFI boot log records the boot sequence and is static after startup. Together, they give the verifier a detailed picture of what software ran on the machine.

The agent collects its evidence and submits it in a single PATCH request. Behind the scenes:

- TPM quotes are generated fresh, signing the verifier's nonce with the AK over the requested PCR banks.

- IMA logs are read incrementally. The agent maintains a MeasurementList that tracks (entry_count, file_offset) pairs. On each attestation cycle, it seeks to the last known position and reads only new entries. This avoids re-reading the entire measurement log, which can grow to hundreds of thousands of entries on long-running systems.

- UEFI event logs are read from the file descriptor opened before the privilege drop. Because the measured boot log is static after boot, it doesn't need the incremental tracking that IMA requires. The agent re-reads and sends the full log each cycle the verifier requests it.
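The incremental read can be sketched in Python as a cursor over an already-open file descriptor. This mirrors the (entry_count, file_offset) idea described above but is not the Rust agent's MeasurementList; consuming only complete lines is an assumption of the sketch, so a partially written entry is picked up on the next cycle.

```python
import os


class MeasurementList:
    """Illustrative cursor into the IMA ASCII log (not the agent's actual type)."""

    def __init__(self):
        self.entry_count = 0
        self.file_offset = 0

    def read_new_entries(self, fd: int):
        """Return only entries appended since the last call, reading via an open fd."""
        os.lseek(fd, self.file_offset, os.SEEK_SET)  # skip everything already sent
        chunks = []
        while True:
            chunk = os.read(fd, 65536)
            if not chunk:
                break
            chunks.append(chunk)
        data = b"".join(chunks)
        # Consume complete lines only; rfind returns -1 when there is no newline.
        end = data.rfind(b"\n") + 1
        new_entries = data[:end].decode().splitlines()
        self.file_offset += end
        self.entry_count += len(new_entries)
        return new_entries
```

Because the fd is opened once, before the privilege drop, the same descriptor can be reused on every cycle even though the process can no longer reopen the securityfs path.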

The verifier responds 202 Accepted immediately and verifies the evidence asynchronously. This keeps the protocol responsive. The agent isn't blocked waiting for potentially expensive cryptographic verification and policy evaluation. The response includes a seconds_to_next_attestation value that the agent uses to schedule its next cycle, giving the verifier server-side control over attestation frequency.
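Here is a minimal sketch of the accept-then-verify pattern. It is hypothetical throughout: EvidenceEndpoint, the backlog-based interval, and the jitter are illustrative choices rather than the verifier's actual scheduling logic, and _verify is a placeholder for the quote and policy checks.

```python
import queue
import random
import threading


class EvidenceEndpoint:
    """Hypothetical verifier handler: acknowledge fast, verify in the background."""

    def __init__(self, base_interval=60, max_jitter=15):
        self.base_interval = base_interval
        self.max_jitter = max_jitter
        self.pending = queue.Queue()
        self.results = {}
        threading.Thread(target=self._worker, daemon=True).start()

    def submit(self, agent_id, evidence):
        """PATCH handler: enqueue and return 202 without waiting for verification."""
        self.pending.put((agent_id, evidence))
        # Stretch the interval as the backlog grows; jitter spreads agents apart.
        interval = (self.base_interval + self.pending.qsize()
                    + random.randint(0, self.max_jitter))
        return 202, {"seconds_to_next_attestation": interval}

    def _worker(self):
        while True:
            agent_id, evidence = self.pending.get()
            self.results[agent_id] = self._verify(evidence)
            self.pending.task_done()

    @staticmethod
    def _verify(evidence):
        # Placeholder for quote signature checks, log replay, and policy evaluation.
        return bool(evidence.get("quote"))
```

The design choice is that the expensive work happens off the request path, and the returned interval is the lever the server uses to shape future load.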

Continuous attestation

The agent enters a loop: Sleep for an interval specified by the server, renegotiate capabilities (the verifier may have updated its requirements or policy), collect fresh evidence, and submit. If the token expires or the verifier returns 401, the agent immediately re-authenticates. If attestation fails, the agent retries with exponential backoff. If registration is lost, it starts over from scratch.
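The Rust agent's lifecycle uses seven states (Unregistered, Registered, Negotiating, Attesting, RegistrationFailed, AttestationFailed, and a terminal Failed). The loop above can be modeled as a pure transition function over those states. The event names and the action strings (sleep, reauth, wait_interval) are hypothetical, chosen for this sketch.

```python
from enum import Enum, auto
from typing import Optional, Tuple


class State(Enum):
    UNREGISTERED = auto()
    REGISTERED = auto()
    NEGOTIATING = auto()
    ATTESTING = auto()
    REGISTRATION_FAILED = auto()
    ATTESTATION_FAILED = auto()
    FAILED = auto()  # terminal


class Event(Enum):
    OK = auto()                 # the step succeeded, or a retry sleep elapsed
    ERROR = auto()              # recoverable failure
    UNAUTHORIZED = auto()       # 401 or expired session token
    REGISTRATION_LOST = auto()  # the registrar no longer knows this agent
    FATAL = auto()              # unrecoverable error


def step(state: State, event: Event) -> Tuple[State, Optional[str]]:
    """Return (next_state, action); the action tells the driver loop what to do first."""
    if state is State.FAILED or event is Event.FATAL:
        return State.FAILED, None
    if event is Event.REGISTRATION_LOST:
        return State.UNREGISTERED, None  # start over from scratch
    if event is Event.UNAUTHORIZED and state in (State.NEGOTIATING, State.ATTESTING):
        return state, "reauth"  # fetch a new session token immediately, no sleep
    ok = event is Event.OK
    if state is State.UNREGISTERED:
        return (State.REGISTERED if ok else State.REGISTRATION_FAILED), None
    if state is State.REGISTRATION_FAILED:
        return State.UNREGISTERED, "sleep"  # back off, then retry registration
    if state is State.REGISTERED:
        return (State.NEGOTIATING if ok else State.ATTESTATION_FAILED), None
    if state is State.NEGOTIATING:
        return (State.ATTESTING if ok else State.ATTESTATION_FAILED), None
    if state is State.ATTESTING:
        if ok:
            return State.NEGOTIATING, "wait_interval"  # honor seconds_to_next_attestation
        return State.ATTESTATION_FAILED, None
    return State.NEGOTIATING, "sleep"  # ATTESTATION_FAILED: back off, renegotiate
```

Keeping the transitions pure makes them easy to test exhaustively; the side effects (sleeping, re-authenticating, talking to the TPM) live in the driver loop.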

Security by design

The push model's security advantages go well beyond having no open ports.

Information hiding: The authentication protocol is designed so that the verifier reveals as little as possible before authentication completes. During the challenge-response exchange, the verifier produces the same response structure for valid and invalid agent identifiers. An attacker probing the API cannot determine whether a particular agent is enrolled.

Token opacity: Session tokens never appear in logs. The SecretToken wrapper ensures that any attempt to log, debug-print, or format a token produces only its hash prefix. The actual token value is gated behind reveal(), which is called only when constructing the HTTP Authorization header.

Rate limiting: The verifier supports dual-layer rate limiting — per-IP and per-agent — to protect against denial-of-service attacks on the session creation endpoint. Both layers are independently configurable with separate windows and thresholds.
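Dual-layer limiting can be sketched with two sliding-window counters, one keyed by client IP and one by agent ID. The sliding-window algorithm and the class names are our choices for the example, not necessarily what the verifier implements; the defaults mirror the sample verifier.conf values in the Getting started section, assuming windows are in seconds.

```python
import time
from collections import defaultdict, deque


class SlidingWindow:
    """At most `limit` accepted events per key within any `window`-second span."""

    def __init__(self, limit: int, window: float):
        self.limit = limit
        self.window = window
        self.events = defaultdict(deque)  # key -> timestamps of accepted requests

    def _prune(self, key, now):
        q = self.events[key]
        while q and now - q[0] >= self.window:
            q.popleft()  # drop timestamps that slid out of the window
        return q

    def has_room(self, key, now) -> bool:
        return len(self._prune(key, now)) < self.limit

    def record(self, key, now) -> None:
        self._prune(key, now).append(now)


class SessionCreateLimiter:
    """Per-IP and per-agent layers with independent limits and windows (hypothetical)."""

    def __init__(self, per_ip=50, window_ip=60, per_agent=15, window_agent=60,
                 clock=time.monotonic):
        self.by_ip = SlidingWindow(per_ip, window_ip)
        self.by_agent = SlidingWindow(per_agent, window_agent)
        self.clock = clock

    def allow(self, client_ip: str, agent_id: str) -> bool:
        now = self.clock()
        # Check both layers before recording, so a rejection costs neither budget.
        if self.by_ip.has_room(client_ip, now) and self.by_agent.has_room(agent_id, now):
            self.by_ip.record(client_ip, now)
            self.by_agent.record(agent_id, now)
            return True
        return False
```

The per-agent layer stops one identity from hammering session creation through many addresses, while the per-IP layer caps a single source cycling through many fake agent IDs.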

Privilege separation: The agent opens /sys/kernel/security files as root, then irreversibly drops to an unprivileged user (typically keylime:keylime) with supplementary group tss for TPM access. The three-phase drop — validate target user, open privileged resources, drop and verify — follows POSIX best practices and explicitly confirms the drop is irreversible.
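The three phases map naturally onto Python's os, pwd, and grp modules. This is a sketch of the pattern, not the agent's Rust code; the keylime and tss names come from the text, while the function names are hypothetical.

```python
import grp
import os
import pwd


def resolve_target(user="keylime", tpm_group="tss"):
    """Phase 1: validate the target account before touching anything privileged."""
    pw = pwd.getpwnam(user)                     # KeyError if the user does not exist
    tss_gid = grp.getgrnam(tpm_group).gr_gid    # KeyError if the group does not exist
    return pw.pw_uid, pw.pw_gid, [tss_gid]


def open_privileged(paths):
    """Phase 2: open security-sensitive files while still root and keep the fds."""
    return {path: os.open(path, os.O_RDONLY) for path in paths}


def drop_privileges(uid, gid, supplementary_gids):
    """Phase 3: drop groups before the UID, then prove root cannot be regained."""
    os.setgroups(supplementary_gids)  # requires privilege, so it must come first
    os.setgid(gid)                    # as root, sets real, effective, and saved GID
    os.setuid(uid)                    # as root, sets real, effective, and saved UID
    try:
        os.setuid(0)
    except PermissionError:
        return                        # expected: the drop is irreversible
    raise RuntimeError("regained root after dropping privileges; aborting")
```

A caller would run the phases in order: resolve_target(), then open_privileged() on the IMA and UEFI log paths, then drop_privileges(). Ordering matters because once the UID is no longer root, the process loses the right to change its groups.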

Fail-fast on corruption: If the TPM mutex is poisoned (indicating a thread panicked while holding the TPM lock), the agent calls process::exit(1) immediately. No graceful shutdown, no attempt to recover potentially corrupted TPM state. The rationale is explicit in the code: TPM hardware state may be inconsistent, any internal corruption is a critical security event, and only a full process restart can safely reinitialize TPM contexts. A supervisor process or systemd handles the restart with a clean slate.

Resilient by default

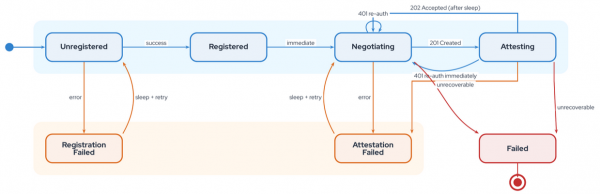

The Rust agent is engineered for the reality of unreliable networks (illustrated in Figure 8):

- Reasonable but progressive backoff policy: Exponential backoff with configurable initial delay (default 10 seconds), maximum delay (default 5 minutes), and retry count (default 5 attempts) handles transient failures without overwhelming the verifier.

- Retry-After header support: The verifier can tell the agent exactly when to come back, in either seconds or HTTP-date format. The agent's RetryAfterMiddleware parses both and defers to server-directed timing, avoiding double-delays with the backoff strategy.

- Explicit state machine: A state machine with clear recovery paths governs the agent lifecycle. There are seven states (Unregistered, Registered, Negotiating, Attesting, RegistrationFailed, AttestationFailed, and terminal Failed) with well-defined transitions. RegistrationFailed sleeps and retries registration. AttestationFailed sleeps and retries negotiation. A 401 during attestation triggers immediate re-authentication. Only unrecoverable errors reach the terminal Failed state.

- Verifier-controlled intervals: The seconds_to_next_attestation field in the verifier's response lets the server stagger agents to avoid traffic spikes, slow attestation during high load, or increase frequency when security posture demands it.
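The retry timing can be sketched as follows. The constants mirror the defaults described above and the agent configuration in the Getting started section; the function names are hypothetical, and this is not RetryAfterMiddleware itself. Both delta-seconds and HTTP-date values are accepted, and a usable Retry-After replaces the backoff delay rather than adding to it.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime

INITIAL_DELAY_MS = 10_000  # mirrors exponential_backoff_initial_delay
MAX_DELAY_MS = 300_000     # mirrors exponential_backoff_max_delay
MAX_RETRIES = 5            # mirrors exponential_backoff_max_retries


def backoff_delay_ms(attempt: int) -> int:
    """Exponential backoff for 0-based retry `attempt`, capped at MAX_DELAY_MS."""
    return min(INITIAL_DELAY_MS * 2 ** attempt, MAX_DELAY_MS)


def parse_retry_after(value: str, now=None):
    """Seconds requested by a Retry-After value (delta-seconds or HTTP-date), or None."""
    value = value.strip()
    if value.isdigit():
        return int(value)
    try:
        when = parsedate_to_datetime(value)
    except (TypeError, ValueError):
        return None  # neither form: ignore the header
    if when.tzinfo is None:
        when = when.replace(tzinfo=timezone.utc)  # "-0000" dates parse as naive
    now = now or datetime.now(timezone.utc)
    return max(0, int((when - now).total_seconds()))


def next_delay_seconds(attempt: int, retry_after=None):
    """Delay before the next retry, or None once retries are exhausted."""
    if attempt >= MAX_RETRIES:
        return None
    if retry_after is not None:
        server_delay = parse_retry_after(retry_after)
        if server_delay is not None:
            return server_delay  # server timing replaces backoff: no double delay
    return backoff_delay_ms(attempt) / 1000
```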

Deployment scenarios

You're probably already seeing the advantages of Keylime's push model, and many industries stand to benefit. Here are a few example use cases.

Edge and IoT

Thousands of devices behind NATs with intermittent connectivity. In the pull model, every unreachable device wastes verifier resources on failed polling. In the push model, devices attest when they have connectivity and the verifier simply processes evidence as it arrives. No VPN tunnels, no port forwarding, no complex firewall rules — just outbound HTTPS.

Red Hat OpenShift and Kubernetes

Ephemeral pods with dynamic IPs are a natural fit for agent-driven attestation. Pods attest on startup, push evidence to the verifier through the cluster's standard egress path, and disappear without the verifier needing to track their IP lifecycle. The push model works naturally with Kubernetes networking, service meshes, and security policies that restrict inbound connections to pods.

High-security enterprise

For environments requiring frequent attestation and strict compliance, the push model combines PoP authentication (no pre-provisioned certificates needed — the TPM proves identity), no exposed agent ports (aligning with zero-trust network principles), and server-controlled attestation intervals. The deliberate removal of payload delivery means the attestation infrastructure cannot be used as a vector for code execution on attested nodes.

Getting started

Deploying the push model requires configuring two components: The verifier (which receives and validates evidence) and the agent (which collects and submits it).

Verifier configuration

In /etc/keylime/verifier.conf:

```ini
[verifier]
mode = push

# Challenge-response authentication
challenge_lifetime = 300

# Rate limiting
session_create_rate_limit_per_ip = 50
session_create_rate_limit_window_ip = 60
session_create_rate_limit_per_agent = 15
session_create_rate_limit_window_agent = 60
```

Agent configuration

In the Rust agent's configuration:

```toml
[agent]
verifier_url = "https://verifier.example.com:8881"
enable_authentication = true
attestation_interval_seconds = 60

# Retry behavior (delays in milliseconds)
exponential_backoff_max_retries = 5
exponential_backoff_initial_delay = 10000
exponential_backoff_max_delay = 300000
```

Enrolling an agent

Once both sides are configured, enroll the agent with the tenant command-line interface:

```shell
$ keylime_tenant -v 10.0.1.5 \
    -t 10.0.2.5 \
    -u d432fbb3-d2f1-4a97-9ef7-75bd81c00000 \
    --push-model \
    --runtime-policy policy.json
```

The --push-model flag tells the verifier to expect evidence from this agent rather than polling for it.

What's next

The push model opens the door to capabilities that were impractical in a polling architecture:

- Event-driven attestation: Attest on specific system events, such as sudo invocations, package installations, and configuration changes, not just on a timer.

- Adaptive intervals: Adjust attestation frequency based on workload risk profile or threat intelligence.

- Distributed verification: With agents pushing to a service endpoint, verification can be horizontally scaled across multiple verifier instances behind a load balancer.

The push model is available in the upstream Rust Keylime agent and Keylime verifier, and ships as a supported feature in RHEL 10.2. The enhancement proposal details the full design rationale, including the adversarial model and trust mechanisms.

For detailed information on RHEL system integrity with Keylime, see the RHEL 10 Security Hardening Guide.