Retrieval-augmented generation (RAG) has become the default architecture for enterprise large language model (LLM) applications. By grounding models in external knowledge bases, RAG systems can provide accurate, up-to-date responses without the cost and complexity of fine-tuning. In practice, however, most RAG systems reach production with weak evaluation strategies.

Teams tune embeddings, retrievers, chunking strategies, and prompts, but still rely on manual spot checks, small hand-labeled datasets, or generic LLM-as-a-judge metrics to assess quality. The result: systems that appear to work but fail silently under real user traffic. So the real question becomes: How do you know your RAG system actually works, and why does it fail when it doesn't?

Why RAG evaluation is hard

Evaluating RAG systems is fundamentally harder than evaluating stand-alone language models. Traditional approaches break down in several ways:

- Retrieval-generation entanglement: When a RAG system fails, it's often unclear whether the retriever surfaced the wrong documents or the LLM hallucinated despite correct context. Without a known ground truth context (the passage that verifiably contains the answer), debugging becomes guesswork.

- No ground truth at scale: Most evaluation datasets are manually curated or lightly generated. This makes them expensive, slow to produce, and impossible to scale as knowledge bases grow. More importantly, they rarely include ground truth context, making retrieval evaluation unreliable.

- Knowledge-base drift: Enterprise documents evolve continuously. Static test sets become outdated almost immediately, resulting in misleading evaluations that no longer reflect production behavior.

- Domain-specific blind spots: Generic metrics often miss failure modes that matter most in practice, such as regulatory correctness in finance, clinical precision in health care, or procedural accuracy in technical documentation.

Why evaluation matters more than ever

As RAG systems are increasingly embedded inside agentic and multi-step workflows, evaluation failures compound. A single retrieval error can cascade across tool calls, memory updates, or downstream decisions. Without rigorous evaluation, teams lose the ability to:

- Compare retriever or embedding changes objectively

- Debug failures at the component level

- Improve system quality systematically over time

As the saying goes, you can't improve what you can't measure.

The solution: Synthetic data generation

Synthetic data unlocks a fundamentally different approach:

- High-quality question-answer-context triplets: Generate evaluation datasets directly from your knowledge base with realistic questions, grounded answers, and ground truth context.

- Automatic ground truth creation: No manual annotation needed. Test retrieval and generation separately. Know exactly which component failed.

- Repeatable benchmarks: Compare different embedding models, chunking strategies, and LLM configurations. Track improvements over time with confidence.

How the RAG evaluation dataset flow works

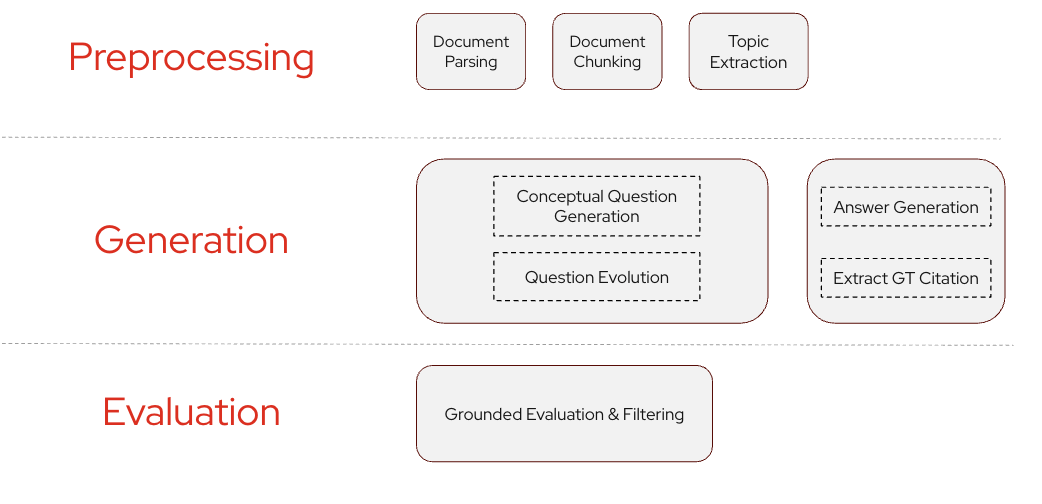

SDG Hub is Red Hat's open source Python framework for building synthetic data generation pipelines. SDG Hub offers a pre-built RAG evaluation dataset flow that generates high-quality question-answer-context triplets, as shown in Figure 1.

The RAG evaluation flow turns raw documents into high-quality evaluation datasets through a structured, grounded pipeline:

- Topic extraction identifies key concepts from the document to anchor evaluation.

- Question generation creates initial questions guided by the document outline.

- Question evolution refines these into realistic, user-style queries.

- Answer generation produces answers grounded strictly in the source content.

- Groundedness filtering removes low-quality question-answer pairs.

- Context extraction isolates the minimal ground truth context needed to answer each question.

The result is a clean, RAG-ready dataset of questions, answers, and gold contexts designed for reliable retrieval and answer evaluation.
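To make the groundedness filtering step more concrete, here is a minimal sketch of the idea using a crude token-overlap score. This is not SDG Hub's implementation, and the flow's actual filtering criteria are not described here. The function names, the `min_score` threshold, and the second question-answer pair (a deliberately ungrounded, made-up answer) are all illustrative assumptions.

```python
import re

def tokens(text: str) -> set[str]:
    """Lowercase word tokens, used for a crude lexical overlap check."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def groundedness(answer: str, document: str) -> float:
    """Fraction of answer tokens that also appear in the source document."""
    answer_tokens = tokens(answer)
    if not answer_tokens:
        return 0.0
    return len(answer_tokens & tokens(document)) / len(answer_tokens)

def filter_grounded(pairs: list[dict], min_score: float = 0.8) -> list[dict]:
    """Keep only pairs whose answer is mostly supported by its document."""
    return [
        p for p in pairs
        if groundedness(p["answer"], p["document"]) >= min_score
    ]

doc = (
    "Kubernetes is an open source system for automating deployment, "
    "scaling, and management of containerized applications."
)
pairs = [
    {   # Answer restates the document, so it should be kept.
        "question": "What does Kubernetes automate?",
        "answer": "Deployment, scaling, and management of containerized applications.",
        "document": doc,
    },
    {   # Made-up answer with no support in the document, so it should be dropped.
        "question": "Who created Kubernetes?",
        "answer": "It was created by a small startup in 2020.",
        "document": doc,
    },
]

kept = filter_grounded(pairs)
print([p["question"] for p in kept])
```

A lexical score like this only illustrates the filtering principle; it misses paraphrases, so a real pipeline would need a stronger groundedness check.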

Getting started

Generate grounded evaluation datasets for your RAG system in minutes.

1. Install

For SDG Hub, it's recommended to use the uv command. After you've installed it, use it to install the sdg-hub Python package:

```bash
uv pip install sdg-hub
```

2. Try the RAG evaluation example

Clone the repo and open the notebook:

```bash
git clone https://github.com/Red-Hat-AI-Innovation-Team/sdg_hub
```

For more details about data preprocessing for the SDG flow and data post-processing for specific evaluation frameworks, refer to the notebook. You can also customize the flow that SDG Hub ships out of the box to fit your own evaluation needs. Here's a simple example of SDG Hub in use:

```python
from datasets import Dataset
from sdg_hub import Flow, FlowRegistry

# 1) Load the RAG evaluation flow
flow = Flow.from_yaml(
    FlowRegistry.get_flow_path("RAG Evaluation Dataset Flow")
)

# 2) Provide minimal input: content + outline
input_dataset = Dataset.from_dict({
    "document": [
        "Kubernetes is an open source system for automating deployment, scaling, and management of containerized applications.",
        "OpenShift is Red Hat's enterprise Kubernetes platform with added developer and operational tooling.",
    ],
    "document_outline": [
        "Kubernetes overview as the standard platform for containers",
        "OpenShift overview as the enterprise Kubernetes platform",
    ],
})

# 3) Generate the evaluation dataset
# (the flow calls an LLM, so a model endpoint may need to be configured first; see the notebook)
result = flow.generate(input_dataset)

# 4) Inspect outputs
df = result.to_pandas()
print(df.columns)
df.head()
```

3. Explore the docs

Take a look at the documentation available at https://ai-innovation.team/sdg_hub.

Input contract

The RAG evaluation flow expects exactly two input columns. This contract is enforced to keep evaluation grounded and debuggable.

- document: The atomic unit of knowledge your RAG system should retrieve (document, section, or chunk). This is treated as the gold reference context, so it must match your production chunking strategy.

- document_outline: A short, intent-level label (title or summary) used to guide realistic question generation. Good outlines prevent trivial or purely extractive questions.

This separation ensures questions reflect real user intent while answers remain strictly grounded in known context, making downstream retrieval and generation metrics meaningful.
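As an illustration of the input contract, the sketch below builds the two required columns from a list of chunk records and rejects incomplete rows early. The `build_flow_input` helper and the `text` and `title` keys on each record are hypothetical; substitute whatever your own chunking step produces.

```python
REQUIRED_COLUMNS = ("document", "document_outline")

def build_flow_input(chunks: list[dict]) -> dict[str, list[str]]:
    """Turn chunk records into the two-column mapping the flow expects.

    Each record is assumed (hypothetically) to carry a "text" and a "title" key.
    """
    columns: dict[str, list[str]] = {name: [] for name in REQUIRED_COLUMNS}
    for i, chunk in enumerate(chunks):
        text = chunk.get("text", "").strip()
        outline = chunk.get("title", "").strip()
        if not text or not outline:
            # Fail fast: an empty document or outline cannot be grounded.
            raise ValueError(f"chunk {i} is missing text or an outline")
        columns["document"].append(text)
        columns["document_outline"].append(outline)
    return columns

chunks = [
    {
        "title": "Kubernetes overview as the standard platform for containers",
        "text": "Kubernetes is an open source system for automating deployment, "
                "scaling, and management of containerized applications.",
    },
    {
        "title": "OpenShift overview as the enterprise Kubernetes platform",
        "text": "OpenShift is Red Hat's enterprise Kubernetes platform with "
                "added developer and operational tooling.",
    },
]

flow_input = build_flow_input(chunks)
print(list(flow_input))
```

The resulting mapping has the same shape as the dictionary passed to `Dataset.from_dict` in the getting-started example, so the chunks fed to the flow are the same units your retriever indexes.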

Output contract

The RAG evaluation flow returns a dataset containing:

- question: Synthetic user question grounded in your content

- answer: Answer generated based on the ground-truth context

- ground_truth_context: The exact chunk/section used as the "gold" context

From synthetic data to end-to-end RAG evaluation

After generation, SDG Hub outputs are post-processed into an evaluation-ready dataset containing synthetic user queries, ground-truth answers, and gold reference contexts. This dataset is then executed against a real RAG pipeline, which produces retrieved contexts and generated answers.

Together, these signals form the full input required by downstream evaluation frameworks. Because the ground-truth context is known, evaluation metrics reflect true retrieval and generation quality rather than proxy judgments from another LLM.
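The execution step can be sketched as a small loop that sends each synthetic question through a RAG pipeline and records what came back next to the gold fields. The `run_rag` helper, the `retrieved_contexts` and `response` field names, and the toy `toy_retrieve` and `toy_generate` functions are assumptions for illustration; in practice they would be replaced by calls into your real retriever and LLM, and the field names by whatever your evaluation framework expects.

```python
import re
from typing import Callable

CORPUS = [
    "Kubernetes is an open source system for automating deployment, scaling, "
    "and management of containerized applications.",
    "OpenShift is Red Hat's enterprise Kubernetes platform with added "
    "developer and operational tooling.",
]

def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def toy_retrieve(question: str, top_k: int) -> list[str]:
    """Stand-in retriever: rank corpus chunks by word overlap with the question."""
    q = words(question)
    ranked = sorted(CORPUS, key=lambda d: len(q & words(d)), reverse=True)
    return ranked[:top_k]

def toy_generate(question: str, contexts: list[str]) -> str:
    """Stand-in generator: echo the top retrieved chunk instead of calling an LLM."""
    return contexts[0] if contexts else ""

def run_rag(
    records: list[dict],
    retrieve: Callable[[str, int], list[str]],
    generate: Callable[[str, list[str]], str],
    top_k: int = 1,
) -> list[dict]:
    """Attach retrieved contexts and the generated response to each record."""
    results = []
    for record in records:
        contexts = retrieve(record["question"], top_k)
        response = generate(record["question"], contexts)
        results.append(
            {**record, "retrieved_contexts": contexts, "response": response}
        )
    return results

records = [
    {
        "question": "What is OpenShift?",
        "answer": "Red Hat's enterprise Kubernetes platform.",
        "ground_truth_context": CORPUS[1],
    }
]

results = run_rag(records, toy_retrieve, toy_generate)
print(results[0]["retrieved_contexts"])
```

Keeping the gold fields and the pipeline outputs in the same record is what lets later steps score retrieval and generation independently.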

Typical metrics include:

- Context precision and recall: Did retrieval surface the correct document spans?

- Faithfulness: Is the answer supported by retrieved context?

- Answer relevance: Does the response actually answer the question?
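As a rough illustration of the first metric pair, the sketch below computes set-based context precision and recall by exact match against the gold chunks. This is one simple definition, assumed here for clarity; evaluation frameworks often use rank-aware or LLM-assisted variants, and exact matching only works when the retriever returns the same chunk units that were used as gold context. The chunk identifiers are made up.

```python
def context_precision(retrieved: list[str], gold: list[str]) -> float:
    """Share of retrieved chunks that are gold chunks."""
    if not retrieved:
        return 0.0
    gold_set = set(gold)
    return sum(1 for chunk in retrieved if chunk in gold_set) / len(retrieved)

def context_recall(retrieved: list[str], gold: list[str]) -> float:
    """Share of gold chunks that appear among the retrieved chunks."""
    if not gold:
        return 0.0
    retrieved_set = set(retrieved)
    return sum(1 for chunk in gold if chunk in retrieved_set) / len(gold)

# Toy example: four chunks retrieved, one of which is the gold chunk.
retrieved = ["chunk-a", "chunk-b", "chunk-c", "chunk-d"]
gold = ["chunk-b"]

precision = context_precision(retrieved, gold)
recall = context_recall(retrieved, gold)
print(precision, recall)
```

Here the retriever found the gold chunk (perfect recall) but padded the context with three irrelevant chunks (low precision), a pattern that would be invisible without known gold context.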

A closed-loop evaluation workflow

SDG Hub enables a repeatable, metric-driven workflow:

- Generate grounded evaluation data from your knowledge base

- Run the dataset through your RAG system

- Score retrieval and generation quality

- Compare configurations and track improvements over time
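The comparison step in this workflow might look like the following sketch, which ranks configurations by retrieval hit rate over the same evaluation set. The `hit_rate` and `compare` helpers, the configuration names, and the tiny result lists are all made-up toy values, not measurements from any real system.

```python
from statistics import mean

def hit_rate(results: list[dict]) -> float:
    """Share of questions whose gold context appears among the retrieved chunks."""
    if not results:
        return 0.0
    return mean(
        1.0 if r["ground_truth_context"] in r["retrieved_contexts"] else 0.0
        for r in results
    )

def compare(runs: dict[str, list[dict]]) -> list[tuple[str, float]]:
    """Rank configurations from best to worst hit rate."""
    scored = [(name, hit_rate(results)) for name, results in runs.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)

# Toy results for two hypothetical configurations on the same two questions.
runs = {
    "baseline": [
        {"ground_truth_context": "chunk-1", "retrieved_contexts": ["chunk-1", "chunk-7"]},
        {"ground_truth_context": "chunk-2", "retrieved_contexts": ["chunk-5", "chunk-9"]},
    ],
    "smaller-chunks": [
        {"ground_truth_context": "chunk-1", "retrieved_contexts": ["chunk-1", "chunk-3"]},
        {"ground_truth_context": "chunk-2", "retrieved_contexts": ["chunk-2", "chunk-4"]},
    ],
}

ranking = compare(runs)
for name, score in ranking:
    print(f"{name}: {score:.2f}")
```

Because every configuration is scored against the same generated dataset, differences in the ranking can be attributed to the configuration change rather than to a shifting test set.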

Why this matters

This workflow turns synthetic data from "nice test data" into a systematic optimization tool:

- Benchmark retrievers, embeddings, and chunking strategies

- Isolate whether failures come from retrieval or generation

- Re-run the same evaluation as models or data evolve
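To show how known gold context helps isolate failures, here is a minimal sketch that labels each evaluated record by component. The `classify` helper and its label names are hypothetical, and `answer_is_correct` is assumed to come from whatever answer-quality metric you already use.

```python
from collections import Counter

def classify(result: dict, answer_is_correct: bool) -> str:
    """Attribute an outcome to retrieval or generation using the gold context."""
    retrieved_gold = result["ground_truth_context"] in result["retrieved_contexts"]
    if answer_is_correct:
        # A correct answer without the gold chunk deserves a closer look.
        return "ok" if retrieved_gold else "correct_without_gold_context"
    # Wrong answer: the gold chunk tells us which component to inspect first.
    return "generation_failure" if retrieved_gold else "retrieval_failure"

# Toy records paired with a correctness judgment from an external metric.
evaluated = [
    ({"ground_truth_context": "chunk-1", "retrieved_contexts": ["chunk-1"]}, True),
    ({"ground_truth_context": "chunk-2", "retrieved_contexts": ["chunk-2"]}, False),
    ({"ground_truth_context": "chunk-3", "retrieved_contexts": ["chunk-8"]}, False),
]

labels = [classify(result, correct) for result, correct in evaluated]
summary = Counter(labels)
print(summary)
```

A summary like this points debugging effort at the right component: many retrieval failures suggest revisiting embeddings or chunking, while many generation failures suggest revisiting the prompt or model.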

In short, SDG Hub combined with downstream evaluation frameworks replaces intuition-driven RAG tuning with measurable, repeatable improvement. Get started today.