Automation is what we (developers) do. We automate ticket sales and automobiles and streaming music services and everything you can possibly tie into an analog-to-digital converter. But have we taken the time to automate our own processes?

In this article, I'll show how to build an automated continuous integration and continuous delivery (CI/CD) pipeline using Jenkins and Red Hat OpenShift 4. I won't dive into many details (and there are a lot of them), but we'll get a good overview. The details will be explained later in this series of blog posts.

Note: In this post, I'll sometimes refer to OpenShift 4 as OpenShift Container Platform (OCP).

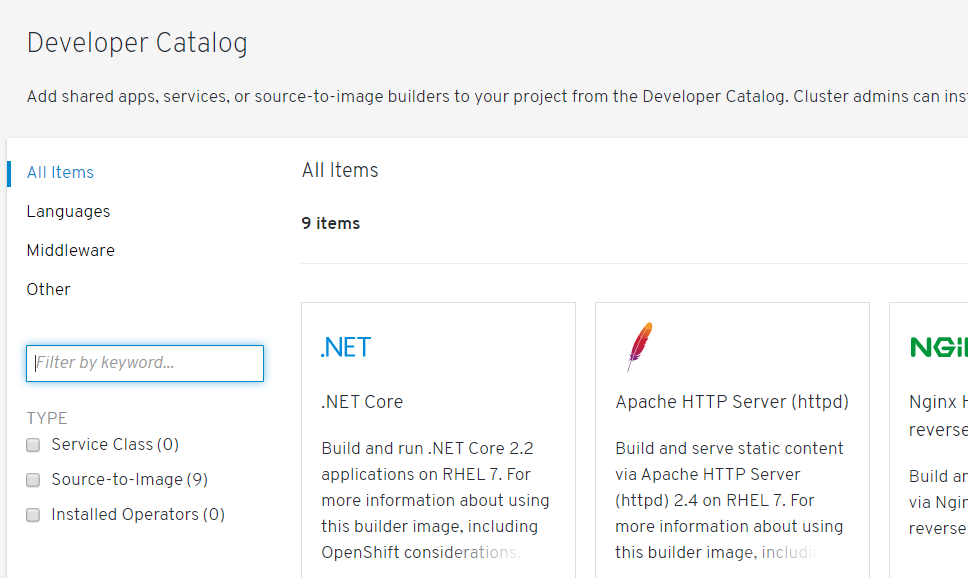

It's not enough to start an OCP cluster. We need to get Jenkins up and running. Fortunately, OCP has templates, an ever-growing selection of environments that you can get up and running with a simple point and click (or at the command line). This catalog of templates, however, is not readily apparent in the OCP dashboard.

In the following screen capture, you'll notice that nine options are available:

Is that it?

Nine items, and Jenkins is not one of them. That doesn't mean it's unavailable. The developer's friend, the command line, is where the catalog really springs to life. We can run the following command to see all of the templates that are included when we launch our OCP 4 cluster, including the ones that are not visible on the dashboard:

oc get templates -n openshift

You'll see a list that's too long to show here: about 91 templates. A Template Service Broker is used to make them appear on the dashboard, but that's another article. For now, we'll use them from the command line.

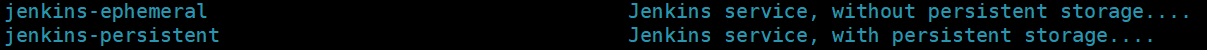

If you look through the list, you'll find two templates for Jenkins: jenkins-ephemeral and jenkins-persistent.

Because we don't have persistent storage configured (that's yet another article), we'll use the "jenkins-ephemeral" template for this article. The fundamentals are the same, and the knowledge is transferable, but this way we spend our time on Jenkins and CI/CD and not on creating, configuring, and using persistent storage.
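Rather than scanning the full list by eye, you can filter it for Jenkins. Here's a minimal sketch; the function name `list_jenkins_templates` is my own invention, and the `sed` step just strips whatever resource prefix `-o name` prints so that only the bare template name remains.

```shell
# Hypothetical helper (not part of oc): list the Jenkins templates that ship
# in the "openshift" namespace, by name only.
# - "-o name" prints one "resource/name" entry per line
# - sed drops everything up to the last "/"
# - grep keeps only names that start with "jenkins"
list_jenkins_templates() {
  oc get templates -n openshift -o name |
    sed 's|.*/||' |
    grep -i '^jenkins'
}
```

Call it as `list_jenkins_templates` once you're logged in to the cluster.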

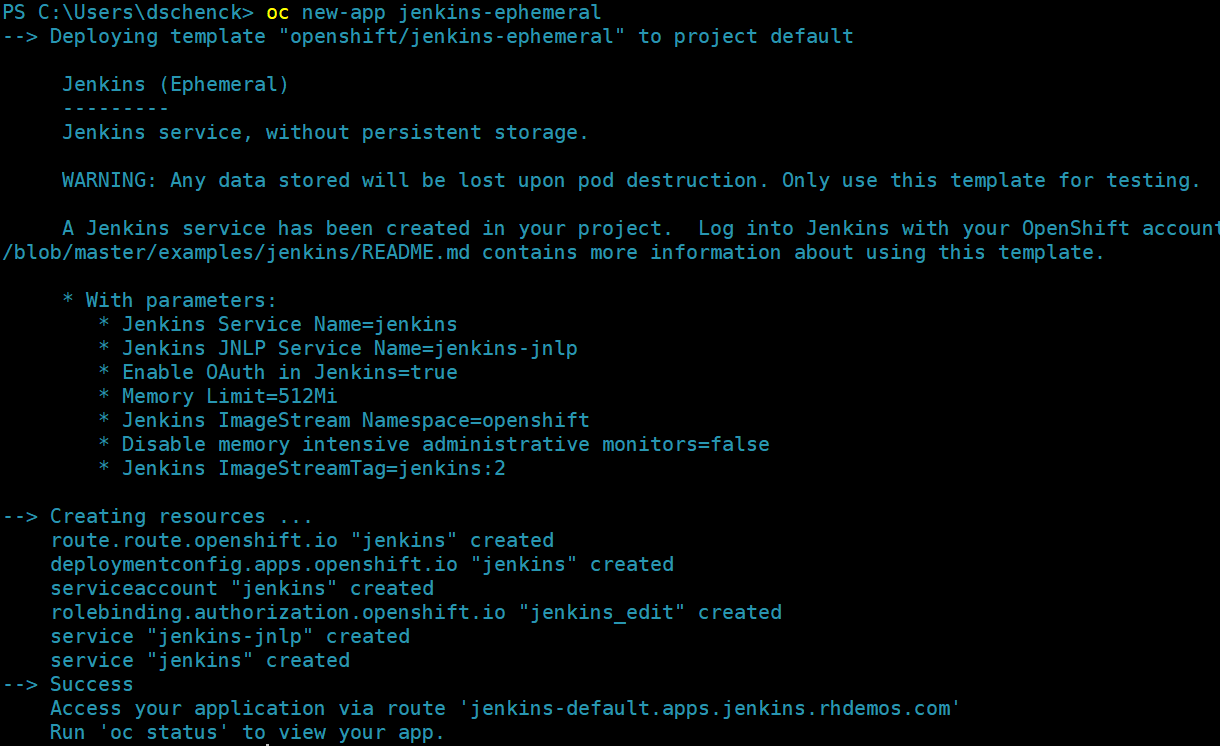

Give me Jenkins, now

Let's start with the easy part: Getting Jenkins up and running inside our OCP 4 cluster. It's one command:

oc new-app jenkins-ephemeral
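Jenkins takes a little while to start. If you're scripting this step, you might want to block until the deployment is ready. The sketch below assumes the template created a deployment config named `jenkins` (its default service name, as far as I know); adjust the name if your cluster shows something different. The function name `deploy_jenkins` is hypothetical.

```shell
# Hypothetical wrapper: create Jenkins from the template, then wait for the
# rollout to finish. "dc/jenkins" is an assumption based on the template's
# default service name; check "oc get dc" if the rollout command can't find it.
deploy_jenkins() {
  oc new-app jenkins-ephemeral &&
    oc rollout status dc/jenkins
}
```

Run `deploy_jenkins` in place of the single command above if you want the shell to wait for Jenkins to come up.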

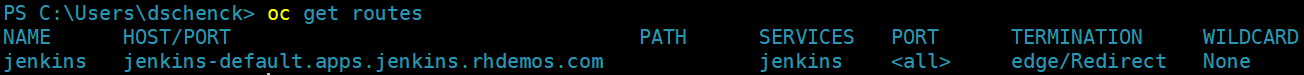

You can now run the following command to get the URL to your Jenkins installation:

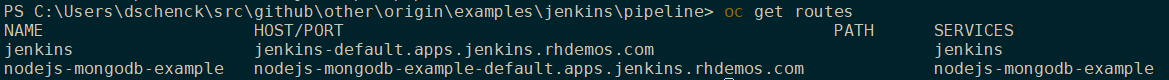

oc get routes
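If you'd rather get just the URL instead of reading it out of a table, `jsonpath` output can pull the host straight from the route. This is a sketch under two assumptions: the route is named `jenkins`, and it is served over HTTPS. The function name `jenkins_url` is mine.

```shell
# Hypothetical helper: print the Jenkins URL.
# Assumes the route is named "jenkins" and is exposed over HTTPS.
jenkins_url() {
  host=$(oc get route jenkins -o jsonpath='{.spec.host}') || return 1
  printf 'https://%s\n' "$host"
}
```

Your host name will differ from mine, because it depends on your project name and your cluster's domain.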

Put that URL (jenkins-default.apps.jenkins.rhdemos.com) into your browser and voilà ... Jenkins:

By the way, if you want to see the template that did all this work, you can view it by using the following command:

oc get template/jenkins-ephemeral -o json -n openshift
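That JSON is long. If all you want is the list of knobs the template exposes, `oc process --parameters` prints them as a table. The wrapper below is only a convenience sketch; the name `show_template_parameters` is hypothetical, and it defaults to the template we used above.

```shell
# Hypothetical helper: list the parameters a template in the "openshift"
# namespace accepts. Defaults to jenkins-ephemeral when no name is given.
show_template_parameters() {
  oc process --parameters -n openshift "${1:-jenkins-ephemeral}"
}
```

For example, `show_template_parameters` shows the Jenkins parameters, and `show_template_parameters jenkins-persistent` shows the ones for the persistent variant.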

Jenkins, at your service

Go ahead and log in with OpenShift, and select the Allow Selected Permissions option. You'll then be presented with your Jenkins dashboard:

Countdown to launch

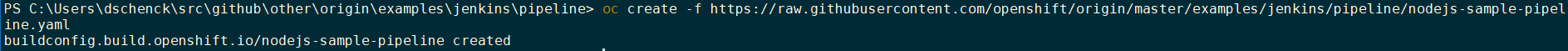

That was the easy part. Now the real work begins. We need to set up a pipeline to build our software, but we want to use the build system that is built into OpenShift. The following command creates a build configuration (or "build config," which is an object of type "BuildConfig") that holds the instructions telling OpenShift how to build our application. In this particular case, we're creating a pipeline that, in turn, contains the build instructions:

oc create -f https://raw.githubusercontent.com/openshift/origin/master/examples/jenkins/pipeline/nodejs-sample-pipeline.yaml

Don't sweat the details for now; we'll cover them in the next blog post. For this article, we simply want to see what's possible and get the creative gears turning.

Want to see the pipeline? Use the following command:

oc get buildconfig/nodejs-sample-pipeline -o yaml
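The YAML wraps the pipeline script in a fair amount of metadata. To print only the Jenkinsfile, you can ask for that one field. This sketch assumes the sample keeps its Jenkinsfile inline in the build config (if a build config points to a Jenkinsfile in a Git repository instead, the output will be empty). The function name `show_jenkinsfile` is hypothetical.

```shell
# Hypothetical helper: print only the inline Jenkinsfile of a pipeline
# build config. Defaults to the sample pipeline created above.
# Assumes the Jenkinsfile is stored inline in the BuildConfig.
show_jenkinsfile() {
  oc get buildconfig/"${1:-nodejs-sample-pipeline}" \
    -o jsonpath='{.spec.strategy.jenkinsPipelineStrategy.jenkinsfile}'
}
```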

Note: This build example is using Node.js, and I did all the work using a Windows PC running PowerShell. Open source and platform independent: that's the beauty of all this. That is, the oc command works everywhere. Also, this example relies heavily on the OpenShift examples GitHub page.

Where are we?

At this point, we have a build config for a pipeline in Red Hat OpenShift. That's it. We haven't actually built anything yet; we just have the configuration of a pipeline. We can confirm this in two ways: by looking at the Builds section of the OpenShift dashboard, and by viewing the Jenkins dashboard. Neither one shows our pipeline, because we haven't started a build yet.

By the way, if you look in the BuildConfig section of the OpenShift dashboard, you'll see our build config sitting there like a rocket that's ready for liftoff.

Let's take it for a spin

Now the magic happens. At the command line, we can run a command to see the name of the BuildConfig we want to build:

oc get buildconfigs

We can start the build and then, for fun, switch over to the Jenkins dashboard to watch it run. Even better, while in Jenkins, select the "Open Blue Ocean" option on the menu (on the left side) to view things in a nice, modern interface instead of the, uh, "classic" Jenkins UI. You'll see the pipeline appear, from which you can follow the build. It may take a few seconds before it's available in Jenkins.

Use this command to kick off all the action:

oc start-build nodejs-sample-pipeline
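If you'd rather follow along from the terminal than from Blue Ocean, you can poll the build's phase. The sketch below is my own helper, not an oc feature. It assumes the first pipeline build is named `nodejs-sample-pipeline-1` (run `oc get builds` to check) and that the phases are reported as `Complete`, `Failed`, `Error`, or `Cancelled` when a build ends.

```shell
# Hypothetical helper: poll a build until it reaches a terminal phase.
# Usage: wait_for_build <build-name> [poll-interval-seconds]
# Returns 0 when the build completes, 1 if it fails, errors, or is cancelled.
wait_for_build() {
  build=$1
  interval=${2:-5}
  while :; do
    phase=$(oc get build "$build" -o jsonpath='{.status.phase}') || return 1
    echo "$build: $phase"
    case $phase in
      Complete) return 0 ;;
      Failed|Error|Cancelled) return 1 ;;
    esac
    sleep "$interval"
  done
}
```

For example: `wait_for_build nodejs-sample-pipeline-1 10` checks every ten seconds and stops once the build finishes one way or the other.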

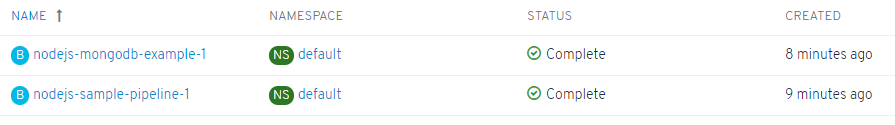

The pipeline was built and the build was started. If you look in OpenShift, under Builds, you'll see the two separate builds:

The results

Based on a combination of knowledge and a hunch, I bet there's a route for that "nodejs-mongodb-example" service. Running oc get routes will confirm:

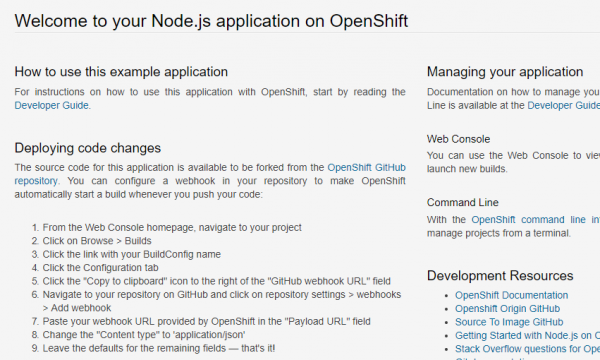

If I put that URL into a browser I should get:

Note: See that "Deploying code changes" section in the image? You might want to play around with that (hint, hint).
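The browser check can also be scripted. This sketch looks up the route's host and asks `curl` for the HTTP status code only. It assumes the route has the same name as the service ("nodejs-mongodb-example") and is served over plain HTTP; both are guesses on my part, so adjust them to match what `oc get routes` shows you. The function name `app_status` is hypothetical.

```shell
# Hypothetical helper: print the HTTP status code returned by the app's route.
# Assumes the route is named like the service and is exposed over plain HTTP.
app_status() {
  route=${1:-nodejs-mongodb-example}
  host=$(oc get route "$route" -o jsonpath='{.spec.host}') || return 1
  # -s: quiet, -o /dev/null: discard the page body, -w: print only the status
  curl -s -o /dev/null -w '%{http_code}\n' "http://$host"
}
```

A status of 200 means the application answered.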

We did it!

Well, OpenShift and Jenkins did it. But we now have Jenkins building and deploying our solution.

Next?

There's a lot more we can do. Tests? Manual deployments? Deployments to other environments (dev, testing, staging, and production)? Other programming languages? Additionally, this Jenkins environment doesn't have persistent storage, which is something we'll likely want in a real-world scenario. And we need to dive even deeper to see what's going on and get a real understanding so we can build our own solutions.

There's a lot more to come; watch this space for future articles.

Last updated: September 3, 2019