Beta testing is fundamentally all about the testing of a product performed by real users in a real environment. There are many tags we use to refer to the testing of similar characteristics, such as User Acceptance Testing (UAT), Customer Acceptance Testing (CAT), Customer Validation, and Field Testing (more popular in Europe). Whichever tag we use for these testing cases, the basic components are more or less the same. To discover and fix potential issues, this involves the user and front-end user interface (UI) testing, as well as the user experience (UX) related testing. This always happens in the iteration of the software development lifecycle (SDLC) where the idea has transformed into design and has passed the development phases, while the unit and integration testing has already been completed.

The beta stage in the product lifecycle management (PLM) is the ideal opportunity to hear from the target market and to plan for the road ahead.

As we zoom into this phase of testing it has a board spectrum of ranges. On one side is the Front-End or UI related testing involving UI functionality (Cosmetic, UI level Interaction and Visual look and feel). At the other end is the User Experience (UX), including user testing involving more from A/B (split) testing, hypothesis, user behavior tracking and analysis, heat maps, user flows and segment preference study or exploratory testing and feedback loops.

Beta testing relies on the popular belief that goodness will prevail, which defines the typical tools that carry out such tests. Examples are the shortening of beta cycles, reducing the time investment, increasing tester participation, improving the feedback loop and visibility, etc.

The Importance of Beta Testing

If we dive deeper into the factors behind the existence of tools from different angles, we find two major reasons that advocate the importance of beta testing:

1. Left-right brain analogy, which points to the overlap between humans and technology.

The typical belief is that the left-hand side of the brain mostly processes the logical thinking, while the right-hand side is more about the emotional thinking. Based on this popular analogy, when we map the different aspects involved in different stages of SDLC for a digital product across a straight line from left to right (refer to the diagram below), we will notice the logical and more human-centered aspects are divided by an imaginary line from the center. We will also notice the gradual progression of the emotional index for the components from left to right.

When we map these to the beta testing phase, we notice these right-hand components are predominant. As users of the products (like humans), we are more emotionally connected to such aspects of the product, which are validated or verified in a beta test. This makes beta testing one of the most important testing phases in any SDLC.

Another interesting point to note is that when we look from the traditional software approach to define "criticality", the areas that are tested during user acceptance testing (UAT / Beta), mostly fall into Class 3 and 4 type of criticality. But because these touch the core human aspects, they become more important. This Youtube video (Boy Receives Enchroma Glasses From Father) helps to illustrate the importance of technology touching the human emotions. It was posted by a popular brand of glasses to demonstrate how they can correct colorblindness in real time for the end user.

Interestingly, this is an aspect of "accessibility", which is typically covered during a beta test. Considering this video, the aspect of accessibility naturally raises the question about what we can do for this father and son as a tester, as a developer, or as a designer? And when we look at the stats, we find that the number of people the accessibility impacts is huge. One in every five people is challenged by some kind of disability. And unfortunately, some reports indicate that a majority of websites do not conform to the World Wide Web contortion's (W3C) accessibility guidelines (in 2011 almost 98% of websites failed the W3C's accessibility guideline).

This helps to demonstrate the human angle, which advocates why beta testing is important to ensure these aspects are validated and verified to ensure the target user needs are completely met.

2. A second reason for advocating the importance of beta testing is evaluating it from the international standards perspective (ISO/IEC 9126-4). This helps to define the difference between usability and quality.

The International Standards Organization (ISO) has been focused on the standards around quality versus usability over time. In 1998 ISO identified efficiency, effectiveness and satisfaction as major attributes of usability. In 1999 a quality model was proposed, involving an approach to measure quality in terms of software quality and external factors. In 2001 the ISO/IEC 9126-4 standard suggested that the difference between usability and the quality in use is a matter of context of use. ISO/IEC 9126-4 also distinguished external quality versus internal quality and defined related metrics. Metrics for external quality can be obtained only by executing the software product in the system environment for which the product is intended.

This shows that without usability/human computer interaction (HCI) in the right context, the

quality process is incomplete. The context referred to here is fundamental to a beta test where you have real users in a real environment, thereby making the case of the beta test stronger.

Beta Testing Challenges

Now that we know why beta testing is so very critical, let's explore the challenges that are involved with a beta stage.

Any time standards are included, including ISO/IEC 9126, most of these models are static and none of them accurately describe the relationship between phases in the product development cycle and appropriate usability measures at specific project milestones. Any standard also provides relatively few guidelines about how to interpret scores from specific usability metrics. And specific to usability as a quality factor, it is worth noting that usability is that aspect of quality where the metrics have to be interpreted.

When we look at popular beta-testing tools of today, the top beta testing tools leave the interpretation to the customer or to the end-user's discretion. This highlights our number one challenge in beta testing, which is how to filter out pure perception from the actual and valid issues and concerns brought forward.

As most of the issues are related to user testing (split testing and front-end testing), there is no optimized single window solution that is smart enough to handle this in an effective manner. Real users in the real environment are handicapped to comprehend all the aspects of beta testing and properly react. It’s all a matter of perspective and all of them cannot be validated with real data from benchmarks or standards.

The World Quality Report in 2015-16 Edition indicated that expectations from Beta testing is changing dramatically. It hinted that the customers are looking for more product insights through a reliable way to test quality and functionality, along with the regular usability and user testing in real customer facing environment.

It's not only the Beta Testing, but more user-demand is also impacted by the rising complexities and challenges due to accelerated changes in the technology, development, and delivery mechanisms. The 2017-18 World Quality Report states that the test environment and test data continue to be an achilles heel for QA and Testing. The challenges with testing in agile development are also increasing. There is now a demand for automation, mobility, and ubiquity, along with smartness to be implemented in the software quality testing. Many believe that the analytics-based automation solutions would be the first step in transforming to smarter QA and smarter test automation. While this true for overall QA and testing, this is also true for Beta Testing, which deals with the functional aspect of the product.

Let's see where we stand today. If we explore popular beta testing solutions, we will get a big vacuum in the area where we try to benchmark the user's need for more functional aspects, along with the usability and user testing aspects. Also, you can notice in the diagram below that there is ample space to play around with the smart testing scenario with the use of cognitive, automation, and machine learning.

(Note: Above figure shows my subjective analysis of the competitive scenario.)

Basically, we lack "smartness" and proper "automation" in Beta Testing Solutions.

Apart from all these, there are some more challenges that we can notice if we start evaluating the user needs from the corresponding persona's viewpoint. For example, even when assuming that the functional aspect is to be validated, the end-user or the customer may have an inability to recognize it. The product owner, customer, or end-user are part of the user segment who may not be aware of the nuts and bolts of the technology involved in the development of the product they are testing to sign it off. It's like the classic example of a customer who is about to buy a second-hand car and inspects the vehicle before making the payment. In most of the cases, he is paying the money without being able to recognize "What's inside the bonnet!". This is the ultimate use-case that advocates to "empower the customer".

So, how do we empower the end user or the customer? The tools should support that in a way so that the user can have his own peace of mind while validating the product. Unfortunately, many small tools which try to solve some of those little issues to empower the user are scattered (example: Google Chrome extension that helps to analyze CSS and creates the report or an on-screen ruler that the user can use to check alignment, etc.). The ground reality is that there is no single-window extension/widgets based solution available. Also, not all widgets are connected. And those which are available, not all are comprehensible to the customer/end-user as almost all of them are either developer or tester centric. They are not meant for the customer without any special skills!

When we look at the automation solutions in testing as part of much Continous Integration (CI) and Continuous Delivery (CD), they are engaged and effective in mostly "pre-beta" stage of SDLC. Also, they require specific skills to run them. With the focus on DevOps, in many cases, the CI-CD solutions are getting developed and integrated with the new age solutions looking at the rising complexities of technology stacks, languages, frameworks, etc.. But most of them are for the skilled dev or test specialists to use and execute them. And this is something that does not translate well when it comes to Beta testing where the end-user or the customer or the "real user in real environment" are involved.

Assuming we can have all these automation features enabled in BETA, it still points to another limitation in the existing solutions. It's because the employment of automation brings in its own challenge of "information explosion", where the end user needs to deal with the higher volume of data from automation. With so much information, the user will struggle to get a consolidated and meaningful insight of the specific product context. So what do we need to solve these challenges? Here is my view -- we need smart, connected, single window beta testing solutions with automation that can be comprehensible to the end-users in a real environment without the help of the geeks.

For sometime since a last few years, I have been exploring these aspects for the ideal beta testing solution and was working on the model and a proof of concept called "Beta Studio", representing the ideal beta testing solution, which should have all these -- Beta-Testing that utilizes data from all stages of SDLC and PLM along with the standards + specs and user testing data to provide more meaningful insights to the customer. Test real applications in real environments by the real users. Customer as well as end-user centric. Test soft aspects of the application -- Usability, Accessibility, Cosmetic, etc.. Be smart enough to compare and analyze these soft aspects of the application against functional testing data.

Use machine-learning & cognitive to make the more meaningful recommendation and not just dump info about bugs and potential issues. Here is an indicative vision of Beta Studio:

Mostly this vision of the ideal beta testing solution touches upon all the aspects we just discussed. It also touches upon all the interaction points of the different personas; e.g. customer, end-user, developer, tester, product owner, project manager, support engineer, etc.. It should cover the entire Product Life Cycle and utilize automation along with the machine learning components such as Computer Vision (CV) and Natural Language Processing (NLP). Then gathering this information to be processed by the cognitive aspect to generate the desired insights about the product and recommendations. During this process, the system will involve data from standards and specs along with the design benchmark generated from the inputs at the design phase of the SDLC, so that meaningful insights can be generated.

In order to see this vision translated into reality, what do we need? The following diagram hints about the six steps we need to take.

- First we should create the design benchmark from the information at the design stage that can be used in comparing the product features against metrics based on this design benchmark.

- Then automate and perform manual tracking of the product during runtime in real time and then categorize and collate this data.

- This involves creating features to support the user feedback cycle and user testing aspects (exploratory, split testing capabilities).

- Collect all standards and specifications on different aspects -- e.g. Web Content Accessibility Guideline (WCAG) Section 508, Web Accessibility Initiative Specs ARIA, Design Principles, W3C Compliance, JS Standards, CSS Standards & Grids, Code Optimization Metrics Error codes & Specs, Device Specific Guidelines (e.g. Apple Human Interface Guideline) etc.

- Build the critical components such as Computer Vision and Natural Language Processing units which would process all the data collected in all these stages and generate the desired metrics and inferences.

- Build the unit to generate the model to map the data and compare against the metrics.

- The final step is to build the cognitive unit that can compare the data and apply the correct models and metrics to carry out the filtering of the data and generate the insights which can be shared as actionable output to the end-user/customer.

While experimenting for BetaStudio, I have explored different aspects and built some bare bone POCs. For example, Specstra is a component that can help create Design benchmark from design files.

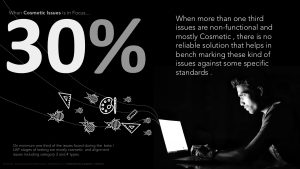

With Specstra I was trying to address the issues related to the cosmetic aspect or visual look and feel. Typically when it comes to cosmetic issues, more than 30% are non-functional and mostly cosmetic. There is no reliable solution that helps in benchmarking these kind of issues against specific standards. One third of the issues found during the beta / UAT stages of testing are mostly cosmetic and alignment issues, including category 3 and 4 types. And these happen mostly because the two persona's involved (developer and designer) have their own boundaries defined by a mythical fine line.

When a developer is in focus, roughly 45% of them are not aware of all the design principles employed or the heuristics of UX to be employed. Similarly, half of the designers are not aware of more than half of the evolving technological solutions about design.

And in 70% of the of the projects, we do not get detailed design specs to benchmark with. Detailing out a spec for design comes with a cost and required skills. In more than two-thirds of the cases of development there is the absence of detailed design with specs. Many of the designs are not standardized and most of them do not have clear and detailed specs. Design is carried out by different tools so it is not always easy to have a centralized place where all the designs info is available for benchmarking.

Specstra comes in handy to solve this. It is an Automation POC that is more like a cloud-based Visual Design Style Guide Generator from the third party design source files. This is a case where the user would like to continue using his existing Design tools like Photoshop/Sketch or Illustrator, PDF etc..

You can view the video of the demo here:

View the demo of the BetaStudio POC here:

https://www.youtube.com/embed/kItqD5wc4_4

I understand that reaching the goal of an ideal beta testing solution might require effort and will likely evolve over time. Rest assured, the journey has started for all of us to connect and explore how to make it a reality.

To explore the open-source project of BetaStudio POC, follow the link here: https://github.com/betaStudio-online.

Take advantage of your Red Hat Developers membership and download RHEL today at no cost.

Last updated: February 5, 2024