With the growing number of APIs and microservices, the time given to creating and integrating them has become shorter and shorter. That's why we need an integration framework with tooling to quickly build an API and include capabilities for a full API life cycle. Camel K lets you build and deploy your API on Kubernetes or Red Hat OpenShift in less than a second. Unbelievable, isn't it?

For those who are not familiar with it, Camel K is a subproject of Apache Camel that aims to provide a lightweight runtime for running integration code directly on cloud platforms like Kubernetes and Red Hat OpenShift. It was inspired by serverless principles, and it will also target Knative shortly. The article by Nicola Ferraro will give you a good introduction.

In this article, I'll show how to build an API with Camel K. We will start by designing our API in Apicurio Studio, which is based on the OpenAPI standard. We will then pass the resulting OpenAPI document to Camel K in order to implement the API and deploy it to Red Hat OpenShift.

Design your API in Apicurio

Apicurio is a web-based open source tool for designing APIs based on the OpenAPI specification. Go to https://www.apicur.io/ and start by clicking Create New API.

Essentially, you need to:

- Create the API

- Create the data definitions

- Add Paths, and define parameters, operations, and return responses to the path

For each operation (GET, POST), you should configure the Operation ID. When Camel K builds the REST route, it uses this field to forward the request to a direct endpoint with the same name as the Operation ID. That means your integration routes should start from a direct endpoint (like direct://getCustomer or direct://createCustomer) so that you can apply the integration patterns and process those REST requests.
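To make the naming rule concrete, here is a minimal sketch of what such routes could look like in Camel's XML DSL. It assumes two Operation IDs, getCustomer and createCustomer, as in the endpoint names above. The route bodies (a constant JSON payload and a log step) are placeholders invented for illustration and are not taken from the actual customer-api.xml.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<!-- Illustrative sketch only: each route starts from the direct endpoint
     whose name matches an Operation ID in the OpenAPI document. -->
<routes xmlns="http://camel.apache.org/schema/spring">

  <!-- Receives requests for the operation with Operation ID "getCustomer" -->
  <route id="getCustomerRoute">
    <from uri="direct:getCustomer"/>
    <!-- Placeholder response; a real route would look the customer up -->
    <setBody>
      <constant>{"id": 1, "name": "Example Customer"}</constant>
    </setBody>
  </route>

  <!-- Receives requests for the operation with Operation ID "createCustomer" -->
  <route id="createCustomerRoute">
    <from uri="direct:createCustomer"/>
    <!-- Placeholder processing; a real route would persist the payload -->
    <log message="Received new customer: ${body}"/>
  </route>

</routes>
```

If an Operation ID in the API design is renamed, the matching direct endpoint has to be renamed as well, since the name is the only link between the two.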

Once you have finished designing your API, download it as a JSON file based on the OpenAPI v2 specification. Here's an example of the OpenAPI specification of a CustomerAPI.

Build your API using Camel K

To start using Camel K, you need the kamel binary, which can be used both to configure the cluster and to run integrations. Refer to the release page for the latest version of the kamel tool.

Once you have the kamel binary, log into your cluster using the standard oc (OpenShift) or kubectl (Kubernetes) client tool and execute the following command to install Camel K:

kamel install

At that point, you just have to develop your integration routes in one of the languages supported by Camel K. In my example, CamelK-customerAPI, I use plain XML: customer-api.xml.

You are now ready to build and deploy your API:

git clone https://github.com/abouchama/CamelK-customerAPI.git

cd CamelK-customerAPI

kamel run --dev --name customers --dependency camel-undertow --property camel.rest.port=8080 --open-api customer-api.json customer-api.xml

You can check the status of your integration by running the following command:

oc get it

NAME        PHASE     CONTEXT
customers   Running   ctx-biq59ca78n55k4851cjg

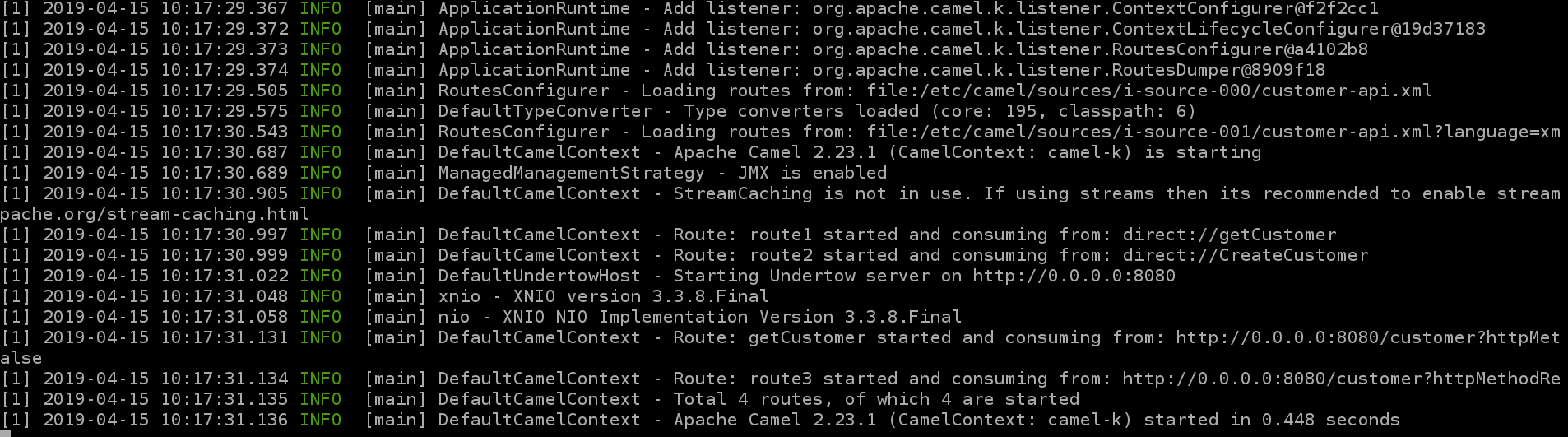

Logs show:

Congratulations! Your first API was built and deployed on Red Hat OpenShift in less than one second, as you can see from the last line of the log.

Tips

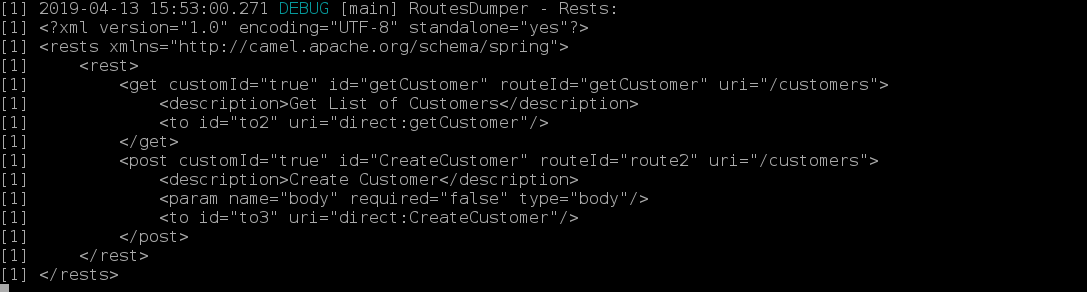

By setting the logging level to DEBUG, you can see the REST routes that were converted from the OpenAPI specification passed with the --open-api parameter:

kamel run --dev --name customers --dependency camel-undertow --property camel.rest.port=8080 --open-api customer-api.json --logging-level org.apache.camel.k=DEBUG customer-api.xml
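For orientation, a REST definition converted from an OpenAPI document has roughly the following shape in Camel's XML REST DSL. This is a hand-written sketch, not captured log output. The /customers paths are hypothetical, and the exact definition you see depends on your own OpenAPI document and Camel K version.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<!-- Illustrative sketch only: every operation becomes a REST verb that
     forwards to the direct endpoint named after its Operation ID. -->
<rests xmlns="http://camel.apache.org/schema/spring">
  <rest>
    <!-- Hypothetical path; Operation ID assumed to be "getCustomer" -->
    <get uri="/customers/{id}" id="getCustomer">
      <to uri="direct:getCustomer"/>
    </get>
    <!-- Hypothetical path; Operation ID assumed to be "createCustomer" -->
    <post uri="/customers" id="createCustomer">
      <to uri="direct:createCustomer"/>
    </post>
  </rest>
</rests>
```

Comparing the DEBUG output against a shape like this is a quick way to confirm that each operation points at the direct endpoint you expect.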

To monitor your Camel K routes with Hawtio, enable the jolokia trait (-t jolokia.enabled=true), and the Open Java Console button will appear. For monitoring with Prometheus, enable the prometheus trait (-t prometheus.enabled=true):

kamel run --dev --name customers --dependency camel-undertow --property camel.rest.port=8080 -t jolokia.enabled=true -t prometheus.enabled=true -t prometheus.service-monitor=false --open-api customer-api.json --logging-level org.apache.camel.k=DEBUG customer-api.xml

Thanks for reading, and I hope that you enjoyed this article.

The video below walks through what I've covered in this article: