Kyle Sayers

Kyle Sayers's contributions

Article

LLM Compressor v0.10: Faster compression with distributed GPTQ

Kyle Sayers

+2

LLM Compressor v0.10 introduces Distributed Data Parallel (DDP) for faster compression, memory management, and advanced quantization formats. Make model compression workflows more efficient for large language models.

Article

Accelerating large language models with NVFP4 quantization

Shubhra Pandit

+3

Learn about NVFP4, a 4-bit floating-point format for high-performance inference on modern GPUs that can deliver near-baseline accuracy at large scale.

Article

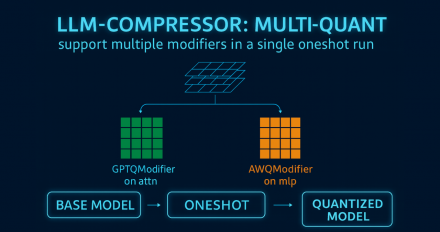

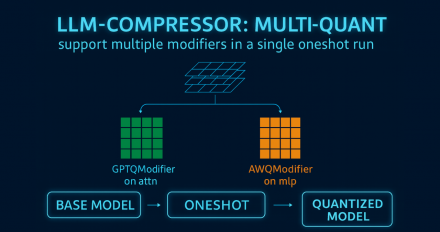

LLM Compressor 0.9.0: Attention quantization, MXFP4 support, and more

Kyle Sayers

+3

Explore the latest release of LLM Compressor, featuring attention quantization, MXFP4 support, AutoRound quantization modifier, and more.

Article

Run Mistral Large 3 & Ministral 3 on vLLM with Red Hat AI on Day 0: A step-by-step guide

Saša Zelenović

+6

Run the latest Mistral Large 3 and Ministral 3 models on vLLM with Red Hat AI, providing day 0 access for immediate experimentation and deployment.

Article

LLM Compressor 0.8.0: Extended support for Qwen3 and more

Dipika Sikka

+2

The LLM Compressor 0.8.0 release introduces quantization workflow enhancements, extended support for Qwen3 models, and improved accuracy recovery.

Article

LLM Compressor 0.7.0 release recap

Dipika Sikka

+3

LLM Compressor 0.7.0 brings Hadamard transforms for better accuracy, mixed-precision FP4/FP8, and calibration-free block quantization for efficient compression.

Article

LLM Compressor: Optimize LLMs for low-latency deployments

Kyle Sayers

+3

LLM Compressor bridges the gap between model training and efficient deployment via quantization and sparsity, enabling cost-effective, low-latency inference.

Article

Multimodal model quantization support through LLM Compressor

Kyle Sayers

+3

Explore multimodal model quantization in LLM Compressor, a unified library for optimizing models for deployment with vLLM.
