In Scaling Earth and space AI models with Red Hat AI Inference Server and Red Hat OpenShift AI, we showed the performance benefits of serving inference for the Prithvi-EO model with Red Hat AI Inference Server. We demonstrated this using both a standalone setup and a combination of KServe and Knative. Here, we will dive deeper and show how to set up and test both cases. If you are feeling adventurous, you can also try using your own Earth and space model instead of Prithvi.

Let’s dive in!

Before you start

This article includes two self-contained activities. In the first part, we deploy Prithvi using a traditional Deployment object. In the second part, we serve the model using KServe and run a benchmark test to observe how Knative scales serving replicas as traffic increases. To follow along, be sure to have a suitable environment meeting the following requirements.

Prerequisites:

- A Red Hat OpenShift cluster with at least one NVIDIA GPU

- Red Hat OpenShift AI 2.25 or later

Note: We run the service using an NVIDIA A100 80 GB GPU hosted on a bare metal OpenShift cluster.

How to serve Prithvi with Red Hat AI Inference Server

The following steps describe how to bring up a vLLM instance serving a Prithvi 2.0 model for flood detection using Red Hat AI Inference Server on OpenShift. They assume you are logged into OpenShift in a namespace where you can request GPUs.

Step 1: Create a Red Hat AI Inference Server deployment serving the Prithvi model

First, create a Deployment and Service YAML description to serve the model using Red Hat AI Inference Server. For example:

apiVersion: apps/v1
kind: Deployment
metadata:
  name: rhaiis-prithvi
  labels:
    app: rhaiis-prithvi
spec:
  replicas: 1
  selector:
    matchLabels:
      app: rhaiis-prithvi
  template:
    metadata:
      labels:
        app: rhaiis-prithvi
    spec:
      volumes:
        - name: shm
          emptyDir:
            medium: Memory
            sizeLimit: "2Gi"
      containers:
        - name: rhaiis-prithvi
          image: registry.redhat.io/rhaiis/vllm-cuda-rhel9:3
          command: ["vllm"]
          args: ["serve",
                 "ibm-nasa-geospatial/Prithvi-EO-2.0-300M-TL-Sen1Floods11",
                 "--enforce-eager",
                 "--skip-tokenizer-init",
                 "--enable-mm-embeds",
                 "--io-processor-plugin",
                 "terratorch_segmentation"]
          env:
            - name: HF_HUB_OFFLINE
              value: "0"
          ports:
            - containerPort: 8000
          resources:
            limits:
              cpu: "10"
              memory: 20G
              nvidia.com/gpu: "1"
            requests:
              cpu: "2"
              memory: 6G
              nvidia.com/gpu: "1"
          volumeMounts:
            - name: shm
              mountPath: /dev/shm
          livenessProbe:
            httpGet:
              path: /health
              port: 8000
            initialDelaySeconds: 120
            periodSeconds: 10
          readinessProbe:
            httpGet:
              path: /health
              port: 8000
            initialDelaySeconds: 120
            periodSeconds: 5
---
apiVersion: v1
kind: Service
metadata:
  name: rhaiis-prithvi
spec:
  ports:
    - name: rhaiis-prithvi
      port: 8000
      protocol: TCP
      targetPort: 8000
  selector:
    app: rhaiis-prithvi
  sessionAffinity: None
  type: ClusterIP

Save the YAML to a file named rhaiis_prithvi.yaml. Run the following command to create the deployment in your current OpenShift namespace:

oc create -f rhaiis_prithvi.yaml

Once the rhaiis-prithvi pod becomes Ready (this can take several minutes, depending on network speed), you can send inference requests to the model through the service or a port-forward. Start a port-forward for rhaiis-prithvi using the following command. Note that the port-forward command does not return control of the terminal, so open a new terminal to complete the next step.

oc port-forward svc/rhaiis-prithvi 8000:8000

Step 2: Send an inference request to the Prithvi model
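Before sending requests, it can help to confirm that the forwarded port answers on the /health path that the Deployment probes use. The following is a minimal Python sketch and not part of the original setup; the function name, timeout, and polling interval are illustrative assumptions.

```python
import time
import urllib.error
import urllib.request


def wait_until_healthy(base_url, timeout_s=600.0, interval_s=5.0):
    """Poll <base_url>/health until it returns HTTP 200 or the timeout expires.

    Returns True when the server answered with 200, False on timeout.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        try:
            with urllib.request.urlopen(f"{base_url}/health", timeout=5) as resp:
                if resp.status == 200:
                    return True
        except (urllib.error.URLError, OSError):
            # Server not reachable or not ready yet; retry until the deadline.
            pass
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval_s)


if __name__ == "__main__":
    # Assumes the port-forward from Step 1 is running in another terminal.
    print(wait_until_healthy("http://localhost:8000"))
```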

Before sending a request to the service, we need to describe the request payload. The following example payload is for an inference request that specifies the input image using a URL and requests the output as a base64-encoded image. Save the JSON payload to a file named payload.json.

{
  "data": {
    "data": "https://huggingface.co/ibm-nasa-geospatial/Prithvi-EO-2.0-300M-TL-Sen1Floods11/resolve/main/examples/India_900498_S2Hand.tif",
    "indices": [1, 2, 3, 8, 11, 12],
    "data_format": "url",
    "out_data_format": "b64_json",
    "image_format": "tiff"
  },
  "model": "ibm-nasa-geospatial/Prithvi-EO-2.0-300M-TL-Sen1Floods11"
}

The image can be specified either as a path to a TIFF file (on a file system that the server can access) or as a URL pointing to one. You can also specify whether the service saves the output image to a path on the server's file system or returns it base64-encoded in the response. For a full description of input and output options, see the TerraTorch project documentation.
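If you want to generate the payload for other input images, the request body can be built programmatically. The following Python sketch reproduces the URL-input, base64-output payload shown above; the helper names are hypothetical, and only the field values that appear in this article are used.

```python
import json

MODEL = "ibm-nasa-geospatial/Prithvi-EO-2.0-300M-TL-Sen1Floods11"


def build_payload(image_url, indices=(1, 2, 3, 8, 11, 12)):
    """Return a request body that reads a TIFF from a URL and asks for base64 output."""
    return {
        "data": {
            "data": image_url,
            "indices": list(indices),
            "data_format": "url",
            "out_data_format": "b64_json",
            "image_format": "tiff",
        },
        "model": MODEL,
    }


def write_payload(path, image_url):
    """Write the payload to a JSON file such as payload.json."""
    with open(path, "w", encoding="utf-8") as fh:
        json.dump(build_payload(image_url), fh, indent=2)


if __name__ == "__main__":
    write_payload(
        "payload.json",
        "https://huggingface.co/ibm-nasa-geospatial/"
        "Prithvi-EO-2.0-300M-TL-Sen1Floods11/resolve/main/examples/"
        "India_900498_S2Hand.tif",
    )
```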

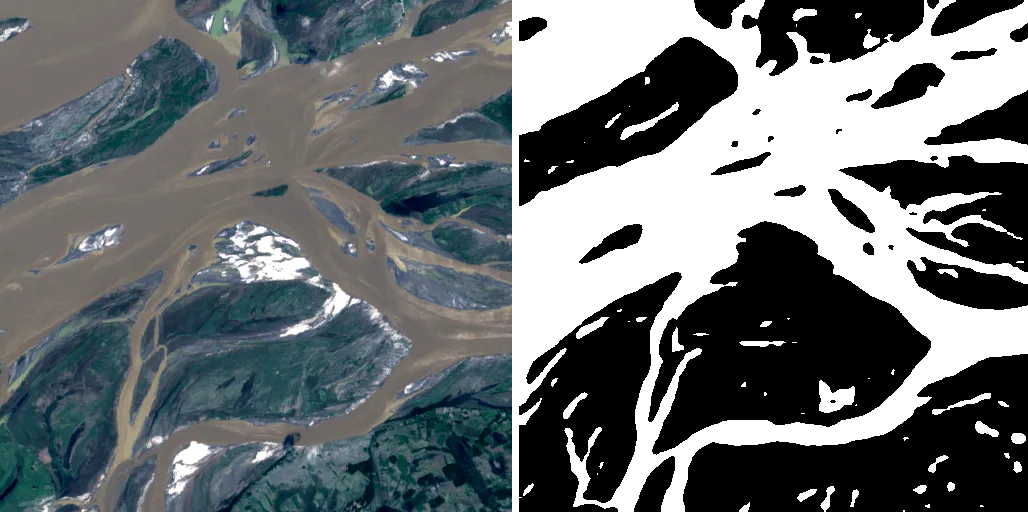

From a different terminal window, run the following command to send the inference request to vLLM. Ensure you run the command from the directory where you saved the payload. The command decodes the output into a TIFF image and saves it as a mask.tiff file. Figure 1 shows the input image URL from the payload (left) and the mask Prithvi produced (right).

curl -s -H "Content-Type: application/json" \
  --data @payload.json \
  http://localhost:8000/pooling \
  | jq -r '.data.data' \
  | base64 --decode \
  > mask.tiff
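The same decoding step can be done in Python, with a basic sanity check that the decoded bytes look like a TIFF file. This is a sketch under the assumption, taken from the jq filter above, that the base64 image sits at data.data in the JSON response; the function names and the response.json file name are hypothetical.

```python
import base64
import json

# Classic TIFF files start with one of these byte-order headers.
TIFF_MAGIC = (b"II*\x00", b"MM\x00*")


def decode_mask(response):
    """Decode the base64 image stored at response['data']['data'] into bytes."""
    raw = base64.b64decode(response["data"]["data"])
    if not raw.startswith(TIFF_MAGIC):
        raise ValueError("decoded payload does not start with a TIFF header")
    return raw


def save_mask(response_path, out_path="mask.tiff"):
    """Read a saved JSON response and write the decoded mask to out_path."""
    with open(response_path, encoding="utf-8") as fh:
        response = json.load(fh)
    with open(out_path, "wb") as out:
        out.write(decode_mask(response))
```

To use it, save the raw curl output to a file (for example, response.json) instead of piping it through jq, then call save_mask("response.json").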

Benchmark the service

The vLLM benchmarking tool, vllm bench, tests geospatial models by varying parameters such as request‑rate distribution and client‑side concurrency. Here we recommend installing vLLM from source, as this process is supported for all major architectures. However, check if a PyPI package is available for your specific architecture. To install vllm bench, you must specify the extra benchmarking dependencies by adding bench. For example, run the following command when installing from source:

uv pip install -e ".[bench]"

After the build process finishes, download the dataset_url_input_india.jsonl file from the repository:

curl http://mgazz.github.io/dataset_url_input_india.jsonl \
  --output dataset_url_input_india.jsonl

Then, run the following command from the repository's top-level directory (substitute the value of --base-url as appropriate):

vllm bench serve \
  --base-url http://localhost:8000 \
  --dataset-name=custom \
  --model ibm-nasa-geospatial/Prithvi-EO-2.0-300M-TL-Sen1Floods11 \
  --skip-tokenizer-init \
  --endpoint /pooling \
  --backend vllm-pooling \
  --percentile-metrics e2el \
  --metric-percentiles 25,75,99 \
  --num-prompts 10 \
  --dataset-path ./dataset_url_input_india.jsonl

How to create a scalable geospatial inference service

This section describes how to deploy the Prithvi-EO-2.0-300M-TL-Sen1Floods11 model using OpenShift AI. This installation uses vLLM as the serving engine, KServe as the inference platform, and Knative as the inference autoscaler. Combining these three technologies simplifies deployment and dynamically scales inference servers based on request load.

These instructions assume you are logged into an OpenShift cluster with GPUs that has OpenShift AI installed.

Step 1: Create the vLLM ServingRuntime and InferenceService

The setup uses a custom ServingRuntime backed by a Red Hat AI Inference Server container and a serverless InferenceService with autoscaling based on request concurrency. First, create a YAML description of the KServe objects and a PersistentVolumeClaim (PVC) to deploy Red Hat AI Inference Server.

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: shared-pvc
spec:
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 10Gi
---
apiVersion: serving.kserve.io/v1alpha1
kind: ServingRuntime
metadata:
  labels:
    app: rhaiis-prithvi-300m
  name: rhaiis-prithvi-300m
spec:
  containers:
    - args:
        - serve
        - ibm-nasa-geospatial/Prithvi-EO-2.0-300M-TL-Sen1Floods11
        - --skip-tokenizer-init
        - --enforce-eager
        - --io-processor-plugin
        - terratorch_segmentation
        - --enable-mm-embeds
        - --runner
        - pooling
      command:
        - vllm
      env:
        - name: VLLM_LOGGING_LEVEL
          value: INFO
        - name: HF_HOME
          value: /tmp
        - name: HF_HUB_CACHE
          value: /cache
        - name: HOME
          value: /tmp
      image: registry.redhat.io/rhaiis/vllm-cuda-rhel9:3
      readinessProbe:
        httpGet:
          path: /health
          port: 8000
        periodSeconds: 2
      imagePullPolicy: Always
      name: kserve-container
      ports:
        - containerPort: 8000
          protocol: TCP
      resources:
        limits:
          cpu: "64"
          memory: 64G
          nvidia.com/gpu: "1"
        requests:
          cpu: "32"
          memory: 64G
          nvidia.com/gpu: "1"
      securityContext:
        capabilities:
          drop:
            - MKNOD
      volumeMounts:
        - mountPath: /cache
          name: tests-cache
  imagePullSecrets:
    - name: cp-icr-pull-secret
  multiModel: false
  supportedModelFormats:
    - autoSelect: true
      name: vLLM
  volumes:
    - name: tests-cache
      persistentVolumeClaim:
        claimName: shared-pvc
---
apiVersion: serving.kserve.io/v1beta1
kind: InferenceService
metadata:
  annotations:
    serving.knative.openshift.io/enablePassthrough: "true"
    serving.kserve.io/deploymentMode: Serverless
    sidecar.istio.io/inject: "true"
    sidecar.istio.io/rewriteAppHTTPProbers: "true"
    prometheus.io/scrape: "true"
    prometheus.io/path: "/metrics"
    prometheus.io/port: "8000"
    autoscaling.knative.dev/metric: concurrency
    autoscaling.knative.dev/target: "13"
    autoscaling.knative.dev/window: "60s"
    autoscaling.knative.dev/panic-threshold-percentage: "150"
    sidecar.istio.io/proxyCPU: "2"
    sidecar.istio.io/proxyCPULimit: "4"
    sidecar.istio.io/proxyMemory: "4Gi"
    sidecar.istio.io/proxyMemoryLimit: "4Gi"
  name: rhaiis-prithvi-300m
spec:
  predictor:
    affinity:
      podAntiAffinity:
        requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchExpressions:
                - key: app
                  operator: In
                  values:
                    - rhaiis-prithvi-300m
            topologyKey: kubernetes.io/hostname
    nodeSelector:
      nvidia.com/gpu.product: NVIDIA-A100-80GB-PCIe
    containerConcurrency: 14
    maxReplicas: 3
    minReplicas: 1
    model:
      modelFormat:
        name: vLLM
      runtime: rhaiis-prithvi-300m
      name: ""

Save the YAML content to a file named kserve_prithvi.yaml. To create the deployment in your current OpenShift namespace, run the following command:

oc create -f kserve_prithvi.yaml

Step 2: Verify that the service is up and running

Inspect the InferenceService object and verify that it is in a Ready state:

oc get isvc rhaiis-prithvi-300m

Fetch the URL for the Red Hat AI Inference Server service:

RHAIIS=$(oc get isvc rhaiis-prithvi-300m \
  -o jsonpath='{.status.url}{"\n"}')

From a different terminal window, run the following command to send an inference request to vLLM through KServe. You can use the same payload described earlier in the section Step 2: Send an inference request to the Prithvi model.

curl -s -H "Content-Type: application/json" \
  --data @payload.json \
  "${RHAIIS}/pooling" \
  | jq -r '.data.data' \
  | base64 --decode \
  > mask.tiff

Handling ingress bandwidth limits

When running these tests, the throughput reported by each InferenceService replica might be lower than expected. This is often caused by network bandwidth saturation while the replicas download input images from the Hugging Face repository.

To remove this bottleneck, deploy a local image server to serve the TIFF files from inside the cluster. The following example uses a simple BusyBox container running httpd to serve the image listed in the dataset_url_input_india.jsonl dataset file.

kind: Deployment
apiVersion: apps/v1
metadata:
  name: image-server
spec:
  replicas: 3
  selector:
    matchLabels:
      app: image-server
  template:
    metadata:
      labels:
        app: image-server
    spec:
      containers:
        - command:
            - sh
            - -c
            - |
              wget https://huggingface.co/christian-pinto/Prithvi-EO-2.0-300M-TL-VLLM/resolve/main/India_900498_S2Hand.tif -O /tmp/India_900498_S2Hand.tif
              httpd -f -p 8080 -h /tmp
          image: busybox:latest
          imagePullPolicy: Always
          name: http
---
kind: Service
apiVersion: v1
metadata:
  name: image-server
spec:
  ports:
    - name: http
      protocol: TCP
      port: 80
      targetPort: 8080
  type: ClusterIP
  selector:
    app: image-server

After deploying the local image server, update your dataset JSONL file so each input request points to the in‑cluster URL. This ensures the benchmark runs entirely within the cluster.

http://image-server/India_900498_S2Hand.tif

Test the service
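Before generating load, the dataset update described in the previous section can be scripted. The following Python sketch treats each JSONL line as plain text and replaces any http(s) URL ending in India_900498_S2Hand.tif with the in-cluster URL, so it makes no assumption about the field names used in the dataset file. The function names and the output file name are hypothetical.

```python
import re

IN_CLUSTER_URL = "http://image-server/India_900498_S2Hand.tif"

# Matches any http(s) URL that ends with the example tile's file name.
_REMOTE_URL = re.compile(r"https?://[^\s\"']+/India_900498_S2Hand\.tif")


def localize_line(line):
    """Point every matching image URL in one JSONL line at the in-cluster server."""
    return _REMOTE_URL.sub(IN_CLUSTER_URL, line)


def localize_dataset(src_path, dst_path):
    """Copy a JSONL dataset, rewriting image URLs line by line."""
    with open(src_path, encoding="utf-8") as src, \
            open(dst_path, "w", encoding="utf-8") as dst:
        for line in src:
            dst.write(localize_line(line))


if __name__ == "__main__":
    localize_dataset("dataset_url_input_india.jsonl",
                     "dataset_url_input_india_local.jsonl")
```

If you write the result to a new file as shown, pass that file to --dataset-path when running the benchmark.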

To measure dynamic autoscaling performance, run two instances of vllm bench to generate the traffic load. The first instance simulates background traffic at 13 requests per second (RPS). Start the second instance two minutes later to increase traffic with a burst at 26 RPS.
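The two-phase load pattern can also be driven from a small script. The following Python sketch builds the vllm bench argument list for a given load, using the same flags as the benchmarking command in this section, and starts the burst instance after a two-minute delay. The function names are hypothetical, and the script assumes vllm is on the PATH and that the RHAIIS environment variable holds the service URL.

```python
import os
import subprocess
import time

MODEL = "ibm-nasa-geospatial/Prithvi-EO-2.0-300M-TL-Sen1Floods11"


def bench_command(base_url, traffic_load,
                  dataset="./dataset_url_input_india.jsonl"):
    """Return the vllm bench argument list for one traffic level."""
    load = str(traffic_load)
    return [
        "vllm", "bench", "serve",
        "--base-url", base_url,
        "--dataset-name=custom",
        "--model", MODEL,
        "--seed", "12345",
        "--skip-tokenizer-init",
        "--endpoint", "/pooling",
        "--backend", "vllm-pooling",
        "--metric-percentiles", "25,75,99",
        "--percentile-metrics", "e2el",
        "--dataset-path", dataset,
        "--num-prompts", "500",
        "--request-rate", load,
        "--max-concurrency", load,
        "--burstiness", "5",
    ]


def run_two_phase(base_url, background=13, burst=26, delay_s=120):
    """Start background traffic, wait, then add burst traffic."""
    first = subprocess.Popen(bench_command(base_url, background))
    time.sleep(delay_s)
    second = subprocess.Popen(bench_command(base_url, burst))
    # Return the exit codes of both benchmark processes.
    return first.wait(), second.wait()


if __name__ == "__main__":
    print(run_two_phase(os.environ["RHAIIS"]))
```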

Both instances run the same benchmarking command. Change the value of the TRAFFIC_LOAD environment variable to 13 for background traffic and 26 for burst traffic.

vllm bench serve \
  --base-url "${RHAIIS}" \
  --dataset-name=custom \
  --model ibm-nasa-geospatial/Prithvi-EO-2.0-300M-TL-Sen1Floods11 \
  --seed 12345 \
  --skip-tokenizer-init \
  --endpoint /pooling \
  --backend vllm-pooling \
  --metric-percentiles 25,75,99 \
  --percentile-metrics e2el \
  --dataset-path ./dataset_url_input_india.jsonl \
  --num-prompts 500 \
  --request-rate ${TRAFFIC_LOAD} \
  --max-concurrency ${TRAFFIC_LOAD} \
  --burstiness 5

In a separate terminal, run the following command to watch for changes in the replicas associated with the rhaiis-prithvi-300m InferenceService:

watch oc get pods -l app=rhaiis-prithvi-300m-predictor-00001

When Knative detects a traffic burst that exceeds the InferenceService concurrency constraints, it scales the replicas to handle the benchmark traffic. You can verify the scale-out event by checking the pod status:

NAME                                                READY   STATUS    RESTARTS   AGE
rhaiis-prithvi-300m-predictor-00001-deployment-55   2/2     Running   0          45s
rhaiis-prithvi-300m-predictor-00001-deployment-82   2/2     Running   0          30s
rhaiis-prithvi-300m-predictor-00001-deployment-99   2/2     Running   0          30s

Experimenting with configuration settings

The main parameter for handling bursty traffic is the panic threshold, which you set with the autoscaling.knative.dev/panic-threshold-percentage annotation. In this example, the configuration scales out replicas when the number of in-flight requests (concurrency) to a server exceeds 150% of the target value. We set this target to 13 using the autoscaling.knative.dev/target annotation, based on our evaluation that a single vLLM server can sustain up to 14.6 RPS (where each request is a tile) when downloading from a URL.

To prevent overloading the vLLM replicas, we set the containerConcurrency option to 14, close to this throughput limit. Knative then begins queuing requests once traffic per vLLM instance approaches the maximum safe limit, which helps distribute the load evenly across replicas.
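To make the numbers concrete, the following Python sketch works through the arithmetic for the settings used in this article. It is a deliberately simplified model: it ignores the averaging windows and other factors, such as target utilization, that the Knative autoscaler may also apply, so treat the results as rough estimates. The function names are hypothetical.

```python
import math


def panic_concurrency(target, panic_threshold_pct):
    """Per-replica concurrency at which the panic threshold is crossed."""
    return target * panic_threshold_pct / 100.0


def replicas_needed(total_concurrency, target, min_replicas=1, max_replicas=3):
    """Replicas required to keep per-replica concurrency at or below target."""
    wanted = math.ceil(total_concurrency / target)
    return max(min_replicas, min(max_replicas, wanted))


# Settings from the InferenceService above: target 13, threshold 150%.
print(panic_concurrency(13, 150))    # 19.5 in-flight requests per replica
# Background traffic only (13 concurrent requests).
print(replicas_needed(13, 13))       # 1
# Background plus burst traffic (13 + 26 concurrent requests).
print(replicas_needed(13 + 26, 13))  # 3
```

Under this simplified model, the combined background and burst load calls for three replicas, which matches the maxReplicas value and the three pods shown in the scale-out output.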

Try experimenting with different parameter and benchmark settings to see how they change behavior. For example, the following command raises concurrency targets and triggers autoscaling events in response to larger traffic bursts:

oc patch inferenceservice rhaiis-prithvi-300m \
  --type='merge' \
  -p='{
    "metadata": {
      "annotations": {
        "autoscaling.knative.dev/target": "16"
      }
    },
    "spec": {
      "predictor": {
        "containerConcurrency": 20
      }
    }
  }'

After the experiment completes, delete the resources to free the GPUs.

oc delete -f kserve_prithvi.yaml

Bring your own model

In this article, we used Prithvi, a model available on Hugging Face that vLLM supports natively. You can extend vLLM with general plug-ins to support custom models. Register custom out-of-tree models in the vLLM model registry to make them available for serving. To deploy a custom model, make the plug-in available to Red Hat AI Inference Server at startup. For example, use a PVC and install the plug-in into the main Python environment used to start vLLM. As with other models, vLLM expects out-of-tree models to be hosted on Hugging Face or stored in a local directory.
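As an illustration of the registration step, the following Python sketch shows what a plug-in's entry-point function might look like. The architecture name MyGeoModel and the module path my_geo_plugin.model are hypothetical placeholders, and the sketch assumes the plug-in package exposes this function through the vllm.general_plugins entry-point group in its packaging metadata so that vLLM can discover it at startup.

```python
def register():
    """Entry-point function that registers a custom model with vLLM."""
    # Imported lazily so that importing this module does not require vLLM.
    from vllm import ModelRegistry

    # "MyGeoModel" and "my_geo_plugin.model" are hypothetical placeholders
    # for your own architecture name and implementation module.
    if "MyGeoModel" not in ModelRegistry.get_supported_archs():
        ModelRegistry.register_model(
            "MyGeoModel",
            "my_geo_plugin.model:MyGeoModel",
        )
```

Passing the model class as a "module:Class" string defers importing the implementation until vLLM needs it.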

Wrap up

Red Hat OpenShift AI provides a ready‑to‑use AI application platform that simplifies deploying and scaling AI models based on traffic. This is an essential capability for geospatial use cases, where demand can spike unpredictably due to new data or sudden events such as natural disasters or extreme weather events.

Learn more

- Check the documentation

- Read Red Hat’s overview of how vLLM accelerates AI inference and enterprise use cases

- Deep dive into Red Hat AI Inference Server technical architecture and parallelism

- Explore using vLLM for geospatial serving mechanics and more

- Try Prithvi models in your environment: Hugging Face, GitHub