Modern organizations increasingly rely not on monolithic applications, but on smaller and more specialized systems and services that interact with each other. These assets may reside close by in the same operating environment, or in a totally different environment altogether—for instance, one may be in an on-premise datacenter and another in a public cloud. It's crucial that any interaction between resources be facilitated in a secure fashion, regardless of where they are running. Traditional mechanisms, such as username/password pairs (or access key/secret key pairs) are no longer sufficient, which makes alternative solutions necessary.

Workload identity is an approach for solving this challenge that has gained popularity, particularly with the rise in cloud computing and zero trust methodologies. Workload identity allows for the creation of policies within identity and access management (IAM) systems to enable secure communication between systems without the need to leverage or manage static credentials. SPIFFE (the Secure Production Identity Framework For Everyone) and its related SPIRE (the SPIFFE Runtime Environment) projects work together as a workload identity solution. They allow identities to be assigned to workloads wherever those workloads operate across hybrid cloud environments.

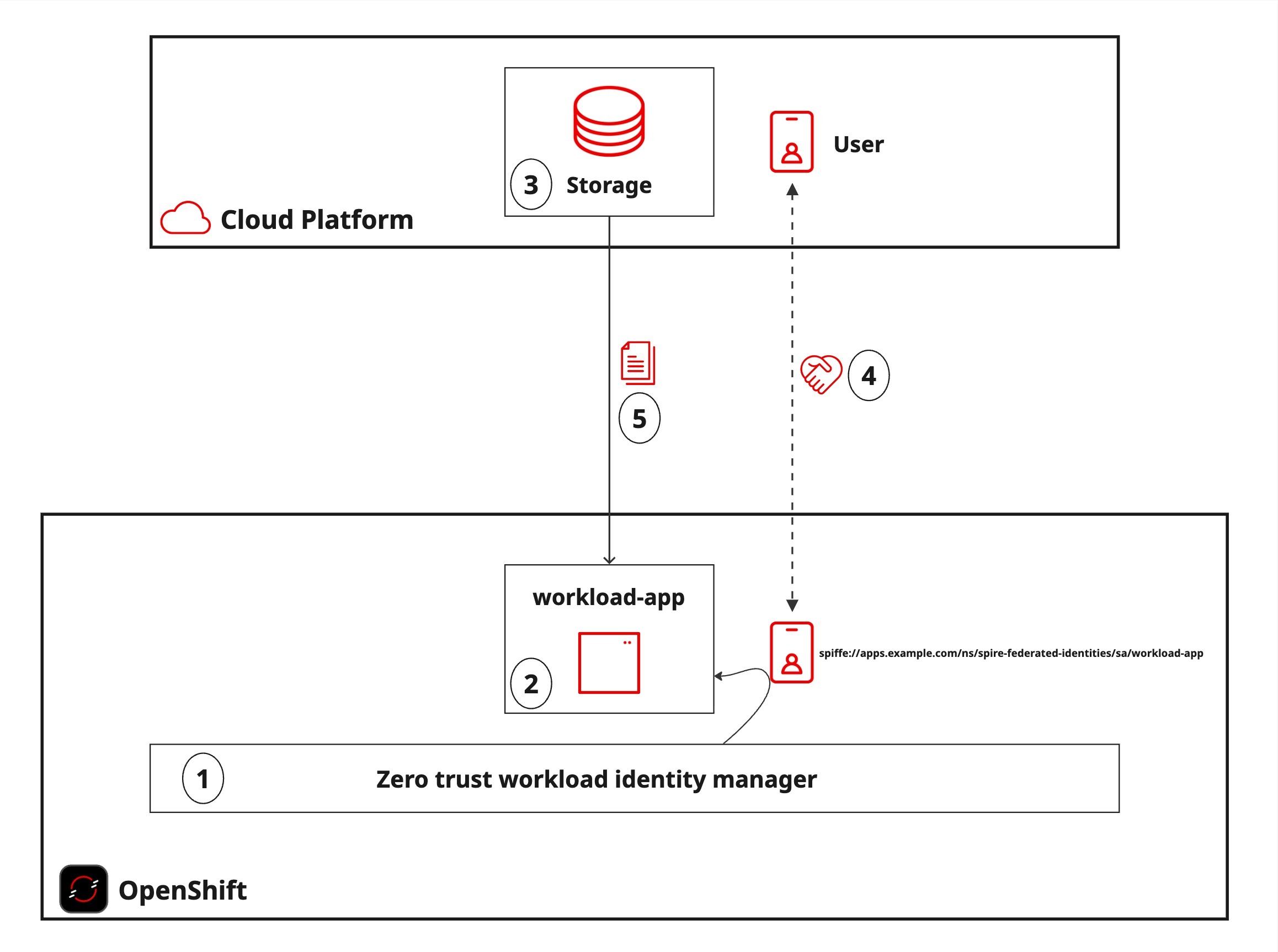

Red Hat supports the use of SPIFFE and SPIRE as zero trust workload identity manager, a Red Hat OpenShift operator. In this blog post, you'll see how this operator allows OpenShift workloads to securely connect to storage resources hosted by the 3 major cloud service providers. This will demonstrate how these technologies reshape how we think about the ways in which systems interact with one another, and the limits that can be employed to further reduce the level of access systems need to work effectively.

Addressing a common challenge

There is a good chance that any modern system or service, no matter where it operates, will ultimately rely on a service provided by a cloud provider. Examples include networking offerings like DNS or routing, application-based messaging, or storage solutions, just to name a few.

While SPIFFE and SPIRE provide a path forward to enable a more secure operating model, the process of integrating these technologies with public cloud providers like Amazon Web Services (AWS), Microsoft Azure, or Google Cloud is not straightforward and raises a number of questions:

- What options are available within these cloud providers?

- What configurations need to be made within the cloud provider to enable these types of offerings?

- What steps need to be made at an application level to leverage both the features of SPIFFE and SPIRE and those of the desired cloud provider?

Fortunately, even though some of the configuration steps are unique to individual cloud providers, the process at a high level is the same.

The ability to leverage cloud storage within an application is a common use case relevant to any of the public cloud providers that can help illustrate the power and opportunities provided by workload identity and SPIFFE/SPIRE. Cloud storage services are popular, given their diversified offerings and ability to scale to meet demands.

By walking through each of the steps in detail, this blog post will demonstrate how to federate identities provided by zero trust workload identity manager in an OpenShift environment to enable access to cloud storage without the use of static credentials. This knowledge will help you implement these steps in your own environments.

Breaking down the pieces

While the configuration may differ slightly within each cloud provider, the following is the high-level set of steps that we will work through together:

- Deploy zero trust workload identity manager to an OpenShift environment

- Deploy a sample application within the OpenShift environment that will be used to consume storage from the public cloud providers

- Configure cloud storage within the target cloud provider

- Federate the identity provided to the application by zero trust workload identity manager with the cloud provider to enable access to the previously configured storage solution

- Verify access to the cloud storage from within the previously deployed application on OpenShift

Figure 1 illustrates these steps.

There is a good chance that only a portion of the cloud offerings presented is of interest to you at this time, so feel free to review the solutions that matter the most to you. However, if you understand the steps necessary to integrate zero trust workload identity manager on OpenShift in detail with each cloud provider, you will better understand your current area of interest today and can help support potential opportunities in the future.

Configuring an OpenShift environment

The first step you'll take, before you even begin federating identities with any of the public cloud providers, is to deploy SPIFFE/SPIRE to an OpenShift environment. As discussed previously, one of the primary benefits of SPIFFE/SPIRE is that it can be deployed to OpenShift environments hosted on a public cloud or on-premises, giving you the flexibility to choose the operating environment that suits your needs.

In order to work through any of the steps described within this article, you must enable the appropriate communication paths from OpenShift to the cloud provider. The specific configurations necessary for this are outside the scope of the discussion of this first step, but can be found in the documentation provided by the cloud provider and will be covered within each provider implementation later in this blog post.

The installation and configuration of SPIRE on OpenShift, as well as the deployment of a sample application to demonstrate how SPIRE federated identities can access resources within each of the cloud providers, will leverage assets found in this GitHub repository.

Before beginning, ensure that you have the following utilities installed and configured on your local machine:

- Git

- oc, the OpenShift command-line interface

- envsubst

- jq

- OpenSSL

Begin by cloning the repository with the required assets to your local machine and changing into the cloned directory:

git clone https://github.com/sabre1041/spire-openshift-federated-identites

cd spire-openshift-federated-identites

Once within the repository directory, set an environment variable called GIT_REPO_ROOT to represent the top-level location within the Git repository using the following command:

export GIT_REPO_ROOT=$(pwd)

Several of the steps for creating resources throughout this guide make use of configuration files rendered from a series of template files. Baseline templates of these configurations are provided and rendered based on environment variables that have been configured. The majority of these rendered resources will be stored in a separate directory called rendered, located at the root of the Git repository.
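As a rough illustration of what this rendering step does, the following Python sketch substitutes variables into a template string, similar in spirit to envsubst. The template text in the example is made up for illustration and is not taken from the repository, and the render function is a hypothetical helper rather than part of any tooling used in this post.

```python
import os
from string import Template


def render(template_text: str, variables: dict[str, str]) -> str:
    """Replace $VAR and ${VAR} placeholders with values from `variables`.

    Unlike envsubst, which replaces unset variables with an empty string,
    safe_substitute leaves unknown placeholders untouched.
    """
    return Template(template_text).safe_substitute(variables)


if __name__ == "__main__":
    # Hypothetical template content; the real templates live in the repository.
    example = "domain: ${OPENSHIFT_CLUSTER_APP_DOMAIN}\n"
    print(render(example, dict(os.environ)), end="")
```

Because only exported variables are visible to envsubst, each value in this guide is set with export before a template is rendered.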

Create a new directory to store the rendered content:

mkdir $GIT_REPO_ROOT/rendered

Now that all of the prerequisite steps are complete, you can use the steps in the following sections to deploy SPIRE to OpenShift, along with a sample application.

Deploying zero trust workload identity manager

The installation of zero trust workload identity manager in an OpenShift environment is facilitated using the Kustomize functionality included within the OpenShift command-line interface. First, log in to your OpenShift environment using the OpenShift command-line Interface and set a variable called OPENSHIFT_CLUSTER_APP_DOMAIN:

export OPENSHIFT_CLUSTER_APP_DOMAIN=apps.$(oc get dns cluster -o jsonpath='{ .spec.baseDomain }')

Next, obtain the channel for the zero trust workload identity manager operator:

export ZTWIM_OPERATOR_CHANNEL=$(oc get packagemanifests openshift-zero-trust-workload-identity-manager -o jsonpath='{ .status.defaultChannel }')

Now render the Kustomization file that will deploy the zero trust workload identity manager operator:

envsubst < $GIT_REPO_ROOT/templates/ztwim-operator-kustomization.template > $GIT_REPO_ROOT/install/ztwim/operator/kustomization.yaml

Deploy the zero trust workload identity manager operator:

oc apply -k $GIT_REPO_ROOT/install/ztwim/operator

Wait until the operator has been deployed and the Custom Resource Definitions have been installed within the cluster:

until oc wait crd/spireservers.operator.openshift.io --for condition=established --timeout 120s >/dev/null 2>&1 ; do sleep 1 ; done

Next, render the Kustomization file that will deploy the zero trust workload identity manager resources:

envsubst < $GIT_REPO_ROOT/templates/ztwim-instance-kustomization.template > $GIT_REPO_ROOT/install/ztwim/instance/kustomization.yaml

Deploy zero trust workload identity manager:

oc apply -k $GIT_REPO_ROOT/install/ztwim/instance

After a few minutes, zero trust workload identity manager will be deployed to OpenShift. Execute the following command to confirm that it deployed successfully:

until [[ $(oc get zerotrustworkloadidentitymanager cluster -o jsonpath='{ .status.conditions[?(@.type=="Ready")].status }') == "True" ]]; do sleep 10; done

Workload deployment

Now that zero trust workload identity manager has been made available, the next step is to deploy a sample application that will demonstrate how to leverage SPIFFE federated identities to access resources within each of the public clouds.

Execute the following command to create a new namespace called spire-federated-identities along with a ServiceAccount, Secret and Deployment, all called app-workload:

oc apply -f ${GIT_REPO_ROOT}/install/workload/

The names of the Namespace and ServiceAccount are significant as they will influence how the workload is identified and constrain access to the cloud-based resources. The Secret will be used to contain values that are needed to support the integration between the workload application and the cloud provider. Initially, the Secret is empty, but you will populate it during the configuration process for each cloud provider section later on in this blog post.

The workload application downloads a set of dependencies at startup, so it may take a few moments for it to indicate a ready status. You can confirm that the application is ready when the conditions have been met with the following command:

oc wait -n spire-federated-identities --for=condition=Available deployment/workload-app --timeout=600s

The tools and libraries included within the dependencies will allow you to test and verify access.

Public cloud implementations

Now that both zero trust workload identity manager and the workload application have been deployed to OpenShift, the next step is to demonstrate how to federate the identity provided by SPIFFE with the cloud providers. However, before beginning to work with each provider, we need to take a closer look at the identity that SPIRE has assigned to our application.

The spiffe Python library was one of the dependencies that the sample application downloaded at pod startup, and it includes capabilities to obtain the SPIFFE ID associated with the workload. Execute the following command to begin a remote session within the pod and call this library to obtain the SPIFFE ID:

oc rsh -n spire-federated-identities deployment/workload-app python -c "from spiffe import WorkloadApiClient; print(f'SPIFFE ID: {WorkloadApiClient().fetch_x509_svid().spiffe_id}')"

You'll see a response similar to the following:

SPIFFE ID: spiffe://apps.example.com/ns/spire-federated-identities/sa/workload-app

Notice how the SPIFFE ID includes the values of both the namespace and the service account that were created earlier and associated with the pod in which the command was executed. This not only confirms that SPIRE correctly generated an identity for this application, but also shows the SPIFFE ID that can be used in the creation of policies to gain access to cloud resources.
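To make the structure of this identifier concrete, here is a minimal Python sketch that splits a SPIFFE ID of the shape shown above into its trust domain, namespace, and service account. The parse_spiffe_id helper and the WorkloadIdentity type are hypothetical names used only for illustration, and the sketch assumes the /ns/<namespace>/sa/<service-account> path layout seen in the example output; SPIFFE itself does not mandate that layout.

```python
from typing import NamedTuple
from urllib.parse import urlparse


class WorkloadIdentity(NamedTuple):
    trust_domain: str
    namespace: str
    service_account: str


def parse_spiffe_id(spiffe_id: str) -> WorkloadIdentity:
    """Split a SPIFFE ID of the form spiffe://<trust-domain>/ns/<ns>/sa/<sa>."""
    parsed = urlparse(spiffe_id)
    if parsed.scheme != "spiffe" or not parsed.netloc:
        raise ValueError(f"not a SPIFFE ID: {spiffe_id!r}")
    parts = parsed.path.strip("/").split("/")
    # Expect exactly: ns, <namespace>, sa, <service account>
    if len(parts) != 4 or parts[0] != "ns" or parts[2] != "sa":
        raise ValueError(f"unexpected path layout: {parsed.path!r}")
    return WorkloadIdentity(parsed.netloc, parts[1], parts[3])


if __name__ == "__main__":
    print(parse_spiffe_id(
        "spiffe://apps.example.com/ns/spire-federated-identities/sa/workload-app"
    ))
```

Each cloud provider policy created later in this post matches on the full SPIFFE ID string, so changing either the namespace or the service account yields a different identity.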

Now that you have an understanding of the identity that SPIRE has provided for our workload, you're ready to walk through the steps for each of the 3 major public cloud providers (AWS, Azure, and Google Cloud) that demonstrate how to federate this identity to allow our pod to access resources within each of the clouds. Once again, feel free to review the implementation of most interest to you, as each will follow the same high-level set of steps.

Microsoft Azure

Azure Cloud Storage enables the use of blob storage within the Microsoft Azure Cloud. Our sample workload will use workload identity to manage content within this resource.

Prerequisites

- Microsoft Azure account and subscription with permissions to create and manage Azure Cloud Storage resources

- Azure command-line interface (CLI) installed and configured

Configuration

First, set a few environment variables related to your Microsoft Azure account after authenticating to the desired account and subscription using the Azure CLI:

export SUBSCRIPTION_ID=$(az account list --query "[?isDefault].id" -o tsv)

export TENANT_ID=$(az account list --query "[?isDefault].tenantId" -o tsv)

Next, set the location where the resources will be created. The list of locations can be found using the following command, in the name column of the output:

az account list-locations -o table

Set the desired location from the list retrieved above:

export LOCATION="<location>"

Next, you will set variables related to the names of the resources that will be created. Microsoft Azure storage accounts have several restrictions related to naming, including a requirement that every storage account have a globally unique name.
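Beyond global uniqueness, Azure storage account names must be 3 to 24 characters long and may contain only lowercase letters and digits. The following Python sketch checks a candidate name against those two rules before any Azure command is run; the function name is hypothetical, and uniqueness can only be confirmed by Azure itself.

```python
import re

# 3-24 characters, lowercase letters and digits only.
_STORAGE_ACCOUNT_NAME = re.compile(r"[a-z0-9]{3,24}")


def is_valid_storage_account_name(name: str) -> bool:
    """Check the length and character rules for an Azure storage account name."""
    return _STORAGE_ACCOUNT_NAME.fullmatch(name) is not None


if __name__ == "__main__":
    for candidate in ("spireabc", "spire-abc", "SpireABC"):
        print(candidate, is_valid_storage_account_name(candidate))
```

Since the storage account name in this section is formed by prefixing the chosen name with spire, the name you choose should itself consist of lowercase letters and digits and be no longer than 19 characters.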

Choose a descriptive name to associate with your specific deployment (such as your initials) and replace <unique_name> with that name in the following command:

export NAME=<unique_name>

export UNIQUE_NAME="spire-${NAME}"

export STORAGE_NAME="spire${NAME}"

With the required variables now set, you can create a new resource group to contain all of the resources:

az group create --name "${UNIQUE_NAME}" --location "${LOCATION}"

Note that you could use an existing resource group if you prefer. Be sure to update the appropriate locations in subsequent commands for the desired resource.

Create a new storage account that will be used to store content:

az storage account create \
  --name ${STORAGE_NAME} \
  --resource-group ${UNIQUE_NAME} \
  --location ${LOCATION} \
  --sku Standard_ZRS \
  --encryption-services blob

Obtain the storage ID for the newly created storage account so that it can be used in subsequent commands:

export STORAGE_ACCOUNT_ID=$(az storage account show -n ${STORAGE_NAME} -g ${UNIQUE_NAME} --query id --out tsv)

Now, create a storage container inside the newly created storage account to provide a location to support the storage of blobs:

az storage container create \
  --account-name ${STORAGE_NAME} \
  --name ${STORAGE_NAME} \
  --auth-mode login

With blob storage now available within the targeted resource group, access can now be allocated to allow entities to manage storage. Managed identities are a capability provided within Azure for enabling access to services without having to worry about handling credentials. Of the managed identity offerings available, a user-assigned managed identity provides the most suitable option by allocating a standalone entity for which policies can be created and assigned to manage resources in Azure.

Create a new user-assigned managed identity, then obtain the client ID of the related service principal associated with that user-assigned managed identity:

export USER_ASSIGNED_IDENTITY_NAME="spire-${NAME}"

az identity create --name ${USER_ASSIGNED_IDENTITY_NAME} --resource-group ${UNIQUE_NAME}

export IDENTITY_CLIENT_ID=$(az identity show --resource-group "${UNIQUE_NAME}" --name "${USER_ASSIGNED_IDENTITY_NAME}" --query 'clientId' -otsv)

Azure includes a role called Storage Blob Data Contributor that provides a baseline set of capabilities for managing storage containers and blobs. This targeted set of policies enables access to only the resources that are needed, adhering to zero trust principles.

Associate this role with the service principal associated with the user-assigned managed identity:

az role assignment create --role "Storage Blob Data Contributor" --assignee "${IDENTITY_CLIENT_ID}" --scope $(az storage account show -n ${STORAGE_NAME} -g ${UNIQUE_NAME} --query id --out tsv)

The user-assigned managed identity and the SPIFFE identity associated with the workload application are bridged by federating the identities using the az identity federated-credential create command:

az identity federated-credential create \
  --name ${UNIQUE_NAME} \
  --identity-name ${UNIQUE_NAME} \
  --resource-group ${UNIQUE_NAME} \
  --issuer $(oc get cm -n zero-trust-workload-identity-manager spire-server -o jsonpath='{ .data.server\.conf }' | jq -r '.server.jwt_issuer') \
  --subject spiffe://$(oc get cm -n zero-trust-workload-identity-manager spire-server -o jsonpath='{ .data.server\.conf }' | jq -r '.server.trust_domain')/ns/spire-federated-identities/sa/workload-app \
  --audience api://AzureADTokenExchange

The command is doing a number of things:

- --name: Gives a name to the new federated credential.

- --identity-name: References the name of the service principal previously configured with rights to manage Azure blob storage.

- --issuer: The location of the OIDC Discovery Provider component of SPIRE in the OpenShift environment that is used to verify JWT tokens.

- --subject: The SPIFFE ID that is included in the sub field of the JWT token.

- --audience api://AzureADTokenExchange: The name that identifies the recipient of the JWT token. This is the recommended value as described in the Microsoft documentation, but in reality you can use any desired value. This value will be used when obtaining a JWT token within the workload application.
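Conceptually, these parameters describe the claims a token must carry for the federation to succeed. The Python sketch below illustrates that idea by comparing a token's iss, sub, and aud claims with the configured values. It is not Azure's implementation: the real exchange also verifies the token signature against the keys published by the issuer and checks the token lifetime. The FederatedCredential type, the token_matches function, and the issuer hostname are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FederatedCredential:
    issuer: str
    subject: str
    audience: str


def token_matches(claims: dict, credential: FederatedCredential) -> bool:
    """Return True when the iss, sub and aud claims match the credential."""
    aud = claims.get("aud", [])
    # The aud claim may be a single string or a list of strings.
    audiences = [aud] if isinstance(aud, str) else list(aud)
    return (
        claims.get("iss") == credential.issuer
        and claims.get("sub") == credential.subject
        and credential.audience in audiences
    )


if __name__ == "__main__":
    credential = FederatedCredential(
        issuer="https://oidc-discovery.apps.example.com",  # hypothetical issuer
        subject="spiffe://apps.example.com/ns/spire-federated-identities/sa/workload-app",
        audience="api://AzureADTokenExchange",
    )
    claims = {
        "iss": credential.issuer,
        "sub": credential.subject,
        "aud": ["api://AzureADTokenExchange"],
    }
    print(token_matches(claims, credential))
```

A token issued to a workload in a different namespace or running under a different service account carries a different sub claim, so it would not match this federated credential.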

Finally, update the app-workload Secret within the spire-federated-identities namespace with the values from Azure.

envsubst < $GIT_REPO_ROOT/templates/app-workload-secret-azure.template | oc apply -f-

Restart the workload application deployment so that it picks up the values provided within the Secret, which are injected as environment variables into the pod:

oc rollout restart -n spire-federated-identities deployment/workload-app

Once again, wait until the Deployment is ready, at which point the configuration process is complete:

oc wait -n spire-federated-identities --for=condition=Available deployment/workload-app --timeout=600s

Verification

Now that the SPIFFE identity has been federated with Azure, let's demonstrate that we can manage content in the blob storage from our application workload.

Obtain a remote shell within the application workload pod:

oc rsh -n spire-federated-identities deployment/workload-app

A Python script called get-spiffe-token.py is available within the current directory (/opt/app-root/src) and is used to retrieve a JWT token from the SPIFFE Workload API. The script requires that the desired audience be provided as a parameter using the --audience flag, which will allow for it to be included within the returned JWT. The Secret that was patched earlier contained a property (AZURE_AUDIENCE) matching the value that was configured previously when federating the identity (api://AzureADTokenExchange).

Set the output from the script to a new variable called TOKEN:

export TOKEN=$(/opt/app-root/src/get-spiffe-token.py --audience=$AZURE_AUDIENCE)

Log in to the Azure CLI included within the pod using the value of the token:

az login --service-principal \
  -u $AZURE_CLIENT_ID \
  -t $AZURE_TENANT_ID \
  --federated-token $TOKEN

If the login command returned a response related to the Azure environment and Service Principal, not only has the Azure CLI authenticated successfully, but the identities have been successfully federated as well.
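If you are curious about what the token contains, the following Python sketch decodes the payload segment of a JWT so that the iss, sub, and aud claims can be inspected. It deliberately does not verify the signature, so it is suitable only for inspection and never for making trust decisions. It assumes the token is available in the TOKEN environment variable exported earlier; the decode_jwt_claims function is a hypothetical helper and is not part of the sample application.

```python
import base64
import json
import os


def decode_jwt_claims(token: str) -> dict:
    """Return the payload of a JWT as a dict WITHOUT verifying its signature."""
    segments = token.split(".")
    if len(segments) != 3:
        raise ValueError("expected a JWT with three dot-separated segments")
    payload = segments[1]
    # JWT segments are base64url-encoded with padding stripped; restore it.
    padded = payload + "=" * (-len(payload) % 4)
    return json.loads(base64.urlsafe_b64decode(padded))


if __name__ == "__main__":
    token = os.environ.get("TOKEN")
    if token:
        print(json.dumps(decode_jwt_claims(token), indent=2))
```

The sub claim should show the SPIFFE ID of the workload, and the aud claim should contain the audience that was requested when the token was retrieved.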

With the Azure CLI authenticated, we can now manage content within the blob storage. Create a new file called openshift-spire-federated-identities.txt within the application workload Pod.

echo "Hello from OpenShift" > $HOME/openshift-spire-federated-identities.txt

Next, upload the file to the Azure blob storage:

az storage blob upload \
  --account-name ${BLOB_STORE_ACCOUNT} \
  --container-name ${BLOB_STORE_ACCOUNT} \
  --name openshift-spire-federated-identities.txt \
  --file $HOME/openshift-spire-federated-identities.txt \
  --auth-mode login

Confirm the file was uploaded successfully by listing the files contained within the blob storage:

az storage blob list \
  --account-name=${BLOB_STORE_ACCOUNT} \
  --container-name=${BLOB_STORE_ACCOUNT} \
  --auth-mode=login \
  -o table

The file that was previously uploaded should be displayed.

Remember, we constrained the privileges allocated to the Service Principal to those related to blob storage management. That means that attempting to perform other actions, like listing groups stored within Entra ID, should fail. To test that, issue this command:

az ad group list

You should see a response similar to the following, confirming that the Service Principal has a restricted set of privileges:

Insufficient privileges to complete the operation

Feel free to continue exploring the other capabilities allocated to the Service Principal for managing Azure blob storage, and to inspect the contents of get-spiffe-token.py, which uses the spiffe Python package to interact with SPIRE and the Workload API.

When you're done, type exit to end the remote session within the Pod. To clean up and remove the provisioned resources within Azure, execute the following command:

az group delete -y --name "${UNIQUE_NAME}"

AWS

Amazon S3 enables the use of object storage within Amazon Web Services. Our sample workload will use workload identity to manage content within this resource.

Prerequisites

- An Amazon Web Services account with permissions to create and manage S3 and IAM resources

- AWS command-line interface (CLI) installed and configured

Configuration

First you'll need to set a few environment variables related to your Amazon Web Services account and deployment after authenticating using the AWS CLI. Set the AWS region that should be used as a target:

export AWS_REGION=$(aws configure get region)

Next, set a descriptive name to associate with your specific deployment (such as your initials) and replace <unique_name> with that name in the following command:

export NAME=<unique_name>

export UNIQUE_NAME="spire-${NAME}"

Create an S3 bucket that will be used to store your content:

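aws s3api create-bucket \
  --bucket $UNIQUE_NAME \
  --region ${AWS_REGION} \
  --create-bucket-configuration LocationConstraint=${AWS_REGION}

Note that if your region is us-east-1, you should omit the --create-bucket-configuration parameter, because that region does not accept a LocationConstraint value.

You'll use the SPIRE OIDC Discovery Provider to verify the JWT tokens provided by SPIRE. Set the hostname of this resource: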

export OIDC_DISCOVERY_PROVIDER_HOSTNAME=$(oc get route -n zero-trust-workload-identity-manager spire-oidc-discovery-provider -o jsonpath='{ .spec.host }')

Set an environment variable containing the audience that represents the intended recipient of the JWT token:

export SPIFFE_AUDIENCE=spiffe-openshift

AWS IAM requires that a thumbprint of the top intermediate Certificate Authority (CA) be specified when creating an OIDC identity provider. Execute the following command to obtain the fingerprint of the OIDC Discovery Provider:

export OIDC_PROVIDER_THUMBPRINT=`echo | openssl s_client -servername ${OIDC_DISCOVERY_PROVIDER_HOSTNAME} -showcerts -connect ${OIDC_DISCOVERY_PROVIDER_HOSTNAME}:443 2>/dev/null | openssl x509 -fingerprint -noout | sed s/://g | sed 's/.*=//'`

Before the OIDC Identity Provider can be created, you need to render the configuration that contains the required values:

envsubst < $GIT_REPO_ROOT/templates/aws-oidc-identity-provider.template > $GIT_REPO_ROOT/rendered/aws-oidc-identity-provider.json

Next, create an AWS OIDC Identity Provider that references the SPIRE OIDC Discovery Provider:

export OIDC_IDENTITY_PROVIDER_ARN=$(aws iam create-open-id-connect-provider --cli-input-json file://$GIT_REPO_ROOT/rendered/aws-oidc-identity-provider.json --query OpenIDConnectProviderArn --output text)

An AWS IAM policy is used to specify the permissions that are granted. In this case, you'll specify permissions for the ability to manage content within the AWS bucket previously created, and to list all S3 buckets within the region. Render the IAM policy using the following command:

envsubst < $GIT_REPO_ROOT/templates/aws-s3-policy.template > $GIT_REPO_ROOT/rendered/aws-s3-policy.json

Now create the policy using the rendered manifest:

export S3_POLICY_ARN=$(aws iam create-policy --policy-name ${UNIQUE_NAME} --policy-document file://$GIT_REPO_ROOT/rendered/aws-s3-policy.json --query Policy.Arn --output text)

Set the SPIFFE ID representing the workload application. This value will be used when creating a trust policy that grants the workload application Pod permission to assume an AWS role that provides access to manage content in S3.

export SPIFFE_ID_WORKLOAD_APP=spiffe://$(oc get cm -n zero-trust-workload-identity-manager spire-server -o jsonpath='{ .data.server\.conf }' | jq -r '.server.trust_domain')/ns/spire-federated-identities/sa/workload-app

Render the trust policy that connects the OIDC Discovery Provider, the SPIFFE ID, and the audience previously assigned:

envsubst < $GIT_REPO_ROOT/templates/aws-trust-policy.template > $GIT_REPO_ROOT/rendered/aws-trust-policy.json

Create a new AWS IAM role that leverages the previously rendered Trust Policy:

export ROLE_ARN=$(aws iam create-role --role-name ${UNIQUE_NAME} --assume-role-policy-document file://$GIT_REPO_ROOT/rendered/aws-trust-policy.json --query Role.Arn --output text)

Finally, attach the created policy to the IAM role:

aws iam attach-role-policy --role-name ${UNIQUE_NAME} --policy-arn ${S3_POLICY_ARN}

Now that the configurations in AWS are complete, update the app-workload Secret within the spire-federated-identities namespace with the values that were previously set:

envsubst < $GIT_REPO_ROOT/templates/app-workload-secret-aws.template | oc apply -f-

Roll out the workload application so that it can leverage the Secret containing details related to the configuration:

oc rollout restart -n spire-federated-identities deployment/workload-app

Wait for the application to roll out successfully:

oc wait -n spire-federated-identities --for=condition=Available deployment/workload-app --timeout=600s

Verification

Now that the configuration to enable the workload application to manage content in S3 is complete, let's showcase the capabilities that are available:

Obtain a remote shell within the application workload pod:

oc rsh -n spire-federated-identities deployment/workload-app

A Python script called get-spiffe-token.py is present within the current directory (/opt/app-root/src) and is used to retrieve a JWT token from the SPIFFE Workload API. The script requires that the desired audience be provided as a parameter using the --audience flag, which will allow for it to be included within the returned JWT. The Secret that was patched earlier contained a property (AWS_AUDIENCE) matching the value that was configured previously when federating the identity.

Generate a JWT token from SPIRE and save the contents in a file referenced by the $AWS_WEB_IDENTITY_TOKEN_FILE environment variable:

/opt/app-root/src/get-spiffe-token.py --audience=$AWS_AUDIENCE > $AWS_WEB_IDENTITY_TOKEN_FILE

With the JWT token from SPIRE now set, verify that AWS buckets can be listed:

aws s3 ls

All of the available buckets should be returned.
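This call succeeds because the trust policy attached to the IAM role allows the token to be exchanged for temporary AWS credentials. The Python sketch below shows what a trust policy of this kind typically looks like for an OIDC identity provider. It is an assumption about the general shape only: the actual contents of aws-trust-policy.template in the repository may differ, and the account ID, hostname, and build_trust_policy function are illustrative.

```python
import json


def build_trust_policy(provider_arn: str, provider_host: str,
                       spiffe_id: str, audience: str) -> dict:
    """Build a role trust policy that admits one SPIFFE ID and one audience."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            # The IAM OIDC identity provider that vouches for the token.
            "Principal": {"Federated": provider_arn},
            "Action": "sts:AssumeRoleWithWebIdentity",
            "Condition": {
                "StringEquals": {
                    # Condition keys are prefixed with the provider hostname.
                    f"{provider_host}:sub": spiffe_id,
                    f"{provider_host}:aud": audience,
                }
            },
        }],
    }


if __name__ == "__main__":
    host = "oidc-discovery.apps.example.com"  # hypothetical hostname
    policy = build_trust_policy(
        provider_arn=f"arn:aws:iam::123456789012:oidc-provider/{host}",
        provider_host=host,
        spiffe_id="spiffe://apps.example.com/ns/spire-federated-identities/sa/workload-app",
        audience="spiffe-openshift",
    )
    print(json.dumps(policy, indent=2))
```

Pinning both the sub and aud conditions means that only tokens issued to this specific workload, and requested for this specific audience, can assume the role.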

Now that you have confirmed that the workload application is authenticated and can browse S3, create a new file called openshift-spire-federated-identities.txt within the application workload pod to confirm that files can also be uploaded to the S3 bucket:

echo "Hello from OpenShift" > $HOME/openshift-spire-federated-identities.txt

Now, upload the file to the S3 bucket:

aws s3 cp $HOME/openshift-spire-federated-identities.txt s3://${AWS_BUCKET_NAME}/

Confirm that the file is present in S3 by listing the contents of the bucket:

aws s3api list-objects \
  --bucket ${AWS_BUCKET_NAME} \
  --query 'Contents[].Key' \
  --output text

The file that was previously uploaded should be displayed.

Since we constrained the privileges that were assigned within the policy to just the management of S3 storage, attempting to perform other actions, like listing IAM roles, should fail. Check that with the following command:

aws iam list-roles

You should see a response similar to the following, confirming that the role assigned to the pod has a restricted set of privileges:

An error occurred (AccessDenied) when calling the ListRoles operation: User: arn:aws:sts::<account>:assumed-role/<role>/botocore-session-<session_id> is not authorized to perform: iam:ListRoles on resource: arn:aws:iam::<account>:role/ because no identity-based policy allows the iam:ListRoles action

Feel free to continue exploring the other capabilities allocated to the role for managing S3 storage, and to inspect the contents of get-spiffe-token.py, which uses the spiffe Python package to interact with SPIRE and the Workload API.

When you're done, type exit to end the remote session within the pod. To clean up and remove the provisioned resources within AWS, execute the following commands:

aws iam detach-role-policy --role-name ${UNIQUE_NAME} --policy-arn ${S3_POLICY_ARN}

aws iam delete-policy --policy-arn ${S3_POLICY_ARN}

aws iam delete-role --role-name ${UNIQUE_NAME}

aws iam delete-open-id-connect-provider --open-id-connect-provider-arn ${OIDC_IDENTITY_PROVIDER_ARN}

aws s3 rm s3://${UNIQUE_NAME} --recursive

aws s3api delete-bucket --bucket ${UNIQUE_NAME}

Google Cloud

Google Cloud Storage enables the use of blob storage within the Google Cloud Platform. Our sample workload will use Workload Identity Federation to manage content within this resource.

Prerequisites

- A Google Cloud account/project with permissions to create and manage Google Cloud Storage and IAM resources

- Google Cloud command-line interface (gcloud CLI) installed and configured

Configuration

First, set an environment variable representing the Google project ID. This will be used in multiple commands in this section:

export PROJECT_ID=<PROJECT_ID>

Authenticate to the gcloud CLI and set the desired project:

gcloud config set project <PROJECT_ID>

Obtain the project number for the associated project and store it in an environment variable called PROJECT_NUMBER:

export PROJECT_NUMBER=$(gcloud projects describe $PROJECT_ID --format="value(projectNumber)")

Set a descriptive name to associate with your specific deployment (such as your initials) and replace <unique_name> in the following command with that name:

export NAME=<unique_name>

export UNIQUE_NAME="spire-${NAME}"

Create a new Google Cloud Storage bucket that will be used to store content:

gcloud storage buckets create gs://${UNIQUE_NAME}

The workload application will impersonate a Google Cloud service account in order to interact with Google Cloud. Create the service account using the following command:

gcloud iam service-accounts create ${UNIQUE_NAME}

Now grant the service account access to Google Cloud Storage by using one of the built-in roles (roles/storage.admin):

gcloud projects add-iam-policy-binding ${PROJECT_ID} \
  --member "serviceAccount:${UNIQUE_NAME}@${PROJECT_ID}.iam.gserviceaccount.com" \
  --role "roles/storage.admin"

You'll use the SPIRE OIDC Discovery Provider to verify the JWT tokens provided by SPIRE. Set the hostname of this resource:

export OIDC_DISCOVERY_PROVIDER_HOSTNAME=$(oc get route -n zero-trust-workload-identity-manager spire-oidc-discovery-provider -o jsonpath='{ .spec.host }')

You'll use Workload Identity Federation (WIF) to give an OpenShift workload with an assigned SPIFFE identity the ability to access Google Cloud resources. The first step for setting up WIF is to create an identity pool that allows for the management of external identities:

gcloud iam workload-identity-pools create ${UNIQUE_NAME} --location="global" --display-name="${UNIQUE_NAME}"

Create a relationship between SPIRE and WIF using a workload identity pool provider:

gcloud iam workload-identity-pools providers create-oidc ${UNIQUE_NAME} \
  --location="global" \
  --workload-identity-pool="${UNIQUE_NAME}" \
  --issuer-uri="https://${OIDC_DISCOVERY_PROVIDER_HOSTNAME}" \
  --attribute-mapping="google.subject=assertion.sub"

The --attribute-mapping parameter maps fields from the SPIFFE JWT token to properties in Google Cloud. In this case, we are mapping the sub field, which carries the SPIFFE ID of the workload, to the Google subject.
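To make the mapping concrete, here is a minimal Python sketch of what google.subject=assertion.sub expresses. It is an illustration only, not Google's implementation: the apply_attribute_mapping helper, the sample claims, and the example.org trust domain are all hypothetical.

```python
# Illustrative only: mimics how a simple attribute mapping such as
# "google.subject=assertion.sub" copies a JWT claim into a Google attribute.
# Real mappings can also use richer expressions, which this sketch rejects.

def apply_attribute_mapping(mapping: str, claims: dict) -> dict:
    """Resolve comma-separated 'target=assertion.<claim>' pairs against JWT claims."""
    prefix = "assertion."
    attributes = {}
    for pair in mapping.split(","):
        target, source = pair.strip().split("=", 1)
        if not source.startswith(prefix):
            raise ValueError(f"unsupported source expression: {source}")
        attributes[target] = claims[source[len(prefix):]]
    return attributes


# Hypothetical claims from a SPIRE-issued JWT (the trust domain is a placeholder).
sample_claims = {
    "iss": "https://oidc-discovery.example.org",
    "sub": "spiffe://example.org/ns/spire-federated-identities/sa/workload-app",
}

attributes = apply_attribute_mapping("google.subject=assertion.sub", sample_claims)
print(attributes["google.subject"])
```

With this mapping in place, the subject that Google Cloud sees for the workload is simply its SPIFFE ID.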

Next you'll set the SPIFFE ID representing the workload application. This value will be used to allow for the pod to impersonate the service account created previously.

export SPIFFE_ID_WORKLOAD_APP=spiffe://$(oc get cm -n zero-trust-workload-identity-manager spire-server -o jsonpath='{ .data.server\.conf }' | jq -r '.server.trust_domain')/ns/spire-federated-identities/sa/workload-app

Now allow the workload application pod to impersonate the service account by granting the built-in roles/iam.workloadIdentityUser role to the principal that represents the workload pod:

gcloud iam service-accounts add-iam-policy-binding ${UNIQUE_NAME}@${PROJECT_ID}.iam.gserviceaccount.com \
  --role roles/iam.workloadIdentityUser \
  --member "principal://iam.googleapis.com/projects/${PROJECT_NUMBER}/locations/global/workloadIdentityPools/${UNIQUE_NAME}/subject/${SPIFFE_ID_WORKLOAD_APP}"

Next, you'll create a credentials file that will allow the gcloud CLI (or any other utility or library) to interact with Google Cloud. This file references the location within the pod where the JWT token can be found and the service account that should be impersonated. The generated credentials configuration will be saved to a file called google-credentials.json within the rendered directory:

gcloud iam workload-identity-pools create-cred-config \
  projects/${PROJECT_NUMBER}/locations/global/workloadIdentityPools/${UNIQUE_NAME}/providers/${UNIQUE_NAME} \
  --service-account=${UNIQUE_NAME}@${PROJECT_ID}.iam.gserviceaccount.com \
  --output-file=${GIT_REPO_ROOT}/rendered/google-credentials.json \
  --credential-source-file=/opt/app-root/src/spiffe-token-google.txt \
  --credential-source-type=text

To make the credentials file available within the pod, set an environment variable called GOOGLE_APPLICATION_CREDENTIALS_BASE64 that stores the contents of google-credentials.json as a base64-encoded string for use within the workload application secret:

export GOOGLE_APPLICATION_CREDENTIALS_BASE64=$(cat ${GIT_REPO_ROOT}/rendered/google-credentials.json | base64)

At pod startup, the contents will be decoded and written to the file system of the pod.
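The decode step at pod startup could look something like the following Python sketch. It is not taken from the workload image: the write_credentials_file helper and the temporary target path are hypothetical, and the sample configuration only approximates the external account format that create-cred-config produces (the project number and names are placeholders).

```python
import base64
import json
import tempfile
from pathlib import Path


def write_credentials_file(encoded: str, target: Path) -> dict:
    """Decode a base64 string, check that it is JSON, and write it to target."""
    decoded = base64.b64decode(encoded)
    config = json.loads(decoded)  # fails early if the value is not valid JSON
    target.write_bytes(decoded)
    return config


# Placeholder configuration resembling an external account credentials file.
sample_config = {
    "type": "external_account",
    "audience": "//iam.googleapis.com/projects/123456789012/locations/global/"
                "workloadIdentityPools/spire-abc/providers/spire-abc",
    "subject_token_type": "urn:ietf:params:oauth:token-type:jwt",
    "token_url": "https://sts.googleapis.com/v1/token",
    "credential_source": {"file": "/opt/app-root/src/spiffe-token-google.txt"},
}
# Stand-in for the GOOGLE_APPLICATION_CREDENTIALS_BASE64 value.
encoded = base64.b64encode(json.dumps(sample_config).encode()).decode()

with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "google-credentials.json"
    config = write_credentials_file(encoded, path)
    written = json.loads(path.read_text())

print(config["credential_source"]["file"])
```

The credential_source entry is the link between the two systems: it points at the file where the SPIRE-issued JWT is expected to be found inside the pod.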

With the configuration for Google complete, you can update the app-workload secret within the spire-federated-identities namespace with the values that were previously set:

envsubst < $GIT_REPO_ROOT/templates/app-workload-secret-google.template | oc apply -f-

Roll out the workload application so that it can make use of the secret containing the details related to the configuration:

oc rollout restart -n spire-federated-identities deployment/workload-app

Wait for the application to roll out successfully:

oc wait -n spire-federated-identities --for=condition=Available deployment/workload-app --timeout=600s

Verification

Now that the configuration to enable the workload application to manage content in Google Cloud Storage is complete, let's showcase the capabilities that are available.

Obtain a remote shell within the application workload pod:

oc rsh -n spire-federated-identities deployment/workload-app

A Python script called get-spiffe-token.py is present within the current directory (/opt/app-root/src) and is used to retrieve a JWT token from the SPIFFE Workload API. The script requires the desired audience to be provided using the --audience flag so that it is included within the returned JWT. The secret that was updated earlier contains a property (GOOGLE_AUDIENCE) matching the value that Google Cloud expects (the full canonical name of the workload identity pool provider).
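To check which audience and subject actually end up in a token, you can decode its payload. The Python sketch below uses only the standard library and does not verify the signature, so treat it purely as a debugging aid. The decode_jwt_payload and b64url helpers are hypothetical, the audience format shown is an assumption about what the canonical provider name looks like, and the token is built locally from placeholder claims rather than issued by SPIRE.

```python
import base64
import json


def decode_jwt_payload(token: str) -> dict:
    """Return the claims of a JWT without verifying its signature."""
    payload_b64 = token.strip().split(".")[1]
    # JWTs use unpadded base64url, so restore the padding before decoding.
    payload_b64 += "=" * (-len(payload_b64) % 4)
    return json.loads(base64.urlsafe_b64decode(payload_b64))


def b64url(data: dict) -> str:
    """Encode a dict the way JWT segments are encoded (base64url, no padding)."""
    raw = json.dumps(data).encode()
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()


# Placeholder values; a real audience would contain your project number and names.
audience = ("//iam.googleapis.com/projects/123456789012/locations/global/"
            "workloadIdentityPools/spire-abc/providers/spire-abc")
claims = {
    "sub": "spiffe://example.org/ns/spire-federated-identities/sa/workload-app",
    "aud": [audience],
}
# Fake, unsigned token used only to exercise the decoder.
fake_token = ".".join([b64url({"alg": "none"}), b64url(claims), "signature"])

decoded = decode_jwt_payload(fake_token)
print(decoded["sub"])
print(audience in decoded["aud"])
```

Inside the pod, the same helper could be pointed at the contents of a saved token file, assuming that file holds just the raw token.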

Generate a JWT token from SPIRE and save the contents in a file with the path /opt/app-root/src/spiffe-token-google.txt. This location was used when generating the Google credentials file in the prior section:

/opt/app-root/src/get-spiffe-token.py --audience=$GOOGLE_AUDIENCE > /opt/app-root/src/spiffe-token-google.txt

Next, log in to Google Cloud using the credentials file that references the file containing the JWT token generated by SPIRE:

gcloud auth login --cred-file=$GOOGLE_APPLICATION_CREDENTIALS --project=${GOOGLE_PROJECT_ID}

The gcloud CLI has authenticated successfully using the JWT from SPIRE, so you can verify that Google Cloud Storage buckets can be listed:

gcloud storage ls

All of the available buckets should be returned.

Now that you have confirmed that the workload application is authenticated and can list Google Cloud Storage buckets, create a new file called openshift-spire-federated-identities.txt within the application workload pod to confirm that files can also be uploaded to the bucket:

echo "Hello from OpenShift" > $HOME/openshift-spire-federated-identities.txt

Next, upload the file to the Google Cloud Storage bucket:

gcloud storage cp $HOME/openshift-spire-federated-identities.txt gs://${GOOGLE_BUCKET_NAME}/

Confirm that the file is present in Google Cloud Storage by listing the contents of the bucket:

gcloud storage ls \
  --recursive \
  gs://${GOOGLE_BUCKET_NAME}

The file that was previously uploaded should be displayed.

Finally, because we constrained the privileges assigned within the policy to just the management of Google Cloud Storage, attempting to perform other actions, like listing Workload Identity Federation pools, should fail. Test that with this command:

gcloud iam workload-identity-pools list --location=global

A response similar to the following should be presented, confirming that the role assigned to the pod has a restricted set of privileges:

ERROR: (gcloud.iam.workload-identity-pools.list) PERMISSION_DENIED: Permission 'iam.workloadIdentityPools.list' denied on resource '//iam.googleapis.com/projects/${PROJECT_ID}/locations/global' (or it may not exist). This command is authenticated as ${UNIQUE_NAME}@${PROJECT_ID}.iam.gserviceaccount
- '@type': type.googleapis.com/google.rpc.ErrorInfo
  domain: iam.googleapis.com
  metadata:
    permission: iam.workloadIdentityPools.list
    resource: projects/${PROJECT_ID}/locations/global
  reason: IAM_PERMISSION_DENIED

Feel free to continue exploring the other capabilities allocated to the service account for managing Google Cloud Storage, and to inspect the contents of the get-spiffe-token.py file, which uses the spiffe Python package to interact with SPIRE and the Workload API.

When you're done, type exit to end the remote session within the pod. To clean up and remove the provisioned resources within Google Cloud, execute the following commands:

gcloud iam workload-identity-pools delete ${UNIQUE_NAME} --location=global --quiet

gcloud iam service-accounts delete ${UNIQUE_NAME}@${PROJECT_ID}.iam.gserviceaccount.com --quiet

Wrap-up

Whether you attempted any of the public cloud implementations described previously or just reviewed the steps needed to complete the process, you should now have a sense of a future where workloads using identities can communicate both successfully and securely. By taking advantage of zero trust workload identity manager and SPIFFE and SPIRE identities on OpenShift, workloads can not only obtain an identity that can be presented to external systems, but also customize its construction and content. These identities are not tied to a specific platform or operating environment, so they can be used throughout a hybrid cloud environment and support integration with systems on-premise or in the public cloud.

We have just scratched the surface of the potential opportunities where SPIFFE and SPIRE provide solutions, and we hope that these approaches instill confidence in a more secure future.