Enterprises today seek to integrate generative AI capabilities into their applications. However, scaling large AI models introduces complexity: managing high-volume traffic to large language models (LLMs), optimizing inference performance, maintaining predictable latency, and controlling infrastructure costs.

Platform engineering leaders require more than model deployment capabilities. They need a Kubernetes-native infrastructure that supports efficient GPU utilization and intelligent request routing. This foundation also enables distributed inference patterns, cost-aware autoscaling, and production-grade governance.

This article demonstrates how two open source solutions, KServe and llm-d, can be combined to address these challenges. We explore the role of each solution, illustrate their integration architecture, and provide practical guidance for AI platform teams, with a focus on KServe's LLMInferenceService, introduced in KServe v0.16.

KServe: Simplifying AI model deployment on Kubernetes

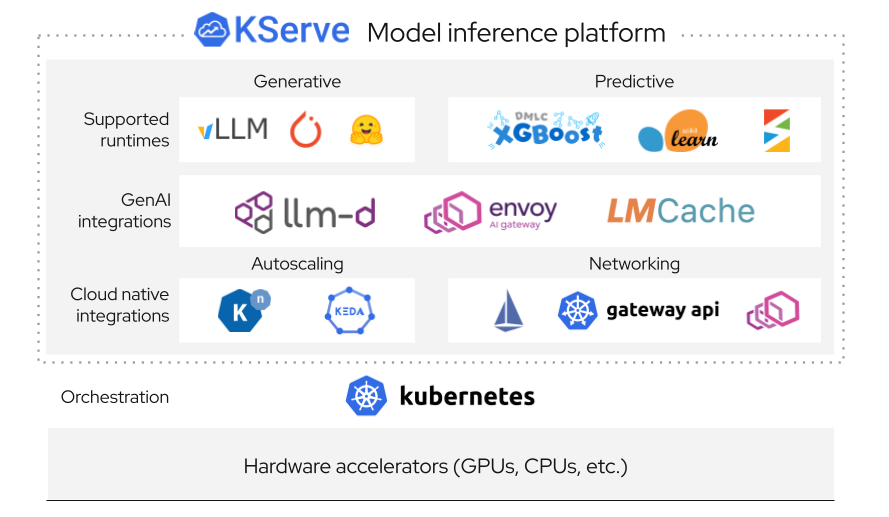

KServe is a Kubernetes-based model serving platform that simplifies deploying and managing ML models, including LLMs, at scale. For platform engineers, KServe acts as the model serving control plane—the layer responsible for lifecycle, scaling, and operational governance.

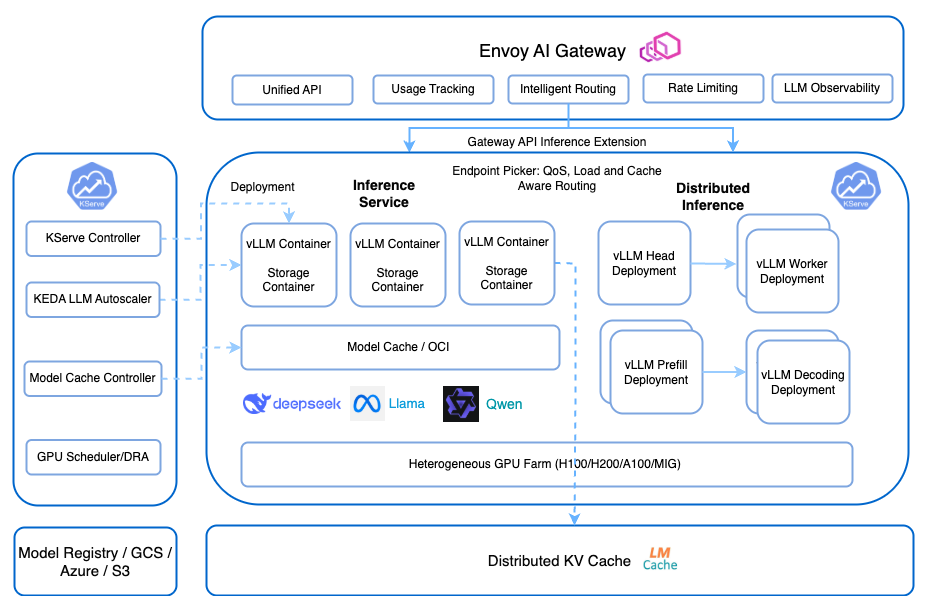

The high-level generative inference architecture, including the interaction between Envoy AI Gateway and the KServe control plane, is illustrated in Figure 1.

Inference as a service: What happens when a request comes in

Instead of thinking about InferenceService as a list of features, it's more useful to follow the life of a single request. A request enters the system, for example a /v1/chat/completions call from an application using an OpenAI-compatible client, and KServe takes responsibility for it from that point on.
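
To make the entry point concrete, here is a minimal sketch of what such a call might look like from the client side, using only the Python standard library. The base URL and model name are placeholders invented for this example, not values from a real deployment, and the request is built but not sent.

```python
import json
import urllib.request

# Hypothetical values for illustration: substitute your own endpoint and model.
BASE_URL = "http://llm.example.internal"
MODEL_NAME = "my-llm"


def build_chat_request(base_url: str, model: str, prompt: str,
                       stream: bool = True) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": stream,  # ask the server to stream tokens as they are generated
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_chat_request(BASE_URL, MODEL_NAME, "Summarize KServe in one sentence.")
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen` would send it; authentication headers, if your gateway requires them, are left out of this sketch.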

First, KServe determines where the request should go. If no pods are running, it triggers scale-from-zero. If traffic increases, it scales horizontally based on real-time demand. Then, it routes the request through the appropriate revision of the service, whether that's a stable deployment or a canary rollout receiving partial traffic.
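
The scaling step can be approximated with a simple concurrency rule. The sketch below is a simplification for intuition only: real autoscalers average metrics over time windows and apply stabilization logic, and the function name, target, and replica bounds here are assumptions chosen for the example.

```python
import math


def desired_replicas(in_flight: int, target_per_pod: int,
                     min_replicas: int = 0, max_replicas: int = 8) -> int:
    """Replicas needed so each pod serves at most target_per_pod concurrent requests."""
    if target_per_pod <= 0:
        raise ValueError("target_per_pod must be positive")
    needed = math.ceil(in_flight / target_per_pod)
    # Clamp to the configured bounds; min_replicas=0 allows scale-to-zero.
    return max(min_replicas, min(needed, max_replicas))


for load in (0, 1, 25, 500):
    print(load, "->", desired_replicas(load, target_per_pod=10))
```

With no traffic the result is zero replicas, the first request brings up one pod (scale-from-zero), and heavy load is capped at the configured maximum.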

Before reaching the model, optional preprocessing steps might enrich or transform the request. Then, the system hands it off to the serving runtime, such as vLLM or TGI, where the model inference occurs. As tokens begin streaming back, KServe maintains the connection to ensure low-latency responses for the client. KServe continuously observes traffic patterns, adjusts scaling decisions, manages revisions, and maintains endpoint stability.
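
On the client side, a streamed response from an OpenAI-compatible endpoint typically arrives as server-sent event lines, each carrying a small JSON chunk. The following sketch shows one way to reassemble the text; the sample lines are hand-written for illustration rather than captured from a real server.

```python
import json
from typing import Iterable, Iterator


def iter_stream_text(lines: Iterable[str]) -> Iterator[str]:
    """Yield text fragments from OpenAI-style server-sent event lines."""
    for line in lines:
        line = line.strip()
        if not line.startswith("data:"):
            continue  # skip blank separator lines and other SSE fields
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break  # end-of-stream sentinel
        chunk = json.loads(data)
        for choice in chunk.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:
                yield text


# Hand-written sample lines, assumed for this example.
sample = [
    'data: {"choices": [{"delta": {"role": "assistant"}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "Hello"}}]}',
    'data: {"choices": [{"delta": {"content": ", world"}}]}',
    "data: [DONE]",
]
print("".join(iter_stream_text(sample)))
```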

From the developer's perspective, it feels simple: send a request, get a response. From the platform engineer's perspective, it's a fully orchestrated, adaptive system managing compute, traffic, and lifecycle in real time.

LLMInferenceService in KServe

KServe v0.16 introduces more generative AI capabilities, including LLMInferenceService, which is designed specifically for large language model workloads. The service provides OpenAI-compatible APIs, streaming token responses, and native integration with LLM runtimes. It is also built to handle high-concurrency workloads.

The service connects to optimized runtimes such as vLLM and Hugging Face TGI to enable continuous batching, paged attention, and KV-cache reuse. This makes each individual pod highly efficient, but that efficiency stops at the pod boundary: nothing coordinates these optimizations across replicas.

When KServe alone is not enough: The engineer's reality

Initial KServe deployments often look successful: the model is live, autoscaling responds to requests, and GPUs are active. That apparent success is tested once the system meets production-level traffic.

You might notice inconsistent performance, where some requests are fast while others are unexpectedly slow. GPU utilization can appear high without being effective, and identical prompts might fail to benefit from cache reuse. These issues cause tail latency to become unpredictable under load.

The root cause is that requests are routed without awareness of where their cached context already exists. KV-cache reuse, a key optimization in LLM inference, becomes effectively random in a multi-replica setup. A second challenge follows: the prefill and decode phases, two fundamentally different workloads, compete for the same GPUs.
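
A toy simulation shows why cache-blind routing wastes prefix reuse. It compares round-robin routing with a simple hash-based prefix affinity. This is an illustration only: the hash affinity is a stand-in chosen for brevity, not llm-d's actual scheduling algorithm, and the unbounded per-replica caches and workload shape are assumptions.

```python
import hashlib
from itertools import cycle


def round_robin_router(num_replicas: int):
    """Return a router that ignores the prompt and rotates through replicas."""
    order = cycle(range(num_replicas))
    return lambda prefix: next(order)


def prefix_affinity_router(num_replicas: int):
    """Return a router that always sends the same prefix to the same replica."""
    def route(prefix: str) -> int:
        digest = hashlib.sha256(prefix.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") % num_replicas
    return route


def count_cache_hits(prefixes, router, num_replicas: int) -> int:
    """Count requests whose prefix was already seen by the chosen replica."""
    caches = [set() for _ in range(num_replicas)]  # unbounded toy caches
    hits = 0
    for prefix in prefixes:
        replica = router(prefix)
        if prefix in caches[replica]:
            hits += 1
        else:
            caches[replica].add(prefix)
    return hits


# 24 requests cycling through 4 shared prompt prefixes, served by 3 replicas.
workload = [f"system-prompt-{i % 4}" for i in range(24)]
blind_hits = count_cache_hits(workload, round_robin_router(3), 3)
aware_hits = count_cache_hits(workload, prefix_affinity_router(3), 3)
print(f"round-robin hits: {blind_hits}/24, prefix-affinity hits: {aware_hits}/24")
```

In this toy workload, round-robin must warm every prefix on every replica before it sees any reuse, while affinity routing pays one miss per distinct prefix. Real caches are bounded and evict entries, which tends to widen the gap further.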

Finally, scaling decisions are reactive, not intelligent. The system scales based on load, but not on how efficiently that load is being processed.

At this point, the problem shifts. The challenge is no longer about deploying models; it's about coordinating routing, caching, and scheduling decisions across the cluster. That's where llm-d comes in.

Integrating KServe and llm-d: Why separation wins

It might be tempting to ask: Why not just build all of this into KServe?

The answer lies in the architecture. Keeping KServe and llm-d as separate layers is a deliberate design choice that enables composability. KServe focuses on what it does best: managing the model lifecycle, API exposure, and operational governance, such as autoscaling.

In contrast, llm-d handles different operational concerns, including runtime-aware scheduling, cache locality optimization, and routing decisions that span pods and nodes. Merging these into a single monolithic system would couple features that evolve at different speeds.

Instead, this layered approach gives platform teams:

- Flexibility: Swap runtimes or schedulers independently.

- Extensibility: Integrate future innovations without redesigning the stack.

- Clarity: Each layer has a clear responsibility.

From a platform engineering perspective, this is a win. Instead of a single tool trying to solve every problem, an effective system uses components that manage specific roles and work together through stable interfaces.

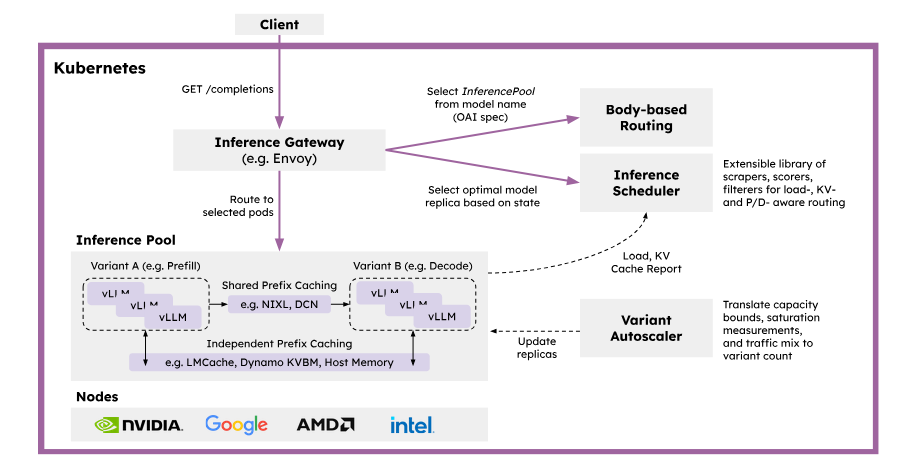

The architectural flow for intelligent request routing and distributed prefix caching in Kubernetes is shown in Figure 3.

KServe LLMInferenceService and llm-d: Responsibility separation

To build an evolvable AI inference platform, you must separate these operational concerns.

KServe manages the model lifecycle and governance, while LLMInferenceService provides the generative API abstraction. vLLM, as the serving runtime, executes the model, and llm-d provides routing across runtime replicas with KV-cache awareness. Finally, Kubernetes orchestrates the underlying resources.

This separation is what enables a production-ready, scalable, and evolvable AI inference platform.

Cost efficiency comparison: Naive versus optimized

Serving LLMs at scale is more than a model problem; it is a distributed systems problem.

Naive architectures can introduce cache locality loss, GPU imbalance, and duplicate computation. These issues lead to high tail latency and overprovisioned infrastructure.

With KServe and llm-d, these inefficiencies are systematically removed through intelligent routing and phase-aware execution.

Benchmark results: The before and after story

Before introducing llm-d, the system behaved like many real-world deployments. Requests were distributed evenly—but blindly. Cache reuse was inconsistent. GPU utilization looked high, but effective throughput told a different story. In practice, this meant we were leaving a significant portion of performance unused.

Once we introduced cache-aware routing and phase separation, system behavior improved. Requests began landing where their context already existed. Prefill and decode workloads stopped competing for the same resources. Schedulers began making decisions based on actual system state, not just traffic volume. These changes resulted in a measurable increase in efficiency.

Key outcomes:

- Up to 57× faster Time to First Token (P90)

- Roughly double the token throughput (~4,400 to ~8,730 tokens/sec)

- Approximately 50% reduction in tail latency

- More consistent and predictable performance under load

- Improved GPU utilization

| Optimization area | Naive architecture (round-robin LB) | Optimized (KServe + llm-d) | Source |
|---|---|---|---|
| Cache locality | Requests routed randomly → KV cache frequently missed | Cache-aware routing preserves prefix locality | KV-Cache Wins You Can See: From Prefix Caching in vLLM to Distributed Scheduling with llm-d |
| Time to First Token (P90) | Baseline latency under cache-blind scheduling | Up to ~57× faster P90 TTFT in benchmark | KV-Cache Wins You Can See: From Prefix Caching in vLLM to Distributed Scheduling with llm-d |
| Token throughput | ~4,400 tokens/sec (baseline test cluster) | ~8,730 tokens/sec (~2× improvement) | KV-Cache Wins You Can See: From Prefix Caching in vLLM to Distributed Scheduling with llm-d |
| Throughput at scale | Degrades under multi-tenant load | Sustained 4.5k–11k tokens/sec | llm-d 0.5: Sustaining Performance at Scale |
| Tail latency (P95/P99) | Higher tail latency due to stragglers and imbalance | ~50% tail latency reduction (reported tests) | llm-d: Kubernetes-native distributed inferencing |
| GPU utilization | Uneven utilization, idle GPUs possible | Improved effective utilization via routing intelligence | Well-lit Path: Intelligent Inference Scheduling |
| Autoscaling control | Scale reacts to load only | Works with KServe autoscaling and routing intelligence | Autoscaling with Knative Pod Autoscaler |

The model remained the same; the performance gains came from the orchestration and routing logic of the system.
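
To relate the throughput figures to cost, here is a back-of-the-envelope calculation. The GPU price and GPU count are hypothetical placeholders, and the sketch assumes both throughput numbers were measured on the same hardware, so treat the output as an illustration of the arithmetic rather than a reported result.

```python
def cost_per_million_tokens(gpu_hourly_cost: float, num_gpus: int,
                            tokens_per_sec: float) -> float:
    """Infrastructure cost to generate one million tokens at a given throughput."""
    tokens_per_hour = tokens_per_sec * 3600
    return gpu_hourly_cost * num_gpus / tokens_per_hour * 1_000_000


GPU_HOURLY_COST = 2.0  # hypothetical price per GPU-hour
NUM_GPUS = 8           # hypothetical cluster size

baseline = cost_per_million_tokens(GPU_HOURLY_COST, NUM_GPUS, 4400)
optimized = cost_per_million_tokens(GPU_HOURLY_COST, NUM_GPUS, 8730)

print(f"throughput ratio: {8730 / 4400:.2f}x")
print(f"cost ratio (optimized / baseline): {optimized / baseline:.2f}")
```

Whatever the actual price and cluster size, the cost ratio depends only on the throughput ratio: on identical hardware, roughly doubling tokens per second roughly halves the cost per token.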

KV-cache-aware scheduling and disaggregated inference with llm-d

As LLM deployments mature, scaling is no longer just about adding GPUs. It's about using them intelligently. Modern runtimes such as vLLM introduced prefix (KV) caching to reduce redundant computation, but without smart scheduling, much of that benefit is lost.

This is where llm-d provides a different approach.

Disaggregated inference (prefill and decode separation)

LLM inference consists of two phases: prefill and decode. The prefill phase is compute-heavy; it processes the full prompt and builds the model's attention context. The decode phase is latency-sensitive and generates tokens step by step, where responsiveness impacts user experience.

llm-d separates these phases across different GPU groups, assigning compute-optimized resources to prefill and latency-optimized resources to decode. With intelligent scheduling between them, workloads are aligned to the right hardware profile.

This phase-aware architecture increases GPU utilization, reduces tail latency, and lowers cost per token by eliminating resource contention between different workloads.
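
The handoff between the two phases can be pictured with a toy pipeline. Every class and function below is invented for illustration and does not reflect llm-d's internal interfaces: a prefill worker processes the prompt and produces a handle to the resulting KV cache, and a decode worker from a separate pool consumes that handle to generate tokens.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class KVHandle:
    """Stand-in for the KV cache that prefill hands over to decode."""
    request_id: str
    prompt_tokens: int


class PrefillWorker:
    def run(self, request_id: str, prompt: str) -> KVHandle:
        # Toy tokenization by whitespace; real prefill runs the model over the prompt.
        return KVHandle(request_id, prompt_tokens=len(prompt.split()))


class DecodeWorker:
    def run(self, kv: KVHandle, max_new_tokens: int) -> List[str]:
        # Toy decode: emit one placeholder token per step.
        return [f"tok{step}" for step in range(max_new_tokens)]


def serve(prompt: str, prefill_pool: List[PrefillWorker], decode_pool: List[DecodeWorker],
          request_number: int, max_new_tokens: int = 3) -> Tuple[KVHandle, List[str]]:
    """Send a request through a prefill worker, then a decode worker."""
    prefill = prefill_pool[request_number % len(prefill_pool)]
    decode = decode_pool[request_number % len(decode_pool)]
    kv = prefill.run(f"req-{request_number}", prompt)
    return kv, decode.run(kv, max_new_tokens)


# Pools are sized independently, which is the point of disaggregation.
kv, tokens = serve("Explain prefill and decode", [PrefillWorker()],
                   [DecodeWorker(), DecodeWorker()], request_number=0)
print(kv.prompt_tokens, tokens)
```

Because the pools are separate lists, each can be scaled to match its own bottleneck. In a real system, transferring the KV cache between workers carries a cost that this sketch ignores.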

Intelligent inference scheduler

llm-d's inference scheduler evaluates the following signals:

- GPU utilization

- Queue depth

- Cache residency

- SLA constraints

- Load distribution

Using these signals, the scheduler reduces serving latency and increases throughput through prefix-cache-aware routing, utilization-based load balancing, fairness and prioritization for multi-tenant serving, and predicted-latency balancing.
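
One way to picture how such signals combine is a weighted score per candidate pod. The sketch below is a simplified illustration, not llm-d's scheduler: the field names, weights, and queue cap are hypothetical, and SLA constraints and fairness are omitted.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PodState:
    name: str
    gpu_utilization: float         # 0.0 to 1.0
    queue_depth: int               # requests waiting on this pod
    cached_prefix_fraction: float  # share of the prompt prefix already cached here


# Hypothetical weights and cap, chosen only to make the example readable.
WEIGHTS = {"cache": 2.0, "queue": 1.0, "utilization": 0.5}
MAX_QUEUE = 16


def score(pod: PodState) -> float:
    """Higher is better: reward cache residency, penalize queueing and load."""
    queue_pressure = min(pod.queue_depth / MAX_QUEUE, 1.0)
    return (WEIGHTS["cache"] * pod.cached_prefix_fraction
            - WEIGHTS["queue"] * queue_pressure
            - WEIGHTS["utilization"] * pod.gpu_utilization)


def pick_pod(pods: List[PodState]) -> PodState:
    """Choose the pod with the highest combined score."""
    return max(pods, key=score)


pods = [
    PodState("pod-a", gpu_utilization=0.90, queue_depth=12, cached_prefix_fraction=0.9),
    PodState("pod-b", gpu_utilization=0.40, queue_depth=2, cached_prefix_fraction=0.0),
    PodState("pod-c", gpu_utilization=0.60, queue_depth=4, cached_prefix_fraction=0.8),
]
print(pick_pod(pods).name)
```

In this example, pod-a has the best cache match but is heavily loaded, and pod-b is idle but has nothing cached, so the combined score favors pod-c. That is the kind of trade-off a purely load-based or purely cache-based policy would miss.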

Conclusion

Modern gen AI platforms require more than fast runtimes. They require cache locality awareness, phase-aware scheduling, and distributed intelligence within a composable, Kubernetes-native architecture. By combining KServe and llm-d, platform teams can move from serving models to operating efficient inference systems at scale.

To go further, explore the KServe and llm-d project documentation, and engage with community resources and Slack channels to stay updated and contribute to ongoing developments.