In a perfect cloud-native world, everything runs in a container. But in the real enterprise world, critical data often lives on virtual machines (VMs). These workloads could be anything from a massive PostgreSQL database to a legacy payment processor, and they should not be left out of your zero trust architecture.

Since its introduction, Red Hat OpenShift Service Mesh 3 has been closely aligned with the upstream Istio project. We are now adding the ability to integrate external virtual machine workloads into OpenShift Service Mesh as a Developer Preview feature.

In a recent learning path, we explored how to incorporate a VM deployed with Red Hat OpenShift Virtualization into OpenShift Service Mesh. In this post, we will explore how to connect an external VM with an OpenShift Service Mesh 3.3 deployment. We'll take the classic example Bookinfo application and connect it to a database outside the cluster. The database will be running on a Red Hat Enterprise Linux 9.6-based VM, which will not have direct connectivity to the pod networks.

Why connect an external VM?

Why go through the trouble of installing a service mesh sidecar on a VM?

- Zero trust security: You can enforce mutual Transport Layer Security (mTLS) between your containerized front-end and your VM-based database, and will not need to maintain a list of allowed IP addresses.

- Observability: You can get "golden signal" metrics (latency, traffic, errors) for your database automatically.

- Traffic management: You can use advanced mesh features like circuit breaking or retries for connections to the VM.

Architecture and considerations

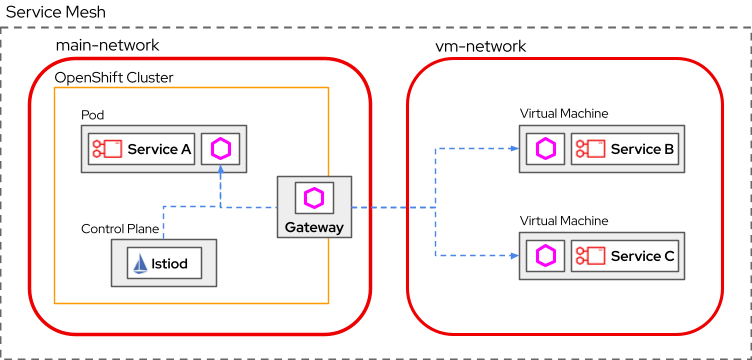

Before we dive into the shell commands, let's look at the overall architecture. Integrating VMs into a service mesh is a bigger job than just opening the right ports; you also need to extend the control plane's reach.

Approaches to integration

By default, Istio will not restrict access to external services. But a recommended configuration would place strict controls on egress traffic and limit requests to certain known external resources. We can create a ServiceEntry for each known workload that tells the mesh how to reach it. The mesh can route to it, but the workload has no mesh capabilities. It has no identity or mTLS, and reports no metrics.

If the workloads are running on a VM with supported operating systems, we can extend the integration further by running an Envoy proxy alongside the workloads. The proxy will help establish mTLS connections to and from the mesh, as well as enable all the service mesh features. This will let the VM become a fully integrated workload with its own identity.

Network topology

According to the Istio community guidance, there are generally two network topologies:

- Single-network: The VM can access the pod network and traffic can flow between the VM and the pods directly. When we bring up a VM in OpenShift Virtualization, the VM can be placed in this flat network setup.

- Multi-network: The VM and the pods are on different networks. The VM may require a gateway to route the traffic to the pods.

We are dealing with a multi-network mesh setup, which is a common case for real-world connectivity scenarios. Our example will use two networks:

- main-network (pod network): The Bookinfo microservices run here.

- vm-network (VM LAN): The Red Hat Enterprise Linux 9 VM running the database runs here. It is on an external network.

The solution: East-west gateway

Since the networks are disconnected, we use an east-west gateway on the cluster edge to bridge the two networks.

- Control plane traffic: The VM connects to the gateway's public IP address to talk to istiod (fetching certs/config).

- Data plane traffic: By default, all workloads on any network are assumed to be directly reachable from each other, and traffic is sent as is. A gateway is needed for multi-network routing only when a network is not directly addressable. If main-network workloads are unreachable from other networks, we configure a gateway to handle their traffic. All requests to main-network from a different network are then forwarded to the gateway, which uses the request's Server Name Indication (SNI) header to determine which workload it should forward to.

In this example the VM can't address the pod network directly, so we will instruct the VM to send the mesh traffic to the edge gateway. To simplify our setup, we will attach a public IP address to the VM so that we can easily route traffic to it. This provides direct connectivity from any workload in the mesh. The encrypted traffic will be treated as any other outbound traffic.
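To make the SNI-based forwarding more concrete, the following sketch splits an SNI value into its parts. It assumes the naming convention that upstream Istio uses for cross-network traffic (outbound_.PORT_.SUBSET_.HOSTNAME), which this post does not define, and the function name parse_mesh_sni is hypothetical. The real routing decision happens inside the gateway's Envoy proxy, not in a script.

```shell
# Illustration only: split an Istio-style cross-network SNI value into its parts.
# Assumed format (upstream Istio convention): outbound_.<port>_.<subset>_.<hostname>
parse_mesh_sni() (
  sni=$1
  case $sni in
    outbound_.*_.*_.*) ;;
    *) echo "not a mesh SNI: $sni" >&2; exit 1 ;;
  esac
  rest=${sni#outbound_.}   # strip the direction prefix
  port=${rest%%_.*}        # text before the first "_."
  rest=${rest#*_.}
  subset=${rest%%_.*}      # empty when no subset is used
  host=${rest#*_.}
  echo "port=$port subset=$subset host=$host"
)

# Example (not executed here):
#   parse_mesh_sni 'outbound_.9080_._.ratings.bookinfo.svc.cluster.local'
```

The hostname part is what lets a single gateway listener serve many services without terminating TLS.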

Environment

- Cluster: Red Hat OpenShift Container Platform 4.16 or later.

- Service mesh: OpenShift Service Mesh 3.3 or later. You can follow the instructions to set up OpenShift Service Mesh.

- Bookinfo application: Follow the instructions to deploy the Bookinfo sample applications with an ingress gateway. Make sure you can access the /productpage endpoint through the ingress gateway.

- Monitoring stack and Kiali: Turn on the monitoring stack for Red Hat OpenShift for user-defined projects. Follow the instructions to enable the monitoring stack with OpenShift Service Mesh. Next, follow the instructions to install the Kiali operator and configure it with the monitoring stack.

- Virtual machine: Red Hat Enterprise Linux 9.6 or later.

- Workstation:

  - oc: Follow the instructions to set up the oc CLI.

  - istioctl: Follow the instructions to set up the istioctl CLI.

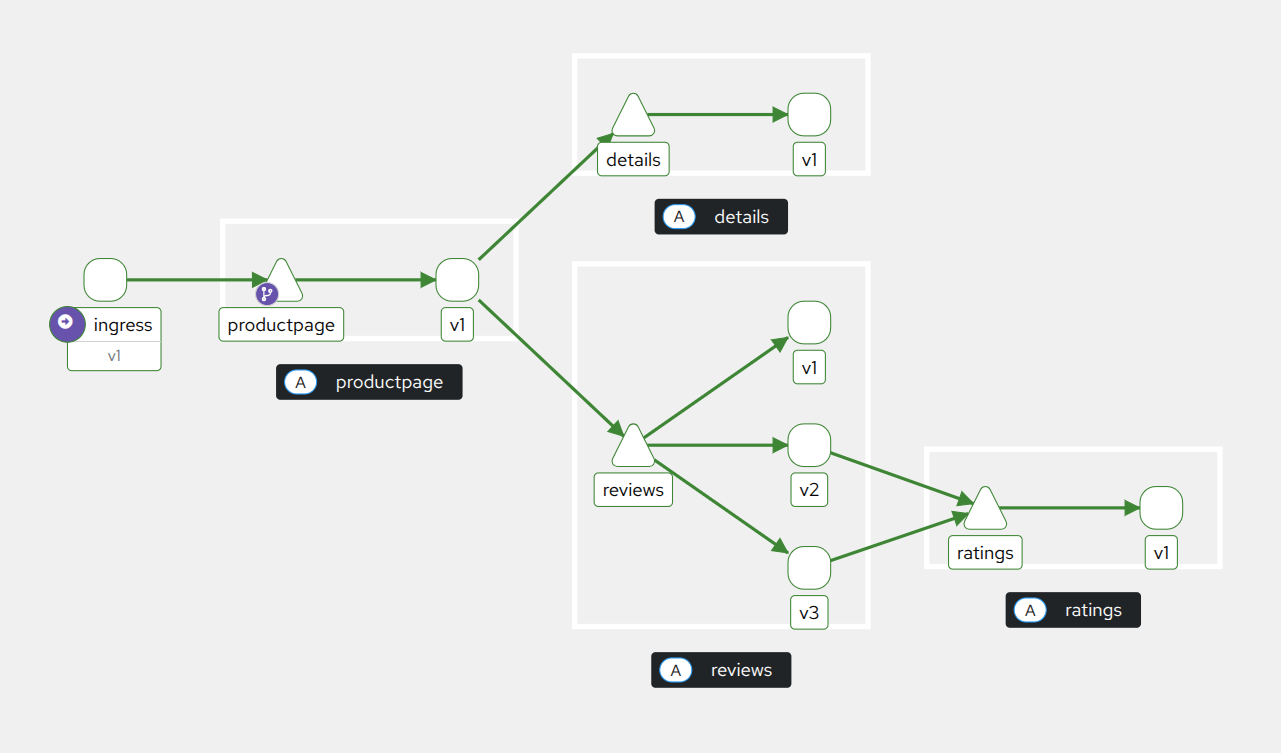

We will start with a simple version of the Bookinfo applications as shown in Figure 2.

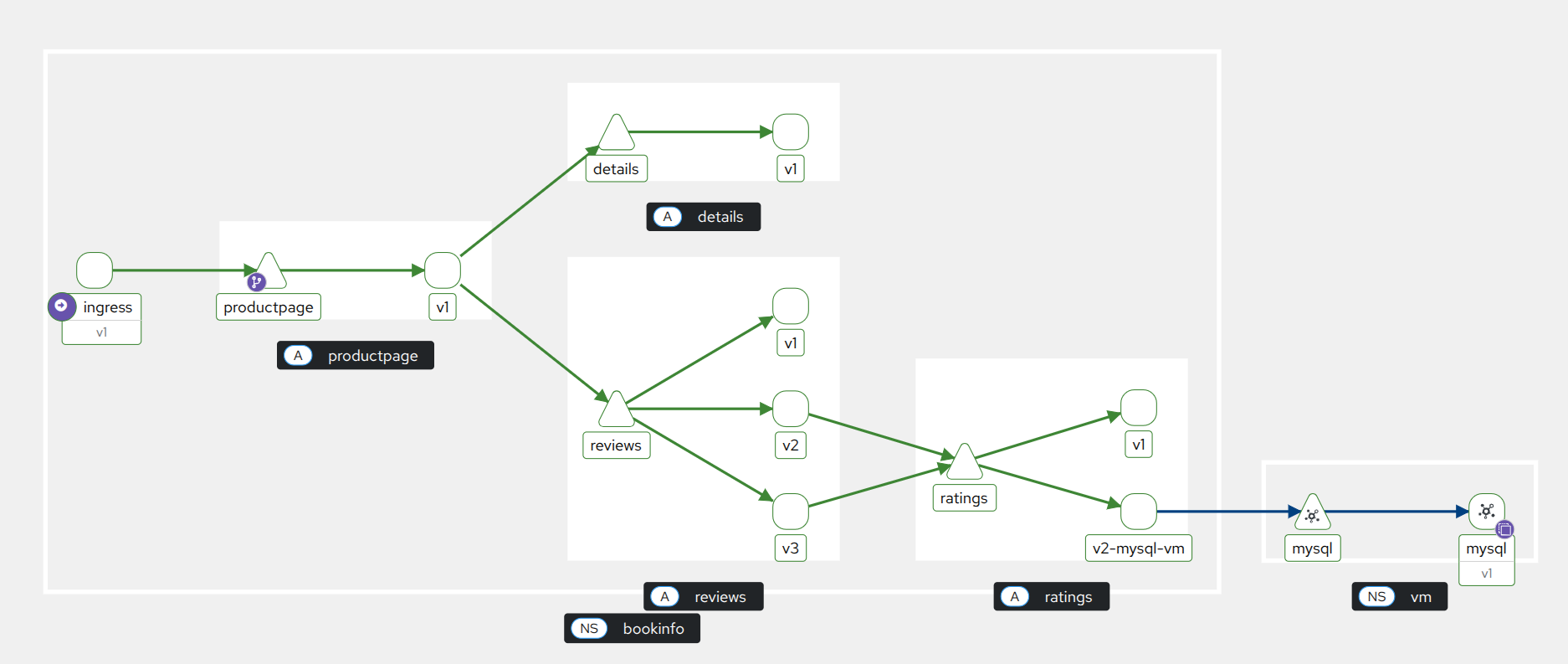

When we reach the end of the article, you will have a new version of the ratings application that will retrieve reviewers' ratings from a MySQL server running on an off-cluster VM.

Step 1: Configure the control plane for multi-network

We need to define the networks logically. Remember, we're calling the OpenShift cluster main-network and the VM environment vm-network.

1. Update the Istio Control Plane

In OpenShift Service Mesh 3.3, we set a network name, mesh ID, and cluster name in our Istio resource. This tells the control plane about the network topology, including the fact that there will be other networks outside the pod network.

    apiVersion: sailoperator.io/v1
    kind: Istio
    metadata:
      name: default
    spec:
      namespace: istio-system
      values:
        global:
          meshID: mesh1
          network: main-network # Identifies the OpenShift network
          multiCluster:
            clusterName: cluster1

2. Deploy the east-west gateway

This gateway acts as the bridge. It listens on main-network for traffic destined for vm-network. We use sni-dnat mode to route traffic based on SNI header for the traffic originating from vm-network. The VM only knows that it should send the mesh traffic to the gateway. The gateway will then route the traffic to the appropriate service in the cluster by looking at the SNI header.

This is different from the standard ingress gateway, where the gateway terminates the TLS and routes the traffic to the appropriate service based on the hostname. The sni-dnat mode is critical for multi-network routing. The mode is set automatically when Passthrough is detected in the Gateway resources.

    apiVersion: v1
    kind: Service
    metadata:
      name: istio-eastwestgateway
      namespace: istio-system
      labels:
        istio: eastwestgateway
    spec:
      type: LoadBalancer
      selector:
        istio: eastwestgateway
      ports:
      - port: 15012
        targetPort: 15012
        name: tcp-istiod # For Control Plane connections (xDS - discovery service)
      - port: 15017
        targetPort: 15017
        name: tcp-webhook
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: istio-eastwestgateway
      namespace: istio-system
    spec:
      selector:
        matchLabels:
          istio: eastwestgateway
      template:
        metadata:
          annotations:
            # Select the gateway injection template (rather than the default sidecar template)
            inject.istio.io/templates: gateway
          labels:
            istio: eastwestgateway
            sidecar.istio.io/inject: "true"
        spec:
          containers:
          - name: istio-proxy
            image: auto
            env:
            - name: ISTIO_META_REQUESTED_NETWORK_VIEW
              value: "main-network"

Note that we set ISTIO_META_REQUESTED_NETWORK_VIEW to main-network so this gateway receives only endpoints from main-network from the Istiod control plane. This ensures its Envoy configuration is populated only with endpoints reachable in that network. We do this because the gateway routes inbound traffic using the SNI value to select the destination service, while the requested network view ensures that only main-network endpoints are considered for that routing decision.
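Once the gateway Service exists, its load balancer needs an external address before the VM can use it. The sketch below prints that address. Depending on the platform, the address may be published as an IP or as a hostname, so it tries both fields. The function name gateway_address is hypothetical, and the default namespace and Service name are taken from the manifest above.

```shell
# Sketch: print the external address of the east-west gateway Service.
# Some platforms publish an IP, others a hostname, so both fields are tried.
gateway_address() (
  ns=${1:-istio-system}
  svc=${2:-istio-eastwestgateway}
  for field in ip hostname; do
    addr=$(oc -n "$ns" get svc "$svc" \
      -o jsonpath="{.status.loadBalancer.ingress[0].$field}")
    if [ -n "$addr" ]; then
      echo "$addr"
      exit 0
    fi
  done
  echo "no external address assigned to $svc yet" >&2
  exit 1
)

# Example (requires a logged-in oc session, not executed here):
#   gateway_address istio-system istio-eastwestgateway
```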

3. Expose the control plane

The VM in vm-network needs to reach the istiod service in main-network to get its configuration. We expose istiod through the east-west gateway.

    apiVersion: networking.istio.io/v1
    kind: Gateway
    metadata:
      name: istiod-gateway
      namespace: istio-system
    spec:
      selector:
        istio: eastwestgateway
      servers:
      - port: { number: 15012, protocol: TLS, name: tls-xds }
        tls: { mode: PASSTHROUGH }
        hosts: ["istiod.istio-system.svc"] # This should match the istiod service in your environment.
      - port: { number: 15017, protocol: TLS, name: tls-webhook }
        tls: { mode: PASSTHROUGH }
        hosts: ["istiod.istio-system.svc"] # This should match the istiod service in your environment.
    ---
    apiVersion: networking.istio.io/v1
    kind: VirtualService
    metadata:
      name: istiod-vs
      namespace: istio-system
    spec:
      hosts: ["istiod.istio-system.svc"]
      gateways: [istiod-gateway]
      tls:
      - match:
        - port: 15012
          sniHosts: ["*"]
        route:
        - destination:
            host: istiod.istio-system.svc.cluster.local
            port: { number: 15012 }
      - match:
        - port: 15017
          sniHosts:
          - "*"
        route:
        - destination:
            host: istiod.istio-system.svc.cluster.local
            port:
              number: 443
    ---
    apiVersion: networking.istio.io/v1
    kind: Gateway
    metadata:
      name: cross-network-gateway
    spec:
      selector:
        istio: eastwestgateway
      servers:
      - port:
          number: 15443
          name: tls
          protocol: TLS
        tls:
          mode: AUTO_PASSTHROUGH
        hosts:
        - "*.local"

We need to label the istio-system namespace with main-network.

    oc label namespace istio-system topology.istio.io/network="main-network"

Step 2: Generate identity artifacts

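We should already have a bookinfo namespace that was created when we deployed the Bookinfo applications. The VM resources in the manifests below use a separate namespace named vm, so create it first if it does not exist (for example, with oc create namespace vm).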

1. Create the WorkloadGroup

A WorkloadEntry describes how to connect to a non-Kubernetes workload, while a WorkloadGroup defines a reusable template for a group of WorkloadEntries. In setups where PILOT_ENABLE_WORKLOAD_ENTRY_AUTOREGISTRATION is not enabled, each VM must have a corresponding WorkloadEntry created manually before it can join the mesh, which makes the process brittle and error-prone. With autoregistration enabled, the control plane automatically creates a WorkloadEntry when the VM connects, using the WorkloadGroup as a template, which eliminates this manual step.

In OpenShift Service Mesh 3.3, both PILOT_ENABLE_WORKLOAD_ENTRY_AUTOREGISTRATION and PILOT_ENABLE_WORKLOAD_ENTRY_HEALTHCHECKS are enabled by default. Let us define a WorkloadGroup for our ratings service in the VM.

Note that PILOT_ENABLE_WORKLOAD_ENTRY_HEALTHCHECKS is optional, but we recommend keeping it enabled, because it ensures that the health checks use the agent's health reporting.

    # vm-workloadgroup.yaml
    apiVersion: networking.istio.io/v1
    kind: WorkloadGroup
    metadata:
      name: mysql-vm-group
      namespace: vm
    spec:
      metadata:
        labels:
          app: mysql
          class: vm-workload
          version: v2
      template:
        serviceAccount: mysql-vm
        network: vm-network # Defines this workload as belonging to the external network

2. Generate the token manually

Prior to OpenShift Container Platform 4.16, a long-lived service account API token secret was generated for each service account that was created. Starting with OpenShift Container Platform 4.16, this service account API token secret is no longer created. We need to generate a bound service account token for the VM with a specific audience (istio-ca) to authenticate.

    # Create the ServiceAccount
    oc create sa mysql-vm -n vm

    # Generate the token with audience 'istio-ca'
    oc create token mysql-vm -n vm --audience=istio-ca --duration=24h > istio-token

3. Generate configuration files

We will use istioctl to generate the configuration files, but we will overwrite the token it tries to generate. The config files are:

- cluster.env: Contains metadata that identifies the namespace, service account, network and CIDR, along with (optionally) the inbound ports to capture.

- istio-token: A Kubernetes token used to get certs from the Certificate Authority (CA).

- mesh.yaml: Provides ProxyConfig to configure discoveryAddress, health-checking probes, and some authentication options.

- root-cert.pem: The root certificate used to authenticate.

- hosts: An addendum to /etc/hosts that the proxy will use to reach istiod for xDS.

    export WORK_DIR=./vm-bootstrap
    mkdir -p "${WORK_DIR}"

    # Get the public IP of the east-west gateway
    GW_HOSTNAME=$(oc -n istio-system get svc istio-eastwestgateway -o jsonpath='{.status.loadBalancer.ingress[0].hostname}')
    GW_IP=$(dig +short "${GW_HOSTNAME}" | head -n 1)

    istioctl x workload entry configure \
      -f vm-workloadgroup.yaml \
      -o "${WORK_DIR}" \
      --clusterID "cluster1" \
      --ingressIP "${GW_IP}" \
      --autoregister

    # CRITICAL: Overwrite the auto-generated token with our OCP-compatible one
    cp istio-token "${WORK_DIR}/istio-token"

Step 3: Configure the VM

Switch to your Red Hat Enterprise Linux 9.6 VM console.

1. Install the sidecar

We use the upstream RPM (Red Hat Package Manager) packages that match our OpenShift Service Mesh Istio resource version (1.28.4).

Note that these RPM packages are upstream artifacts, and are thus not supported by Red Hat.

    # Download the Istio 1.28.4 sidecar RPM
    curl -LO https://storage.googleapis.com/istio-release/releases/1.28.4/rpm/istio-sidecar.rpm

    # Install iptables-nft dependencies for the sidecar
    sudo dnf install iptables-nft -y

    # Install the sidecar RPM
    sudo rpm -ivh istio-sidecar.rpm

2. Install configuration files

Copy the files from ${WORK_DIR} on your workstation to the VM. You can use scp to transfer the files. After that, follow these steps to move the files to the correct locations.
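Before copying, a quick check like the following sketch can confirm that the bootstrap directory on the workstation contains every file listed earlier. The function name check_bootstrap_files is hypothetical, and the default directory matches the WORK_DIR value used in this post.

```shell
# Sketch: confirm the bootstrap directory holds every generated file.
check_bootstrap_files() (
  dir=${1:-./vm-bootstrap}
  missing=0
  for f in cluster.env istio-token mesh.yaml root-cert.pem hosts; do
    # -s is true only for files that exist and are not empty
    if [ ! -s "$dir/$f" ]; then
      echo "missing or empty: $dir/$f" >&2
      missing=1
    fi
  done
  exit "$missing"
)

# Example (not executed here):
#   check_bootstrap_files "${WORK_DIR}" && echo "bootstrap files look complete"
```

An empty istio-token file is worth catching here, because it would only surface later as an authentication failure when the sidecar starts.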

    # Create all required directories, including the two copy targets below
    # (-p makes this a no-op for directories that already exist)
    sudo mkdir -p /etc/istio/proxy /etc/istio/config /var/lib/istio/envoy /var/run/secrets/tokens /etc/certs

    # Place files (scp these from your workstation first)
    sudo cp root-cert.pem /etc/certs/root-cert.pem
    sudo cp istio-token /var/run/secrets/tokens/istio-token
    sudo cp cluster.env /var/lib/istio/envoy/cluster.env
    sudo cp mesh.yaml /etc/istio/config/mesh

    # Ensure ownership
    sudo chown -R istio-proxy:istio-proxy /etc/istio /var/run/secrets/tokens /etc/certs /var/lib/istio

3. Configure networking (multi-network specific)

In a multi-network setup, the VM cannot resolve cluster domain names. We must manually map istiod to the east-west gateway public IP address inside /etc/hosts, so the sidecar can initiate the bootstrap process with the control plane.

    cat hosts | sudo tee -a /etc/hosts

Step 4: Putting it into practice with Bookinfo

Now that the VM is connected to the control plane, let's connect a new ratings provider service to the Bookinfo applications in the mesh.

1. Prepare the database on the Red Hat Enterprise Linux VM

On your Red Hat Enterprise Linux 9 VM, install and configure MariaDB to serve as the back end for the Bookinfo ratings service.

    # Install MariaDB
    sudo dnf install mariadb-server -y
    sudo systemctl enable --now mariadb

    # Secure installation (optional for demo) and configure DB
    sudo mysql -u root <<EOF
    GRANT ALL PRIVILEGES ON test.* TO 'root'@'localhost' IDENTIFIED BY 'easy';
    GRANT ALL PRIVILEGES ON test.* TO 'root'@'127.0.0.1' IDENTIFIED BY 'easy';
    FLUSH PRIVILEGES;
    EOF

    # Load the Bookinfo ratings schema
    curl -s https://raw.githubusercontent.com/openshift-service-mesh/istio/refs/heads/release-1.27/samples/bookinfo/src/mysql/mysqldb-init.sql | sudo mysql -u root -peasy

2. Configure VM firewall and start Istio

We need to allow traffic on the Red Hat Enterprise Linux firewall. However, you do not need to configure iptables rules for traffic interception manually. The Istio systemd service includes a script (istio-start.sh) that automatically configures the necessary iptables redirections when the service starts.

    # 1. Open firewall ports
    # 3306: MySQL, 15021: Health Checks, 15090: Prometheus Metrics
    sudo firewall-cmd --permanent --add-port=3306/tcp
    sudo firewall-cmd --permanent --add-port=15021/tcp
    sudo firewall-cmd --permanent --add-port=15090/tcp
    sudo firewall-cmd --reload

    # 2. Start the Istio service
    # This will automatically apply the iptables rules to intercept traffic
    sudo systemctl enable --now istio

You can verify the rules were applied by checking sudo iptables -t nat -L. You should see chains named ISTIO_INBOUND, ISTIO_OUTPUT, etc.
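If you prefer a scripted check over reading the rule listing by eye, the sketch below reads the iptables output on standard input and confirms that the two chains named above are present. The function name has_istio_chains is hypothetical, and it checks only these two chains, not the full rule set.

```shell
# Sketch: read `iptables -t nat -L` output on stdin and confirm the Istio chains exist.
has_istio_chains() (
  rules=$(cat)
  for chain in ISTIO_INBOUND ISTIO_OUTPUT; do
    # Chain headers in the listing look like "Chain NAME (N references)"
    if ! printf '%s\n' "$rules" | grep -q "^Chain $chain "; then
      echo "chain not found: $chain" >&2
      exit 1
    fi
  done
)

# Example (run on the VM, not executed here):
#   sudo iptables -t nat -L | has_istio_chains && echo "interception rules present"
```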

3. Expose the VM database to the mesh

Back on your Red Hat OpenShift workstation, define a ServiceEntry. This tells the mesh that the MySQL database is running on our VM in vm-network.

    apiVersion: networking.istio.io/v1
    kind: ServiceEntry
    metadata:
      name: mysql
      namespace: vm
    spec:
      hosts:
      - mysqldb.vm.svc.cluster.local
      location: MESH_INTERNAL
      ports:
      - number: 3306
        name: mysql
      resolution: STATIC
      workloadSelector:
        labels:
          app: mysql
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: mysql
      namespace: vm
      labels:
        app: mysql
    spec:
      ports:
      - port: 3306
        name: mysql
        appProtocol: MYSQL
      selector:
        app: mysql

The WorkloadGroup you created earlier automatically generated a WorkloadEntry with the VM's IP address when the sidecar connected. Here's what happens now:

- The ServiceEntry selects that WorkloadEntry via the labels and directs the traffic to the Service representing the workloads.

- When a pod in the cluster connects to mysqldb.vm.svc.cluster.local:3306, the traffic is routed directly to the VM in our case.

Note that this direct routing makes it difficult to identify the real public IP address of the VM, which we will discuss momentarily in the “Limitations and considerations” section. We could specify a gateway for vm-network routing, which would enable a more flexible network situation, but this is beyond the scope of this blog post.
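To confirm that autoregistration actually produced a WorkloadEntry for the VM, a check along these lines can be run from the workstation. This is a minimal sketch: the function name vm_registered is hypothetical, and the default namespace vm is taken from the WorkloadGroup manifest in this post.

```shell
# Sketch: succeed when at least one WorkloadEntry exists in the given namespace.
vm_registered() (
  ns=${1:-vm}
  entries=$(oc -n "$ns" get workloadentries -o name)
  if [ -z "$entries" ]; then
    echo "no WorkloadEntry found in namespace $ns" >&2
    exit 1
  fi
  echo "$entries"
)

# Example (requires a logged-in oc session, not executed here):
#   vm_registered vm
```

If nothing is listed, the sidecar on the VM has probably not connected to istiod yet, so the VM-side service logs are a reasonable next place to look.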

4. Switch ratings to use the VM database

We will now deploy ratings-v2, which is configured to talk to a MySQL database instead of using Bookinfo's local mock data. We point it to the hostname defined in our ServiceEntry.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: ratings-v2
      namespace: bookinfo
      labels:
        app: ratings
        version: v2
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: ratings
          version: v2
      template:
        metadata:
          labels:
            app: ratings
            version: v2
        spec:
          serviceAccountName: bookinfo-ratings
          containers:
          - name: ratings
            image: quay.io/sail-dev/examples-bookinfo-ratings-v2:1.20.3
            ports:
            - containerPort: 9080
            env:
            - name: DB_TYPE
              value: "mysql"
            - name: DB_HOST
              value: "mysqldb.vm.svc.cluster.local"
            - name: DB_PORT
              value: "3306"
            - name: DB_USER
              value: "root"
            - name: DB_PASSWORD
              value: "easy"

Apply this deployment. Once the pod is running, generate traffic to the Bookinfo productpage. The ratings service will now connect to your Red Hat Enterprise Linux 9.6 VM to fetch ratings data!
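One simple way to generate that traffic is a small loop around curl, as in the sketch below. The function name generate_traffic is hypothetical, and the URL is a placeholder for the /productpage endpoint exposed by your own ingress gateway.

```shell
# Sketch: request a URL a number of times and print each HTTP status code.
generate_traffic() (
  url=$1
  count=${2:-20}
  i=0
  while [ "$i" -lt "$count" ]; do
    curl -s -o /dev/null -w '%{http_code}\n' "$url"
    i=$((i + 1))
  done
)

# Example (GATEWAY_URL is a placeholder for your ingress gateway host, not executed here):
#   generate_traffic "http://${GATEWAY_URL}/productpage" 50
```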

Limitations and considerations

This is a small sample application, and the implementation therefore has some limitations.

Gateway

The east-west gateway is a critical component of our multi-network setup. It is responsible for routing traffic between main-network and vm-network.

Ports

We are exposing two of the istiod ports to the public network. Port 15012 serves the xDS and CA services over TLS and mTLS. Port 15017 serves the webhook services over TLS. Even though the ports are exposed, the traffic is mTLS-encrypted, and istiod verifies the identity of the VM.

Reachability

Our VM needs to be reachable from the gateway. The WorkloadEntry contains the IP address that the VM registers with. This is the address to which the gateway forwards traffic from the mesh to the VM.

VM

There are a few points to note about the VM and how it integrates into the mesh network.

Identity

The VM is not a Kubernetes workload, and it does not have a Kubernetes service account. We create a ServiceAccount for the VM, which is used to generate a token for the VM for the initial bootstrap. The token is used to authenticate the VM to istiod. In our case, we generate a 24-hour short-lived token. The Envoy proxy in the VM will rotate the certificates automatically. It will be just like the proxy sidecar in a pod. We may need to regenerate the token if the VM is stopped for a long period of time, depending on the certificate time to live (TTL) settings.
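Because the bootstrap token is short-lived, it can be useful to look at its claims before copying it to the VM. The sketch below decodes the payload segment of the token file so that you can read the aud and exp claims. It assumes the token is a standard three-part JWT and that a base64 command supporting -d is available. The function name jwt_payload is hypothetical, and the sketch does not verify the signature.

```shell
# Sketch: print the decoded payload (claims) of a JWT such as the istio-token file.
jwt_payload() (
  token=$(tr -d '\n' < "$1")
  # Take the middle segment and map the base64url alphabet to standard base64
  payload=$(printf '%s' "$token" | cut -d. -f2 | tr '_-' '/+')
  # base64url omits padding; restore it so base64 can decode the segment
  case $(( ${#payload} % 4 )) in
    2) payload="$payload==" ;;
    3) payload="$payload=" ;;
  esac
  printf '%s' "$payload" | base64 -d
)

# Example (not executed here):
#   jwt_payload ./istio-token
```

For the token generated in this post, the aud claim should include istio-ca, and exp should be roughly 24 hours after the time of creation.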

Traffic

Before the Envoy proxy starts, pilot-agent will run a series of iptables rules to intercept the traffic on all interfaces. All outbound traffic from the VM will be redirected to the Envoy proxy. This may affect other applications running on the VM.

Firewall

The firewall will intercept the traffic before the rules added by the pilot-agent. For a running legacy application, the firewall should already be configured to open the necessary ports. There should be no change needed.

VM public IP addresses

It is common to use Network Address Translation (NAT) when routing public IP traffic to the VM. This makes it difficult for the proxy to discover its own public IP address. A similar issue occurs if multiple network interface cards (NICs) are attached to the VM: The mesh will simply assign the first address it sees to the VM. Even though we have the PILOT_ENABLE_WORKLOAD_ENTRY_AUTOREGISTRATION feature to ease the addition of new VMs, it does not cover complex network setup.

Conclusion

You have successfully hybridized the Bookinfo application! The productpage runs in containers on OpenShift, while the critical ratings data resides securely on a Red Hat Enterprise Linux 9.6 VM, fully integrated into OpenShift Service Mesh 3.3.