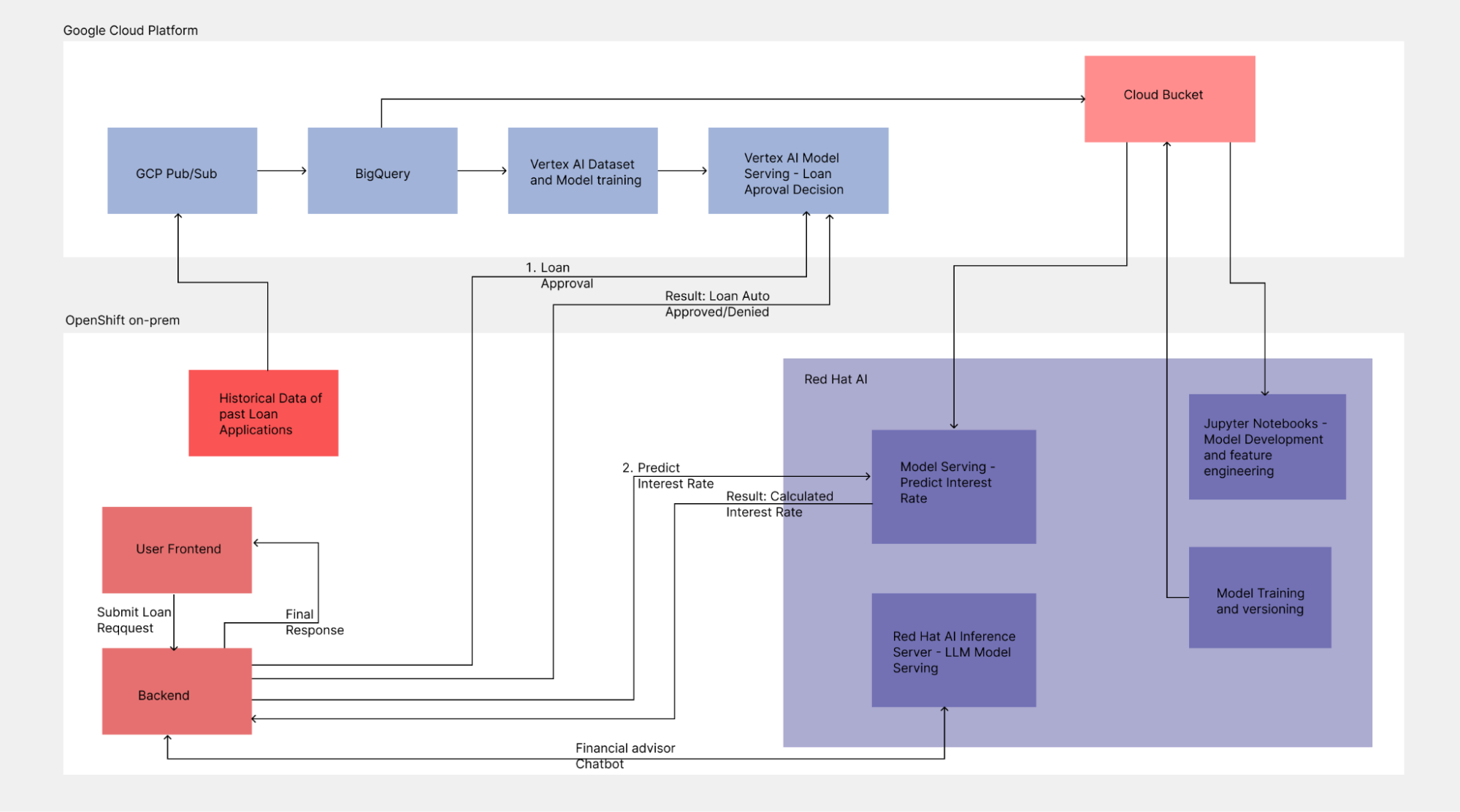

This blog presents a practical solution pattern that demonstrates how a modern financial application can make loan decisions using multiple machine learning (ML) systems deployed across hybrid environments. The architecture reflects real-world financial services requirements, where regulatory, compliance, and data residency constraints influence where models are deployed.

A distributed architecture for regulated environments

In this pattern, a loan approval classifier runs on Google Cloud using Vertex AI, while an ONNX-based regression model for interest rate prediction is deployed on Red Hat OpenShift AI running on premise. Many financial institutions require sensitive customer and risk data to remain on premise or within tightly controlled environments. Deploying OpenShift AI on premise enables these organizations to run ML workloads close to regulated data while still integrating with cloud-based services.

A lightweight React frontend and a FastAPI backend orchestrate both models, delivering a unified application experience despite the distributed deployment. The models are intentionally hosted in different environments to illustrate a core hybrid principle: models run where data resides.

The architecture also includes a Llama-based chatbot deployed on Red Hat OpenShift Container Platform using Red Hat AI Inference Server. This setup provides efficient on premise inference and contextual guidance while maintaining full control over enterprise data.

This article walks through the implementation, integration, and operational considerations of this hybrid AI setup. It shares a realistic and reproducible pattern for organizations building intelligent applications across OpenShift and Google Cloud, especially for teams with strong systems or DevOps backgrounds. You can find the project on GitHub.

Figure 1 shows the overall architecture.

Build a hybrid AI foundation with Google Cloud and Vertex AI

The process begins with a data ingestion pipeline and a gatekeeper model deployed in Vertex AI. This model serves as the first line of defense, determining whether a loan application is approved or rejected.

The architecture: Cloud-first ingestion

Our architecture begins by ingesting historical loan data. To handle this data reliably, we use Google Cloud Pub/Sub. The application publishes the loan data to a specific topic, which triggers a subscription to push that data directly into BigQuery.

This setup ensures that our training data is decoupled from our application logic, allowing for scalable data accumulation.
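As a rough illustration of the publishing side, the sketch below serializes one loan record to JSON bytes and hands it to a publisher. The topic path, the record fields, and the `publish_loan_record` helper are assumptions made for this example. The `publish(topic, data)` call shape mirrors the `google-cloud-pubsub` `PublisherClient`, which is replaced here by a small in-memory stand-in so the snippet runs without cloud credentials.

```python
import json
from typing import Any, Protocol


class Publisher(Protocol):
    """Anything with a Pub/Sub-style publish(topic, data) method."""

    def publish(self, topic: str, data: bytes) -> Any: ...


class InMemoryPublisher:
    """Stand-in for pubsub_v1.PublisherClient, used only for local runs."""

    def __init__(self) -> None:
        self.sent: list[tuple[str, bytes]] = []

    def publish(self, topic: str, data: bytes) -> int:
        self.sent.append((topic, data))
        return len(self.sent)  # a real client returns a future instead


def encode_loan_record(record: dict) -> bytes:
    """Serialize a loan record as UTF-8 JSON, the payload the message carries."""
    return json.dumps(record, sort_keys=True).encode("utf-8")


def publish_loan_record(publisher: Publisher, topic: str, record: dict) -> Any:
    """Publish one record to the given topic."""
    return publisher.publish(topic, encode_loan_record(record))


# Hypothetical topic path and record fields, for illustration only.
TOPIC = "projects/my-project/topics/loan-history"
publisher = InMemoryPublisher()
publish_loan_record(
    publisher,
    TOPIC,
    {"credit_score": 720, "annual_income": 85000, "loan_amount": 20000},
)
```

Passing the publisher in as an argument keeps the application code independent of the cloud client, which also makes the ingestion path easy to exercise in tests.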

Feature engineering with SQL

Once the data is in BigQuery, you can use SQL for initial preprocessing instead of complex Python scripts. The goal is to create a synthetic label called loan_approval_status that the model learns to predict.

A SQL query applies business logic to the raw data:

- Auto-approve: If the credit score and income meet the thresholds (for example, a score greater than 700), the status is set to 1.

- Reject: If the criteria aren't met, the status is set to 0.

This creates a clean, labeled dataset (loan_training_data_v1) ready for training.
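The labeling rule can be sketched as a SQL `CASE` expression. In the example below, SQLite (from the Python standard library) stands in for BigQuery so the query runs locally. The `raw_loans` table name, the column names, and the 50000 income cut-off are assumptions; only the 700 score threshold comes from the example above.

```python
import sqlite3

# Labeling rule as a SQL CASE expression. The 700 score cut-off comes from the
# example in the text; the 50000 income cut-off is an assumed value.
LABEL_SQL = """
SELECT
  credit_score,
  annual_income,
  loan_amount,
  CASE
    WHEN credit_score > 700 AND annual_income >= 50000 THEN 1
    ELSE 0
  END AS loan_approval_status
FROM raw_loans
ORDER BY credit_score DESC
"""

# SQLite stands in for BigQuery so the query can run without a cloud project.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE raw_loans ("
    "credit_score INTEGER, annual_income INTEGER, loan_amount INTEGER)"
)
conn.executemany(
    "INSERT INTO raw_loans VALUES (?, ?, ?)",
    [(720, 85000, 20000), (640, 90000, 15000), (710, 30000, 10000)],
)

labeled_rows = conn.execute(LABEL_SQL).fetchall()
for row in labeled_rows:
    print(row)
conn.close()
```

Only the first sample row satisfies both conditions, so it is the only one labeled 1.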

Vertex AI AutoML: The gatekeeper model

With the dataset ready, use the Vertex AI AutoML tabular training feature to build a classification model.

- Input: The BigQuery table we just created.

- Target column: loan_approval_status.

- Training: Vertex AI automatically tests various algorithms to find the best fit.

Once trained, deploy this model to a Vertex AI endpoint. This endpoint acts as the gatekeeper for our application.

The logic is as follows:

- If the model predicts Approve with a confidence score greater than 75%, the request proceeds to the next stage.

- If the prediction is Reject or has low confidence, the process stops, saving computational resources for downstream models.
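The gating rule above is small enough to express as a single function. This is a minimal sketch: the `GatekeeperResult` type and `should_proceed` name are hypothetical, and the confidence is assumed to be a value between 0 and 1.

```python
from dataclasses import dataclass

APPROVE = 1
CONFIDENCE_THRESHOLD = 0.75  # the 75% cut-off described above


@dataclass(frozen=True)
class GatekeeperResult:
    label: int         # 1 = approve, 0 = reject
    confidence: float  # model score for the predicted label, in [0, 1]


def should_proceed(
    result: GatekeeperResult, threshold: float = CONFIDENCE_THRESHOLD
) -> bool:
    """Continue to the next stage only for a confident approval."""
    return result.label == APPROVE and result.confidence > threshold
```

For example, `should_proceed(GatekeeperResult(1, 0.9))` is true, while an approval at exactly 0.75 or any rejection stops the workflow.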

Test the endpoint

Using the Google Cloud console, you can send a sample JSON payload:

    {
      "instances": [{
        "credit_score": 720,
        "annual_income": 85000,
        "loan_amount": 20000
      }]
    }

The result returns a prediction of 0 or 1 and a confidence score. This outcome determines if the application continues to the next stage of the workflow.
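The same payload can be built and the response interpreted in Python. The sketch below is hedged: the `classes` and `scores` response fields are an assumed shape for a tabular classification prediction, and the sample response is hand-written, not output from a real endpoint. Check the response your own endpoint returns before relying on it.

```python
import json


def build_request(credit_score: int, annual_income: int, loan_amount: int) -> str:
    """Build the JSON body shown above for a single applicant."""
    instance = {
        "credit_score": credit_score,
        "annual_income": annual_income,
        "loan_amount": loan_amount,
    }
    return json.dumps({"instances": [instance]})


def parse_prediction(response_body: str) -> tuple[int, float]:
    """Return (label, confidence) from an ASSUMED response shape.

    Assumes each prediction carries parallel "classes" and "scores" lists.
    """
    prediction = json.loads(response_body)["predictions"][0]
    scores = prediction["scores"]
    best = max(range(len(scores)), key=scores.__getitem__)
    return int(prediction["classes"][best]), float(scores[best])


# Hand-written sample response, not real endpoint output.
sample_response = '{"predictions": [{"classes": ["0", "1"], "scores": [0.12, 0.88]}]}'
label, confidence = parse_prediction(sample_response)
```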

Moving to the edge with OpenShift AI and predictive modeling

Many industries require sensitive financial logic, such as calculating specific interest rates, to run closer to the data or on specific on-premise infrastructure.

We use Red Hat OpenShift AI to handle the second stage of the pipeline: a regression model that predicts the exact interest rate for approved loans. This approach helps ensure that sensitive data remains on premise by bringing the model to the data.

The environment: OpenShift AI

For this demo, we run OpenShift AI on a Google Cloud cluster to simulate a hybrid setup. Our development work takes place in Jupyter notebooks provided by OpenShift AI workbenches.

Workflow: From cloud to container

Building a predictive model on premise requires a pipeline that connects cloud storage with your containerized environment. This process involves the following steps:

- Data sync: We pull the approved loan data from a Google Cloud Storage (GCS) bucket into the OpenShift environment. You can also use Apache Spark or pull data directly from the application that publishes the historical data.

- Feature engineering: Use pandas and scikit-learn in the notebook to refine the features for interest rate prediction.

- Training: Train a TensorFlow and Keras regression model. Unlike the classification model described earlier, this model outputs a continuous value for the interest rate.
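The article does not spell out which feature transformations were applied. One common refinement before training a neural network regressor is to standardize numeric inputs, which scikit-learn's `StandardScaler` does. The sketch below reproduces that idea with the standard library only so it runs anywhere; the sample rows and function names are made up for illustration.

```python
from statistics import fmean, pstdev


def fit_scaler(rows):
    """Compute per-column mean and population standard deviation."""
    columns = list(zip(*rows))
    means = [fmean(col) for col in columns]
    # Fall back to 1.0 for constant columns to avoid dividing by zero.
    stds = [pstdev(col) or 1.0 for col in columns]
    return means, stds


def transform(rows, means, stds):
    """Scale every value to zero mean and unit variance per column."""
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]


# Made-up rows: [credit_score, annual_income, loan_amount]
rows = [
    [700, 60000, 10000],
    [720, 80000, 20000],
    [740, 100000, 30000],
]
means, stds = fit_scaler(rows)
scaled = transform(rows, means, stds)
```

The fitted means and standard deviations need to be saved and reused at inference time so that requests are scaled the same way as the training data.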

Standardize with ONNX

To ensure the model is portable and optimized for inference, convert the TensorFlow model into the ONNX (Open Neural Network Exchange) format. This lets you serve the model with various runtimes without being locked into specific framework dependencies.

Deploy with OpenVINO Model Server

OpenShift AI makes deployment simple. We use the OpenVINO Model Server runtime.

- Upload the ONNX model to an S3-compatible bucket (or back to GCS).

- In the OpenShift AI dashboard, create a model server.

- Deploy the model to expose an inference endpoint using gRPC or REST.

You can then test the endpoint with curl (prefix the command with ! to run it from a notebook cell):

    curl -X POST -H "Content-Type: application/json" \
      -d '{"inputs": [{"name": "input:0", "shape": [1, 5], "datatype": "FP32", "data": [720, 95000, 35000, 60, 0.04]}]}' \
      https://interest-rate-loan-rate-model.apps.<domain>/v2/models/interest-rate/infer

The endpoint responds with:

    {
      "model_name": "interest-rate",
      "model_version": "5",
      "outputs": [{
        "name": "Identity:0",
        "shape": [1, 1],
        "datatype": "FP32",
        "data": [11.088425636291504]
      }]
    }

As you can see, the request returns a predicted interest rate of 11.08% for these inputs. We now have two endpoints:

- Vertex AI: This endpoint decides if a loan is approved.

- OpenShift AI: This endpoint determines the interest rate for the approved loans.

Next, we combine these two endpoints behind a single application that routes traffic between the models.
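The request and response exchanged with the OpenVINO endpoint earlier in this section can also be handled from Python. The sketch below builds the same inference body and extracts the rate from the response shown in the article. The helper names are hypothetical, and the HTTP call itself is left out so the snippet runs offline.

```python
import json


def build_infer_request(features: list[float], input_name: str = "input:0") -> dict:
    """Build the inference body for a single row of features."""
    return {
        "inputs": [
            {
                "name": input_name,
                "shape": [1, len(features)],
                "datatype": "FP32",
                "data": features,
            }
        ]
    }


def extract_rate(response: dict) -> float:
    """Read the single predicted value from the first output tensor."""
    return float(response["outputs"][0]["data"][0])


request_body = build_infer_request([720, 95000, 35000, 60, 0.04])

# Response copied from the example in this section.
sample_response = json.loads(
    '{"model_name": "interest-rate", "model_version": "5", '
    '"outputs": [{"name": "Identity:0", "shape": [1, 1], '
    '"datatype": "FP32", "data": [11.088425636291504]}]}'
)
rate = extract_rate(sample_response)
print(json.dumps(request_body))
```

Deriving the shape from the feature list keeps the declared tensor shape and the data length in sync.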

Orchestration, LLMs, and the intelligent user experience

We have two deterministic models running in a hybrid environment. However, displaying a raw number like 11.08% is not a helpful experience for the user. We need to orchestrate these models to present the data in a clear, useful way.

A large language model (LLM) running on Red Hat OpenShift acts as a financial advisor. A Python backend and a React frontend connect the system components.

Infrastructure spotlight: GPU provisioning

To run high-performance workloads and a chatbot, you need GPUs. OpenShift simplifies this using MachineSet resources.

Define a MachineSet for an NVIDIA A100 node and verify that the NVIDIA GPU Operator is running to manage drivers and toolkit injection. Then, apply taints and tolerations to ensure only specific AI workloads land on these expensive GPU nodes. Adding GPU nodes in OpenShift is a straightforward process.
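To make the taint and toleration pairing concrete, the sketch below builds the pod spec fields a GPU workload would need, expressed as a Python dictionary that can be dumped to JSON or YAML. The taint key, value, and effect are an assumed convention, not values taken from this project, and the fragment omits required fields such as the container image.

```python
import json

# Assumed taint applied to the GPU nodes. The key, value, and effect are a
# common convention, not values taken from the article.
GPU_TAINT = {"key": "nvidia.com/gpu", "value": "true", "effect": "NoSchedule"}


def gpu_pod_spec_fragment(gpu_count: int = 1) -> dict:
    """Pod spec fields that tolerate the GPU taint and request GPUs."""
    return {
        "tolerations": [
            {
                "key": GPU_TAINT["key"],
                "operator": "Equal",
                "value": GPU_TAINT["value"],
                "effect": GPU_TAINT["effect"],
            }
        ],
        "containers": [
            {
                "name": "inference",  # hypothetical container name
                "resources": {"limits": {"nvidia.com/gpu": gpu_count}},
            }
        ],
    }


fragment = gpu_pod_spec_fragment()
print(json.dumps(fragment, indent=2))
```

The taint keeps ordinary pods off the GPU nodes, and the toleration plus the GPU resource limit lets the AI workload schedule there.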

Hosting Llama 3 on OpenShift

With the cluster GPU-enabled, we chose the Llama 3 8B Instruct model for this project. The model strikes a balance between performance and resource usage, fitting comfortably on a single NVIDIA A100 GPU.

To serve this LLM, we use Red Hat AI Inference Server, a high-throughput and memory-efficient serving engine. We deploy vLLM as a custom serving runtime in OpenShift AI. This setup exposes an API compatible with standard chat interfaces.
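A request to such a chat-compatible API typically looks like the sketch below. It assumes the OpenAI-style chat completions body that vLLM can serve; the served model name, token limit, and temperature are hypothetical values, so substitute whatever your deployment uses.

```python
import json


def build_chat_request(
    prompt: str,
    model: str = "llama-3-8b-instruct",  # hypothetical served model name
    max_tokens: int = 256,
) -> dict:
    """Build a chat completions request body with a single user message."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "temperature": 0.2,  # assumed value, chosen for steadier answers
    }


body = build_chat_request("Explain what a fixed interest rate is.")
print(json.dumps(body, indent=2))
```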

The backend: The orchestrator

A Python backend serves as the core of this application, routing requests based on business logic. It executes the following workflow:

- Receive request: The user submits data from the frontend.

- Call Vertex AI: The backend sends a request to the Google Cloud endpoint.

  - If the model rejects the request, the process stops.

  - If the model approves the request with more than 75% confidence, the backend proceeds.

- Call OpenShift AI: The backend calls the OpenVINO endpoint to determine the interest rate.

- Prompt engineering: The backend uses the approval status and interest rate to construct a prompt for the LLM, for example: "You are a helpful financial advisor. The customer's loan was approved at a rate of 10.5%. Explain this to them and offer financial advice."

- Call OpenShift AI: The backend sends the prompt to the vLLM endpoint, which returns a natural language response.
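The workflow above can be sketched as one orchestration function. This is a simplified illustration, not the project's actual backend: the three remote calls are passed in as plain callables (stubbed here) so the routing logic can run without any endpoint, and all names are hypothetical.

```python
from typing import Callable

CONFIDENCE_THRESHOLD = 0.75  # the 75% cut-off from the workflow


def process_application(
    application: dict,
    classify: Callable[[dict], tuple[int, float]],
    predict_rate: Callable[[dict], float],
    ask_llm: Callable[[str], str],
) -> dict:
    """Route one application through the gatekeeper, rate model, and LLM."""
    label, confidence = classify(application)
    if label != 1 or confidence <= CONFIDENCE_THRESHOLD:
        # Rejected or low confidence: stop before calling downstream models.
        return {"approved": False, "confidence": confidence,
                "rate": None, "message": None}

    rate = predict_rate(application)
    prompt = (
        "You are a helpful financial advisor. "
        f"The customer's loan was approved at a rate of {rate:.1f}%. "
        "Explain this to them and offer financial advice."
    )
    return {"approved": True, "confidence": confidence,
            "rate": rate, "message": ask_llm(prompt)}


# Stub callables stand in for the three remote endpoints.
result = process_application(
    {"credit_score": 720, "annual_income": 85000, "loan_amount": 20000},
    classify=lambda app: (1, 0.91),
    predict_rate=lambda app: 10.5,
    ask_llm=lambda prompt: f"[LLM reply to: {prompt}]",
)
```

Injecting the endpoint calls keeps the business logic testable and makes it straightforward to swap a model's hosting location without touching the routing code.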

The frontend: Context-aware chat

The React frontend offers two modes. In the Prediction form, users input their credit score and income. The Loan assistant chat provides a context-aware interface for further interaction.

If an application is rejected, the user can ask the chatbot why. Because the backend passes the prediction context to the LLM, the chatbot can explain, for example, that a credit score of 500 is below the threshold. It can then suggest improvements, like lowering the requested amount or consolidating debt.
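One way to pass prediction context to the LLM is to prepend it as a system message ahead of the user's question. The sketch below assumes a chat-style message list with `role` and `content` fields; the field names in the `prediction` dictionary and the wording of the context are illustrative, not taken from the project.

```python
def build_messages(prediction: dict, question: str) -> list[dict]:
    """Prepend the prediction context as a system message."""
    status = "approved" if prediction["approved"] else "rejected"
    context = (
        "You are a helpful financial advisor. "
        f"The customer's loan application was {status}. "
        f"Submitted details: credit score {prediction['credit_score']}, "
        f"annual income {prediction['annual_income']}, "
        f"requested amount {prediction['loan_amount']}."
    )
    return [
        {"role": "system", "content": context},
        {"role": "user", "content": question},
    ]


# Hypothetical rejected application, for illustration only.
messages = build_messages(
    {"approved": False, "credit_score": 500,
     "annual_income": 40000, "loan_amount": 25000},
    "Why was my loan denied?",
)
```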

Conclusion

This project demonstrates that modern AI solutions rarely rely on just one model. Success comes from a combination of hybrid infrastructure and hybrid models, where Google Cloud and Red Hat OpenShift work together. This foundation allows traditional predictive AI, such as regression and classification, to combine with generative AI. By orchestrating these components, we create applications that are accurate, engaging, and helpful.

Last updated: March 20, 2026