When we introduced Red Hat OpenStack Services on OpenShift in early 2025, our consultants and solutions architects noticed a recurring theme: organizations wanted to do more with less. This led to releasing support for multiple OpenStack services on a single Red Hat OpenShift cluster, a project we internally called MultiRHOSO phase 1.

But we didn't stop there. To provide even greater granularity and efficiency, we are now focusing on deploying OpenStack Services on OpenShift using hosted control planes (HCPs).

What are hosted control planes?

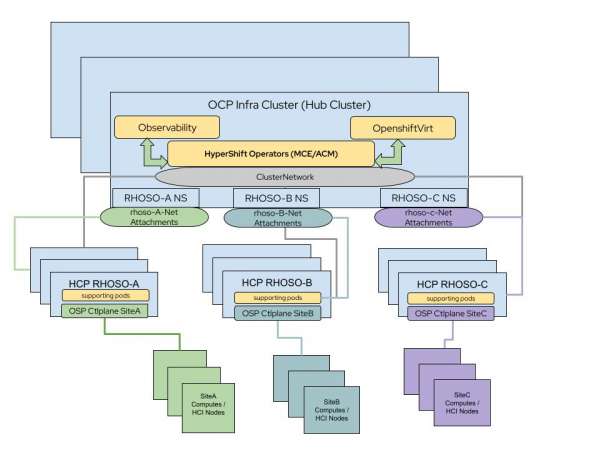

Hosted control planes (HCPs) are an OpenShift deployment model in which a hub cluster hosts the control planes of multiple hosted clusters, with worker nodes supplied through a variety of infrastructure providers. Each hosted cluster is an independent OpenShift cluster, but all of the control planes share the hub cluster's infrastructure, each running in its own namespace on the hub. The hosted clusters' data plane can be deployed in several ways, such as on virtual machines (through OpenShift Virtualization) or on bare metal nodes.

Using hosted control planes with Red Hat OpenStack Services on OpenShift offers three distinct advantages:

- Version isolation: Because each hosted cluster has isolated custom resource definitions (CRDs) and operators, you can deploy different versions or feature releases of OpenStack services without version conflicts.

- Granular security: You can separate the cluster administrator persona across environments, reducing the attack surface and enabling finer-grained administration across versions.

- Reduced hardware footprint: By using the Red Hat OpenShift Virtualization provider, you can maintain a consistent hardware footprint on the hub cluster while deploying multiple isolated OpenStack environments based on available resources.

Hub cluster prerequisites and installation

To set up multiple OpenStack clusters with hosted control planes, you must first install the hub cluster.

Hub cluster requirements:

- At least 3 nodes (master/worker compact cluster)

- A minimum of 16 CPU cores and 32 GB of RAM per node, plus the resources required by each hosted cluster

- A dedicated NIC or bond for network attachments

- An NVMe or SSD-backed StorageClass for hosted cluster etcd pods

- A wildcard-enabled ingress controller or a dedicated network per hosted cluster to expose APIs
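As a rough illustration of the "per node, plus each hosted cluster" sizing rule above, the following sketch adds the hub baseline to the resources requested by the hosted cluster worker VMs. The function name and the example figures (two hosted clusters with three 16-core, 32 GiB workers each) are assumptions for illustration only. The estimate ignores CPU overcommit, storage, and the overhead of the hosted control plane pods, so treat it as a starting point rather than a sizing guide.

```shell
#!/usr/bin/env bash
# Rough hub capacity estimate. All inputs are illustrative assumptions.

# hub_requirement HUB_NODES PER_NODE_BASE HOSTED_CLUSTERS REPLICAS PER_VM
# Prints the total amount of one resource (cores or GiB of memory) needed
# if every hosted cluster worker VM is fully backed by hub resources.
hub_requirement() {
  local hub_nodes=$1 per_node_base=$2 hosted_clusters=$3 replicas=$4 per_vm=$5
  echo $(( hub_nodes * per_node_base + hosted_clusters * replicas * per_vm ))
}

# Example: 3 hub nodes, 2 hosted clusters, 3 worker VMs per hosted cluster.
echo "CPU cores needed: $(hub_requirement 3 16 2 3 16)"
echo "Memory needed (GiB): $(hub_requirement 3 32 2 3 32)"
```

Comparing these totals with the allocatable resources reported by the hub nodes gives a quick sense of how many hosted clusters the hub can realistically carry.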

Step 1: Install required operators

Install the following operators on the hub cluster:

- Red Hat OpenShift Virtualization

- Storage provider for etcd (e.g., LVM Storage)

- Storage provider for VMs (e.g., Red Hat OpenShift Data Foundation)

- MetalLB and NMState

- Multicluster engine for Kubernetes or Red Hat Advanced Cluster Management for Kubernetes
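If you prefer the CLI over the web console, operators from the list above can be installed through Operator Lifecycle Manager Subscriptions. The sketch below only prints a Subscription manifest so that you can review it before applying it. The helper name is hypothetical, and the package name, namespace, and channel shown (metallb-operator, metallb-system, stable) are assumptions; confirm them for your OpenShift version with `oc get packagemanifests -n openshift-marketplace`. The target namespace and an OperatorGroup must already exist.

```shell
#!/usr/bin/env bash
# subscription_manifest PACKAGE NAMESPACE CHANNEL
# Prints an OLM Subscription for one operator from the redhat-operators
# catalog. Nothing is applied to the cluster.
subscription_manifest() {
  local package=$1 namespace=$2 channel=$3
  cat <<EOF
apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: ${package}
  namespace: ${namespace}
spec:
  name: ${package}
  channel: ${channel}
  source: redhat-operators
  sourceNamespace: openshift-marketplace
  installPlanApproval: Automatic
EOF
}

# Example (assumed values): print the Subscription for the MetalLB operator.
subscription_manifest metallb-operator metallb-system stable
```

To create the Subscription on the hub cluster, pipe the output to `oc apply -f -` and repeat the call for each operator you need.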

Step 2: Configure the network

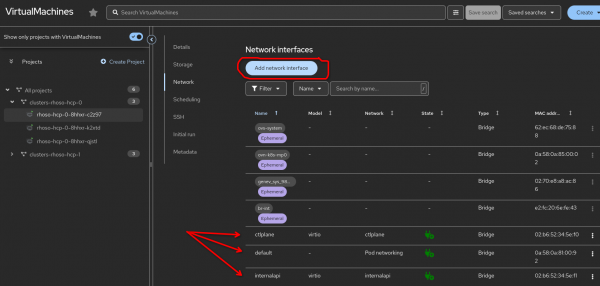

Create a NodeNetworkConfigurationPolicy (NNCP) for each node to define the VLANs and bridges for the isolated networks. In addition, network attachment definitions are required in the hosted cluster namespace on the hub cluster. They map the NNCP bridges to the networks that OpenStack Services on OpenShift needs for network isolation. You can create them either during or after HCP cluster creation (Figure 2).
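To make the relationship between the two objects concrete, here is a minimal sketch that prints one NNCP (a VLAN interface plus a Linux bridge on a single node) and one matching NetworkAttachmentDefinition. Everything specific in it is an assumption for illustration: the helper names, the node name worker-0, the NIC enp6s0, VLAN 20, the bridge br-internalapi, and the namespace clusters-rhoso-hcp-0 (which assumes the hosted cluster is named rhoso-hcp-0 and created in the default clusters namespace). The CNI type "bridge" is also an assumption; check the OpenShift Virtualization documentation for the type your version expects.

```shell
#!/usr/bin/env bash
# nncp_manifest NODE NIC VLAN_ID BRIDGE
# Prints an NNCP that creates a VLAN on NIC and a Linux bridge on top of it.
nncp_manifest() {
  local node=$1 nic=$2 vlan=$3 bridge=$4
  cat <<EOF
apiVersion: nmstate.io/v1
kind: NodeNetworkConfigurationPolicy
metadata:
  name: ${node}-${bridge}
spec:
  nodeSelector:
    kubernetes.io/hostname: ${node}
  desiredState:
    interfaces:
      - name: ${nic}.${vlan}
        type: vlan
        state: up
        vlan:
          base-iface: ${nic}
          id: ${vlan}
      - name: ${bridge}
        type: linux-bridge
        state: up
        bridge:
          options:
            stp:
              enabled: false
          port:
            - name: ${nic}.${vlan}
EOF
}

# nad_manifest NAME NAMESPACE BRIDGE
# Prints a NetworkAttachmentDefinition that attaches VMs to BRIDGE.
nad_manifest() {
  local name=$1 namespace=$2 bridge=$3
  cat <<EOF
apiVersion: k8s.cni.cncf.io/v1
kind: NetworkAttachmentDefinition
metadata:
  name: ${name}
  namespace: ${namespace}
spec:
  config: |
    {
      "cniVersion": "0.3.1",
      "name": "${name}",
      "type": "bridge",
      "bridge": "${bridge}"
    }
EOF
}

# Example with assumed names; review the output before applying it.
nncp_manifest worker-0 enp6s0 20 br-internalapi
echo "---"
nad_manifest internalapi clusters-rhoso-hcp-0 br-internalapi
```

Piping the output to `oc apply -f -` on the hub cluster would create both objects. Repeat the NNCP for each hub node and the pair for each isolated network. If you attach the networks at creation time, `hcp create cluster kubevirt --help` lists the options available for referencing additional networks in your version of the CLI.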

Step 3: Deploy the hosted cluster

Use the hcp command-line interface (CLI) to create the hosted cluster with the kubevirt provider, as in the following example.

    hcp create cluster kubevirt \
      --name rhoso-hcp-0 \
      --node-pool-replicas 3 \
      --pull-secret /root/.docker/config.json \
      --additional-trust-bundle /root/hcpscripts/bastion-ocp4.crt \
      --memory 32Gi \
      --cores 16 \
      --etcd-storage-class=lvms-vg1 \
      --root-volume-storage-class=ocs-external-storagecluster-ceph-rbd \
      --root-volume-size 85 \
      --release-image=quay.io/openshift-release-dev/ocp-release:4.18.34-x86_64

Step 4: Configure OpenStack services on the hosted cluster

Once the hosted cluster is live, gain access using the generated kubeconfig and deploy the supporting operators, including the OpenStack operator.
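One way to script the "wait until live, then fetch the kubeconfig" step is sketched below. The helper names and the 30-minute timeout are assumptions, and the default namespace clusters assumes the hosted cluster was created without a custom namespace. With DRY_RUN=1 the helper only prints the commands it would run, which is how the example at the end invokes it.

```shell
#!/usr/bin/env bash
# run: execute a command, or only print it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# fetch_kubeconfig NAME [NAMESPACE]
# Waits for the HostedCluster to report the Available condition, then
# writes its kubeconfig to stdout. Output of the wait step goes to stderr
# so that stdout contains only the kubeconfig.
fetch_kubeconfig() {
  local name=$1 namespace=${2:-clusters}
  run oc wait --for=condition=Available "hostedcluster/${name}" \
    --namespace "${namespace}" --timeout=30m >&2 || return 1
  run hcp create kubeconfig --name "${name}" --namespace "${namespace}"
}

# Dry run: show the commands without contacting a cluster.
DRY_RUN=1 fetch_kubeconfig rhoso-hcp-0
```

Against a real hub cluster, you could run `fetch_kubeconfig rhoso-hcp-0 > rhoso-hcp-0.kubeconfig` and then verify access with `oc --kubeconfig rhoso-hcp-0.kubeconfig get nodes` before installing the operators.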

Note: Disable the openstack-baremetal operator if the Metal3 operator is not present in a hosted cluster created with the OpenShift Virtualization provider.

Final thoughts

By combining OpenStack Services on OpenShift with hosted control planes, your organization can run isolated OpenStack environments at different versions while making the most of its existing hardware. This deployment model helps IT operations teams do more with less through version isolation, granular security, and a reduced hardware footprint.

Ultimately, this architecture allows IT operations teams to manage multiple development tiers or hundreds of edge locations with a consistent, automated experience. For more details, including configuring pre-provisioned data plane nodes, refer to the KBA.