Data science teams have become increasingly comfortable fine-tuning large language models (LLMs) on their workstation or a single GPU box. Libraries like training_hub make it easy to run supervised fine-tuning (SFT), orthogonal subspace fine-tuning (OSFT), or LoRA-style fine-tuning with a few lines of Python.

The hard part isn’t getting a model to train once. It’s turning that one local experiment into a repeatable, observable, production-grade workflow your team can trust, scale, and govern. That’s where Red Hat OpenShift AI and its integration with Kubeflow Trainer, AI pipelines, and model lifecycle tooling come in.

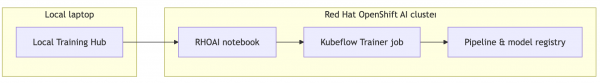

Figure 1: The 4-step process.

This post walks through a practical pathway:

- Start with local experiments using Training Hub.

- Move to OpenShift AI Interactive Notebooks for cluster-backed, collaborative work.

- Scale out with training jobs using Kubeflow Trainer for distributed fine-tuning.

- Operationalize with AI pipelines and Model Registry for production-grade workflows.

Figure 1 illustrates a high-level view of this process.

Throughout, you’ll see how you can keep your algorithmic code and configs the same while changing the execution environment under the hood.

Step 1: Local experiments with Training Hub

Training Hub is an algorithm-focused interface for common post-training techniques on LLMs, developed by the Red Hat AI Innovation Team. To learn more, refer to the Training Hub repository and its README.

It supports SFT, OSFT for continual learning, and LoRA / QLoRA (quantized) low-rank adaptation.

On your local machine, a simple fine-tuning run looks like this:

    from training_hub import sft

    result = sft(
        model_path="Qwen/Qwen2.5-1.5B-Instruct",
        data_path="/path/to/data.jsonl",
        ckpt_output_dir="/path/to/checkpoints",
        num_epochs=3,
        effective_batch_size=8,
        learning_rate=1e-5,
        max_seq_len=256,
        max_tokens_per_gpu=1024,
    )

With a simple pip install training-hub and extras like [cuda] or [lora] as needed, you can iterate quickly on small datasets and models. This allows you to try different algorithms (SFT vs OSFT vs LoRA) with minimal code changes, tune hyperparameters, inspect intermediate checkpoints, and learn what works for your problem.
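One way to keep algorithm swaps cheap is to hold the shared arguments in a single config and select the entry point by name. The sketch below is illustrative only: `BASE_CONFIG`, `build_kwargs`, and `run` are hypothetical helpers, and it assumes the `sft`, `osft`, and `lora_sft` entry points accept the shared arguments from the call above. Algorithm-specific arguments would go in `overrides`; check the Training Hub README for the exact parameters each one requires.

```python
from typing import Any, Callable, Dict, Optional

# Shared arguments, copied from the sft() call shown earlier.
BASE_CONFIG: Dict[str, Any] = {
    "model_path": "Qwen/Qwen2.5-1.5B-Instruct",
    "data_path": "/path/to/data.jsonl",
    "ckpt_output_dir": "/path/to/checkpoints",
    "num_epochs": 3,
    "effective_batch_size": 8,
    "learning_rate": 1e-5,
    "max_seq_len": 256,
    "max_tokens_per_gpu": 1024,
}

# Entry-point names mentioned in this post.
ALGORITHMS = ("sft", "osft", "lora_sft")


def build_kwargs(overrides: Optional[Dict[str, Any]] = None) -> Dict[str, Any]:
    """Return a copy of BASE_CONFIG with per-run overrides applied."""
    return {**BASE_CONFIG, **(overrides or {})}


def run(algorithm: str, overrides: Optional[Dict[str, Any]] = None) -> Any:
    """Look up the entry point by name and call it (requires training_hub)."""
    if algorithm not in ALGORITHMS:
        raise ValueError(f"unknown algorithm: {algorithm!r}")
    # Imported lazily so the helpers above can be used without the library.
    import training_hub

    train_fn: Callable[..., Any] = getattr(training_hub, algorithm)
    return train_fn(**build_kwargs(overrides))
```

With this in place, trying a lower learning rate becomes `run("sft", {"learning_rate": 5e-6})`, and the base config stays untouched for the next experiment.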

However, staying local has clear limits. Resource ceilings mean a single GPU or workstation can quickly run out of VRAM or storage as models and datasets grow. The manual process of tracking which dataset, config, and commit produced which checkpoint is often ad hoc. Furthermore, collaboration and reproducibility suffer because sharing results as “here’s a notebook and some files in my home directory” simply doesn’t scale.
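Even before moving to a platform, the tracking problem can be made less ad hoc by writing a small manifest next to each checkpoint directory. The following is a minimal sketch using only the standard library; the file name `run_manifest.json` and the helper names are assumptions for illustration, not part of Training Hub.

```python
import hashlib
import json
import subprocess
from pathlib import Path
from typing import Any, Dict


def sha256_of(path: Path) -> str:
    """Hash the dataset file in 1 MiB chunks so large files are not loaded whole."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def current_commit() -> str:
    """Return the current git commit, or 'unknown' outside a repository."""
    try:
        out = subprocess.run(
            ["git", "rev-parse", "HEAD"],
            capture_output=True, text=True, check=True,
        )
        return out.stdout.strip()
    except (OSError, subprocess.CalledProcessError):
        return "unknown"


def write_manifest(data_path: str, ckpt_dir: str, config: Dict[str, Any]) -> Path:
    """Record which dataset, config, and commit produced this checkpoint directory."""
    manifest = {
        "dataset_sha256": sha256_of(Path(data_path)),
        "git_commit": current_commit(),
        "config": config,
    }
    target_dir = Path(ckpt_dir)
    target_dir.mkdir(parents=True, exist_ok=True)
    out_path = target_dir / "run_manifest.json"
    out_path.write_text(json.dumps(manifest, indent=2, sort_keys=True))
    return out_path
```

Calling `write_manifest(...)` right before the training call gives every checkpoint directory a record you can diff later. It does not replace the lineage tracking that pipelines provide, but it makes the gap visible.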

The next step is to keep the same Training Hub code, but move it into a platform designed for shared, production-grade AI workloads.

Step 2: Bring your notebook to OpenShift AI interactive notebooks

OpenShift AI provides workbench (notebook) environments that feel familiar to data scientists but run on top of Kubernetes. The key idea is that your Training Hub notebook from local development can often run unchanged in a workbench environment.

Training runs directly inside a single workbench pod (your notebook environment), giving you fast iteration and immediate feedback just like local development. You can also easily debug by inspecting variables, logs, and artifacts in one place.

Why move your local notebook into OpenShift AI?

OpenShift AI provides Red Hat–curated images with PyTorch, CUDA, popular ML libraries, the training_hub library, and related dependencies. These images receive regular security updates, CVE fixes, and compatibility testing.

Security and compliance are built-in with images built and scanned following enterprise standards, while RBAC and network policies help keep data and workloads isolated. You also benefit from Kubernetes-native isolation and resource management, where each workbench is a pod with configured CPU, memory, and GPU limits. This grants access to GPUs you may not have available locally, and quotas ensure one user’s experiment doesn’t starve the cluster.

Conceptually, you will move your Training Hub notebook into an OpenShift AI workbench and use the same sft/osft/lora_sft calls, now backed by cluster GPUs instead of your laptop.

This is still single-node fine-tuning, making it ideal for prototyping, learning, quick POCs, and smaller models and datasets that fit comfortably on a single node.

When the model size, dataset volume, or team size outgrow that single notebook pod, you will reach for training jobs.
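A rough way to judge whether a model still fits on one GPU is the common rule of thumb for full fine-tuning with Adam in mixed precision: about 16 bytes of state per parameter (2 for the half-precision weights, 2 for the gradients, and 12 for the fp32 master weights and two optimizer moments). The sketch below applies that arithmetic. It ignores activations and framework overhead, treats sharding as perfectly even, and uses nominal parameter counts, so read the results as lower bounds rather than measurements.

```python
# Rule-of-thumb bytes per parameter for mixed-precision Adam:
# 2 (half-precision weights) + 2 (gradients) + 12 (fp32 master weights + two moments).
BYTES_PER_PARAM = 2 + 2 + 12


def training_state_gb(num_params: float, num_gpus: int = 1) -> float:
    """Estimate per-GPU memory (decimal GB) for weights, gradients, and optimizer state.

    Assumes the state is sharded evenly across num_gpus, which is an idealization.
    """
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    return num_params * BYTES_PER_PARAM / num_gpus / 1e9


print(training_state_gb(1.5e9))             # 24.0  (nominal 1.5B-parameter model, one GPU)
print(training_state_gb(7e9))               # 112.0 (nominal 7B-parameter model, one GPU)
print(training_state_gb(7e9, num_gpus=8))   # 14.0  (same model, state sharded over 8 GPUs)
```

By this estimate, a nominal 1.5B-parameter model is already tight on a 24 GB card once activations are added, while a 7B model needs its training state spread over several GPUs. Parameter-efficient methods such as LoRA change this picture because far fewer parameters carry gradients and optimizer state.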

Learn more from the fine-tuning on OpenShift AI guided examples.

Step 3: Scale with training jobs using Kubeflow Trainer

OpenShift AI offers cluster-scale capabilities for your Training Hub workflows through its training jobs mode, specifically distributed fine-tuning with Kubeflow Trainer. Instead of training inside the notebook pod, you will submit a Training Job from your workbench.

Then Kubeflow Trainer creates dedicated training pods across the cluster, while the notebook becomes a lightweight control plane to submit jobs, monitor them, and analyze outputs.

The main benefit of Kubeflow Trainer is faster training through parallelism. It supports multi-node and multi-GPU setups, with data-parallel and sharded strategies (e.g., FSDP). This allows you to scale to much larger models and datasets, so you’re no longer bound by a single workbench node’s resources.

Additionally, it offers built-in fault tolerance and checkpointing. If a node fails, the job can resume from a checkpoint rather than starting over. Integration with Kueue provides centralized queueing and prioritization of jobs, ensuring fair sharing of GPU resources across teams.
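Resuming after a failure comes down to finding the newest checkpoint before training starts. The sketch below shows that lookup under an assumed directory layout of `step_<N>` subfolders; this naming scheme and the `latest_checkpoint` helper are hypothetical, so adapt the pattern to whatever your training code actually writes.

```python
import re
from pathlib import Path
from typing import List, Optional, Tuple

# Hypothetical naming scheme: one subdirectory per saved step, e.g. step_500.
STEP_DIR = re.compile(r"^step_(\d+)$")


def latest_checkpoint(ckpt_root: str) -> Optional[Path]:
    """Return the checkpoint directory with the highest step number, if any."""
    root = Path(ckpt_root)
    if not root.is_dir():
        return None
    candidates: List[Tuple[int, Path]] = []
    for child in root.iterdir():
        match = STEP_DIR.match(child.name)
        if match and child.is_dir():
            candidates.append((int(match.group(1)), child))
    if not candidates:
        return None
    # Compare numerically so step_100 sorts after step_20.
    return max(candidates, key=lambda item: item[0])[1]
```

A training script can call this at startup and resume from the returned path when it is not `None`, which makes a rescheduled pod pick up where the failed one left off, provided the checkpoints live on storage that survives the pod.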

Decoupling runtimes from experiments

A major operational advantage of OpenShift AI is the separation of concerns.

Platform engineers define ClusterTrainingRuntime objects, which include base images with the right CUDA, drivers, and libraries, GPU topology and resource configuration, and any organization-specific tooling (e.g., logging agents, security hooks, etc.).

Data scientists simply choose a runtime when defining a training job, eliminating the need to build custom images for every team and reducing the risk of “works on my laptop but not in prod” dependency drift.

Your Training Hub code, including calls like sft(...) and osft(...), can remain essentially the same. What changes is that a Kubeflow Trainer job definition now specifies the number of replicas, the GPU count, and the runtime to use. This gives you the scale to train faster and the flexibility to choose among multiple runtimes.
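To make the split concrete, the sketch below builds a TrainJob manifest as a plain Python dictionary. The field names follow the upstream Kubeflow Trainer `v1alpha1` TrainJob API as I understand it, and the runtime name, job name, and command are placeholders, so verify all of them against the CRD and the runtimes installed on your cluster. Note that the job references a runtime by name and sets no image of its own.

```python
import json
from typing import Any, Dict, List


def train_job_manifest(
    name: str,
    runtime: str,
    num_nodes: int,
    gpus_per_node: int,
    command: List[str],
) -> Dict[str, Any]:
    """Build a TrainJob manifest; the image and libraries come from the runtime."""
    return {
        "apiVersion": "trainer.kubeflow.org/v1alpha1",  # assumed API version
        "kind": "TrainJob",
        "metadata": {"name": name},
        "spec": {
            # Chosen by the data scientist, defined by platform engineers.
            "runtimeRef": {"name": runtime},
            "trainer": {
                "numNodes": num_nodes,
                "command": command,
                "resourcesPerNode": {
                    "limits": {"nvidia.com/gpu": gpus_per_node},
                },
            },
        },
    }


manifest = train_job_manifest(
    name="sft-qwen-demo",             # hypothetical job name
    runtime="torch-distributed",      # hypothetical runtime name
    num_nodes=2,
    gpus_per_node=4,
    command=["python", "train.py"],   # hypothetical script wrapping the sft(...) call
)
print(json.dumps(manifest, indent=2))
```

Scaling from 2 nodes to 4, or switching runtimes, is then a change to the arguments here rather than to the training code.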

Because everything is Kubernetes-native, you also gain better GPU utilization via scheduling and bin-packing across nodes, multi-tenancy and quotas to ensure fair share and prevent noisy neighbors, and resilience where pods can be rescheduled on other nodes while checkpoints protect progress.

At this stage, you’ve gone from a “single notebook pod” to distributed, production-like training jobs, still using the same Training Hub algorithms and configs.

Check out the fine-tuning on OpenShift AI guided examples to learn more.

Step 4: Operationalize with pipelines and Model Registry

For true production-grade fine-tuning, you need more than individual jobs. You need automated, repeatable workflows with clear lineage and promotion paths. That’s where AI pipelines and Model Registry come in.

You can use AI pipelines (based on Kubeflow Pipelines) to orchestrate all the steps of your model customization workflow, including data preparation and validation, fine-tuning (SFT, OSFT, or LoRA via Training Hub), evaluation and metrics collection, and model registration into a registry.

Instead of manually triggering each step, you define a pipeline that downloads an appropriate dataset, runs a training job via Kubeflow Trainer, evaluates the resulting model against a test suite, and registers the model (with metrics and metadata) if it meets your criteria.
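The control flow of such a pipeline can be sketched in plain Python. This is not Kubeflow Pipelines SDK code: each callable stands in for a pipeline component, and the `accuracy` metric and `min_accuracy` threshold are hypothetical choices used only to illustrate the conditional registration step.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class PipelineResult:
    checkpoint: str
    metrics: Dict[str, float]
    registered: bool


def run_pipeline(
    fetch_data: Callable[[], str],
    train: Callable[[str], str],
    evaluate: Callable[[str], Dict[str, float]],
    register: Callable[[str, Dict[str, float]], None],
    min_accuracy: float,
) -> PipelineResult:
    """Chain the four steps; register only when the quality gate passes."""
    data_path = fetch_data()           # 1. download the dataset
    checkpoint = train(data_path)      # 2. run the training job
    metrics = evaluate(checkpoint)     # 3. score against the test suite
    passed = metrics.get("accuracy", 0.0) >= min_accuracy
    if passed:
        register(checkpoint, metrics)  # 4. register with metrics attached
    return PipelineResult(checkpoint, metrics, passed)
```

Because every step is passed in as a function, the same skeleton can be exercised with stubs in a unit test before any cluster resources are involved.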

Why pipelines matter for production

Pipelines matter for production because they ensure repeatability and automation, allowing for nightly retraining on fresh data and CI/CD-style checks when training code changes. They also provide traceability, so you can answer “Which dataset and code commit produced this model?” as pipeline runs capture parameters, artifacts, and logs for audit.

Furthermore, pipelines enable approval and quality gates, automatically blocking registration of models that don’t meet SLA thresholds and requiring human approval for promotion into staging or production environments. They also facilitate easy re-runs and what-if experiments, letting you rerun a pipeline with different hyperparameters or datasets using the same defined steps.

You can compose AI pipelines from reusable components and add steps for model compression, model serving, etc. For more information, read the article, Fine-tune AI pipelines in OpenShift AI 3.3.

The role of the Model Registry

A Model Registry gives you a central source of truth for fine-tuned models, providing versioning and metadata (e.g., performance metrics, datasets, training configs, etc.) and a clear lifecycle from dev to staging to production. It also integrates with model serving on OpenShift AI, where serving components can pull the current production model from the registry, and rollbacks become as simple as promoting an earlier version.
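The versioning and rollback behavior described here can be illustrated with a toy in-memory registry. This is a conceptual stand-in, not the OpenShift AI Model Registry API: the class, its methods, and the rule that a displaced production model moves back to staging are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Dict, Optional

STAGES = ("dev", "staging", "production")


@dataclass
class ModelVersion:
    version: int
    uri: str
    metrics: Dict[str, float]
    stage: str = "dev"


class InMemoryRegistry:
    """Toy registry: numbered versions, metadata, and one production slot."""

    def __init__(self) -> None:
        self._versions: Dict[int, ModelVersion] = {}

    def register(self, uri: str, metrics: Dict[str, float]) -> ModelVersion:
        """Store a new version; numbering starts at 1 and new versions begin in dev."""
        entry = ModelVersion(len(self._versions) + 1, uri, metrics)
        self._versions[entry.version] = entry
        return entry

    def promote(self, version: int, stage: str) -> None:
        """Move a version to a stage; only one version may hold production."""
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage!r}")
        target = self._versions[version]
        if stage == "production":
            for other in self._versions.values():
                if other.stage == "production":
                    other.stage = "staging"  # demote the previous production model
        target.stage = stage

    def current_production(self) -> Optional[ModelVersion]:
        """Return the version a serving component would pull, if any."""
        for entry in self._versions.values():
            if entry.stage == "production":
                return entry
        return None
```

In this model, a rollback is just `promote(earlier_version, "production")`: the serving side keeps asking for the current production version and never needs to know that a rollback happened.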

Because pipelines and registries are integrated into the OpenShift AI/Kubernetes ecosystem, you also benefit from unified observability (logs, metrics, events) across all pipeline components, compliance and governance via RBAC, namespaces, and auditable artifacts, and consistent infrastructure whether jobs are simple or multi-step, single-node or distributed.

Refer to the fine-tuning on OpenShift AI guided examples on GitHub for a deep dive into pipeline components.

A journey from laptop to production

Throughout this journey, the core Training Hub code you wrote at the beginning remains recognizable. You’re not rewriting algorithms every time you move environments; you’re upgrading the execution context from laptop to workbench pod to distributed training jobs to automated AI pipelines.

A typical end-to-end journey:

- Explore locally with Training Hub: Install training_hub on your laptop or a workstation and use SFT/OSFT/LoRA APIs to explore what’s possible for your use case. Remember to keep your configuration files, notebooks, and code under version control.

- Move your notebook into an OpenShift AI workbench: Use a supported, GPU-enabled workbench training image that already includes the right ML stack. This lets you enjoy a familiar notebook experience, now backed by enterprise infrastructure.

- Scale out with Kubeflow Trainer training jobs: Extract the core training code from your notebook into a script or module, then define a Training Job using Kubeflow Trainer and the appropriate ClusterTrainingRuntime. From there, let Kubeflow Trainer, Kueue, and Kubernetes schedule and manage distributed training.

- Operationalize with pipelines and Model Registry: Define an AI pipeline that chains together data prep, fine-tuning, evaluation, and registration. Configure quality gates so only good models make it into the registry, and integrate serving components so production pulls its models from the registry.

One coherent path, many benefits

By combining Training Hub with OpenShift AI, you get a single, coherent path from local experimentation to production-grade fine-tuning. Data scientists and ML engineers can keep working in Python and notebooks, and the same Training Hub workflows follow them from laptop to workbench to distributed jobs and pipelines.

Platform and SRE teams can centralize runtimes, enforce security and governance, and drive high GPU utilization using Kubernetes-native patterns like Kubeflow Trainer and Kueue.

Finally, technical decision-makers gain traceability, repeatability, and controlled model promotion, with a platform that supports scaling, resilience, and cost control.

That’s how you go from “it worked on my laptop” to production-grade fine-tuning on Red Hat OpenShift AI, without losing the agility that made local experimentation so powerful in the first place. To try this path yourself, start with a local SFT or OSFT example from the Training Hub repository and then explore the fine-tuning guided examples for OpenShift AI.