Red Hat Process Automation Manager 7.9 brings bug fixes, performance improvements, and new features for process and case management, business and decision automation, and business optimization. This article introduces you to Process Automation Manager's out-of-the-box integration with Apache Kafka, revamped business automation management capabilities, and support for multiple decision requirements diagrams (DRDs). I will also guide you through setting up and using the new drools-metric module for analyzing business rules performance, and I'll briefly touch on Spring Boot integration in Process Automation Manager 7.9.

Note: Red Hat Process Automation Manager 7.9 updates related to decision management and business optimization also apply to Red Hat Decision Manager 7.9.

About Process Automation Manager

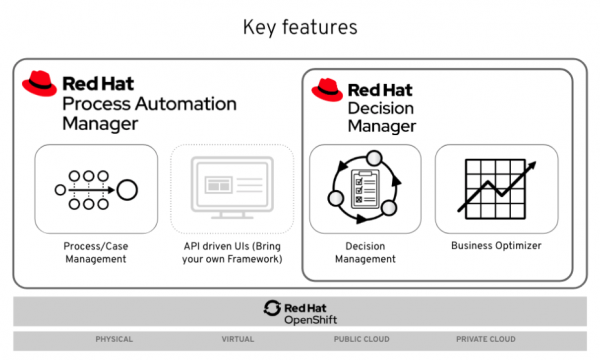

Red Hat Process Automation Manager (formerly Red Hat JBoss BPM Suite) and Red Hat Decision Manager (formerly Red Hat BRM Suite) are based on battle-tested open-source software like jBPM, Drools, OptaPlanner, and Kogito. Figure 1 is an overview of each product's capabilities.

Process Automation Manager and Decision Manager run in both on-premises and containerized environments, including enterprise orchestration platforms like Red Hat OpenShift and various cloud providers.

Integrating Process Automation Manager with Red Hat AMQ Streams (Kafka)

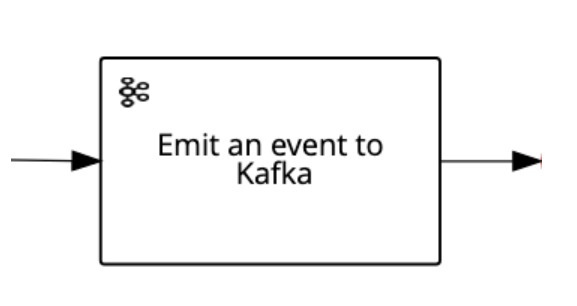

As part of our effort to improve the integration between Process Automation Manager and Red Hat AMQ Streams, we've introduced a new Kafka task to the 7.9 release. As shown in Figure 2, the WorkItemHandler task allows you to emit events to a Kafka topic running in a Kafka broker.

This new Kafka task ensures that messages are delivered to the Kafka topic before reaching the next node. The task uses the same retry/rethrow engine mechanism that handles errors in other tasks.

By default, the WorkItemHandler task comes with three String input data assignments: an event key, the topic name, and the value you want to send in the event. For output, the task returns a String result by default.
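To make the task's contract concrete, here is a minimal, stdlib-only Java sketch. It is not the jBPM handler and does not use the Kafka client library. The parameter names Key, Topic, Value, and Result, the InMemoryBroker class, and the result text are hypothetical stand-ins. The sketch shows the behavior described above: the task reads three String inputs, sends the event, and blocks until the broker acknowledges delivery before returning a String result, so the process cannot reach the next node first.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;

// Hypothetical sketch of the Kafka task's contract, not the jBPM handler:
// three String inputs in, one String output, no return until delivery is acknowledged.
class KafkaTaskSketch {
    static Map<String, Object> execute(InMemoryBroker broker, Map<String, Object> inputs) {
        String key = (String) inputs.get("Key");     // assumed parameter name
        String topic = (String) inputs.get("Topic"); // assumed parameter name
        String value = (String) inputs.get("Value"); // assumed parameter name
        if (topic == null || value == null) {
            throw new IllegalArgumentException("Topic and Value are required");
        }
        long offset;
        try {
            // Block until the broker acknowledges the record; only then
            // may the process continue to the next node.
            offset = broker.send(topic, key, value).get(5, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for delivery", e);
        } catch (ExecutionException | TimeoutException e) {
            // In the product, task errors go through the engine's retry/rethrow handling.
            throw new IllegalStateException("delivery not acknowledged", e);
        }
        Map<String, Object> results = new HashMap<>();
        results.put("Result", "delivered at offset " + offset); // assumed output name
        return results;
    }

    public static void main(String[] args) {
        InMemoryBroker broker = new InMemoryBroker();
        Map<String, Object> inputs = Map.of("Key", "order-42", "Topic", "orders", "Value", "{\"total\": 99}");
        System.out.println(execute(broker, inputs).get("Result"));
    }
}

// Hypothetical in-memory stand-in for a Kafka broker.
class InMemoryBroker {
    private final Map<String, List<String[]>> topics = new HashMap<>();

    // Appends the record and returns its offset as the "acknowledgment".
    synchronized CompletableFuture<Long> send(String topic, String key, String value) {
        List<String[]> log = topics.computeIfAbsent(topic, t -> new ArrayList<>());
        log.add(new String[] {key, value});
        return CompletableFuture.completedFuture((long) (log.size() - 1));
    }

    synchronized int size(String topic) {
        return topics.getOrDefault(topic, List.of()).size();
    }
}
```

The blocking get call is the design point: it trades some throughput for the guarantee that the event is stored before the process moves on.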

Before using WorkItemHandler, you need to set up your environment by enabling the task in Business Central and your project. You also need to add the task as a Maven dependency in your project. To learn more about using the WorkItemHandler task in Business Central, see Managing custom tasks in Business Central.

Analyzing rules performance with the drools-metric module

This release introduces a new drools-metric module for identifying performance bottlenecks in business rules. Once you've found the bottlenecks, you can adjust your rules implementation to improve performance.
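As a rough illustration of what per-node metrics involve, here is a hypothetical, stdlib-only Java sketch. It times each evaluation of a named node, accumulates an evaluation count and total elapsed time, and then reports only the nodes at or above a threshold. It is not the drools-metric implementation or API; the NodeMetrics class, the threshold parameter, and the node label AlphaNode(2) are invented for the example.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.Supplier;

// Hypothetical per-node metrics collector; not the drools-metric API.
class NodeMetrics {
    // Running totals for one node.
    static final class Stat {
        long evalCount;
        long elapsedNanos;
    }

    private final Map<String, Stat> stats = new LinkedHashMap<>();

    // Runs one evaluation of the named node, timing it and counting it.
    <T> T measure(String node, Supplier<T> evaluation) {
        long start = System.nanoTime();
        try {
            return evaluation.get();
        } finally {
            Stat s = stats.computeIfAbsent(node, key -> new Stat());
            s.evalCount++;
            s.elapsedNanos += System.nanoTime() - start;
        }
    }

    long evalCount(String node) {
        Stat s = stats.get(node);
        return s == null ? 0 : s.evalCount;
    }

    // Prints only the nodes whose total time reaches the threshold.
    void report(long thresholdMicros) {
        stats.forEach((node, s) -> {
            long micros = s.elapsedNanos / 1_000;
            if (micros >= thresholdMicros) {
                System.out.printf("%s: %d evaluations, %d us total%n", node, s.evalCount, micros);
            }
        });
    }

    public static void main(String[] args) {
        NodeMetrics metrics = new NodeMetrics();
        for (int i = 0; i < 1_000; i++) {
            final int n = i;
            metrics.measure("AlphaNode(2)", () -> n % 2 == 0);
            metrics.measure("AccumulateNode(8)", () -> Math.sqrt(n));
        }
        metrics.report(0);
    }
}
```

Reporting only nodes above a threshold keeps the output focused on likely bottlenecks instead of listing every node in the network.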

The Drools rules engine uses the Phreak algorithm to validate facts in working memory against business rules. Adjusting the business rules syntax in your code can significantly increase Phreak's performance.

In the next section, I will show you how to use the drools-metric module and the ReteDumper helper class to find inefficient rule syntax. We'll use an example from the MetricLogUtils GitHub repository for the demonstration.

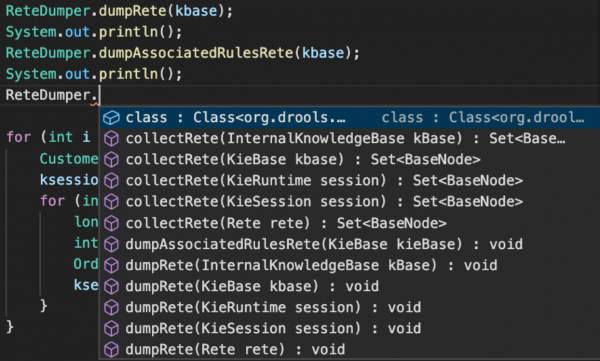

Rules analysis with ReteDumper

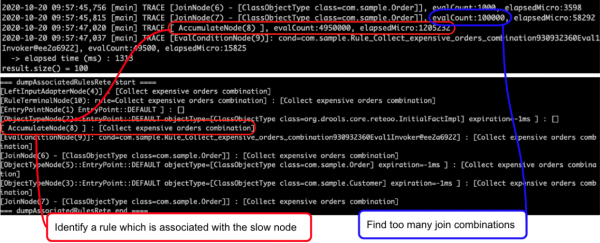

To start, you can use the ReteDumper helper class to output the time spent in each Phreak node and to see how many times each node is activated. The screenshot in Figure 3 shows an example of ReteDumper's output.

By logging the data in this output, you can find abnormal results like the ones shown in Figure 4.

The "Collect expensive orders combination" rule, highlighted in red, is associated with a slow Phreak node. The eval condition, highlighted in blue, causes too many join combinations during rule evaluation. Combined with performance tuning, this kind of analysis can improve a rule's execution time from 1,524 microseconds to as low as 112 microseconds. Let's run through an example use case from the MetricLogUtils GitHub repository so you can see these results for yourself.
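Why does an eval condition inflate the number of join combinations? A simplified, stdlib-only Java model makes the arithmetic visible. It is not how Phreak works internally: it only counts how many customer/order pairs must be tested when filtering happens after the full cross product (as with a trailing eval) versus when single-fact constraints shrink each side first (as with constraints written inside the patterns). The Customer and Order types, the 1000 threshold, and the sample data are made up for illustration, and the real engine applies further optimizations, so actual savings differ.

```java
import java.util.ArrayList;
import java.util.List;

// Simplified model, not Phreak: counts how many customer/order pairs a
// condition has to test under two ways of writing the same rule.
class JoinCountSketch {
    record Customer(int id, boolean vip) {}
    record Order(int customerId, int total) {}
    record Result(long pairChecks, long matches) {}

    // Like two unconstrained patterns plus a trailing eval:
    // every customer/order pair is built first, then tested.
    static Result lateFilter(List<Customer> customers, List<Order> orders) {
        long checks = 0, matches = 0;
        for (Customer c : customers) {
            for (Order o : orders) {
                checks++;
                if (c.vip() && o.total() > 1000 && o.customerId() == c.id()) matches++;
            }
        }
        return new Result(checks, matches);
    }

    // Like constraints written inside the patterns: single-fact tests
    // (vip, total > 1000) shrink each side before any pair is built.
    static Result earlyFilter(List<Customer> customers, List<Order> orders) {
        List<Customer> vips = new ArrayList<>();
        for (Customer c : customers) if (c.vip()) vips.add(c);
        List<Order> big = new ArrayList<>();
        for (Order o : orders) if (o.total() > 1000) big.add(o);
        long checks = 0, matches = 0;
        for (Customer c : vips) {
            for (Order o : big) {
                checks++;
                if (o.customerId() == c.id()) matches++;
            }
        }
        return new Result(checks, matches);
    }

    // Made-up data: 100 customers (every tenth is a VIP) and 1,000 orders.
    static List<Customer> sampleCustomers() {
        List<Customer> list = new ArrayList<>();
        for (int i = 0; i < 100; i++) list.add(new Customer(i, i % 10 == 0));
        return list;
    }

    static List<Order> sampleOrders() {
        List<Order> list = new ArrayList<>();
        for (int i = 0; i < 1_000; i++) list.add(new Order(i % 100, (i % 7) * 300));
        return list;
    }

    public static void main(String[] args) {
        List<Customer> customers = sampleCustomers();
        List<Order> orders = sampleOrders();
        System.out.println("late filter:  " + lateFilter(customers, orders));
        System.out.println("early filter: " + earlyFilter(customers, orders));
    }
}
```

With this made-up data, both versions find the same 42 matches, but the late filter tests 100,000 pairs while the early filter tests 4,280.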

A use case for drools-metric

This example demonstrates the performance benefits gained from updating business rules syntax in a common use case. The files for this example are available in the MetricLogUtils repository's src/main/resources folder:

- Clone the project and enter its folder:

$ git clone https://github.com/tkobayas/MetricLogUtils.git
$ cd MetricLogUtils/use-cases/use-case-join/

- Run the project tests with the default rule implementation:

$ mvn test

- Check the result in the logs. You should see a line that reports the elapsed time:

elapsed time (ms)

Also, notice the delay time for AccumulateNode(8).

- Check the differences between the rule's implementation in the files Sample.drl and Sample.drl.fix1. You will find these files in the project's src/main/resources/com/sample/ folder.

- To improve rule performance, replace the contents of the file Sample.drl with the implementation available in Sample.drl.fix1.

- Rerun the test and check the results:

$ mvn test

- Perform the same steps with the file Sample.drl.fix2 and notice the performance improvements.

Using drools-metric in Decision Manager 7.9

To use the new drools-metric module in Decision Manager 7.9, add the org.drools:drools-metric Maven dependency to your rules project and enable the trace log for the org.drools.metric.util.MetricLogUtils class. For more details, see Chapter 20, "Performance tuning considerations with DRL," in the Red Hat Decision Manager 7.9 documentation.

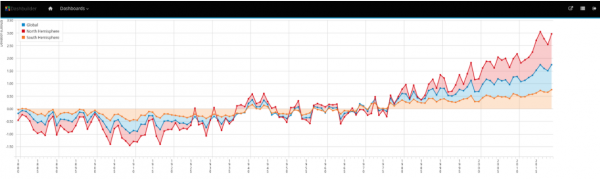

A new automated pipeline for business dashboards

Process Automation Manager 7.8 introduced the Dashbuilder component for deploying business dashboards in standalone environments. Dashbuilder lets you create business dashboards in Business Central and deploy reports across multiple environments. Figure 5 shows a dashboard report exposing key performance indicators (KPIs) collected in real time.

Starting in Process Automation Manager 7.9, you can use REST APIs or a user interface (UI) to manage dashboards. An automated pipeline lets you export, import, and even deploy multiple dashboards with a single Dashbuilder component.

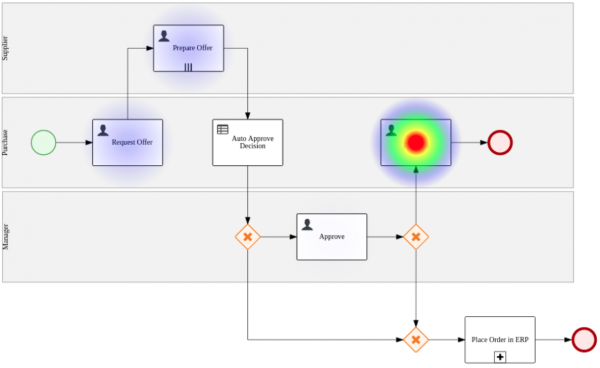

We have also improved report customization. You can now develop your own components or use third-party components to display business data. The Heatmap component displayed in Figure 6 is one of the many components freely available from the Dashbuilder community. Heatmap shows at a glance which nodes are triggered most often and which nodes take the most time to execute.
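The two questions a process heatmap answers, how often each node runs and how long it takes, come down to a simple aggregation over node-execution records. The following Java sketch is hypothetical: the NodeEvent record, the node names, and the durations are invented, and it does not reflect how the Dashbuilder Heatmap component actually reads or computes its data.

```java
import java.util.List;
import java.util.LongSummaryStatistics;
import java.util.Map;
import java.util.TreeMap;
import java.util.stream.Collectors;

// Hypothetical aggregation behind a process heatmap (not Dashbuilder code).
class HeatmapSketch {
    // One node execution: which node ran and how long it took.
    record NodeEvent(String node, long durationMs) {}

    // Groups events by node name (sorted); each entry holds count, min, max, and average duration.
    static Map<String, LongSummaryStatistics> aggregate(List<NodeEvent> events) {
        return events.stream().collect(Collectors.groupingBy(
                NodeEvent::node, TreeMap::new, Collectors.summarizingLong(NodeEvent::durationMs)));
    }

    public static void main(String[] args) {
        List<NodeEvent> events = List.of(
                new NodeEvent("Review order", 1200),
                new NodeEvent("Send to Kafka", 40),
                new NodeEvent("Review order", 1800),
                new NodeEvent("Review order", 1500),
                new NodeEvent("Send to Kafka", 60));
        aggregate(events).forEach((node, s) -> System.out.printf(
                "%-15s runs=%d avg=%.1f ms%n", node, s.getCount(), s.getAverage()));
    }
}
```

A heatmap would then map the count (frequency) or the average (cost) of each node to a color on the process diagram.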

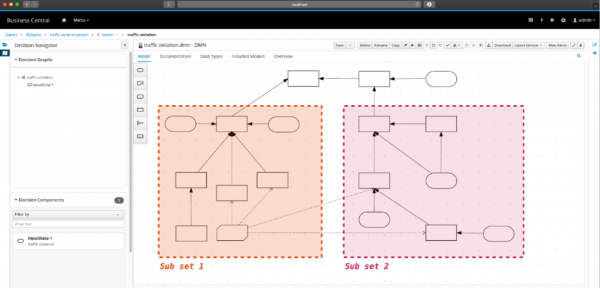

Multiple DRDs in DMN models

You can now use multiple decision requirements diagrams (DRDs) in your Decision Model and Notation (DMN) models. This lets you better organize decision logic by splitting complex decisions by domain. Figure 7 illustrates using multiple DRDs to break down two sets of key decisions into two smaller sets.

Watch the video: DMN—Multiple DRDs support in Process Automation Manager 7.9

As shown in the following video demonstration, you can easily manipulate your nodes and move them to a new diagram or an existing one. The video also demonstrates how to navigate diagrams, rename them, and model your decision in a self-contained DMN model.

Spring Boot integration improvements

This release also improves Process Automation Manager's integration with Spring Boot by enhancing audit data replication and allowing you to create self-contained JAR deployments.

Conclusion

This article presented a sample of the many new features in Red Hat Process Automation Manager 7.9. See the release notes for Process Automation Manager 7.9 and Decision Manager 7.9 to learn more about the Spring Boot integration, bug fixes, security improvements, and more.

Last updated: May 18, 2021