Running AI evaluations in production is not a one-time script. It is a continuous operational discipline. This includes scheduling compute-intensive jobs, managing concurrency, tracking experiments across model versions, enforcing resource quotas for workloads that compete for scarce accelerator resources, and surviving cluster restarts without losing state.

If you have operated production machine learning (ML) workloads, you know that gluing together cron jobs, shell scripts, and a shared Jupyter notebook is not a strategy—it is technical debt on a countdown timer.

This is the first post in a series covering how to build a scalable, reproducible AI evaluation infrastructure using the EvalHub project and Red Hat AI. The series includes:

- Part 1: How EvalHub manages two-layer Kubernetes control planes

- Part 2: EvalHub: Because "looks good to me" isn't a benchmark

A framework-agnostic orchestration layer

Red Hat AI 3.4 introduces the evaluation hub, a unified control plane for AI evaluation and safety capabilities based on the upstream EvalHub project.

The core value proposition is simple: We do not force you to choose the evaluation frameworks we "think" are best. Because new evaluation harnesses and safety techniques are published almost every week, the evaluation hub acts as a framework-agnostic orchestration layer.

Whether you are using industry-standard tools like Garak and lm-evaluation-harness or your own proprietary custom scripts, the hub allows you to:

- Onboard any framework: Move to the latest techniques the moment they are released.

- Scale consistently: Run diverse evaluation tasks across your Kubernetes or Red Hat OpenShift cluster without manual plumbing.

- Ensure immutability: Automatically track every result as an experiment in MLflow and generate immutable OCI artifacts for a verifiable audit trail.

Evaluations are not run on Kubernetes as an afterthought; the cluster is the control plane. This post walks through exactly how that works, from the custom resource definition (CRD) to the executor that runs your benchmarks.

The big picture: A two-layer control plane

EvalHub's Kubernetes architecture is organized into two distinct layers.

The first layer is the TrustyAI Service Operator. This standard Kubernetes operator manages the lifecycle of EvalHub instances. It deploys the service, configures environment variables (including the MLflow tracking URI), and controls replica counts. It also ensures the deployment stays converged against the desired state declared in an EvalHub custom resource.

The second layer is the EvalHub Server. It acts as the evaluation orchestration control plane. It exposes a versioned REST API (/api/v1/...) and receives evaluation requests from clients such as code, notebooks, CI pipelines, or UIs. It then sends these requests to the appropriate evaluation backend through its executor factory.

These two layers interact through the standard Kubernetes declarative model. You declare the desired state, the operator ensures it exists, and the server handles the runtime logic.
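The declarative contract can be illustrated with a toy reconcile step. This is a minimal Python sketch, not the TrustyAI Service Operator's code (which is a Kubernetes controller); the `DesiredState` and `ObservedState` types and the action strings are hypothetical, chosen only to show how a controller compares the declared spec with what exists and emits the changes needed to converge.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class DesiredState:
    """Hypothetical view of an EvalHub custom resource spec."""
    replicas: int
    env: dict[str, str] = field(default_factory=dict)


@dataclass
class ObservedState:
    """Hypothetical view of the Deployment that currently exists."""
    replicas: int
    env: dict[str, str] = field(default_factory=dict)


def reconcile(desired: DesiredState, observed: ObservedState | None) -> list[str]:
    """Return the actions needed to converge observed state onto desired state.

    Running it again once the actions are applied yields no actions; this
    idempotence is what lets a controller re-run safely after a restart.
    """
    if observed is None:
        return [f"create deployment replicas={desired.replicas}"]
    actions = []
    if observed.replicas != desired.replicas:
        actions.append(f"scale {observed.replicas}->{desired.replicas}")
    # Compare every env var that appears on either side, in a stable order.
    for key in sorted(desired.env.keys() | observed.env.keys()):
        want, have = desired.env.get(key), observed.env.get(key)
        if want != have:
            actions.append(f"set env {key}={want}" if want is not None else f"unset env {key}")
    return actions
```

A real operator runs this comparison every time the custom resource or the Deployment changes, which is why editing a single field such as `replicas` is enough to trigger a rollout.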

The EvalHub custom resource

You can deploy EvalHub to a Kubernetes or Red Hat OpenShift cluster using a single YAML declaration managed by the TrustyAI Service Operator:

```yaml
apiVersion: trustyai.opendatahub.io/v1alpha1
kind: EvalHub
metadata:
  name: evalhub
  namespace: my-namespace
spec:
  replicas: 1
  env:
    - name: MLFLOW_TRACKING_URI
      value: "http://mlflow:5000"
```

The operator watches for these resources and reconciles them into a running deployment. The EvalHub CRD is part of the trustyai.opendatahub.io/v1alpha1 API group, the same group that governs TrustyAI fairness, explainability, and guardrails services. This co-location is intentional: EvalHub is a peer component in the TrustyAI Operator extending the AI safety ecosystem.

Inside the EvalHub server

Once the operator brings the EvalHub pod online, the server handles the runtime orchestration. The server is written in Go. It uses the standard net/http router, structured JSON logging with zap, and Prometheus metrics. These features make it compatible with existing OpenShift observability stacks without configuration overhead.

The storage layer is abstracted behind a pluggable interface. It supports in-memory SQLite for local development and single-node testing, and PostgreSQL for production multi-replica deployments. Configuration is loaded from config/config.yaml, and credentials are provided through Kubernetes Secrets.

Providers, or the evaluation backends, are defined in YAML configuration files shipped with the TrustyAI operator. The default set includes lm-evaluation-harness (with 167 benchmarks), Garak, GuideLLM, and LightEval. You can integrate additional providers, such as RAGAS, MTEB, and IBM CLEAR, using EvalHub Contrib adapters. You can register providers by adding a YAML entry to the providers ConfigMap or by using the API—no code changes required. The new provider immediately appears in the standard /api/v1/evaluations/providers and /api/v1/evaluations/benchmarks endpoints.

The executor factory: How evaluation backends are invoked

When evaluation job requests arrive at the EvalHub service, the server first identifies the backend runtime. This internal abstraction manages the workflow based on the environment where the service runs. When you deploy EvalHub using the Open Data Hub (ODH) operator, the runtime environment is Kubernetes or OpenShift. For local execution on a workstation or server, a different runtime implementation is used that does not rely on Kubernetes at all.

In a Kubernetes context, each evaluation or benchmark runs as a separate Job, so benchmarks run in isolation from each other. A sidecar container sends real-time progress and status information back to the service. Because the sidecar handles this communication, the evaluation code does not have to deal with platform details, which makes it easier to write and integrate custom evaluations.

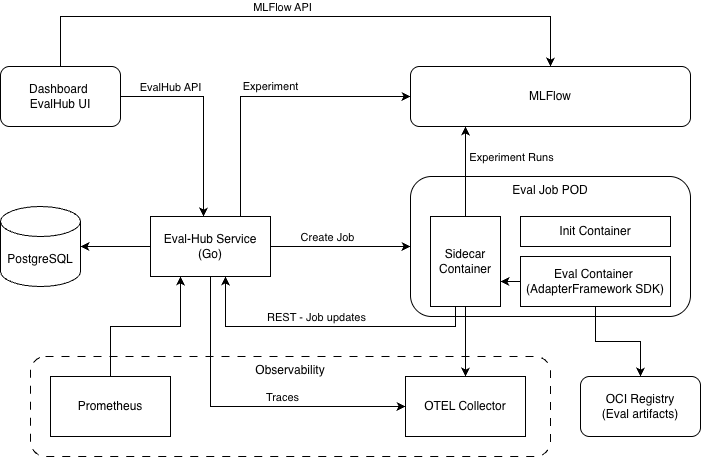

Figure 1 illustrates the EvalHub architecture.
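The runtime split described above can be sketched as a small factory. The EvalHub server itself is written in Go, so this Python version only mirrors the idea. Detecting Kubernetes through the `KUBERNETES_SERVICE_HOST` environment variable (which Kubernetes normally injects into every pod) is an assumption for illustration, as are the `Runtime` classes and the Job naming scheme; they are not EvalHub's actual types.

```python
from __future__ import annotations

import os
from typing import Mapping, Protocol


class Runtime(Protocol):
    def submit(self, job_id: str, benchmark: str) -> str: ...


class KubernetesRuntime:
    """One isolated Job per benchmark (hypothetical naming scheme)."""

    def submit(self, job_id: str, benchmark: str) -> str:
        # A real implementation would create a batch/v1 Job plus the progress sidecar.
        return f"job/{job_id}-{benchmark}"


class LocalRuntime:
    """Runs benchmarks as local processes; no Kubernetes API involved."""

    def submit(self, job_id: str, benchmark: str) -> str:
        return f"process/{job_id}-{benchmark}"


def select_runtime(environ: Mapping[str, str] = os.environ) -> Runtime:
    # Kubernetes sets KUBERNETES_SERVICE_HOST in a pod's environment.
    if "KUBERNETES_SERVICE_HOST" in environ:
        return KubernetesRuntime()
    return LocalRuntime()
```

Keeping the runtime behind one interface means the request-handling code never branches on "am I in a cluster?"; only the factory does.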

EvalHub supports four categories of executors: lm-evaluation-harness, LightEval, GuideLLM, and custom (BYOF) frameworks.

lm-evaluation-harness

This executor is the default for capability benchmarks. The server routes requests to the lm-evaluation-harness library from EleutherAI. This library runs tasks in batches and returns structured results. Collection-based evaluations group all lm-eval tasks into a single backend call for efficiency, avoiding the overhead of separate invocations per benchmark.
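The batching behavior can be sketched as a grouping step: benchmarks are bucketed by provider, and every lm-evaluation-harness task lands in a single invocation. The provider ID `lm_evaluation_harness` and the tuple-based benchmark representation are hypothetical, and giving every other provider one call per benchmark is an assumption made only for this sketch.

```python
from __future__ import annotations

from collections import defaultdict

LM_EVAL = "lm_evaluation_harness"  # hypothetical provider ID


def plan_invocations(benchmarks: list[tuple[str, str]]) -> list[tuple[str, list[str]]]:
    """Turn (provider_id, benchmark_id) pairs into backend invocations.

    All lm-evaluation-harness tasks are batched into one call; in this
    sketch every other provider gets one call per benchmark.
    """
    by_provider: dict[str, list[str]] = defaultdict(list)
    for provider, benchmark in benchmarks:
        by_provider[provider].append(benchmark)

    invocations = []
    for provider, tasks in by_provider.items():
        if provider == LM_EVAL:
            invocations.append((provider, tasks))  # one batched call
        else:
            invocations.extend((provider, [task]) for task in tasks)
    return invocations
```

The saving comes from paying model-loading and harness start-up costs once per collection instead of once per task.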

LightEval

Use LightEval for fast, lightweight capability evaluations. LightEval is registered as a first-class provider in the EvalHub default set. Its execution model uses the bring your own framework (BYOF) adapter pattern. The server provisions a Kubernetes Job running the pre-built quay.io/evalhub/community-lighteval:latest container image with python main.py as its entrypoint. That container reads its JobSpec from a ConfigMap mounted at /meta/job.json. It runs the LightEval framework and reports results back to EvalHub through the sidecar callback URL. No Kubeflow Pipelines endpoint is involved. The provider YAML declares this deployment model:

```yaml
id: lighteval
runtime:
  k8s:
    image: quay.io/evalhub/community-lighteval:latest
    entrypoint: [python, main.py]
    cpu_request: 100m
    memory_request: 128Mi
```

This is the same pattern any BYOF adapter uses. The difference is that LightEval ships as a ready-made adapter image, so you do not have to build your own. If you want to build a custom LightEval integration via the SDK's FrameworkAdapter, the execution is identical: run_benchmark_job calls LightEval's Python library in-process inside your adapter pod.
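The container side of this contract, reading the mounted JobSpec, can be sketched in a few lines. The path `/meta/job.json` comes from the text above; the field names (`id`, `benchmark_id`, `callback_url`) are hypothetical placeholders, so check the actual JobSpec schema before relying on them. The path is a parameter so the function can be exercised outside a pod.

```python
from __future__ import annotations

import json
from dataclasses import dataclass
from pathlib import Path

JOB_SPEC_PATH = Path("/meta/job.json")  # ConfigMap mount point described above


@dataclass(frozen=True)
class JobSpec:
    job_id: str
    benchmark_id: str
    callback_url: str


def load_job_spec(path: Path = JOB_SPEC_PATH) -> JobSpec:
    """Parse the mounted JobSpec; the key names here are hypothetical."""
    raw = json.loads(path.read_text(encoding="utf-8"))
    missing = [k for k in ("id", "benchmark_id", "callback_url") if k not in raw]
    if missing:
        # Fail fast: a pod without a usable spec should not start evaluating.
        raise ValueError(f"JobSpec is missing fields: {missing}")
    return JobSpec(raw["id"], raw["benchmark_id"], raw["callback_url"])
```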

GuideLLM

Use GuideLLM for infrastructure performance profiling. GuideLLM measures throughput, latency, memory usage, and token cost across hardware configurations. This makes it the right tool when the question is not "How accurate is the model?" but "How fast, at what cost, and on which hardware?"

Like other providers, GuideLLM is declared in a YAML configuration file and exposed through the standard /api/v1/evaluations/providers endpoint.
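To make those performance metrics concrete, here is how throughput and latency percentiles can be derived from per-request timings. This is a generic illustration using nearest-rank percentiles, not GuideLLM's implementation or report format; the `RequestTiming` record is hypothetical.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestTiming:
    start_s: float      # request sent (seconds)
    end_s: float        # last token received (seconds)
    output_tokens: int


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile, pct in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(timings: list[RequestTiming]) -> dict[str, float]:
    # Throughput is measured over the wall-clock window covering all requests.
    wall = max(t.end_s for t in timings) - min(t.start_s for t in timings)
    latencies = [t.end_s - t.start_s for t in timings]
    return {
        "requests_per_s": len(timings) / wall,
        "output_tokens_per_s": sum(t.output_tokens for t in timings) / wall,
        "p50_latency_s": percentile(latencies, 50),
        "p95_latency_s": percentile(latencies, 95),
    }
```

Tail percentiles such as p95 matter more than averages here, because a hardware configuration with good mean latency can still miss a latency target under load.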

Custom (BYOF)

Use the EvalHub SDK FrameworkAdapter base class to bring your own framework. To use a custom framework, implement the run_benchmark_job method, use the provided callbacks for progress reporting, and register the provider using a ConfigMap. EvalHub handles scheduling, status tracking, and result aggregation automatically.
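The shape of a BYOF adapter can be sketched with stand-in classes. The real `FrameworkAdapter` and `JobCallbacks` ship with eval-hub-sdk and their exact signatures are not reproduced here; `AdapterBase`, `RecordingCallbacks`, and the `report_progress` method are hypothetical so the example runs without the SDK. The point is the division of labor: your code runs the benchmark and reports through callbacks, while EvalHub handles scheduling and aggregation.

```python
from __future__ import annotations

from abc import ABC, abstractmethod
from dataclasses import dataclass, field


@dataclass
class RecordingCallbacks:
    """Hypothetical stand-in for JobCallbacks; records what it receives."""
    progress: list[tuple[int, int]] = field(default_factory=list)
    results: dict[str, float] | None = None

    def report_progress(self, done: int, total: int) -> None:
        self.progress.append((done, total))

    def report_results(self, metrics: dict[str, float]) -> None:
        self.results = metrics


class AdapterBase(ABC):
    """Hypothetical stand-in for FrameworkAdapter: subclasses implement one method."""

    @abstractmethod
    def run_benchmark_job(self, samples: list[tuple[str, str]], callbacks: RecordingCallbacks) -> None: ...


class ExactMatchAdapter(AdapterBase):
    """Toy 'framework' that scores exact-match accuracy of (prediction, reference) pairs."""

    def run_benchmark_job(self, samples, callbacks):
        correct = 0
        for i, (prediction, reference) in enumerate(samples, start=1):
            correct += prediction.strip() == reference.strip()
            callbacks.report_progress(i, len(samples))
        callbacks.report_results({"exact_match": correct / len(samples)})
```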

The EvalHub SDK: Adapter, client, CLI, and MCP server

The eval-hub-sdk is a Python package available via pip install eval-hub-sdk. It includes a registered evalhub command-line interface (CLI) entry point and four modules under src/evalhub/.

evalhub.adapter

This module provides the bring your own framework (BYOF) adapter layer. When you implement run_benchmark_job() and use JobCallbacks for status reporting and OCI artifact persistence, your evaluation logic runs as a portable adapter. In production, EvalHub deploys it as a Kubernetes Job pod and manages all scheduling and result aggregation. Locally, you can instantiate the adapter directly for development and testing. The adapter also includes built-in MLflow integration (callbacks.mlflow.save(...)). This supports both the ODH client and the upstream mlflow library.

evalhub.client

This module includes typed synchronous and asynchronous REST clients (EvalHubClient and AsyncEvalHubClient). Use these to programmatically submit jobs and manage providers, benchmarks, and collections. These clients support multi-tenant deployments through a per-request tenant parameter.
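A rough picture of what a tenant-aware client sends on the wire: a JSON `POST` to `/api/v1/evaluations` with an `X-Tenant` header set once per client and overridable per call. This standard-library sketch is not `EvalHubClient`; the class name `MiniEvalClient`, the payload keys (`model`, `benchmarks`, `experiment`), and the base URL are assumptions. Request construction is separated from sending so it can be inspected without a running server.

```python
from __future__ import annotations

import json
import urllib.request


class MiniEvalClient:
    """Hypothetical minimal client; the SDK's real clients are EvalHubClient/AsyncEvalHubClient."""

    def __init__(self, base_url: str, tenant: str | None = None) -> None:
        self.base_url = base_url.rstrip("/")
        self.tenant = tenant

    def build_submit_request(self, payload: dict, tenant: str | None = None) -> urllib.request.Request:
        headers = {"Content-Type": "application/json"}
        effective_tenant = tenant or self.tenant  # a per-call override wins
        if effective_tenant:
            headers["X-Tenant"] = effective_tenant
        # Note: urllib normalizes header names to "X-tenant"; HTTP headers are case-insensitive.
        return urllib.request.Request(
            f"{self.base_url}/api/v1/evaluations",
            data=json.dumps(payload).encode("utf-8"),
            headers=headers,
            method="POST",
        )

    def submit(self, payload: dict, tenant: str | None = None) -> dict:
        # Sends the request and decodes the JSON response body.
        with urllib.request.urlopen(self.build_submit_request(payload, tenant)) as resp:
            return json.load(resp)
```

For example, `MiniEvalClient("http://evalhub:8080", tenant="team-a").build_submit_request({...})` produces a request scoped to `team-a`; passing `tenant="team-b"` on a single call redirects only that call.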

evalhub.cli

The evalhub.cli module is a fully implemented CLI (evalhub) built on Click. It includes the following command groups:

- evalhub eval run/status/results/cancel: Submit jobs using YAML or JSON configurations or inline flags. You can watch the status with --wait or --watch and retrieve results in formats such as table, JSON, YAML, or CSV.

- evalhub collections list/describe/create/delete/run: Manage and execute benchmark collections.

- evalhub providers list/describe: Inspect registered providers and their benchmarks.

- evalhub health: Check service availability with response time.

- evalhub config set/get/list/use: Manage multi-profile config at ~/.config/evalhub/config.yaml.

- evalhub mcp: Start the Model Context Protocol (MCP) server in stdio transport mode.

evalhub.mcp (in developer preview)

This module provides an MCP server built on FastMCP. It exposes nine MCP resources, including providers, benchmarks, collections, and jobs, which AI agents can browse. It also includes two MCP tools: submit_evaluation and cancel_job. Resource template parameters include autocomplete support. You can start the server using evalhub mcp or use it directly as an AsyncEvalHubClient wrapper.

OCI artifact persistence

The adapter's Open Container Initiative (OCI) interface is fully implemented, not a placeholder. OCIArtifactPersister uses olot to create a compliant OCI layout on disk and oras-py to push it to a registry. In Kubernetes mode, the sidecar acts as an OCI proxy, so the adapter doesn't need to handle registry credentials directly; the sidecar manages authentication. In local mode, the system uses standard Docker configuration file authentication.

```python
from pathlib import Path

# `callbacks` is the JobCallbacks object used inside run_benchmark_job();
# OCIArtifactSpec and OCICoordinates are provided by eval-hub-sdk.
artifact = callbacks.create_oci_artifact(OCIArtifactSpec(
    files_path=Path("./results/"),
    coordinates=OCICoordinates(
        oci_host="quay.io",
        oci_repository="myorg/eval-results",
        annotations={"score": "0.85"},
    ),
))

# artifact.reference → "quay.io/myorg/eval-results:evalhub-<job_sha>@sha256:..."
# artifact.digest → "sha256:..."
```

The OCI artifact reference, including the host, repository, and a SHA256-based tag, is included in the report_results() callback to EvalHub. This makes the reference queryable alongside MLflow experiment data.
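The `sha256:...` digest in that reference is a content address. In the OCI image specification, a digest is an algorithm identifier, a colon, and the encoded hash (lowercase hex for sha256), so identical bytes always produce the same digest. The helpers below show that addressing scheme on raw bytes and how a consumer can check pulled content against a recorded digest. They are an illustration only: they do not build manifests or push anything, and a real artifact reference digest is computed over the manifest, not over the result files themselves.

```python
import hashlib


def oci_digest(content: bytes) -> str:
    """OCI-style digest string: '<algorithm>:<lowercase hex>' (sha256 here)."""
    return "sha256:" + hashlib.sha256(content).hexdigest()


def verify_blob(content: bytes, expected_digest: str) -> bool:
    """True if content hashes to expected_digest, e.g. after pulling a blob."""
    algorithm, _, expected_hex = expected_digest.partition(":")
    if algorithm != "sha256":
        raise ValueError(f"unsupported digest algorithm: {algorithm}")
    return hashlib.sha256(content).hexdigest() == expected_hex.lower()
```

This property is what makes the audit trail verifiable: anyone holding the reference can re-hash what they pulled and detect tampering or drift.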

MLflow: The experiment memory

When an evaluation request includes an experiment configuration, the EvalHub server creates or reuses an MLflow experiment and associates the run with it. You provide the experiment name and optional tags. The server then injects additional metadata so that every tracked run is attributable without manual bookkeeping.

```json
{
  "name": "llama-3-healthcare-safety-v2",
  "tags": [
    {"key": "environment", "value": "production"},
    {"key": "model_family", "value": "llama"},
    {"key": "collection", "value": "healthcare_safety_v1"}
  ]
}
```

If the named experiment exists and is active, EvalHub reuses it. Otherwise, it creates a new one. In multi-tenant deployments, experiments are scoped to the tenant's namespace. This ensures job pods can reach the tracking server with matching credentials.
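The reuse-or-create rule, scoped per tenant, can be sketched against an in-memory store. In MLflow itself the corresponding calls are `mlflow.get_experiment_by_name()` and `mlflow.create_experiment()`, and an experiment's `lifecycle_stage` distinguishes active from deleted. The `ExperimentStore` class and the injected `evalhub.tenant` tag are hypothetical, not what the EvalHub server writes, and this sketch simply creates a fresh record when the named experiment is missing or deleted.

```python
from __future__ import annotations

import itertools
from dataclasses import dataclass


@dataclass
class Experiment:
    experiment_id: str
    name: str
    tags: dict[str, str]
    lifecycle_stage: str = "active"


class ExperimentStore:
    """Hypothetical in-memory stand-in for a tenant-scoped tracking server."""

    def __init__(self) -> None:
        self._by_key: dict[tuple[str, str], Experiment] = {}
        self._ids = itertools.count(1)

    def get_or_create(self, tenant: str, config: dict) -> Experiment:
        key = (tenant, config["name"])  # same name in different tenants never collides
        existing = self._by_key.get(key)
        if existing is not None and existing.lifecycle_stage == "active":
            return existing  # reuse the active experiment
        # Caller tags arrive as [{"key": ..., "value": ...}]; flatten them and
        # add server-side metadata (hypothetical tag name).
        tags = {t["key"]: t["value"] for t in config.get("tags", [])}
        tags["evalhub.tenant"] = tenant
        experiment = Experiment(str(next(self._ids)), config["name"], tags)
        self._by_key[key] = experiment
        return experiment
```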

The adapter SDK's built-in MLflow integration logs evaluation metrics, job configuration, and model information to the experiment run. Adapters can also save additional metadata and artifacts, such as per-sample predictions, confusion matrices, or custom analysis outputs. This gives you full control over the data captured alongside standard evaluation results.

Platform teams have a single queryable store for evaluation history across model versions and benchmark suites. The experiment record captures the exact configuration that produced the scores. This makes reproducibility a standard feature rather than an afterthought.

Observability out of the box

EvalHub ships with three observability surfaces:

- Health checks: Kubernetes liveness and readiness probes are configured in the deployment manifests. This ensures Kubernetes restarts unhealthy pods and routes traffic only to ready instances.

- Prometheus metrics: The server instruments request counts, request duration, and evaluation statistics at the /metrics endpoint. It uses standard Prometheus and OpenTelemetry (OTEL) exposition formats. You can scrape these metrics using OpenShift monitoring without any additional configuration.

- Structured logging: All logs are emitted as structured JSON in production mode. In development, logs use human-readable console output. Every log line includes request and evaluation IDs for correlation across distributed traces.
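The server implements structured logging with zap in Go. The same idea in Python's standard `logging` module is a formatter that emits one JSON object per line and carries correlation IDs when present. The field names `request_id` and `evaluation_id` are assumptions for illustration, not EvalHub's exact log schema.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each record as a single JSON line, adding correlation IDs if set."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "ts": self.formatTime(record),
            "level": record.levelname.lower(),
            "msg": record.getMessage(),
        }
        for key in ("request_id", "evaluation_id"):  # hypothetical field names
            value = getattr(record, key, None)
            if value is not None:
                entry[key] = value
        return json.dumps(entry)


def make_logger(stream) -> logging.Logger:
    """Logger that writes JSON lines to the given stream."""
    logger = logging.getLogger("evalhub-sketch")
    logger.handlers.clear()
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger
```

Usage: `logger.info("job dispatched", extra={"request_id": "r-1", "evaluation_id": "e-42"})`. Because every line is a self-contained JSON object, log collectors can filter on `evaluation_id` without regex parsing.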

Horizontal scaling

Because EvalHub state lives in PostgreSQL (in production) and experiment data stays in MLflow, the server is stateless and horizontally scalable. The EvalHub CR spec.replicas field controls the pod count directly. When combined with Kueue ClusterQueue capacity enforcement, scaling the evaluation control plane only requires adjusting a single integer and a resource quota. This removes the need for distributed coordination code.

Multi-tenant architecture

EvalHub is designed for shared platforms where teams, projects, or business units require isolated evaluation environments within the same cluster. The multi-tenancy model works across two levels.

API-level tenant isolation

At the REST API layer, every request includes an X-Tenant HTTP header that identifies the tenant. The storage layer uses a chainable builder interface, WithTenant(). This ensures every SQL query is automatically filtered by tenant scope without extra logic in every handler. You can register providers as system-scoped or tenant-scoped. System-scoped providers are shared and read-only across all tenants, while tenant-scoped providers are private and mutable.

MLflow experiment namespacing follows the same boundary. Each evaluation run is scoped to a tenant-specific namespace so job pods can reach the tracking server with the correct credentials. The SDK's EvalHubClient and AsyncEvalHubClient accept a tenant parameter at initialization; when it is set, the client includes the X-Tenant header in every request. You can also use a per-call override if a single client needs to act across different tenants.
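The `WithTenant()` idea, where tenant scoping is applied once in the storage layer instead of in every handler, can be sketched as an immutable query builder. The real storage layer is Go; this Python version, its `Query` class, and the `evaluations` table schema are hypothetical. It runs against an in-memory SQLite database so it works anywhere.

```python
from __future__ import annotations

import sqlite3
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Query:
    """Immutable builder: each with_tenant()/where() call returns a new, narrower query."""
    table: str
    filters: tuple[tuple[str, object], ...] = ()

    def with_tenant(self, tenant: str) -> Query:
        return replace(self, filters=self.filters + (("tenant", tenant),))

    def where(self, column: str, value: object) -> Query:
        return replace(self, filters=self.filters + ((column, value),))

    def to_sql(self) -> tuple[str, list[object]]:
        # Table and column names come from code, never from user input;
        # values are always bound as parameters.
        sql = f"SELECT id FROM {self.table}"
        if self.filters:
            sql += " WHERE " + " AND ".join(f"{col} = ?" for col, _ in self.filters)
        return sql + " ORDER BY id", [value for _, value in self.filters]


def fetch_ids(db: sqlite3.Connection, query: Query) -> list[str]:
    sql, params = query.to_sql()
    return [row[0] for row in db.execute(sql, params)]
```

Because the builder is immutable, a handler can receive a pre-scoped `Query("evaluations").with_tenant(t)` and add its own filters without any way to drop the tenant condition.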

Kubernetes namespace-level isolation

On the infrastructure side, the EvalHub operator uses a label-driven discovery model rather than an explicit tenant registry. The system automatically onboards any Kubernetes namespace labeled evalhub.trustyai.opendatahub.io/tenant=true as a tenant. The operator's reconcileTenantNamespaces() function watches Namespace resources and filters them by label. It then provisions the following objects in each tenant namespace: a dedicated job ServiceAccount, RoleBindings granting the API ServiceAccount permission to create and manage Jobs and ConfigMaps in that namespace, and a Service CA ConfigMap for TLS callback injection on OpenShift.
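The label-driven discovery step reduces to a label filter plus a fixed set of per-namespace objects. This Python sketch operates on plain dicts shaped like Kubernetes Namespace objects. The label key comes from the text above; the object names it plans (`evalhub-job`, `evalhub-api-jobs`, `evalhub-service-ca`) are hypothetical and not necessarily what the operator creates. The real `reconcileTenantNamespaces()` is Go code that creates these objects through the Kubernetes API.

```python
from __future__ import annotations

TENANT_LABEL = "evalhub.trustyai.opendatahub.io/tenant"


def tenant_namespaces(namespaces: list[dict]) -> list[str]:
    """Names of namespaces labeled TENANT_LABEL=true (label values are strings)."""
    return [
        ns["metadata"]["name"]
        for ns in namespaces
        if ns.get("metadata", {}).get("labels", {}).get(TENANT_LABEL) == "true"
    ]


def planned_objects(namespace: str) -> list[tuple[str, str, str]]:
    """(kind, namespace, name) triples to provision per tenant; names are hypothetical."""
    return [
        ("ServiceAccount", namespace, "evalhub-job"),     # identity for evaluation Job pods
        ("RoleBinding", namespace, "evalhub-api-jobs"),   # lets the API manage Jobs/ConfigMaps here
        ("ConfigMap", namespace, "evalhub-service-ca"),   # Service CA bundle for TLS callbacks
    ]
```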

Adding a new tenant requires no changes to the EvalHub deployment. Administrators simply label a namespace and the controller converges automatically. Resource quota enforcement uses standard Kubernetes ResourceQuota objects. You can apply these to each tenant namespace without extra EvalHub configuration.
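For example, a per-tenant quota can be expressed as a standard `v1` ResourceQuota. The helper below builds one as a Python dict that can be serialized with `json.dumps` and applied with `kubectl apply -f`. The quota keys are standard Kubernetes ones: extended resources such as GPUs can only be limited through a `requests.` key, and `count/jobs.batch` caps the number of Job objects in the namespace. The object name, the limits, and the `nvidia.com/gpu` resource name are example values that depend on your cluster.

```python
import json


def tenant_quota(namespace: str, cpu: str, memory: str, gpus: int, max_jobs: int) -> dict:
    """Build a standard v1 ResourceQuota manifest for one tenant namespace."""
    return {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": "evalhub-tenant-quota", "namespace": namespace},  # name is arbitrary
        "spec": {
            "hard": {
                "requests.cpu": cpu,
                "requests.memory": memory,
                # Extended resources (GPUs) support only requests.* quota keys.
                "requests.nvidia.com/gpu": str(gpus),
                # Object-count quota: total Job objects allowed in the namespace.
                "count/jobs.batch": str(max_jobs),
            }
        },
    }
```

Usage: `print(json.dumps(tenant_quota("team-a", "16", "64Gi", 2, 20), indent=2))` and pipe the output to `kubectl apply -f -`.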

Together, these layers provide a clean operational model. The X-Tenant header enforces data isolation at the application layer, while the namespace label mechanism enforces compute and credential isolation at the infrastructure layer.

The EvalHub tenancy model aligns with MLflow when you deploy MLflow with the Kubernetes-Workspace-Provider. Before a request reaches the EvalHub API layer, an authentication and authorization layer performs a token and subject access review. There are three types of Kubernetes resources protected by these rules: evaluations, providers, and collections. Administrators can create custom Roles to grant fine-grained access to specific identities, such as service accounts, users, or groups.
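Each such check is a SubjectAccessReview: the authorization layer asks the Kubernetes API server whether a subject may perform a verb on a resource in a namespace, and the server answers in `status.allowed`. The body below follows the standard `authorization.k8s.io/v1` schema. The API group string `trustyai.opendatahub.io` and the HTTP-method-to-verb mapping are assumptions for illustration, because the exact group EvalHub uses for its `evaluations`, `providers`, and `collections` rules is not stated here.

```python
from __future__ import annotations

# Hypothetical HTTP-method-to-RBAC-verb mapping, following common Kubernetes conventions.
VERBS = {"GET": "get", "POST": "create", "PUT": "update", "PATCH": "patch", "DELETE": "delete"}
PROTECTED = {"evaluations", "providers", "collections"}


def access_review(user: str, groups: list[str], method: str, resource: str, namespace: str,
                  api_group: str = "trustyai.opendatahub.io") -> dict:  # group is an assumption
    """Build a SubjectAccessReview body to POST to /apis/authorization.k8s.io/v1/subjectaccessreviews."""
    if resource not in PROTECTED:
        raise ValueError(f"not an EvalHub-protected resource: {resource}")
    return {
        "apiVersion": "authorization.k8s.io/v1",
        "kind": "SubjectAccessReview",
        "spec": {
            "user": user,
            "groups": groups,
            "resourceAttributes": {
                "group": api_group,
                "resource": resource,
                "verb": VERBS[method.upper()],
                "namespace": namespace,
            },
        },
    }
```

Because the review is namespace-scoped, a RoleBinding in `team-a` that grants `create` on `evaluations` lets a user submit jobs there without gaining any access in other tenants.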

Putting it all together

The path from a CR to a completed evaluation run looks like this:

- A user or CI system sends a POST request to /api/v1/evaluations. The request includes a model endpoint, benchmarks or a collection ID, and an experiment name.

- The EvalHub server validates the request and expands the collection into individual benchmarks. It groups these by provider and creates an async evaluation job with a unique ID.

- The executor factory dispatches each provider group to the appropriate backend adapter. For example, capability tasks go to the lm-evaluation-harness runtime, while performance profiling uses GuideLLM.

- Results flow back and are aggregated based on the weights declared in the collection YAML to produce a final composite score.

- The MLflow client writes the full experiment record, including scores, parameters, and hardware context, to the tracking server.

- The caller polls /api/v1/evaluations/{id} for status or queries MLflow directly for historical comparison.
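The aggregation step is a weighted mean. Assuming each benchmark reports a score normalized to [0, 1] and the collection YAML assigns each benchmark a non-negative weight, the composite is sum(w_i * s_i) / sum(w_i). The function below is a sketch under those assumptions; EvalHub's exact normalization and handling of missing results may differ.

```python
from __future__ import annotations


def composite_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted mean of per-benchmark scores using collection weights.

    Raises if a weighted benchmark has no result, rather than silently
    renormalizing over the benchmarks that did finish.
    """
    missing = sorted(weights.keys() - scores.keys())
    if missing:
        raise ValueError(f"no result for weighted benchmarks: {missing}")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(weights[b] * scores[b] for b in weights) / total_weight
```

For instance, with weights 2, 1, and 1 and scores 0.7, 0.5, and 0.9, the composite is (1.4 + 0.5 + 0.9) / 4 = 0.7. Failing loudly on a missing result is a deliberate choice here: a silently renormalized score would look comparable to earlier runs when it is not.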

At every step, standard Kubernetes primitives do the heavy lifting. This includes CRDs, operators, resource quotas, Prometheus, and structured logs. EvalHub does not reinvent cluster operations. It brings evaluation into your existing operational model.

The evaluation hub is now available as part of the AI evaluation and safety capabilities within Red Hat AI. Whether you are benchmarking a new model version in CI, profiling inference infrastructure with GuideLLM, or building a custom evaluation adapter with the BYOF framework, this architecture supports your production workloads. The Kubernetes controller, the EvalHub CRD, and the full SDK, including the CLI, OCI artifact interface, and REST client, represent the current state of the platform. Note that the MCP server component is currently available as a developer preview.

To get started, explore the EvalHub documentation, the eval-hub-sdk on GitHub, and the TrustyAI Service Operator for controller implementation details. If you are already running Red Hat OpenShift AI, TrustyAI is available as a platform component. To learn more about how Red Hat AI supports responsible, production-grade AI evaluation at scale, visit redhat.com/ai.

Last updated: May 19, 2026