Retrieval-augmented generation (RAG) is a practical way to answer questions using your own content (such as policies, docs, tickets, and product descriptions) without assuming a general-purpose LLM already contains that information.

At its core, RAG follows a "retrieve context, then answer" pattern. Retrieval is the part that often becomes overcomplicated. Once you store embeddings alongside text in a database, retrieval becomes a standard nearest-neighbor query. In other words: similarity is a query.

This article demonstrates a "boring" implementation using Apache Camel, PostgreSQL, and pgvector. The goal is to create a baseline that is easy to understand and debug. You can see exactly what was indexed, what was retrieved, and the context provided to the model.

If you want the bigger-picture framing (treating LLMs as semantic processors and keeping the "AI parts" at the edges), read Making LLMs boring: From chatbots to semantic processors.

A quick glossary

An embedding is a vector (a list of numbers) produced from text. Texts with similar meaning tend to end up near each other in that vector space.

Chunking is splitting a document into smaller pieces before embedding it. It's rarely optional. Without chunking, you retrieve entire documents when you only need a paragraph.
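To make the idea tangible, here is a minimal sketch of heading-based chunking. It is a simplified stand-in, not the tokenizer used later in this article; the function name chunk_markdown and the sample text are made up for illustration.

```python
import re

def chunk_markdown(text: str) -> list[str]:
    """Split Markdown into chunks, starting a new chunk at every heading line."""
    chunks, current = [], []
    for line in text.splitlines():
        # A heading (one to six '#' followed by a space) closes the previous chunk.
        if re.match(r"#{1,6} ", line) and current:
            chunks.append("\n".join(current).strip())
            current = []
        current.append(line)
    if current:
        chunks.append("\n".join(current).strip())
    # Drop chunks that ended up empty (for example, leading blank lines).
    return [c for c in chunks if c]

# Made-up sample document with two sections.
doc = "# Returns\nItems can be returned.\n\n## Exceptions\nGift cards are final sale."
chunks = chunk_markdown(doc)
for chunk in chunks:
    print(repr(chunk))
```

Each section becomes its own chunk, so a question about exceptions can retrieve that paragraph alone. Real chunkers usually also cap chunk size and carry the parent heading along as context.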

pgvector adds a vector(N) column type and distance operators, such as <=> for cosine distance, to PostgreSQL. This allows you to store embeddings and run similarity searches using SQL.
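pgvector's <=> operator returns cosine distance, which is 1 minus cosine similarity. The sketch below computes the same quantity in plain Python on toy two-dimensional vectors; the function name cosine_distance is only illustrative, and real embeddings have hundreds of dimensions.

```python
import math

def cosine_distance(a: list[float], b: list[float]) -> float:
    """Cosine distance = 1 - cosine similarity; 0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

# Vectors pointing the same way are at distance 0, regardless of length.
same_direction = cosine_distance([1.0, 0.0], [2.0, 0.0])
# Orthogonal vectors are at distance 1.
orthogonal = cosine_distance([1.0, 0.0], [0.0, 3.0])
print(same_direction, orthogonal)
```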

The anatomy of a RAG pipeline

Most RAG systems rely on three primary steps.

- First, you index the content. This involves taking the content, chunking it, embedding each chunk, and storing the text alongside its vector. This is typically a batch job.

- Second, you retrieve information. When a user asks a question, the system embeds the question and queries the database for the nearest chunks. You usually apply a similarity threshold (to avoid weak matches) and a topK (to keep context bounded).

- Third, you provide an answer. If retrieval finds no matches, the system returns a "not found" response or asks a clarifying question. If retrieval finds something, you pass the retrieved chunks into the prompt as context and tell the model to answer using only that context.

Let's make those steps concrete.

Step 1: Index (chunk → embed → store)

Indexing transforms static files into a queryable knowledge base. At a minimum, you should store the chunk text, a little metadata to help with tracing (such as the source, section ID, and document name), and the embedding vector.

With pgvector, a basic schema looks like this:

CREATE EXTENSION IF NOT EXISTS vector;

CREATE TABLE IF NOT EXISTS chunks (
    id SERIAL PRIMARY KEY,
    content TEXT NOT NULL,
    source VARCHAR(255),
    chunk_index INTEGER,
    embedding vector(768),
    created_at TIMESTAMP DEFAULT CURRENT_TIMESTAMP
);

The vector(768) dimension must match your embedding model. If you switch embedding models, you might need a different dimension (and usually a reindex).
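A dimension mismatch is easy to catch before the insert. The following sketch formats an embedding in pgvector's bracketed text form (for example, [0.1,0.2]) and fails fast when the length differs from the column definition. The helper name to_vector_literal is hypothetical and is not part of pgvector or Camel.

```python
EXPECTED_DIM = 768  # must match the vector(768) column in the schema

def to_vector_literal(embedding: list[float], expected_dim: int = EXPECTED_DIM) -> str:
    """Format an embedding as a pgvector text literal such as '[0.5,-1.0,2.0]'."""
    if len(embedding) != expected_dim:
        raise ValueError(
            f"embedding has {len(embedding)} dimensions, expected {expected_dim}"
        )
    return "[" + ",".join(repr(float(x)) for x in embedding) + "]"

# A three-dimensional toy vector, just to show the output format.
literal = to_vector_literal([0.5, -1.0, 2.0], expected_dim=3)
print(literal)
```

The resulting string can be bound as a parameter and cast with ::vector, which is the same cast the routes in this article apply.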

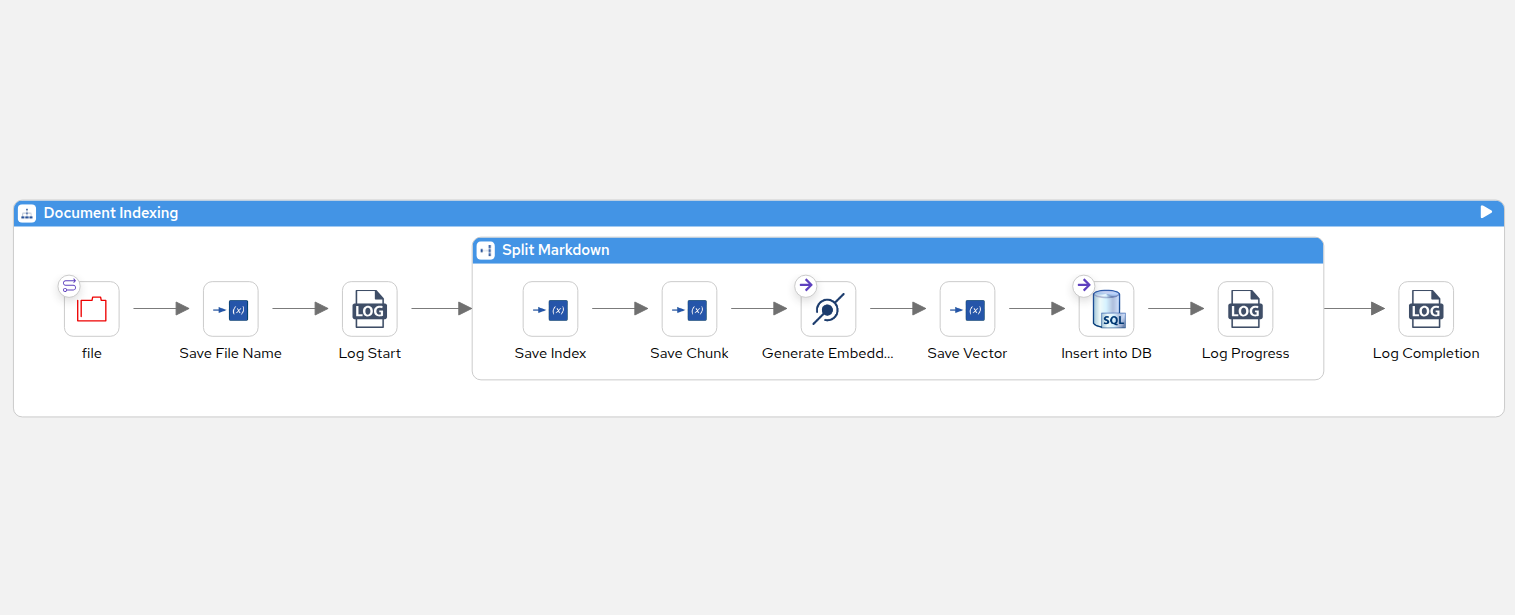

Use the following Camel route to implement the chunk → embed → store process, as shown in Figure 1:

- beans:
    - name: markdownSemanticTokenizer
      type: org.apache.camel.tokenizer.MarkdownSemanticTokenizer
      properties:
        headerMode: "RAG_CONTEXT"

- route:
    id: index-files
    description: "Document Indexing"
    from:
      uri: file:documents
      parameters:
        noop: true
        include: ".*\\.md"
      steps:
        - setVariable:
            description: "Save File Name"
            name: fileName
            simple: "${header.CamelFileName}"
        - split:
            description: "Split Markdown"
            method:
              ref: "markdownSemanticTokenizer"
            steps:
              - setVariable:
                  description: "Save Index"
                  name: chunkIndex
                  simple: "${exchangeProperty.CamelSplitIndex}"
              - setVariable:
                  description: "Save Chunk"
                  name: chunkText
                  simple: "${body.trim()}"
              - to:
                  description: "Generate Embedding"
                  uri: openai:embeddings
              - setVariable:
                  description: "Save Vector"
                  name: embeddingVector
                  simple: "${body.toString()}"
              - to:
                  description: "Insert into DB"
                  uri: sql:INSERT INTO chunks (content, source, chunk_index, embedding) VALUES (:#chunkText, :#fileName, :#chunkIndex, :#embeddingVector::vector)

Note

This route assumes you are starting with clean Markdown files. In the real world, enterprise knowledge is usually locked in PDFs or Word documents. To handle this, you can drop the camel-docling step docling:CONVERT_TO_MARKDOWN into your route. Powered by IBM's AI document parser, camel-docling understands complex document layouts (including reading order, multi-column text, and even tables) and converts them into structured Markdown.

You can then index the documents by running the following camel command:

camel run index-documents.camel.yaml utils/* application.properties

Apache Camel includes more than 300 components, allowing you to ingest documents and data from wherever your enterprise stores them: Amazon S3, Google Drive, Azure Files, message brokers, Salesforce, Jira, or secure FTP servers.

Step 2: Retrieve (similarity as SQL)

At query time, the system embeds the user's question and runs a nearest-neighbor query against the stored vectors.

In pgvector, <=> is the cosine distance operator, where a smaller value indicates a closer match. A common pattern is to convert distance into a similarity score (often 1 - distance), filter by a threshold, then take the top results.

Using camel-openai for embeddings, this workflow involves calling the openai:embeddings endpoint and then running an sql:SELECT query with the resulting vector.

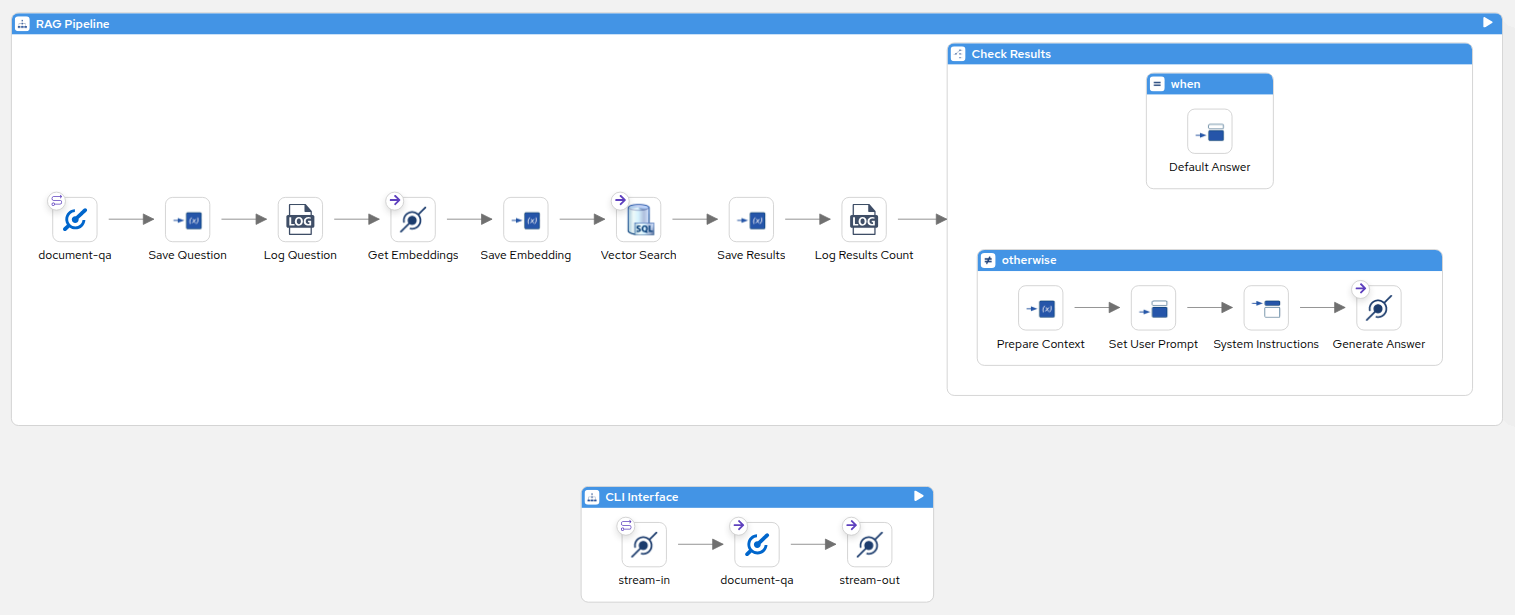

- route:
    id: document-qa-route
    description: RAG Pipeline
    from:
      description: document-qa
      uri: direct
      parameters:
        name: document-qa
      steps:
        - setVariable:
            description: Save Question
            name: question
            simple: ${body.trim()}
        - log:
            description: Log Question
            message: "Question: ${variable.question}"
        - to:
            description: Get Embeddings
            uri: openai:embeddings
        - setVariable:
            description: Save Embedding
            name: queryEmbedding
            simple: ${body.toString()}
        - to:
            description: Vector Search
            uri: >
              sql:SELECT content, source,
              1 - (embedding <=> :#queryEmbedding::vector) as similarity
              FROM chunks
              WHERE 1 - (embedding <=> :#queryEmbedding::vector) > {{rag.similarity.threshold}}
              ORDER BY embedding <=> :#queryEmbedding::vector
              LIMIT {{rag.topK}}

Two settings are important during the initial configuration:

- threshold (0.6): This setting prevents the system from adding weakly related chunks to the prompt.

- topK (5): This parameter limits the amount of context provided to the model.
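To see how the two settings interact, the sketch below mirrors the SQL logic in memory: convert cosine distance to similarity, drop anything at or below the threshold, then keep the best topK rows. The function name select_chunks and the sample distances are invented for illustration.

```python
def select_chunks(rows, threshold=0.6, top_k=5):
    """rows: list of (content, cosine_distance) pairs, as a query might return."""
    # similarity = 1 - distance, the same conversion used in the SQL query
    scored = [(content, 1.0 - distance) for content, distance in rows]
    # Keep only rows strictly above the threshold (mirrors the WHERE clause).
    kept = [(content, score) for content, score in scored if score > threshold]
    # Highest similarity first, then cap the result (mirrors ORDER BY and LIMIT).
    kept.sort(key=lambda pair: pair[1], reverse=True)
    return kept[:top_k]

# Invented distances: two close chunks and one weak match.
rows = [("refund window", 0.10), ("shipping times", 0.55), ("return label", 0.25)]
selected = select_chunks(rows, threshold=0.6, top_k=2)
print([content for content, _ in selected])
```

With these numbers the weak match (similarity 0.45) is filtered out by the threshold before topK is ever applied, which is why an over-strict threshold can leave you with no context at all.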

Step 3: Answer (or refuse)

After the system retrieves the rows, it passes them into the model prompt as context and instructs the model to answer using only that information. This grounds the model in the retrieved data and helps prevent it from improvising.

You must decide what happens if the retrieval step returns no results. For internal knowledge bases, forcing an answer is a recipe for hallucinations. A response such as, "I do not have enough information in the provided documents to answer that," is useful because it identifies where to improve your corpus, chunking strategy, or threshold limits.
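The branching itself is small. Here is a minimal sketch in Python, assuming each retrieved row is a dict with content and source keys; the function name build_request and the returned structure are hypothetical and only illustrate the decision, not any specific client library.

```python
REFUSAL = "I do not have enough information in the provided documents to answer that."

def build_request(question: str, rows: list[dict]) -> dict:
    """Return either a canned refusal or the messages to send to a chat model."""
    if not rows:
        # No retrieval hits: refuse instead of letting the model improvise.
        return {"answer": REFUSAL, "messages": None}
    # Prefix each chunk with its source so the model can cite it.
    context = "\n\n".join(f"[{row['source']}] {row['content']}" for row in rows)
    system = (
        "Answer the question using ONLY the context below.\n\n"
        f"Context:\n{context}"
    )
    return {
        "answer": None,
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": question},
        ],
    }

# Invented row, shaped like the SELECT output (content, source).
hit = build_request(
    "What is the return policy?",
    [{"source": "returns.md", "content": "Items can be returned."}],
)
miss = build_request("What is the return policy?", [])
print(miss["answer"])
```

Keeping the refusal outside the model call means the "no results" path costs nothing and always produces the same wording.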

- setVariable:
    description: Save Results
    name: searchResults
    simple: ${body}
- log:
    description: Log Results Count
    message: Found ${body.size()} relevant chunks
- choice:
    description: Check Results
    otherwise:
      steps:
        - setVariable:
            description: Prepare Context
            name: context
            simple: ${variable.searchResults}
        - setBody:
            description: Set User Prompt
            simple: ${variable.question}
        - setHeader:
            description: System Instructions
            name: CamelOpenAISystemMessage
            simple: >
              Answer the question using ONLY the context below.
              If the context doesn't contain enough information, say "I
              don't have complete information on that."
              Be concise and cite the source when relevant.
              Context:
              ${variable.context}
        - to:
            description: Generate Answer
            uri: openai:chat-completion

Now you are ready to ask questions:

echo "What is the return policy?" | camel run document-qa.camel.yaml application.properties

Will the model always stay perfectly inside the lines? Not always. This design makes the failure mode visible by allowing you to log retrieved rows and verify the context provided to the model, as illustrated in Figure 2.

Beyond document Q&A: Reusing the pattern

The beauty of storing embeddings in PostgreSQL and treating similarity as a SQL query is that you aren't limited to building Q&A chatbots. Once the core foundation of indexing and retrieval is in place, you can adapt the final answer phase to solve various engineering challenges.

Because you are using standard SQL, you can easily join your vector similarity searches with your existing business logic.

Semantic product search

Standard keyword searches often fail if users are unfamiliar with your exact terminology. By embedding your product catalog, you can map fuzzy user inputs ("a large screen for design work") to the closest items in vector space. From there, you have options: you can return the database rows directly to the UI for a fast, deterministic search experience, or you can pass the retrieved rows to an LLM to generate a conversational summary of their options.

Automated ticket deduplication

You don't always need an LLM at the end of a RAG pipeline; sometimes, you can skip the "answer" step entirely. When a new support ticket is submitted, embed the text and run a similarity query against your historical, closed tickets. If the similarity score crosses a high threshold, you can automatically link the new ticket as a duplicate or route it to the exact engineer who solved the previous issue.
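As a rough sketch of that decision, the code below compares a new ticket's embedding with embeddings of closed tickets and returns the best match only if it clears a threshold. The 0.9 cutoff, the ticket IDs, and the two-dimensional vectors are illustrative assumptions; in the setup described in this article, the comparison would be a SQL query rather than a Python loop.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def find_duplicate(new_vec, closed_tickets, threshold=0.9):
    """closed_tickets maps ticket ID -> embedding. Returns the best ID or None."""
    best_id, best_score = None, threshold
    for ticket_id, vec in closed_tickets.items():
        score = cosine_similarity(new_vec, vec)
        # Only accept matches at or above the threshold, preferring the highest.
        if score >= best_score:
            best_id, best_score = ticket_id, score
    return best_id

# Toy embeddings for two closed tickets.
closed = {"T-1": [1.0, 0.0], "T-2": [0.0, 1.0]}
near_duplicate = find_duplicate([0.99, 0.05], closed)
unrelated = find_duplicate([1.0, 1.0], closed)
print(near_duplicate, unrelated)
```

Returning None for borderline cases keeps the automation conservative: a missed duplicate is usually cheaper than a wrongly merged ticket.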

By treating vectors as standard database rows, you can transform AI features into common backend engineering tasks.

Limitations

This baseline works well, but there are practical limits you should anticipate as you scale:

- Chunking strategies significantly affect retrieval quality. If a chunk boundary separates a rule from its exception, the system might retrieve text that appears relevant but leads to an incorrect answer. This is a data-shaping problem more than a model problem.

- Similarity is not correctness. Nearest neighbor means "close in embedding space," not "true," "complete," or "up to date." In practice you often combine vectors with metadata filters (source, version, access control) and keyword search for exact terms.

- threshold and topK tuning is unavoidable. Too low and you inject noise. Too high and you refuse too often. You adjust based on real queries and real failure cases.

- Cost and latency can add up as the system scales. Many RAG flows are two model calls per request (embeddings plus chat completion). At scale, caching and batching become important.
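On the cost point, repeated questions are a cheap place to start. The sketch below caches question embeddings with functools.lru_cache after light normalization. The fake_embed function is a stand-in that only counts calls; a real system would call its embedding model there, and the cache size is an arbitrary assumption.

```python
from functools import lru_cache

calls = {"count": 0}

def fake_embed(text: str) -> tuple[float, ...]:
    """Stand-in for a real embedding call; counts how often it is invoked."""
    calls["count"] += 1
    return (float(len(text)), float(text.count(" ")))

@lru_cache(maxsize=1024)
def cached_embed(normalized_text: str) -> tuple[float, ...]:
    # Tuples are immutable, so cached results cannot be modified by callers.
    return fake_embed(normalized_text)

def embed_question(question: str) -> tuple[float, ...]:
    # Normalize so trivially different spellings share one cache entry.
    return cached_embed(question.strip().lower())

first = embed_question("What is the return policy?")
second = embed_question("  what is the return policy?  ")
print(calls["count"])
```

Both calls resolve to the same cache key, so the stand-in embedding function runs only once.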

While a more advanced stack (like hybrid keyword + vector search + reranking) can outperform this baseline on relevance, the trade-off is complexity. Start simple, and only add components when you have a concrete metric that demands it.

Takeaway

The primary advantage of this "boring RAG" approach is that it transforms a complex system into a standard software engineering task. By treating semantic search as a SQL query and keeping an air gap between your AI and your database, you ensure that every failure mode (for example, a bad retrieval, wrong context, or a bad answer) can be isolated and debugged.

Start simple. Once your core pipeline is running smoothly, you can confidently introduce complexity like advanced chunking or hybrid search exactly where the metrics tell you to.

Next steps

You can find fully runnable Apache Camel routes for document Q&A, product similarity, and ticket deduplication in the companion GitHub repository.