This article provides an overview of how to secure DNS traffic in Red Hat OpenShift Container Platform when forwarding requests to upstream resolvers in Identity Management (IdM) using DNS over TLS (DoT). It closes with some limitations that still need to be addressed.

With the recent release of Red Hat Enterprise Linux (RHEL) 10, Red Hat has complied with the U.S. government's memorandum M-22-09, which mandates that internal networks must not be inherently trusted, given the prevalence of hybrid environments and mixed workloads. This concept, known as zero trust architecture, requires that all traffic within every environment be authenticated, authorized, and encrypted.

Plenty of protocols build encryption into their standards, such as HTTPS and LDAP with TLS. They are used worldwide, but all of them depend on the same underlying protocol, DNS, and unencrypted DNS remains common practice among companies. RHEL 10 (RHEL 9.6 is supported as well) is the first release in the market that provides encrypted DNS (eDNS), using DNS over TLS (DoT) not only at runtime but also at the earliest stages of booting and installation, including support for custom CA certificates.

Additionally, with the advent of RHEL 10, a task force has been created to implement DNS over TLS (DoT) on the integrated (and optional) DNS provided by Identity Management (IdM). Note that DoT in IdM is currently considered a Technology Preview and is not ready for production use.

Environment

Our environment consists of an IdM server on RHEL 10 and an OpenShift Container Platform cluster at version 4.14. The DoT feature for OpenShift Container Platform has been available since version 4.11, so it can also be used with any version newer than 4.14.

First, we will install the IdM server on our RHEL 10 server, with hostname idm.melmac.univ, together with the packages needed to enable DoT:

root@idm:~# dnf install ipa-server ipa-server-encrypted-dns ipa-server-dns

Next, we will proceed with the installation. IdM DoT offers two policies: relaxed (the default), which allows fallback to unencrypted DNS if DoT is unavailable, and enforced, which strictly requires DoT for all DNS communications and rejects any unencrypted requests. Our environment covers the use case of a new IdM installation, but existing installations can be converted to eDNS as well. We will perform the installation using the enforced policy:

root@idm:~# ipa-server-install --setup-dns --dns-over-tls --dns-policy enforced --no-dnssec-validation --dot-forwarder=8.8.8.8#dns.google.com

For our example, we are adding a public DNS forwarder, but you can set any forwarder that is available inside your environment, and it is useful to specify more than one for redundancy. Accept all the default options, then open the firewall, taking into account the eDNS port 853/TCP provided via the dns-over-tls service (in enforced mode you don’t need to add the standard DNS port 53/UDP):

root@idm:~# firewall-cmd --add-service freeipa-ldap
root@idm:~# firewall-cmd --add-service freeipa-ldaps
root@idm:~# firewall-cmd --add-service dns-over-tls
root@idm:~# firewall-cmd --runtime-to-permanent

The installation options we have chosen ensure that a certificate is automatically provided to secure DNS. This certificate is signed by the IdM Certificate Authority (CA), Dogtag, and is stored on our IdM server at /etc/pki/tls/certs/bind_dot.crt; for completeness, the generated key is at /etc/pki/tls/private/bind_dot.key. You can also provide a custom certificate signed by an external CA at installation time.

If we check our certificate (automatically generated) we can see the following relevant fields:

root@idm:~# openssl x509 -text -in /etc/pki/tls/certs/bind_dot.crt -noout
[...]
        Issuer: O=MELMAC.UNIV, CN=Certificate Authority
        Validity
            Not Before: Jul 21 21:30:36 2025 GMT
            Not After : Jul 22 21:30:36 2027 GMT
        Subject: O=MELMAC.UNIV, CN=idm.melmac.univ
[...]
            X509v3 Subject Alternative Name:
                othername: UPN::DNS/idm.melmac.univ@MELMAC.UNIV, othername: 1.3.6.1.5.2.2::<unsupported>, DNS:idm.melmac.univ

With this, we have our IdM server completely set up and ready to serve secure encrypted DNS (eDNS).
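TLS clients check the server name they expect against the names in this certificate, so it can be handy to confirm that the host name appears as a DNS subject alternative name. The following is a minimal sketch, not an official procedure: san_has_dns is a hypothetical helper name introduced here for illustration, and it only inspects the text that openssl x509 -text prints.

```shell
# Hypothetical helper (not an OpenSSL command): succeeds when the certificate
# text read from stdin lists the given host as a DNS subject alternative name.
san_has_dns() {
    # Split the comma-separated SAN entries, trim leading blanks,
    # then look for an exact "DNS:<host>" entry.
    tr ',' '\n' | sed 's/^[[:space:]]*//' | grep -Fxq "DNS:$1"
}

# Usage on the IdM server:
#   openssl x509 -text -noout -in /etc/pki/tls/certs/bind_dot.crt \
#     | san_has_dns idm.melmac.univ && echo "SAN matches"
```

The helper returns a non-zero status when the name is missing, so it can be used in a script before the certificate is distributed to clients.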

Using CoreDNS operator to forward secure queries

Now let’s look at the other side of the equation: the DNS Operator on OpenShift Container Platform. CoreDNS supports queries that are encrypted with TLS. To use this, we need to add the certificate generated by IdM to a ConfigMap object. As we have seen, this certificate is on the IdM server at /etc/pki/tls/certs/bind_dot.crt, but it can also be obtained by querying the IdM server directly. For example, from our OpenShift Container Platform cluster (or any client that can reach IdM) we can execute:

[azure@bastion-cm66f ~]$ openssl s_client -connect idm.melmac.univ:853 </dev/null 2>/dev/null | openssl x509 > cert.pem

The stored certificate will be the same as the one seen previously.
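With the certificate saved in cert.pem, one way to sidestep copy-and-paste indentation mistakes is to let oc build the ConfigMap from the file. The sketch below assumes the ConfigMap name dns-ca, the key ca-bundle.crt, and the namespace openshift-config used in this article; is_single_pem_cert and create_dns_ca_configmap are hypothetical helper names, and the sanity check is only a rough guard, not a full certificate validation.

```shell
# Hypothetical helper: succeeds when the file holds exactly one PEM certificate block.
is_single_pem_cert() {
    [ "$(grep -c -- '-----BEGIN CERTIFICATE-----' "$1")" = "1" ] &&
    [ "$(grep -c -- '-----END CERTIFICATE-----' "$1")" = "1" ]
}

# Hypothetical helper: create the dns-ca ConfigMap from a PEM file.
create_dns_ca_configmap() {
    is_single_pem_cert "$1" || {
        echo "not a single PEM certificate: $1" >&2
        return 1
    }
    oc create configmap dns-ca -n openshift-config --from-file=ca-bundle.crt="$1"
}

# Usage:
#   create_dns_ca_configmap cert.pem
```

The resulting object should be equivalent to a hand-written ConfigMap, since --from-file stores the file content under the given key.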

The ConfigMap resource in the OpenShift Container Platform cluster should look like this (be careful with the indentation when copying the certificate content; if it is incorrect, creating the resource will fail):

apiVersion: v1
kind: ConfigMap
metadata:
  name: dns-ca
  namespace: openshift-config
data:
  # This is the CA certificate for DNS over TLS
  # Get it from port 853 of the DNS server
  ca-bundle.crt: |
    -----BEGIN CERTIFICATE-----
    <Content_of_the_certificate>
    -----END CERTIFICATE-----

Next, we must create an object of type DNS and provide our environment-specific data in the YAML code. For my environment, the object is the following:

apiVersion: operator.openshift.io/v1
kind: DNS
metadata:
  name: default
spec:
  servers:
  - name: melmac-dns
    zones:
    - melmac.univ
    forwardPlugin:
      transportConfig:
        transport: TLS
        tls:
          caBundle:
            name: dns-ca
          serverName: idm.melmac.univ
      policy: Random
      upstreams:
      - 10.249.100.11:853
  cache:
    negativeTTL: 0s
    positiveTTL: 0s
  logLevel: Normal
  operatorLogLevel: Normal
  upstreamResolvers:
    policy: Sequential
    protocolStrategy: ""
    upstreams:
    - port: 53
      type: SystemResolvConf

With the previous YAML code, we have configured CoreDNS to forward queries for the zone melmac.univ to the upstream server at 10.249.100.11:853, using the CA bundle dns-ca created in the previous step (if you have any replicas in your IdM environment, you can add all of them here). This configuration uses DoT only for the IdM zone melmac.univ, not for external zones, but it’s possible to change that. Note that the field serverName: idm.melmac.univ corresponds to the name in the certificate generated when installing DoT in IdM (the CN, which also appears as a DNS subject alternative name), as seen previously:

Subject: O=MELMAC.UNIV, CN=idm.melmac.univ

With that, all the resources are in place to test this feature.
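Before testing, it may help to confirm that the DNS Operator actually rendered the TLS upstream into the CoreDNS configuration. The sketch below rests on two assumptions that are not stated in this article: that the operator stores the rendered Corefile in the dns-default ConfigMap of the openshift-dns namespace, and that TLS upstreams appear there with the tls:// prefix used by the CoreDNS forward plugin. tls_upstreams is a hypothetical helper name.

```shell
# Hypothetical helper: list the DoT upstreams found in a Corefile read from stdin.
# The CoreDNS forward plugin marks TLS upstreams with a tls:// prefix.
tls_upstreams() {
    grep -o 'tls://[^[:space:]]*'
}

# Usage against a live cluster (assumes the rendered Corefile lives in the
# dns-default ConfigMap):
#   oc get configmap/dns-default -n openshift-dns -o jsonpath='{.data.Corefile}' | tls_upstreams
```

If the command prints nothing, the forwarder for the zone is probably still using plain DNS, and the DNS object is worth reviewing.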

Debugging tips

Before running the tests, here are some tips on how to troubleshoot and debug eDNS resolution, so that we are prepared in case an issue arises.

To confirm that it’s working as expected and that there are no errors, you can review the logs of the dns-default pods in the openshift-dns namespace (note that these logs only report errors).

To illustrate what an issue looks like, the following example shows unsuccessful queries:

[azure@bastion-cm66f ~]$ oc get pods -n openshift-dns
NAME                READY   STATUS    RESTARTS   AGE
dns-default-2l9vr   2/2     Running   0          27h
dns-default-bzmh6   2/2     Running   0          27h
dns-default-dkgdz   2/2     Running   0          27h
dns-default-m5wfx   2/2     Running   0          27h
dns-default-r2g76   2/2     Running   0          27h
dns-default-wl5sh   2/2     Running   0          27h
[...]
[azure@bastion-cm66f ~]$ oc logs -n openshift-dns dns-default-m5wfx
Defaulted container "dns" out of: dns, kube-rbac-proxy
.:5353
hostname.bind.:5353
melmac.univ.:5353
linux/amd64, go1.20.12 X:strictfipsruntime,
[INFO] 10.129.2.78:47819 - 27907 "AAAA IN idm.melmac.univ. udp 33 false 512" - - 0 6.910328086s
[ERROR] plugin/errors: 2 idm.melmac.univ. AAAA: dial tcp 10.249.100.11:853: i/o timeout
[INFO] 10.129.2.78:42770 - 62480 "A IN idm.melmac.univ. udp 33 false 512" - - 0 0.001761012s
[ERROR] plugin/errors: 2 idm.melmac.univ. A: dial tcp 10.249.100.11:853: connect: no route to host
[INFO] 10.129.2.78:47819 - 37127 "A IN idm.melmac.univ. udp 33 false 512" - - 0 9.087127558s
[ERROR] plugin/errors: 2 idm.melmac.univ. A: dial tcp 10.249.100.11:853: i/o timeout

Our example shows a CoreDNS log with connectivity issues when reaching the DoT server (named) on IdM. Checking this log is the first step in finding out whether the configuration is working properly. After that, we can check whether the DNS queries inside our Pod network are resolved successfully when using DoT.
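Since there is one dns-default pod per node, checking them one by one gets tedious. The following is a minimal sketch that scans every pod and keeps only the error lines that mention the DoT port; dot_errors and scan_dns_pods are hypothetical helper names, and the filter simply matches the log format shown above rather than any documented CoreDNS contract.

```shell
# Hypothetical helper: keep only CoreDNS error lines that involve the DoT port 853.
dot_errors() {
    grep -F '[ERROR]' | grep -F ':853'
}

# Hypothetical helper: run the filter over the "dns" container of every
# dns-default pod in the openshift-dns namespace.
scan_dns_pods() {
    for pod in $(oc get pods -n openshift-dns -o name | grep dns-default); do
        echo "== $pod"
        oc logs -n openshift-dns "$pod" -c dns | dot_errors
    done
}

# Usage:
#   scan_dns_pods
```

A pod that prints only its header line has logged no DoT connection errors.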

Testing eDNS

Now, it’s time to test it! For that, we will need to make some queries inside a Pod deployed in our OpenShift Container Platform cluster. Let’s create a Pod with the image support-tools that is based on RHEL 10:

apiVersion: apps/v1
kind: Deployment
metadata:
  name: query-tools
  labels:
    app: query-tools
spec:
  replicas: 1
  selector:
    matchLabels:
      app: query-tools
  template:
    metadata:
      labels:
        app: query-tools
    spec:
      containers:
      - name: query-tools
        image: registry.redhat.io/rhel10/support-tools
        command:
        - bash
        - -c
        args:
        - sleep infinity

I like to test inside RHEL 10 images because there the dig utility has the +tls flag to query secured nameservers directly, but you can use any other image based on RHEL 10 instead of support-tools. First, we will connect to the container in the Pod:

[azure@bastion-cm66f ~]$ oc exec -it query-tools-6cc56b8bb6-qbxwr -- shNext, we will install the bind-utils package:

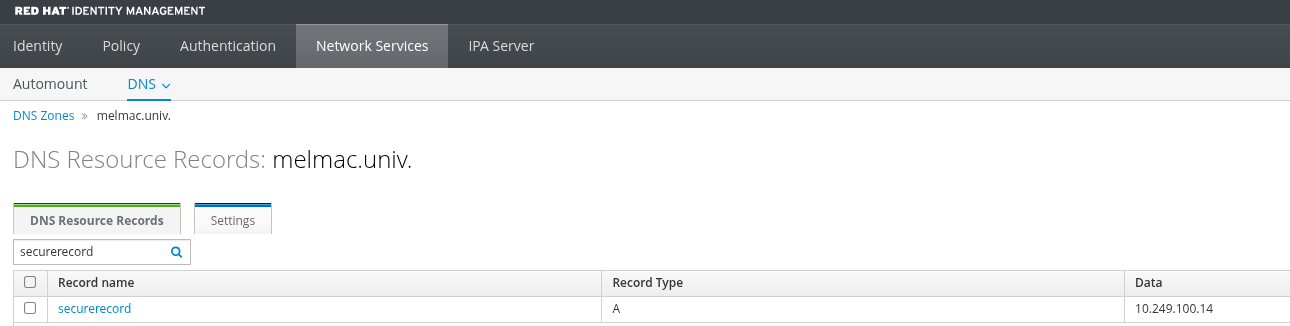

sh-5.2# dnf install bind-utilsNow, let’s suppose we have the following registry in our IdM DNS zone melmac.univ (Figure 1):

If we query this resource record directly inside our Pod (remember that we use the enforced policy, so the communication with IdM cannot fall back to unencrypted DNS), we can see that the record is successfully resolved:

sh-5.2# dig securerecord.melmac.univ

[...]

;; ANSWER SECTION:

securerecord.melmac.univ. 900 IN A 10.249.100.14

;; Query time: 53 msec

;; SERVER: 172.30.0.10#53(172.30.0.10) (UDP)

;; WHEN: Tue Jul 22 13:56:12 UTC 2025

;; MSG SIZE rcvd: 105

sh-5.2# dig securerecord.melmac.univ @10.249.100.11 +tls

[...]

;; ANSWER SECTION:

securerecord.melmac.univ. 86400 IN A 10.249.100.14

;; Query time: 7 msec

;; SERVER: 10.249.100.11#853(10.249.100.11) (TLS)

;; WHEN: Tue Jul 22 13:59:31 UTC 2025

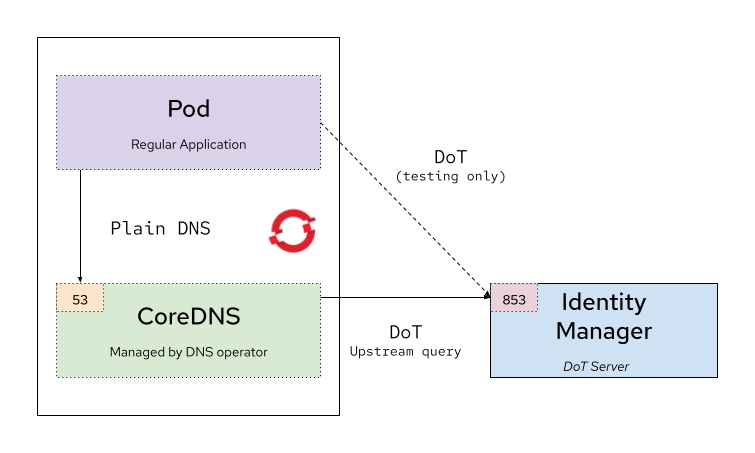

;; MSG SIZE rcvd: 97Let’s explain these tests. The flow of the communication of the first query is the following: inside a Pod we execute a dig query without specifying the IdM nameserver, it goes to the CoreDNS port 53, this acts as a proxy cache and redirects the query using DoT to the upstream server (IdM) defined for this zone, that answers successfully. In the second test we are querying directly to the nameserver (IdM) via secure DoT over port 853/TCP, which also is returning a successful result. These tests are illustrated in Figure 2.

With that we have checked that the DNS queries to upstream servers configured via CoreDNS are effectively encrypted.
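When scripting these checks, the transport that dig reports on its ";; SERVER:" line is a quick signal of which hop was tested. The sketch below extracts it; dig_transport is a hypothetical helper name, and the pattern assumes the output format shown above. Keep in mind that the first query reports UDP because dig only sees the hop from the Pod to CoreDNS, not the forwarded hop from CoreDNS to IdM.

```shell
# Hypothetical helper: print the transport (for example UDP, TCP or TLS) that
# dig reports in parentheses at the end of its ";; SERVER:" line.
dig_transport() {
    sed -n 's/^;; SERVER: .*(\([A-Za-z]*\))$/\1/p'
}

# Usage inside the Pod:
#   dig securerecord.melmac.univ @10.249.100.11 +tls | dig_transport
```

Comparing the printed value with the expected transport makes it easy to fail a test script when a direct query to IdM is not using TLS.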

Caveats and limitations

Unfortunately, some work still needs to be done on the OpenShift Container Platform side. We have identified that the container runtime, CRI-O, which runs on the worker nodes and which the kubelet instructs to pull images, is not affected by the CoreDNS configuration; the worker nodes use the local resolver defined in /etc/resolv.conf. As a result, everything works fine for external registries that host our container images (such as the public quay.io), but if an internal registry is added to the IdM DNS zone secured with DoT, its name will not resolve. You can check any resolution provided by IdM directly on the worker nodes using:

[azure@bastion-cm66f ~]$ oc debug node/aro-cluster-cm66f-zltmf-worker-eastus2-czpjc
Starting pod/aro-cluster-cm66f-zltmf-worker-eastus2-czpjc-debug-4gtjc ...
To use host binaries, run `chroot /host`
Pod IP: 10.0.2.6
If you don't see a command prompt, try pressing enter.
sh-4.4#
sh-4.4# chroot /host
sh-5.1# nslookup idm.melmac.univ
Server: 10.0.2.6
Address: 10.0.2.6#53

** server can't find idm.melmac.univ: NXDOMAIN

As you can see in the previous output, the node is not able to resolve the IdM record and, as a consequence, it cannot resolve any other record in the IdM DNS zone, such as that of a container registry. This is a limitation that still needs to be addressed.
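To see which resolvers a node relies on, you can read its /etc/resolv.conf and compare the result with the CoreDNS setup. The following is a minimal sketch: resolv_nameservers is a hypothetical helper name, and <node-name> in the usage line is a placeholder for one of your worker nodes.

```shell
# Hypothetical helper: print the nameserver addresses found in resolv.conf
# text read from stdin, one per line.
resolv_nameservers() {
    awk '$1 == "nameserver" { print $2 }'
}

# Usage (replace <node-name> with a worker node of your cluster):
#   oc debug node/<node-name> -- chroot /host cat /etc/resolv.conf | resolv_nameservers
```

If none of the listed resolvers can answer for the IdM zone, image pulls from a registry named in that zone will fail on that node, as described above.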

Wrap up

This article demonstrated how to use the IdM DoT feature to provide a secured and encrypted upstream DNS server to OpenShift Container Platform. This is an effective way to move toward a zero trust architecture and improve the security of communications in your environments.

Because IdM is included in your RHEL subscription, you can try to replicate this content in your lab environment without any additional subscriptions. To set up the OpenShift Container Platform, you will need an OpenShift subscription. If you are not already a RHEL subscriber, get a no-cost trial from Red Hat.