In this article, I will cover how we can deploy the same Angular application image but use a different configuration for each environment. Before we start, however, let's review what we mean when we talk about "continuous delivery."

What is continuous delivery?

According to ContinuousDelivery.com:

Continuous delivery is the ability to put changes of all types—including new features, configurations, bug fixes, and experiments—into production, or into the hands of users, safely and quickly in a sustainable way.

Our goal is to make deployments—whether of a large-scale distributed system, a complex production environment, an embedded system, or an app—predictable, routine affairs that can be performed on demand.

We achieve all of this by ensuring that our code is always in a deployable state, even in the face of teams of thousands of developers making changes on a daily basis. We thus completely eliminate the integration, testing, and hardening phases that traditionally followed "dev complete," as well as code freezes.

How to handle Angular environments

Angular applications typically have a configuration containing settings such as:

- URLs of the APIs

- App configuration based on the environment

- Logging settings

The Angular CLI supports application environments, which are applied at build time.

If you look at the angular.json file, you can see how the app is built and how each environment is set up:

```json
"configurations": {
  "production": {
    "fileReplacements": [
      {
        "replace": "src/environments/environment.ts",
        "with": "src/environments/environment.prod.ts"
      }
    ]
  }
}
```

The fileReplacements section lets you swap in a different file for each environment at build time.

From this configuration we can deduce that when we run ng build --configuration=production, the src/environments/environment.ts file is replaced with src/environments/environment.prod.ts. As a result, any code that imports environments/environment.ts gets the values for the environment it was built for.
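
To make the build-time mechanism concrete, here is a small TypeScript sketch that mimics what fileReplacements does: under a given configuration, a source path listed under replace resolves to its with counterpart. The FileReplacement type and the resolveSourceFile function are hypothetical illustrations, not part of the Angular CLI API.

```typescript
// Hypothetical model of one "fileReplacements" entry from angular.json.
interface FileReplacement {
  replace: string;
  with: string;
}

// Hypothetical per-configuration rules, mirroring the angular.json excerpt.
const configurations: Record<string, FileReplacement[]> = {
  production: [
    {
      replace: "src/environments/environment.ts",
      with: "src/environments/environment.prod.ts",
    },
  ],
};

// Returns the file the build would compile in place of `path`
// for the given configuration, or `path` itself if no rule applies.
function resolveSourceFile(path: string, configuration?: string): string {
  const rules = configuration ? configurations[configuration] ?? [] : [];
  const match = rules.find((rule) => rule.replace === path);
  return match ? match.with : path;
}

console.log(resolveSourceFile("src/environments/environment.ts", "production"));
// src/environments/environment.prod.ts
console.log(resolveSourceFile("src/environments/environment.ts"));
// src/environments/environment.ts
```

Because the swap happens at compile time, each bundle only ever contains one set of values, which is why every environment ends up needing its own build.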

The Angular CLI performs the swap for you, so a component simply imports the base environment file:

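```typescript
import { Component } from '@angular/core';
// Always import the base file; the CLI swaps in the environment-specific version at build time.
import { environment } from '../environments/environment';

@Component({
  selector: 'app-root',
  templateUrl: './app.component.html',
  styleUrls: ['./app.component.css']
})
export class AppComponent {
  title = 'app works!';

  constructor() {
    // Assumes the environment files define a `production` flag (as older CLI templates did);
    // substitute any property you add to your environment files.
    console.log(environment.production);
  }
}
```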

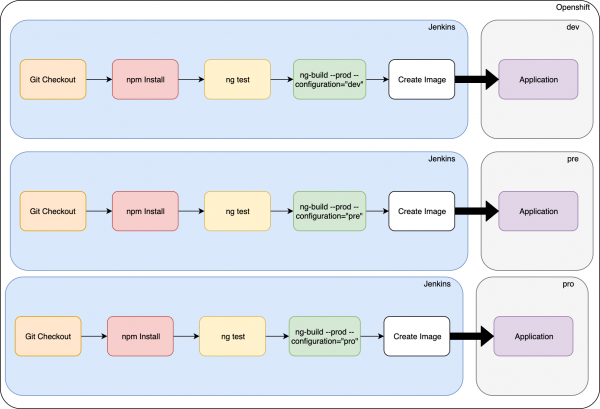

But this setup does not suit continuous delivery, because it produces a separate image for each environment, as shown in Figure 1.

There is another approach, however: build the image only once, load the configuration at runtime, and promote that same image from one environment to the next. Here are the steps:

Under assets/config, create a config.json file with your properties:

```json
{
  "server1": "url1",
  "server2": "url2",
  "server3": "url3"
}
```

Next, create a new provider:

```shell
ng g s providers/appConfig
```

Replace the contents of the generated app-config.service.ts with the following:

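```typescript
import { Injectable } from '@angular/core';
import { HttpClient } from '@angular/common/http';

@Injectable({
  providedIn: 'root'
})
export class AppConfigService {
  // Holds the parsed contents of assets/config/config.json once loaded.
  private config: any;

  constructor(private http: HttpClient) { }

  // Fetches the runtime configuration and returns a promise,
  // so APP_INITIALIZER can wait for it before the app bootstraps.
  // Note: toPromise() is deprecated in RxJS 7, where firstValueFrom() replaces it.
  public loadConfig() {
    return this.http.get('./assets/config/config.json')
      .toPromise()
      .then((config: any) => {
        this.config = config;
        console.log(this.config);
      })
      .catch((err: any) => {
        // The error is only logged, so the app still starts (with an undefined config).
        console.error(err);
      });
  }

  getConfig() {
    return this.config;
  }
}
```

Next, modify app.module.ts as follows: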

```typescript
import { BrowserModule } from '@angular/platform-browser';
import { NgModule, APP_INITIALIZER } from '@angular/core';
import { HttpClientModule } from '@angular/common/http';
import { AppComponent } from './app.component';
import { AppConfigService } from './providers/app-config.service';

// Factory for APP_INITIALIZER: returns a function that Angular calls,
// and waits on, before bootstrapping the application.
export function initConfig(appConfig: AppConfigService) {
  return () => appConfig.loadConfig();
}

@NgModule({
  declarations: [
    AppComponent
  ],
  imports: [
    BrowserModule,
    HttpClientModule
  ],
  providers: [{
    provide: APP_INITIALIZER,
    useFactory: initConfig,
    deps: [AppConfigService],
    multi: true
  }],
  bootstrap: [AppComponent]
})
export class AppModule { }
```

Here, we register an APP_INITIALIZER provider. Angular runs initializer functions before bootstrapping the app and waits for any promise they return, so the configuration is loaded before the first component is created. The initConfig factory receives the AppConfigService instance (listed in deps) and returns a function that calls appConfig.loadConfig(). To use the configuration from a component, inject the service and read the config:

```typescript
import { Component } from '@angular/core';
import { AppConfigService } from './providers/app-config.service';

@Component({
  selector: 'app-root',
  templateUrl: './app.component.html',
  styleUrls: ['./app.component.css']
})
export class AppComponent {
  title = 'app';

  constructor(private config: AppConfigService) {
    // loadConfig() has already resolved via APP_INITIALIZER by the time this runs.
    console.log(this.config.getConfig());
  }
}
```

How to apply the configuration in Red Hat OpenShift

To use our environment-specific configuration, we just need to create a ConfigMap from config.json and then mount it as a volume. (We assume that the app is already deployed and uses nginx as its base image.)

```shell
oc create configmap config --from-file=<configMapLocation>/config.json
```
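
OpenShift does not check the contents of config.json against what the app expects, so a typo in one environment's ConfigMap only shows up at runtime. One option is to type the configuration and validate it when it is loaded. The AppConfig interface, isAppConfig guard, and parseAppConfig helper below are a hypothetical sketch based on the sample server1/server2/server3 properties; the AppConfigService shown earlier types the config as any and performs no such check.

```typescript
// Hypothetical shape matching the sample assets/config/config.json.
interface AppConfig {
  server1: string;
  server2: string;
  server3: string;
}

const REQUIRED_KEYS: ReadonlyArray<keyof AppConfig> = ["server1", "server2", "server3"];

// Type guard: true only if every required key is present and holds a string.
function isAppConfig(value: unknown): value is AppConfig {
  if (typeof value !== "object" || value === null) {
    return false;
  }
  const record = value as Record<string, unknown>;
  return REQUIRED_KEYS.every((key) => typeof record[key] === "string");
}

// Parses raw file contents and throws a descriptive error if the shape is wrong.
function parseAppConfig(raw: string): AppConfig {
  const parsed: unknown = JSON.parse(raw);
  if (!isAppConfig(parsed)) {
    throw new Error(`Invalid config: expected string values for ${REQUIRED_KEYS.join(", ")}`);
  }
  return parsed;
}

const config = parseAppConfig('{"server1": "url1", "server2": "url2", "server3": "url3"}');
console.log(config.server2); // url2
```

In the service, a check like isAppConfig could run inside loadConfig and reject the promise when it fails, so a misconfigured environment fails fast instead of starting with a partial configuration.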

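Now, set the volume in the deployment config. Because the ConfigMap is mounted over the entire assets/config directory, its config.json takes the place of the one built into the image: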

```shell
oc set volume dc/angular --add --type=configmap --configmap-name=config --mount-path=/opt/app-root/src/assets/config --overwrite
```
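
To see why the app picks up the mounted file, it helps to trace the paths. With --from-file, the ConfigMap key defaults to the file's base name (config.json), and mounting the ConfigMap as a volume exposes each key as a file under the mount path. The TypeScript sketch below models that mapping; configMapKeyFor and projectedFiles are hypothetical helpers that approximate, rather than reproduce, Kubernetes behavior, and the local path openshift/dev/config.json is an assumed example.

```typescript
// Approximates how `oc create configmap --from-file=<path>` names the key:
// by default, the key is the file's base name.
function configMapKeyFor(filePath: string): string {
  return filePath.split("/").pop() ?? filePath;
}

// Approximates a ConfigMap volume: each key becomes a file under the mount path.
function projectedFiles(data: Record<string, string>, mountPath: string): string[] {
  const base = mountPath.replace(/\/+$/, ""); // drop any trailing slashes
  return Object.keys(data).map((key) => `${base}/${key}`);
}

// Hypothetical local path to one environment's config file.
const key = configMapKeyFor("openshift/dev/config.json");
const data: Record<string, string> = { [key]: '{"server1": "url1"}' };
for (const file of projectedFiles(data, "/opt/app-root/src/assets/config")) {
  console.log(file); // /opt/app-root/src/assets/config/config.json
}
```

Assuming nginx serves the app from /opt/app-root/src, as the mount path implies, that file is what the browser receives at ./assets/config/config.json, the URL AppConfigService requests.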

Figure 2 shows what the final architecture looks like.

I hope you found this article useful.

Last updated: July 1, 2020