With companies generating more and more revenue through their APIs, these APIs have become even more critical. Quality and reliability are key goals for companies looking to use their APIs at large scale, and those goals are usually supported by well-crafted DevOps processes. Figures from the tech giants make us dizzy: Amazon deploys code to production every 11.7 seconds, Netflix deploys thousands of times per day, and Fidelity saved $2.3 million per year with its new release framework. So, if you have APIs, you might want to deploy them from a CI/CD pipeline.

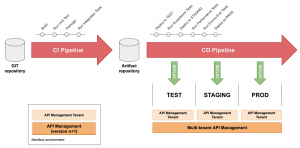

Deploying your API from a CI/CD pipeline is a key activity of the "Full API Lifecycle Management." Sitting between the "Implement" and "Secure" phases, the "Deploy" activity encompasses every process needed to bring the API from source code to the production environment. To be more specific, it covers Continuous Integration and Continuous Delivery.

An API is not "just another piece of software"

At Red Hat, we strongly believe an API is not "just another piece of software." Instead, we think an API is a software component in conjunction with:

- An interface to communicate with it.

- An ecosystem of consumers that communicate with this software.

- A relationship with developers consuming this API.

An API is not built, deployed, and managed with the usual methods alone; as a result, deploying your API from a CI/CD pipeline requires additional processes, tools, and skills.

In this article, we will focus on the overarching principles and key steps to deploy your API from a CI/CD pipeline.

Overarching principles to deploy your API from a CI/CD pipeline

1. Use a contract-first approach

Although a code-first approach does not prevent you from deploying your API from a CI/CD pipeline, using a contract-first approach makes your processes much more reliable and streamlined.

In a contract-first approach, the API contract (for REST APIs, this is an OpenAPI Specification document) is crafted well ahead of the implementation phase. It is a collaborative effort between the product owner, the architects, the developers, and the early customers. Apicurio Studio can help you craft OpenAPI Specifications collaboratively.

2. Ensure the testability of your API

To deploy your API from a CI/CD pipeline in an automated manner, you need tests. There are many kinds of tests, and covering them all would take a full book. At a minimum, you need:

- Unit tests: to test each of the smallest software components individually.

- Integration tests: to test larger groups of software components together.

- Acceptance tests: to ensure business expectations are met (as part of the acceptance test-driven development methodology).

- End-to-end tests: to ensure every software component in the chain is working as expected, in a production-like environment.

- Performance tests: to ensure the performance is not degraded by a fix or a new feature.

Unit and integration tests are well known to developers. Let's focus on how some of the other kinds of tests are used.

- Acceptance tests can be managed from a dedicated tool, such as Microcks, and triggered by your CI/CD pipeline.

- Performance tests can also be automated as explained in this blog post series: Leveraging Kubernetes and OpenShift for automated performance tests.

3. Adhere to semantic versioning

When releasing new versions of your API, it is critical to adhere to semantic versioning. It tells your CI/CD pipeline how to handle each new release: new minor versions are backward compatible, so they can be deployed "in place," whereas new major versions need to be deployed "side-by-side" to keep existing customers happy.
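As a minimal sketch of how a pipeline could apply this rule, the Python functions below compare the currently deployed version with the candidate release and pick a deployment strategy. The function names are hypothetical, and the parser only handles plain MAJOR.MINOR.PATCH strings (no pre-release or build suffixes).

```python
from typing import Tuple


def parse_semver(version: str) -> Tuple[int, int, int]:
    """Parse a plain MAJOR.MINOR.PATCH string such as "1.4.2"."""
    major, minor, patch = (int(part) for part in version.split("."))
    return major, minor, patch


def deployment_strategy(current: str, candidate: str) -> str:
    """Hypothetical helper: decide how a candidate release should be deployed.

    A new major version breaks compatibility, so it runs next to the current
    one; minor and patch releases replace the current deployment.
    """
    current_major = parse_semver(current)[0]
    candidate_major = parse_semver(candidate)[0]
    return "side-by-side" if candidate_major > current_major else "in-place"
```

For example, moving from 1.3.0 to 1.4.0 yields "in-place", while moving from 1.4.1 to 2.0.0 yields "side-by-side".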

4. Be idempotent

When managing software at scale, all tech giants will tell you: stuff happens. Servers fail, routers drop packets, hard disks lose data, and so on. One way to be resilient to such events is to be idempotent. Instead of creating a new service in your API management solution, state that this service has to be present. Instead of deleting it, state that it has to be absent. This way, your pipelines remain reliable in case of outages or transient failures.

Most operations of the new 3scale CLI have been designed to be idempotent.
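The idea can be illustrated with a small Python sketch. It does not use the 3scale CLI or any real API management API: a plain dictionary stands in (hypothetically) for the services declared in the API management solution, and `ensure_present`/`ensure_absent` are illustrative names. Running either function twice leaves the system in the same state as running it once, which is what makes retries safe.

```python
from typing import Dict

# Hypothetical stand-in for the services declared in an API management solution.
Registry = Dict[str, dict]


def ensure_present(registry: Registry, name: str, config: dict) -> str:
    """Declare that service `name` must exist with `config`; safe to re-run."""
    if registry.get(name) == config:
        return "unchanged"
    action = "updated" if name in registry else "created"
    registry[name] = dict(config)
    return action


def ensure_absent(registry: Registry, name: str) -> str:
    """Declare that service `name` must not exist; succeeds if it is already gone."""
    if registry.pop(name, None) is None:
        return "unchanged"
    return "deleted"
```

If a pipeline step is retried after a transient failure, the second call simply reports "unchanged" instead of failing or creating a duplicate.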

5. Apply the API-Management-as-Code principles

Akin to the "Infrastructure-as-Code" principle, the "API-Management-as-Code" principle says that the state of your API management solution is fully determined by the content of your Git repositories. Services are defined by their OpenAPI specification file, committed in your Git repository; application plans are defined in an artefact file, also in your Git repository; and so on for the environment settings, API documentation, etc.
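In practice, this principle often boils down to a reconciliation step: compare the desired state read from Git with the actual state of the API management solution, and derive the actions needed to converge. The Python sketch below assumes both states are available as dictionaries keyed by service name; `plan_changes` is a hypothetical helper, not part of any product.

```python
from typing import Dict, List, Tuple


def plan_changes(desired: Dict[str, dict], actual: Dict[str, dict]) -> List[Tuple[str, str]]:
    """Compute the actions that make `actual` match `desired` (the Git content)."""
    actions: List[Tuple[str, str]] = []
    for name in sorted(desired):
        if name not in actual:
            actions.append(("create", name))
        elif actual[name] != desired[name]:
            actions.append(("update", name))
    # Anything not described in Git has no reason to exist.
    for name in sorted(actual):
        if name not in desired:
            actions.append(("delete", name))
    return actions
```

When the two states already match, the plan is empty, so re-running the pipeline is harmless.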

Steps to deploy your API from a CI/CD pipeline

1. Prepare the release

Since you applied API-Management-as-Code principles, all your artefacts are versioned and stored in a Git repository. To deploy your API from a CI/CD pipeline, start by checking out the repository.

Inside your Git repository is the API contract. Read the OpenAPI specification file and extract the relevant information for your pipeline:

- The field "info.version" is useful to apply semantic versioning.

- The vendor extension fields ("x-*" fields) in the "info" object can be used to hold metadata (Business Unit in charge, target channel, state, etc.).
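A minimal Python sketch of this extraction step follows. It assumes the specification is stored as JSON (a YAML file would need a third-party parser such as PyYAML), and the vendor extension names used in the example (`x-business-unit`, `x-state`) are hypothetical.

```python
import json
from typing import Any, Dict


def read_release_metadata(spec_text: str) -> Dict[str, Any]:
    """Extract the pipeline-relevant fields from a JSON OpenAPI document."""
    spec = json.loads(spec_text)
    info = spec["info"]  # "title" and "version" are required in the info object
    return {
        "title": info["title"],
        "version": info["version"],
        # Vendor extensions are the keys of the info object starting with "x-".
        "extensions": {key: value for key, value in info.items() if key.startswith("x-")},
    }
```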

From the OpenAPI specification, generate a mock that will be exposed to your early adopters. Later, all your API consumers will use it to develop their client implementations. Tools such as Microcks can generate a mock from your OpenAPI specification file.

From this information, you can compute the API version and status.

The API is versioned according to semantic versioning: minor and patch versions are released continuously in place of the previous version, so existing consumers always use the latest version. Major versions are released side-by-side, and the previous API starts its deprecation countdown.

The API status can be computed from vendor extension fields or free-form metadata. It goes through the following successive states:

- Created: The API is a work in progress; it is present on the developer portal but only accessible to early adopters.

- Published: The API is generally available (GA), and anyone can subscribe. The subscription goes through the chosen workflow (with or without approval).

- Deprecated: The API is marked as deprecated, which is reflected in the Developer Portal. No new third parties can subscribe to this API. API gateway policies are enabled to communicate the retirement date (through headers or delays, for instance).

- Retired: The API is removed from the Admin Portal and from the Developer Portal.
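The lifecycle above can be modeled as a small state machine. In this Python sketch, the rule that the status only moves forward one state at a time is an assumption drawn from the "successive states" wording, and `transition` and `on_developer_portal` are hypothetical helper names.

```python
from enum import Enum


class ApiStatus(Enum):
    CREATED = "created"
    PUBLISHED = "published"
    DEPRECATED = "deprecated"
    RETIRED = "retired"


# Assumption: the lifecycle only moves forward, one state at a time.
NEXT_STATUS = {
    ApiStatus.CREATED: ApiStatus.PUBLISHED,
    ApiStatus.PUBLISHED: ApiStatus.DEPRECATED,
    ApiStatus.DEPRECATED: ApiStatus.RETIRED,
}


def transition(current: ApiStatus, target: ApiStatus) -> ApiStatus:
    """Validate a status change requested through the API metadata."""
    if target is current:
        return current  # re-applying the same status is a no-op (idempotent)
    if NEXT_STATUS.get(current) is not target:
        raise ValueError(f"cannot go from {current.value} to {target.value}")
    return target


def on_developer_portal(status: ApiStatus) -> bool:
    """Only retired APIs are removed from the developer portal."""
    return status is not ApiStatus.RETIRED
```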

2. Deploy the API

Based on all this information, you can now publish the API in your API management solution. This will declare a new service or update the existing one and apply the correct configuration.

If your API requires custom API gateway policies, you will have to build a container image of your API gateway, containing the custom policy. The policy code is also stored in your Git repository. Once built, you can trigger a new deployment of the API gateway container.

3. Test your API

You can now ensure business expectations are met by running acceptance tests (from the acceptance test-driven development methodology). A tool such as Microcks can help you store, manage, and run tests for your APIs. Having all your API test suites stored in one place is convenient: for each minor release, you can run the test suites of all previous releases, thus ensuring the new release is actually backward compatible with the previous ones.
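Selecting which suites to run can be sketched as follows. The sketch assumes (hypothetically) that suites are indexed by the release version they were written for, and it only considers releases of the same major version, since semantic versioning promises backward compatibility within a major version only. The suite identifiers are placeholders, not Microcks objects.

```python
from typing import Dict, List, Tuple


def parse_version(version: str) -> Tuple[int, ...]:
    return tuple(int(part) for part in version.split("."))


def suites_to_run(new_version: str, suites: Dict[str, str]) -> List[str]:
    """Return the test suites a new release must pass, oldest first.

    `suites` maps each released version to a hypothetical suite identifier.
    """
    new = parse_version(new_version)
    selected = [
        version for version in suites
        if parse_version(version)[0] == new[0] and parse_version(version) <= new
    ]
    return [suites[version] for version in sorted(selected, key=parse_version)]
```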

To deploy your API from a CI/CD pipeline, you will also have to publish application plans from the artefact files stored in your Git repository. Those staged plans are your service offering for API consumers. They hold quotas for each method, pricing rules for monetization, and the feature list. The application plans are described as YAML files, which the product owner can craft by hand or from a GUI and commit to your Git repository.

Once the application plans are published, create a new client application for the end-to-end tests. This client application holds credentials that you can use to query the deployed API. End-to-end tests make sure the whole chain (firewall, reverse proxies, API gateway, admin portal, API back end, load balancers, etc.) is working. To be meaningful, they have to exercise the newly added API methods.
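One way to find the newly added API methods is to diff the `paths` objects of the previous and current OpenAPI specifications. The Python sketch below works on already-parsed specifications (plain dictionaries) and does not follow `$ref` path items; the function names are illustrative.

```python
from typing import Dict, Set, Tuple

HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}


def operations(spec: Dict) -> Set[Tuple[str, str]]:
    """List the (METHOD, path) pairs declared in an OpenAPI `paths` object."""
    return {
        (method.upper(), path)
        for path, item in spec.get("paths", {}).items()
        for method in item
        if method in HTTP_METHODS  # skips "parameters", "$ref", "summary", ...
    }


def new_operations(previous: Dict, current: Dict) -> Set[Tuple[str, str]]:
    """Operations added by this release; end-to-end tests should cover them."""
    return operations(current) - operations(previous)
```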

4. Release your API

Your new API release has been deployed! You can now publish the API documentation on your developer portal. Take care to update the OpenAPI Specification file to match the target environment. For OpenAPI Specification 2.0, this means updating the host, basePath, and schemes fields, as well as the securityDefinitions objects, replacing the authorizationUrl and tokenUrl with their valid counterparts in the target environment.
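The OpenAPI 2.0 rewrite can be sketched in Python as below. The target environment's values are passed in explicitly; `retarget_swagger` and the `oauth_urls` mapping are hypothetical names, and the function works on a copy so the specification stored in Git is left untouched. Only `oauth2` security definitions carry `authorizationUrl` and `tokenUrl`, so other definitions (such as API keys) pass through unchanged.

```python
import copy
from typing import Dict, List


def retarget_swagger(spec: Dict, host: str, base_path: str, schemes: List[str],
                     oauth_urls: Dict[str, str]) -> Dict:
    """Return a copy of an OpenAPI 2.0 document adapted to a target environment."""
    out = copy.deepcopy(spec)  # never mutate the specification read from Git
    out["host"] = host
    out["basePath"] = base_path
    out["schemes"] = list(schemes)
    for definition in out.get("securityDefinitions", {}).values():
        if definition.get("type") != "oauth2":
            continue
        # Which URLs exist depends on the OAuth2 flow, so only replace present ones.
        for field in ("authorizationUrl", "tokenUrl"):
            if field in definition and field in oauth_urls:
                definition[field] = oauth_urls[field]
    return out
```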

The final touch to deploy your API from a CI/CD pipeline would be to notify your existing API consumers that a new minor release has been deployed. You can also send them a public release note if this is part of your processes.

Rollback

If something goes wrong during the CI/CD pipeline, you might want to roll back any modifications made so far. If you followed our idempotence and API-Management-as-Code principles, this has never been easier: just trigger a new pipeline run of the previous minor release, and the previous state of the system will be restored.
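Finding which release to re-run can itself be automated. The Python sketch below assumes, hypothetically, that each release is tagged in Git as `vMAJOR.MINOR.PATCH`; it returns the latest earlier release of the same major version, or `None` when there is none, a case the pipeline has to handle separately.

```python
from typing import List, Optional, Tuple


def parse_tag(tag: str) -> Tuple[int, ...]:
    """Parse a hypothetical release tag such as "v1.4.0"."""
    return tuple(int(part) for part in tag.lstrip("v").split("."))


def previous_release(tags: List[str], failed: str) -> Optional[str]:
    """Pick the release to re-deploy after `failed` went wrong."""
    target = parse_tag(failed)
    candidates = [
        tag for tag in tags
        if parse_tag(tag)[0] == target[0] and parse_tag(tag) < target
    ]
    return max(candidates, key=parse_tag, default=None)
```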

Environments

If your company has multiple environments (as most, if not all, of our customers do), those steps have to be repeated in each environment.

There are some subtleties though:

- The first step (release preparation) is done once for all environments.

- The API gateway container image is also built only once and then deployed identically in each environment.

- Acceptance tests are run in functional environments whereas end-to-end tests are run in production-like environments (as well as performance tests).

- API consumers are notified only in production and production-like environments.
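The subtleties above can be summarized as a plan generator. In this Python sketch, the `Environment` type, its `production_like` flag (which also covers production itself), and the step labels are hypothetical; the point is that release preparation and the gateway image build appear once, while the other steps are repeated per environment.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Environment:
    name: str
    production_like: bool  # False means a functional environment (e.g. dev or test)


def pipeline_plan(environments: List[Environment]) -> List[str]:
    """Sketch of the ordered steps for one release across several environments."""
    steps = ["prepare release", "build gateway image"]  # done once for all
    for env in environments:
        steps.append(f"{env.name}: deploy API, gateway image and plans")
        if env.production_like:
            steps.append(f"{env.name}: end-to-end and performance tests")
        else:
            steps.append(f"{env.name}: acceptance tests")
        steps.append(f"{env.name}: publish documentation")
        if env.production_like:
            steps.append(f"{env.name}: notify API consumers")
    return steps
```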

How many environments you need is entirely up to your internal processes. Some companies are fine with three environments, others need nine environments.

You can leverage the multi-tenant capabilities of the API management solution to handle multiple environments on one installation. However, a separate sandbox (usually in the development environment) is needed to test the N+1 version of the API management solution before applying the update.

Conclusion

As you can see, a lot of work is needed to deploy your API from a CI/CD pipeline! It is a good idea to choose a solution that comes with a helper CLI handling most of those operations. This way, you can focus on what matters the most: your code implementing business features.

Discover how the API management capability of Red Hat Integration can help you deploy your API from a CI/CD pipeline.

Last updated: August 6, 2019