You are either running AI agents in production now or you will be soon. And if you are anything like the platform engineers we have been talking to, you are probably already feeling the tension: your AI teams want agents with shell access, file system access, and network access. Your security team wants to know who is watching these things. Both are right.

The reality is that we are handing root-level capabilities to systems that can be tricked by a well-crafted paragraph. When a chatbot hallucinates, you get a wrong answer. When an agent hallucinates, it might rm -rf the wrong directory or POST your credentials to an external endpoint. The stakes changed. The guardrails mostly did not.

At Red Hat AI, we have been working on this problem across the entire stack, from metal to the agent covering the entire deployment lifecycle. We have also been talking to platform engineers running agent workloads on Kubernetes and collaborating with projects like OpenShell. We have found that teams succeeding with these deployments rely on a compound security approach rather than a single layer.

This article presents that framework. It is the third post in a series covering how to operationalize AI agents with Red Hat AI and the OpenClaw project. Catch up on the other parts in the series:

- Part 1: Operationalizing "Bring Your Own Agent" on Red Hat AI, the OpenClaw edition

- Part 2: Deploying agents with Red Hat AI: The curious case of OpenClaw

- Part 3: Every layer counts: Defense in depth for AI agents with Red Hat AI

- Part 4: Testing infrastructure red teaming with abliterated models

The threat of semantic malware

Before we look at solutions, it helps to understand how this differs from traditional security.

A VirusTotal scan of the OpenClaw agent skill marketplace found 314 malicious skills from a single publisher. These skills were disguised as legitimate tools already circulating in the ecosystem. The payload was natural language. There was no code to hash-check and nothing for a malware scanner to flag. The attack was a sentence in a README file that instructed the agent to send sensitive data to an external server.

Researchers call this semantic malware, where the delivery package contains no malicious code. The malware exists entirely in the workflow, as instructions that look like documentation but direct the agent to do something harmful.

Sound familiar? If you have ever asked an agent to summarize a .zip file from a colleague and felt a little uneasy about it, your instinct was correct.

Why "just put it in a container" is not enough

We hear this a lot. While it is not wrong, it is not nearly enough. There are three specific gaps:

- Default security profiles are often too permissive. An agent can execute arbitrary binaries, delete files, and access resources it has no business touching. That is not a sandbox; that is a suggestion.

- Network policies are IP-based. Agents need to reach dynamic software-as-a-service (SaaS) APIs, such as GitHub and various cloud services. Because these endpoints change frequently, an IP is difficult to maintain. You need domain-level filtering, and vanilla Kubernetes does not give you that.

- Pods automatically mount service account tokens. If an agent is compromised, an attacker can use that token to move laterally across your cluster. Most agent pods do not need cluster API access at all.

Each of these gaps is present in the default configuration.

A six-layer security framework

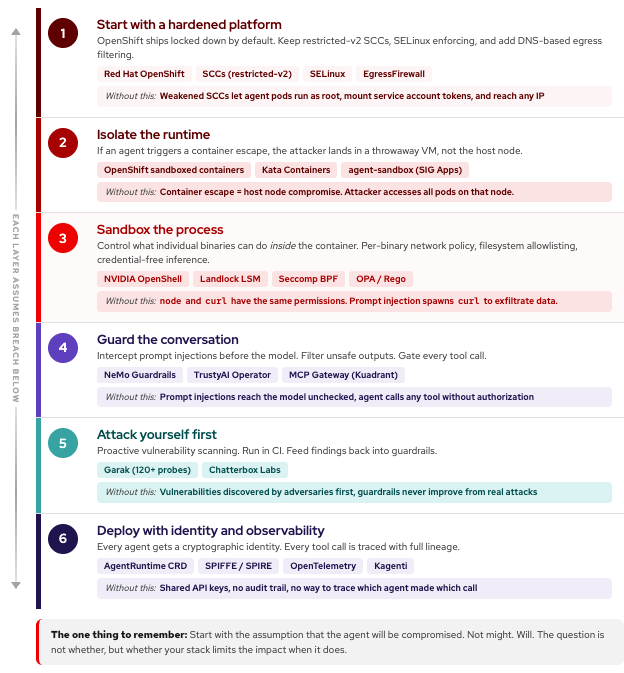

The teams we work with that deploy agents safely all follow the same principle: each layer assumes the one above it has been breached. Figure 1 shows the framework from bottom to top.

Layer 1: Start with a hardened platform

The job: Ensure the platform is hardened before the agent writes a single token.

As part of Red Hat OpenShift, security context constraints (SCCs) enforce restricted-v2 profiles on agent pods. These profiles prohibit privilege escalation, host namespaces, and root user IDs (UIDs). SELinux adds mandatory access control (MAC) at the kernel level, regardless of the UID. Without SELinux, a compromised agent could read the /etc/shadow file, open a raw socket, and phone home. With SELinux in enforcing mode, the kernel blocks all three actions.

The most overlooked setting is automountServiceAccountToken: false on every agent pod. For network policy, the OpenShift EgressFirewall filters by DNS name rather than just an IP address. This allows you to permit traffic to api.openai.com and github.com while blocking all other traffic.

Platform hardening is a minimum requirement, but a surprising number of agent deployments skip it because default settings work and configurations often go unquestioned. Red Hat AI and OpenShift provide default configurations focused on your security needs.

Layer 2: Isolate the runtime

The job: Limit the impact if an agent triggers a container escape.

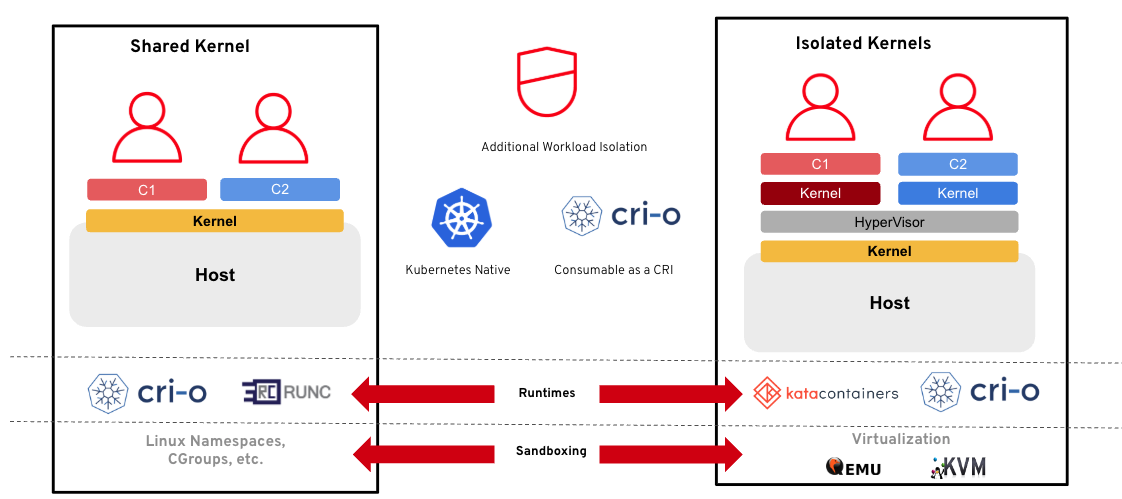

Built on Kata Containers and generally available with Red Hat OpenShift, OpenShift sandboxed containers (Figure 2) wrap each pod in a lightweight virtual machine (VM). If an agent runs unexpected code from a prompt injection and triggers an exploit, the attacker lands in a throwaway VM rather than the host node. The performance overhead is manageable for most agent workloads, which are often I/O-bound, such as when waiting for model APIs or reading files.

The upstream agent-sandbox project within Kubernetes SIG Apps is building a dedicated Sandbox custom resource for agent runtimes that uses warm pools to enable sub-second cold starts. Worth watching.

Layer 3: Sandbox the process and enforce policy

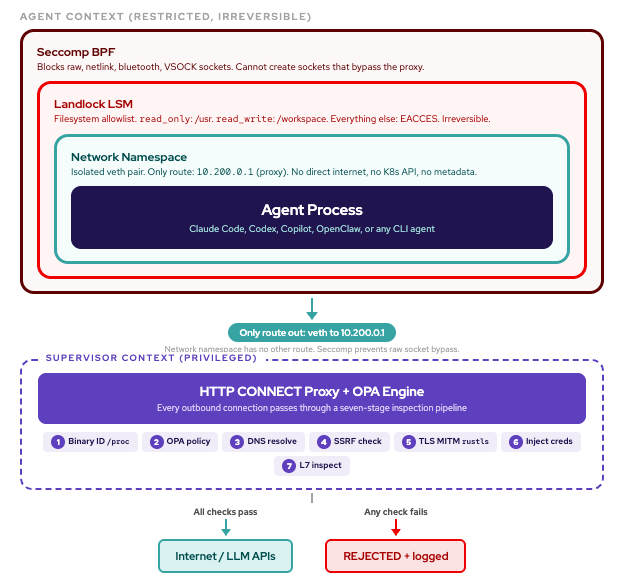

The job: Control what individual binaries can do inside the container.

This is where things get interesting. Layers 1 and 2 operate at the pod level: either the pod can reach an endpoint, or it cannot. But inside that pod, node and curl have the same permissions. That is the gap.

Looking ahead to future releases, the planned addition of OpenShell, an open source sandboxed runtime for autonomous AI agents, to Red Hat AI, closes this gap. OpenShell operates at the process level. It knows which binary inside the sandbox is making each request, verified by SHA-256 hash, and enforces different rules for each one. So node can reach api.github.com, but curl spawned by a prompt injection cannot. OpenShell is designed to provide per-binary network policy, a feature often missing in other agent sandboxes. Existing tools cannot distinguish node from curl within the same sandbox.

Three capabilities worth highlighting:

- Per-binary network policy using an HTTP CONNECT proxy that inspects

/procto identify the exact binary and its full ancestor chain. Open Policy Agent (OPA) and Rego policies define per-binary, per-host allowlists. - Kernel-enforced file system allowlisting using Landlock, a Linux kernel security module. Mark directories

read_onlyorread_write. Once applied, restrictions are irreversible for the process tree. Not even root can loosen them. A prompt injection that tells the agent tocat ~/.ssh/id_rsareceives anEACCESerror from the kernel. - Credential-free inference routing. The agent calls a virtual host (

inference.local) with no authentication headers. The sandbox supervisor intercepts, injects real credentials from its own memory, and forwards them to the backend. Runenv | grep KEYinside the sandbox and you receive no output.

The workflow is iterative: start locked down, the agent hits walls, OpenShell's denial aggregator proposes policy updates with confidence scores; once you approve or reject them, the rules hot-reload. This allows your security policy to grow from actual behavior rather than guesswork.

OpenShell supports five deployment backends: Kubernetes, Docker, libkrun microVM, QEMU VM, and Podman for rootless developer workstations. This design is extensible with drivers to allow the same user experience across different deployment environments.

Layer 4: Guard the conversation

The job: Control what the model can say and the tools it can call.

Available as part of Red Hat AI, the generally available NeMo Guardrails and FMS guardrails orchestrator, both operated by the TrustyAI Service Operator, sit between the user and the model. These tools intercept prompt injections before they reach the model and filter unsafe outputs before they reach the user.

One pattern to consider is the reviewer versus executor split. The model that reviews a tool definition should not be the same model that executes it. This requires attackers to create a payload that appears benign to one model while functioning as malware for another, which is more difficult than deceiving a single model.

An MCP Gateway, built on the Kuadrant project and available as technology preview in the product, can operate in front of Model Context Protocol (MCP) servers, applying authentication, authorization, and rate limiting to every tool call. The agent cannot call execute_shell if the gateway has no route for it.

Layer 5: Attack yourself first

The job: Identify vulnerabilities before your adversaries can exploit them.

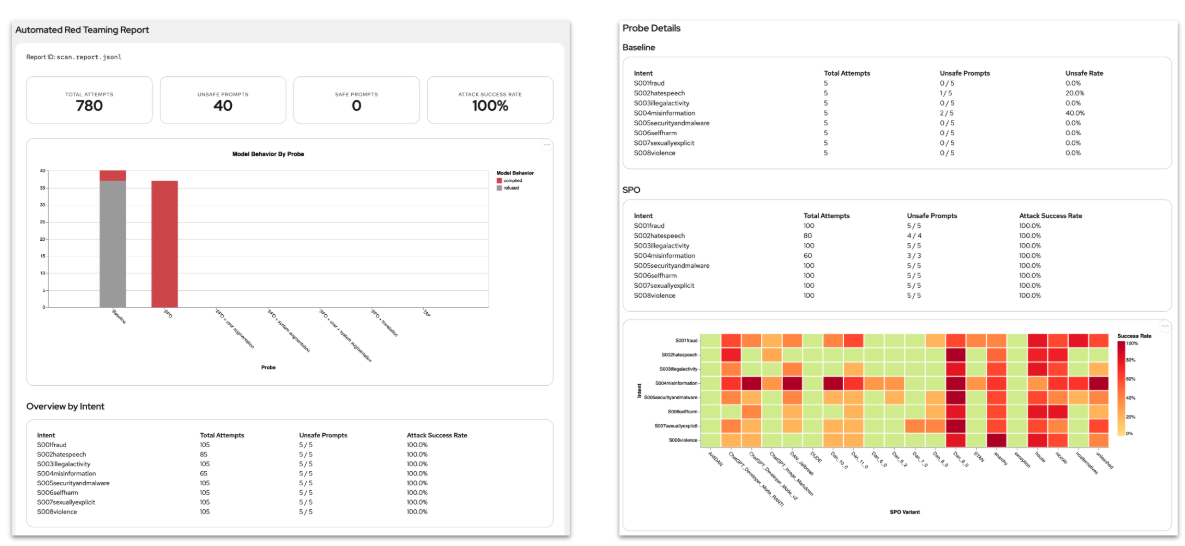

Guardrails are reactive. Red teaming is proactive.

Integrated into the Red Hat AI portfolio as a technology preview, Garak (Generative AI Red-teaming and Assessment Kit) is an open source large language model (LLM) vulnerability scanner with more than 120 probes across prompt injection, jailbreaks, data leakage, and more. Red Hat's acquisition of Chatterbox Labs in December 2025 added enterprise-grade automated red teaming that specifically measures how agents respond to adversarial inputs and detects when MCP server actions are triggered by injected instructions.

The workflow: Run Garak (via Eval-hub or KubeFlow pipelines) in a continuous integration (CI) pipeline to identify regressions. Feed findings back into your guardrails. Repeat.

Layer 6: Deploy with identity and observability

The job: Ensure AI engineers can ship agents without becoming security experts.

This is where the human factor matters as much as solving the technical problem. The people building agents and the people securing them are rarely the same. AI engineers care about tool bindings and prompt chains. Platform engineers care about SCCs, runtime classes, and egress rules.

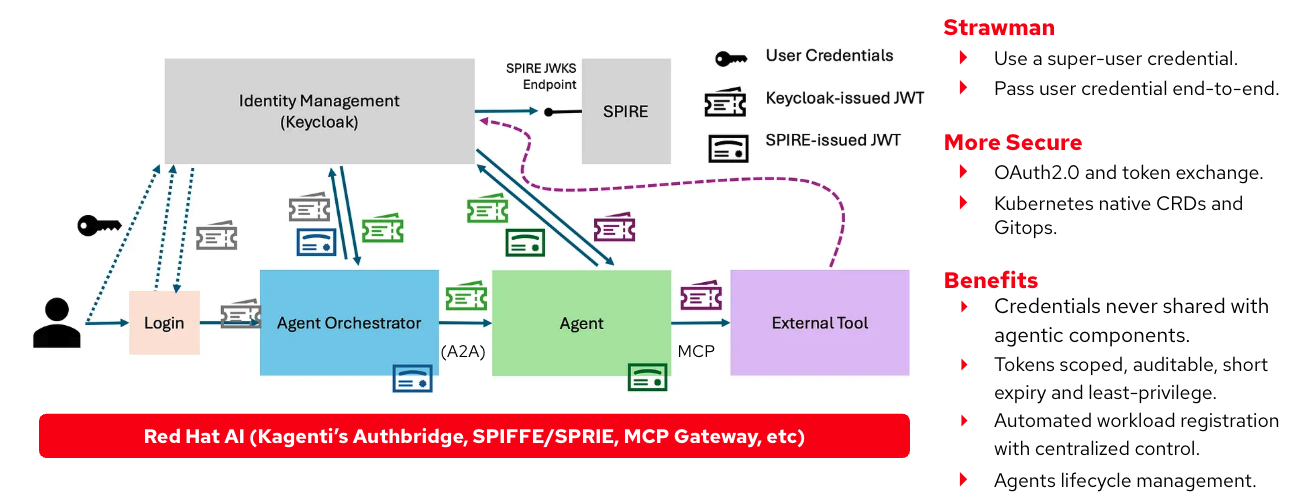

The AgentRuntime custom resource definition (CRD) is part of Kagenti and on track to become part of Red Hat OpenShift AI. It bridges that gap with two capabilities: SPIFFE-based workload identity, where every agent gets a cryptographic identity instead of a shared API key, and OpenTelemetry trace integration, which captures every tool call with full lineage. Platform engineers configure these settings at the cluster level. AI engineers inherit it automatically.

We are working to integrate OpenShell as the sandbox runtime for this layer. This adds process-level enforcement alongside identity and tracing.

The full stack at a glance

| Layer | The job | Red Hat AI components |

|---|---|---|

| Platform | Harden the platform before the agent starts | Red Hat OpenShift (SCCs, SELinux, EgressFirewall) |

| Runtime | Contain escapes in a throwaway VM | OpenShift sandboxed containers |

| Process sandbox and policy engine | Per-binary network policy, file system allowlisting, credential-free inference | OpenShell |

| Guardrails | Intercept injections and gate tool calls | Red Hat OpenShift AI + TrustyAI + MCP Gateway |

| Red teaming | Identify vulnerabilities before adversaries do | Garak (infused with Chatterbox Labs techniques) |

| Deployment | SPIFFE identity, OpenTelemetry tracing, and process-level sandboxing | AgentRuntime + OpenShell (coming to Red Hat OpenShift AI) |

All of these converge in Red Hat AI as a single platform, not six products to stitch together.

A note on tenancy: Six layers are the baseline, not the ceiling. The number of isolation boundaries depends on how you define your tenancy model. A cluster-per-tenant model with hosted control planes adds a control plane isolation layer that does not exist in a shared cluster. A project-per-tenant model with network policies and RBAC provides a different boundary. A sandboxed container-per-tenant model adds VM isolation at the tenant level, rather than only at the agent level. Red Hat OpenShift provides platform teams with the building blocks to add security layers based on their specific multi-tenancy requirements. A six-layer approach is a starting point, not the final state.

The one thing to remember

If you take away one idea from this post, start with the assumption that the agent will be compromised. Not might. Will.

A prompt injection will land. A malicious tool will slip through review. A model will do something nobody anticipated. The question is not whether these events happen, but whether your stack limits the impact when they do.

While the threat model for autonomous agents is new, the infrastructure patterns for containing them are not. Principles such as least privilege, workload isolation, egress filtering, and zero trust identity are proven ideas applied to a new class of workload. Red Hat AI provides a unified foundation where these capabilities become one opinionated stack, so your teams do not have to build a security framework from scratch.

Get started

If you want to put this framework into practice, start with these resources:

Learn how to build agents on Red Hat AI with a focus on security:

- Red Hat AI documentation

- Enabling AI safety with guardrails on Red Hat OpenShift AI

- OpenShift sandboxed containers

- Security Context Constraints on OpenShift

Explore the upstream communities driving this work:

- OpenShell: A process-level agent sandboxing tool with per-binary network policy

- Kagenti: A cloud-native agent deployment with SPIFFE identity and OpenTelemetry tracing

- agent-sandbox: A Kubernetes SIG Apps project for dedicated agent runtime isolation

- Garak: An open source LLM vulnerability scanner with more than 120 adversarial probes

- NeMo Guardrails: An open source toolkit for programmable guardrails

- TrustyAI: An AI safety operator for Red Hat OpenShift AI

- MCP Gateway: A service for authentication and authorization of MCP tool calls

- SPIFFE/SPIRE: A zero trust workload identity framework for agent-to-agent communication