Every SRE knows the feeling. You open Grafana, stare at 14 panels of time-series data, and try to answer one deceptively simple question: Is anything wrong?

Dashboards are excellent at displaying data. They are far less effective at conveying meaning. As Red Hat OpenShift clusters grow with dozens of namespaces, GPU-accelerated AI workloads, and vLLM inference services, the gap between what the metrics say and how you should act widens. Platform teams end up mentally stitching together CPU utilization graphs, pod restart counts, GPU temperatures, and request queue depths. They rely on pattern recognition to catch the anomaly before it escalates into an incident.

Managing these environments becomes more difficult as they grow. A single Grafana dashboard might surface the right signal for a three-node cluster. At enterprise scale—with varied GPU hardware, multiple inference engines, and dependencies across namespaces—static panels cannot reliably show the most important insights.

We asked a different question: What if the system could combine that data for you?

Architecture: From Prometheus to a large language model

The Red Hat OpenShift AI observability summarizer is not a chatbot bolted onto a dashboard. It is a purpose-built pipeline that transforms raw time-series data into actionable, human-readable insights. The design is layered. Each stage reduces noise and increases signal so the large language model (LLM) receives only the data it needs to produce a focused answer.

The pipeline flows through five distinct layers:

- Intent extraction: Classifying what the user asks.

- Metric discovery: Identifying which of the approximately 1,800 metrics are relevant.

- Query generation: Building type-aware PromQL for each selected metric.

- Statistical analysis: Applying anomaly detection, trend analysis, and health scoring.

- LLM summarization: Transforming structured analysis into a natural-language narrative.

Each layer is deliberate. Each one reduces noise. The following sections walk through them in detail.

Intent extraction: Understanding what you are really asking

When a user asks, "How are my GPUs doing?" the system does not simply search for metrics containing the word GPU. It classifies the intent behind the question into one of eight recognized types. Each type maps to a different query strategy, as detailed in the following table.

| Intent | Example question | Query strategy |
|---|---|---|
| current_value | "What is the GPU temperature?" | Instant query |
| count | "How many pods are running?" | Aggregated count |
| average | "What is average CPU usage?" | avg() over time range |
| percentile | "What is P95 latency?" | histogram_quantile() |
| top_n | "Which pods use the most memory?" | topk() |
| comparison | "Compare latency across models" | Multi-series query |
| trend | "How has GPU utilization changed?" | Range query with slope analysis |
| rate | "What is token throughput?" | rate() or increase() |

This classification is important because the same metric requires different PromQL depending on the user intent. A counter like vllm:num_requests_total requires increase() to answer "How many requests arrived in the last hour?" but rate() to answer "What is the current throughput?" Selecting the wrong function produces numbers that are technically valid but not useful for operations. The intent layer prevents these errors at the source.
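As a minimal sketch of that distinction, the following Python snippet builds different PromQL for the same counter depending on the intent. The `build_counter_query` helper, its default windows, and the `sum()` wrapper are illustrative assumptions, not the summarizer's actual code.

```python
# Hypothetical helper: the name, default windows, and sum() wrapper are
# assumptions made for illustration only.
def build_counter_query(metric: str, intent: str, window: str = "1h") -> str:
    """Return PromQL for a counter metric based on the classified intent."""
    if intent == "count":
        # Total events over the window, e.g. "How many requests in the last hour?"
        return f"sum(increase({metric}[{window}]))"
    if intent == "rate":
        # Per-second throughput; a short window approximates "current".
        return f"sum(rate({metric}[5m]))"
    raise ValueError(f"unsupported intent for a counter: {intent}")


print(build_counter_query("vllm:num_requests_total", "count"))
print(build_counter_query("vllm:num_requests_total", "rate"))
```

Both queries read the same series, but the first answers a volume question and the second a throughput question.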

Metric discovery: Signal selection at scale

This layer is where the core design work lives. An OpenShift cluster running GPU-accelerated workloads can expose thousands of Prometheus metrics. Most of these metrics are irrelevant to a specific question. Sending every metric to a language model wastes tokens, degrades response quality, and increases the risk of hallucinations. The discovery layer filters data intelligently.

Knowledge base: Metrics catalog

Approximately 1,800 pre-categorized OpenShift metrics are stored in a bundled JSON file. These are organized into 19 categories, including Cluster Health, Node Hardware, Pods & Containers, GPU & AI/ML, Networking, and Storage. Each metric entry carries three pieces of metadata:

- Priority (high, medium, low): The system only considers high and medium priorities during discovery to keep the candidate set manageable.

- Semantic keywords: These are generated through a five-tier relevance system to enable fast matching against user queries.

- Type metadata: This includes counter, gauge, histogram, or summary types. The query generator uses this metadata to select the correct PromQL pattern.

The catalog is bundled into the container image at build time. A cold-start lookup completes in approximately 15 milliseconds.
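To make the catalog structure concrete, here is a small sketch of what an entry and the priority filter might look like. The JSON field names, the `CatalogEntry` class, and the category assignments in the sample are assumptions for illustration; the article does not show the real schema.

```python
import json
from dataclasses import dataclass

# Hypothetical catalog schema: field names and sample rows are assumptions.
SAMPLE_CATALOG = """
[
  {"name": "DCGM_FI_DEV_GPU_TEMP", "category": "GPU & AI/ML",
   "priority": "high", "type": "gauge", "keywords": ["gpu", "temperature"]},
  {"name": "vllm:num_requests_total", "category": "GPU & AI/ML",
   "priority": "medium", "type": "counter", "keywords": ["requests", "vllm"]},
  {"name": "go_gc_duration_seconds", "category": "Cluster Health",
   "priority": "low", "type": "summary", "keywords": ["gc"]}
]
"""


@dataclass(frozen=True)
class CatalogEntry:
    name: str
    category: str
    priority: str      # "high", "medium", or "low"
    metric_type: str   # "counter", "gauge", "histogram", or "summary"
    keywords: tuple


def load_candidates(raw_json: str) -> list:
    """Parse the catalog and keep only high- and medium-priority entries."""
    entries = [
        CatalogEntry(e["name"], e["category"], e["priority"],
                     e["type"], tuple(e["keywords"]))
        for e in json.loads(raw_json)
    ]
    return [e for e in entries if e.priority in ("high", "medium")]


candidates = load_candidates(SAMPLE_CATALOG)
print([c.name for c in candidates])
```

Dropping low-priority entries before any scoring keeps the candidate set small, which is the point of the priority field.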

Dynamic GPU discovery

GPU metrics present a unique challenge: they vary by vendor. NVIDIA exposes DCGM_FI_DEV_GPU_TEMP. Intel Gaudi reports habanalabs_temperature_onchip. AMD uses its own prefix conventions. Hardcoding these names is difficult to maintain.

Instead, the system queries Prometheus at startup for metrics that match configurable vendor prefixes. It detects the primary GPU vendor present in the cluster and merges discovered GPU metrics into the catalog with the appropriate priority levels. This discovery process runs asynchronously. It completes in about one to two seconds and does not block the server from accepting requests.

To prepare for new metric naming conventions, platform teams can supply additional prefixes via environment variables: GPU_METRICS_PREFIX_NVIDIA, GPU_METRICS_PREFIX_INTEL, and GPU_METRICS_PREFIX_AMD. These variables are additive. Custom prefixes extend the built-in defaults instead of replacing them. This means you can support new GPU monitoring exporters without a code change or image rebuild.
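The additive behavior can be sketched as follows. The environment variable names come from the text above; the comma-separated value format, the default prefix lists, and the `gpu_prefixes` function are assumptions made for this example.

```python
import os

# Illustrative defaults inferred from the metric names mentioned earlier.
# The real built-in lists are not shown in this article.
DEFAULT_PREFIXES = {
    "NVIDIA": ["DCGM_"],
    "INTEL": ["habanalabs_"],
    "AMD": [],  # left empty: the article does not name AMD's prefixes
}


def gpu_prefixes(env=None) -> dict:
    """Merge built-in prefixes with custom ones from the environment."""
    env = os.environ if env is None else env
    merged = {}
    for vendor, defaults in DEFAULT_PREFIXES.items():
        # Assumption: multiple custom prefixes are comma-separated.
        raw = env.get(f"GPU_METRICS_PREFIX_{vendor}", "")
        extra = [p.strip() for p in raw.split(",") if p.strip()]
        # Additive: keep the defaults and append anything not already present.
        merged[vendor] = defaults + [p for p in extra if p not in defaults]
    return merged


print(gpu_prefixes({"GPU_METRICS_PREFIX_NVIDIA": "nvidia_smi_, DCGM_"}))
```

Because custom values only extend the defaults, an operator cannot accidentally disable detection of the built-in exporters by setting a variable.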

Catalog validation

A bundled catalog can become outdated as clusters evolve. A CatalogValidator runs at startup to check catalog entries against the live Prometheus instance. It removes metrics that no longer exist and adds new ones at medium priority. Validation completes within a 10-second timeout and does not block server readiness.
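The reconciliation step can be pictured with a short sketch. It assumes the set of live metric names has already been fetched (for example, from the Prometheus HTTP API endpoint that lists values of the `__name__` label); the `reconcile_catalog` function and the dictionary shape are hypothetical.

```python
def reconcile_catalog(catalog: dict, live_names: set) -> dict:
    """Reconcile a bundled catalog (name -> priority) with live metric names.

    Hypothetical helper: entries missing from Prometheus are dropped, and
    metrics that exist only in Prometheus are added at medium priority.
    """
    validated = {name: prio for name, prio in catalog.items()
                 if name in live_names}
    for name in sorted(live_names - set(catalog)):
        validated[name] = "medium"
    return validated


bundled = {"DCGM_FI_DEV_GPU_TEMP": "high", "old_metric_removed": "medium"}
live = {"DCGM_FI_DEV_GPU_TEMP", "vllm:num_requests_total"}
print(reconcile_catalog(bundled, live))
```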

Runtime catalog (after startup)

The static catalog is only the baseline. After validation, the service holds a single, cluster-specific catalog. Outdated names from the bundle are removed, and new Prometheus names that match the category structure are added. GPU and vLLM metrics are included in the working set for the chat. The semantic scoring described in the next section runs against this merged catalog.

Semantic scoring

When a user asks a question, the system ranks candidate metrics using a relevance scoring system. Each metric is scored across four dimensions:

- Keyword pattern matching against user intent

- Metric type relevance

- Name specificity

- Catalog priority

Domain-specific bonuses sharpen the ranking. GPU and vLLM metrics receive a 15-point boost when the question involves those domains. Common abbreviations like TTFT, TPOT, and ITL receive a 20-point boost to help the correct latency metric appear first. This scoring ensures the system selects the most relevant metric for operations instead of just the most common one.
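A simplified scoring function might look like the sketch below. Only the 15-point domain boost and the 20-point abbreviation boost come from the description above; the keyword and priority weights are invented for illustration, and the metric type and name specificity dimensions are omitted for brevity.

```python
# Assumed weights: only the 15- and 20-point bonuses come from the article.
PRIORITY_POINTS = {"high": 10, "medium": 5}
KEYWORD_POINTS = 5
DOMAIN_TERMS = {"gpu", "vllm"}
ABBREVIATIONS = {"ttft", "tpot", "itl"}


def score_metric(question: str, name: str, keywords: set, priority: str) -> int:
    """Score one candidate metric against a user question."""
    words = set(question.lower().replace("?", " ").split())
    score = KEYWORD_POINTS * len(words & keywords)
    score += PRIORITY_POINTS.get(priority, 0)
    # Domain boost: the question and the metric both involve GPU or vLLM.
    if words & DOMAIN_TERMS and any(t in name.lower() for t in DOMAIN_TERMS):
        score += 15
    # Abbreviation boost: the question uses an abbreviation the metric is tagged with.
    if words & ABBREVIATIONS & keywords:
        score += 20
    return score


question = "What is the TTFT for vllm?"
ttft = score_metric(question, "vllm:time_to_first_token_seconds",
                    {"ttft", "latency", "vllm"}, "high")
e2e = score_metric(question, "vllm:e2e_request_latency_seconds",
                   {"latency", "vllm", "e2e"}, "high")
print(ttft, e2e)  # 55 30
```

In this example both metrics are vLLM latency metrics, but the abbreviation boost pushes the time-to-first-token metric ahead of the end-to-end latency metric.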

Query generation: Metric type awareness

Correct PromQL generation is more complex than it appears. Prometheus defines four metric types, and each requires a different query pattern. Applying the wrong function to a metric produces results that look correct but are not useful for operations. This is a common error in hand-built dashboards.

- Counters (requests, errors, tokens processed): These always require rate() or increase(). Querying a counter's raw value returns a number that only increases, which does not show recent behavior.

- Gauges (temperature, queue depth, memory usage): These display current or averaged values directly. Applying rate() to a gauge produces nonsensical derivatives.

- Histograms (latency distributions): These require histogram_quantile() to extract meaningful percentiles. Raw bucket values are unintelligible to most operators.

- Summaries (pre-calculated averages): These require dividing rate(sum) by rate(count) to produce correct averages over a time window.

The system maintains a type registry that maps each discovered metric to its Prometheus type and automatically selects the correct query pattern. This prevents errors that lead to incorrect data.
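The four patterns above can be sketched as a single dispatch function. The `promql_for` name, the default window, and the default quantile are assumptions; the `_bucket`, `_sum`, and `_count` suffixes follow the standard Prometheus naming for histogram and summary series.

```python
def promql_for(metric: str, metric_type: str,
               window: str = "5m", quantile: float = 0.95) -> str:
    """Pick a PromQL pattern from the metric's Prometheus type (illustrative)."""
    if metric_type == "counter":
        return f"rate({metric}[{window}])"
    if metric_type == "gauge":
        # Gauges can be read directly; no rate() needed.
        return metric
    if metric_type == "histogram":
        return (f"histogram_quantile({quantile}, "
                f"sum by (le) (rate({metric}_bucket[{window}])))")
    if metric_type == "summary":
        return f"rate({metric}_sum[{window}]) / rate({metric}_count[{window}])"
    raise ValueError(f"unknown metric type: {metric_type}")


print(promql_for("vllm:num_requests_total", "counter"))
print(promql_for("DCGM_FI_DEV_GPU_TEMP", "gauge"))
```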

Smart fallbacks

Not every metric is available on every cluster. The system uses fallback chains to ensure the user receives a useful response instead of an empty result. For example, when querying total request counts:

- 1st: vllm:num_requests_total (preferred)

- 2nd: vllm:request_success_total + vllm:request_errors_total (computed sum)

- 3rd: vllm:request_success_total (lower bound)

This approach ensures operators always receive the best available answer, even if the cluster metrics differ from the expected baseline.
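A fallback chain like this one can be expressed as an ordered list of (required metrics, query) pairs. The sketch below is one possible shape, assuming a one-hour `increase()` window and a hypothetical `pick_query` helper; the metric names are the ones listed above.

```python
# Ordered from most to least preferred. The query strings and the 1h window
# are illustrative assumptions.
REQUEST_COUNT_CHAIN = [
    (("vllm:num_requests_total",),
     "sum(increase(vllm:num_requests_total[1h]))"),
    (("vllm:request_success_total", "vllm:request_errors_total"),
     "sum(increase(vllm:request_success_total[1h])) + "
     "sum(increase(vllm:request_errors_total[1h]))"),
    (("vllm:request_success_total",),
     "sum(increase(vllm:request_success_total[1h]))"),
]


def pick_query(available: set, chain=REQUEST_COUNT_CHAIN):
    """Return the first query whose required metrics all exist, else None."""
    for required, query in chain:
        if set(required) <= available:
            return query
    return None


# Only the success counter exists, so the chain falls through to the lower bound.
print(pick_query({"vllm:request_success_total"}))
```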

From numbers to context

Before the LLM call, the pipeline compresses time series into structured inputs. This includes numeric summaries, optional correlated logs and traces, and a distinction between computed facts and model conclusions. This reduces token use and ensures the system relies on data rather than hidden logic.

Per-metric summaries

For each metric, the system computes basic aggregates over the user query window, such as the average and latest values. This allows the model to see the data range without processing every raw sample. This reduces noise and cost while remaining accurate to the underlying data.
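As a rough illustration, the per-metric summary could be computed like this. The `summarize` function and the exact set of fields are assumptions; the article only names the average and latest values.

```python
from statistics import fmean


def summarize(samples: list):
    """Compress (timestamp, value) samples, oldest first, into a small summary.

    Hypothetical helper: returns None when the series is empty.
    """
    values = [value for _, value in samples]
    if not values:
        return None
    return {
        "average": round(fmean(values), 2),
        "min": min(values),
        "max": max(values),
        "latest": values[-1],
        "samples": len(values),
    }


gpu_temp = [(0, 70.0), (60, 72.0), (120, 74.0)]
print(summarize(gpu_temp))
```

A handful of fields per metric replaces what could be hundreds of raw samples in the prompt.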

Severity and change: LLM interpretation

We do not currently use separate services to create labels like spike, dip, or trend. We also do not use a health score based on manual thresholds. The current implementation provides fixed baseline labels, such as stable trend and normal severity, alongside the numeric summaries.

The model uses these as a starting point. It can override them based on the data. For example, it might note a sharp increase even if the label is stable. Replacing these placeholders with computed classifiers is a natural next step. The pipeline's job is to supply accurate aggregates and baseline context; the model's job is to interpret them.

Correlated logs and traces

When you enable correlation, the system attaches related log lines and trace spans to the metric data. This helps the system link symptoms across different signals, such as connecting latency to errors or resource pressure elsewhere, instead of treating each series in isolation.

Why this layer stays intentionally thin

The design prioritizes honest signal engineering: providing specific, accurate data to the LLM. Richer statistical classifiers or scored health models can be added over time. Currently, the product provides clear data aggregation. This maintains a distinction between computed numbers and the model's interpretation, so readers and operators understand what is automated versus inferred.

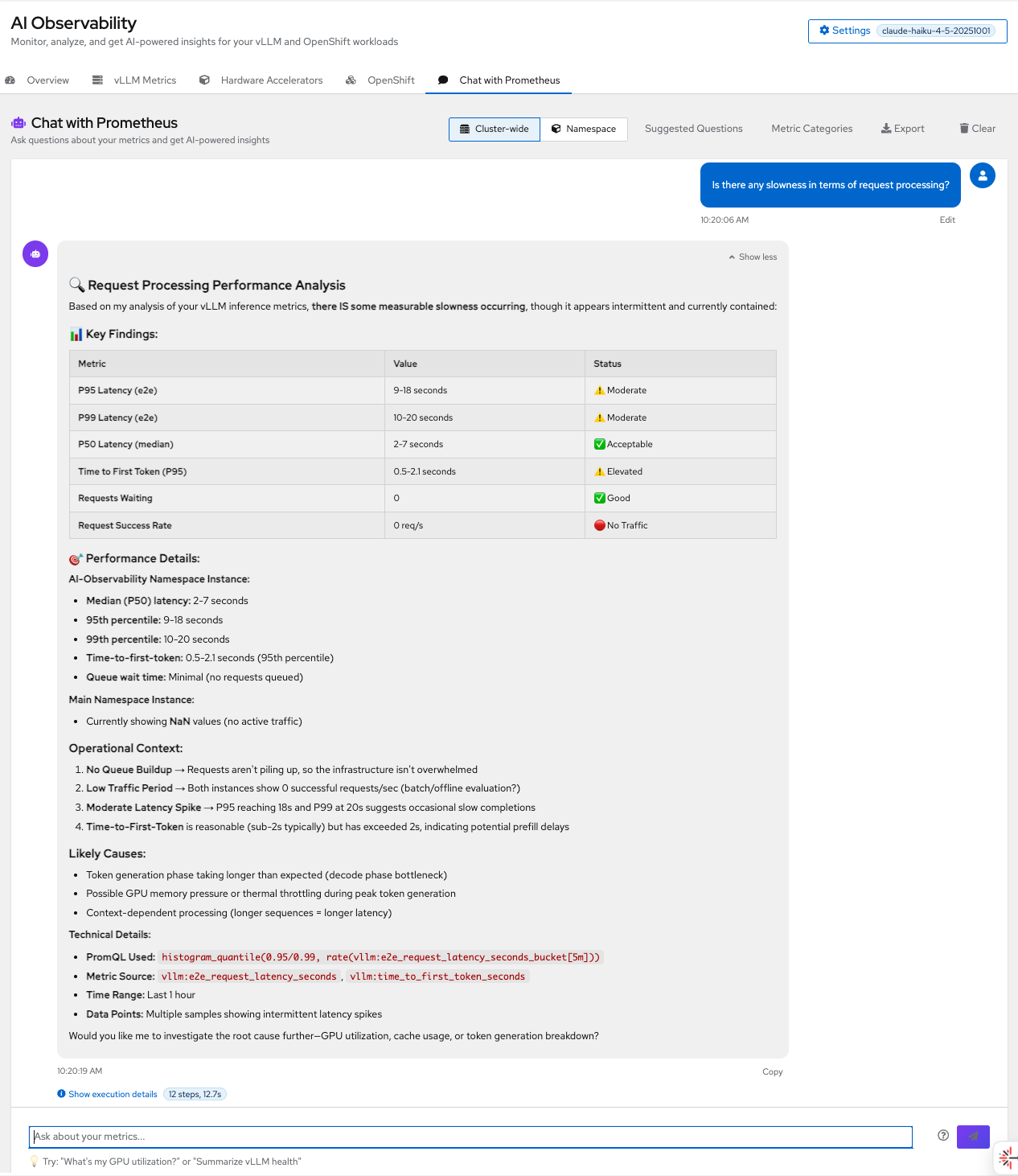

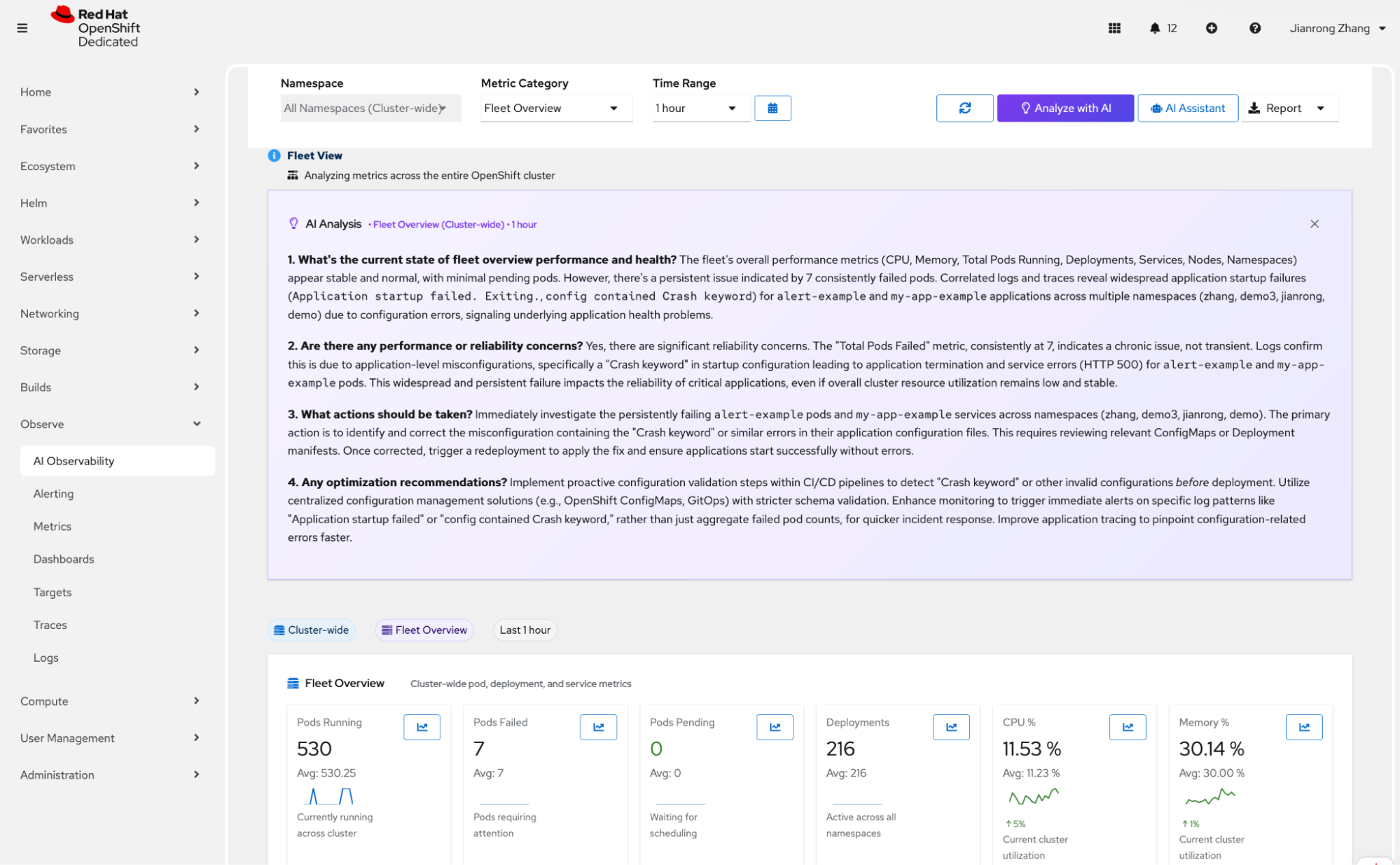

As shown in Figure 1, the system identifies latency spikes by querying specific percentiles, providing the model with a clear trend rather than just a single data point.

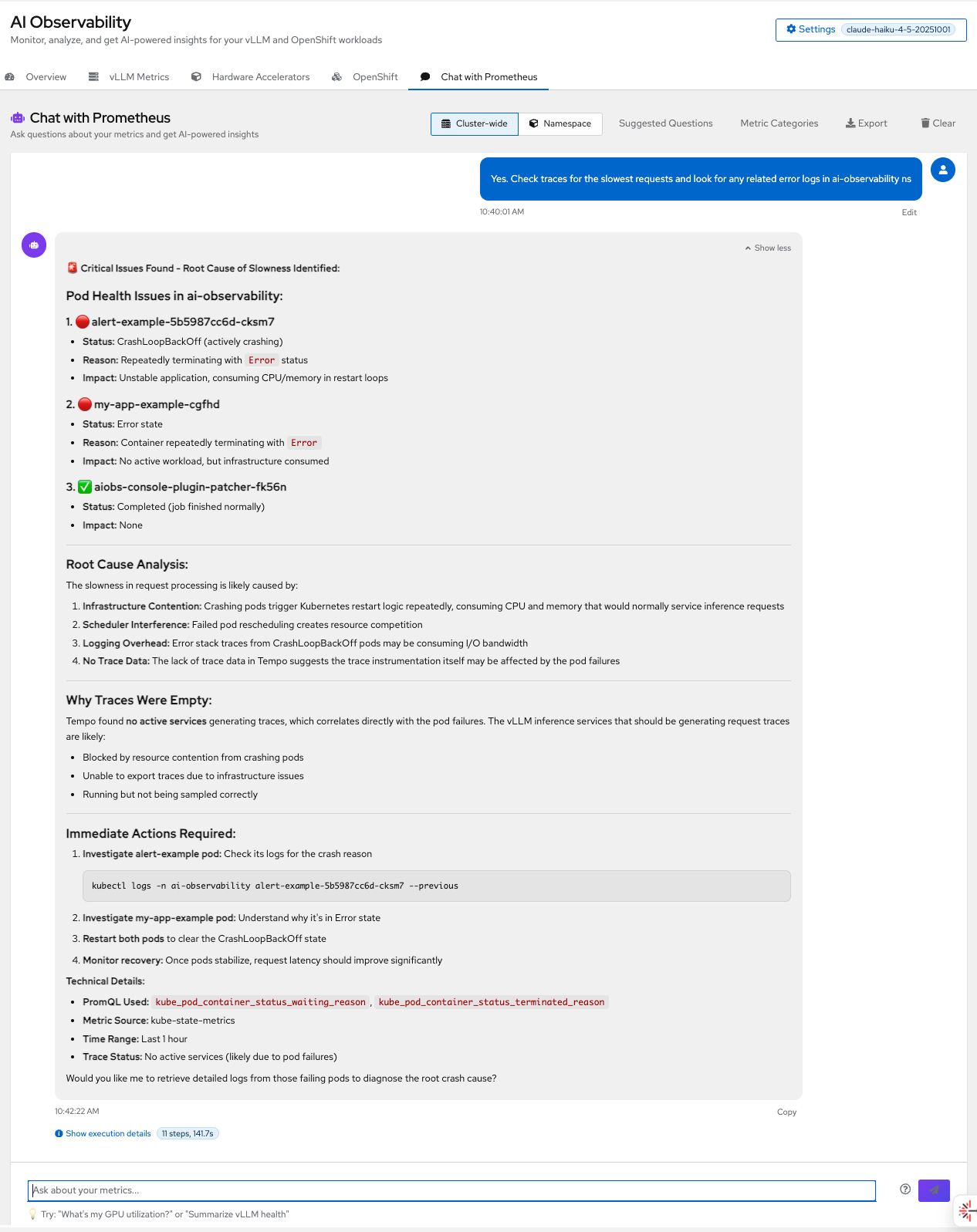

Beyond metrics, the system correlates different signals to find root causes. In Figure 2, you can see how the pipeline combines Tempo traces with kube-state-metrics to pinpoint crashing pods.

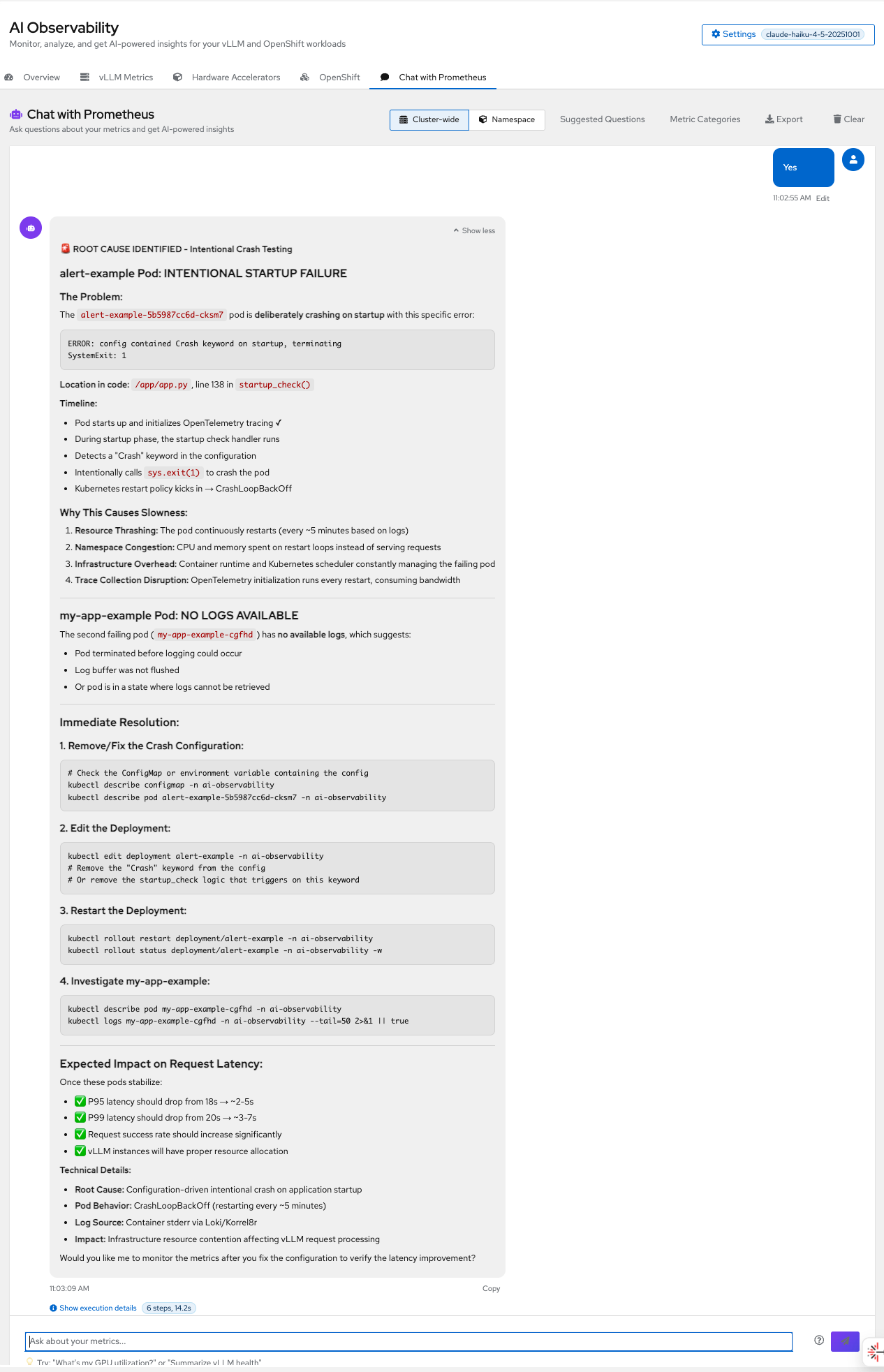

To provide the final layer of context, the system retrieves logs using Korrel8r (Figure 3).

LLM summarization

By the time data reaches the language model, the system has filtered it from about 1,800 candidate metrics down to three to five relevant ones. It has also queried the data with type-aware PromQL and added annotations for trends, anomalies, and health scores. The LLM task is deliberately narrow and well-defined: transform structured analysis into clear, natural-language narrative.

The system supports multiple LLM providers, including Anthropic Claude, OpenAI GPT, Google Gemini, and locally hosted Llama models using Llama Stack. We set the temperature to 0 to make results as deterministic and reproducible as the provider allows. The response is not a generic summary; it is a focused answer to the user question based on actual metric values.

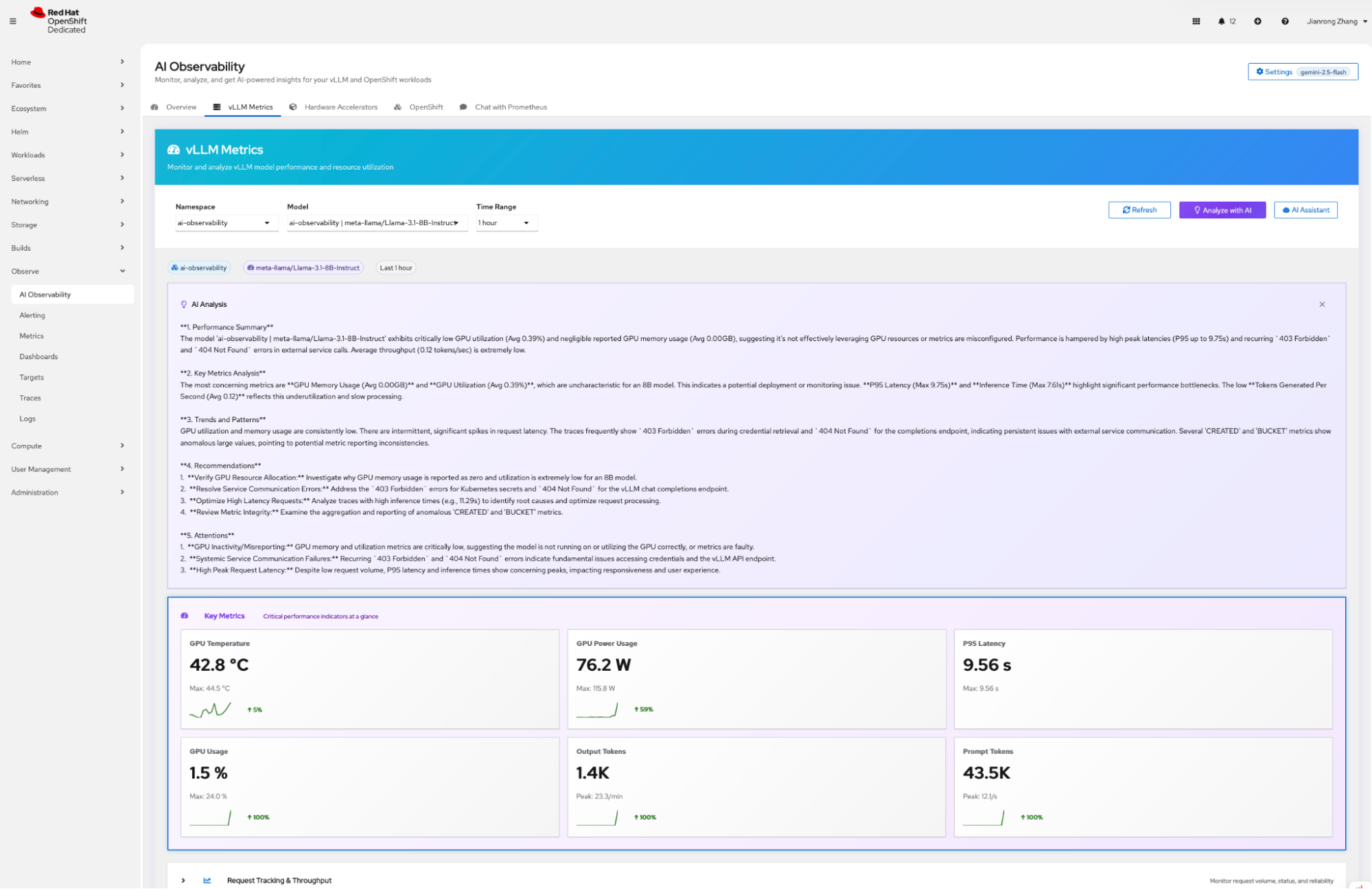

The system provides a summary of vLLM metrics with recommendations, as shown in Figure 4.

The system also provides a summary of your OpenShift metrics (Figure 5).

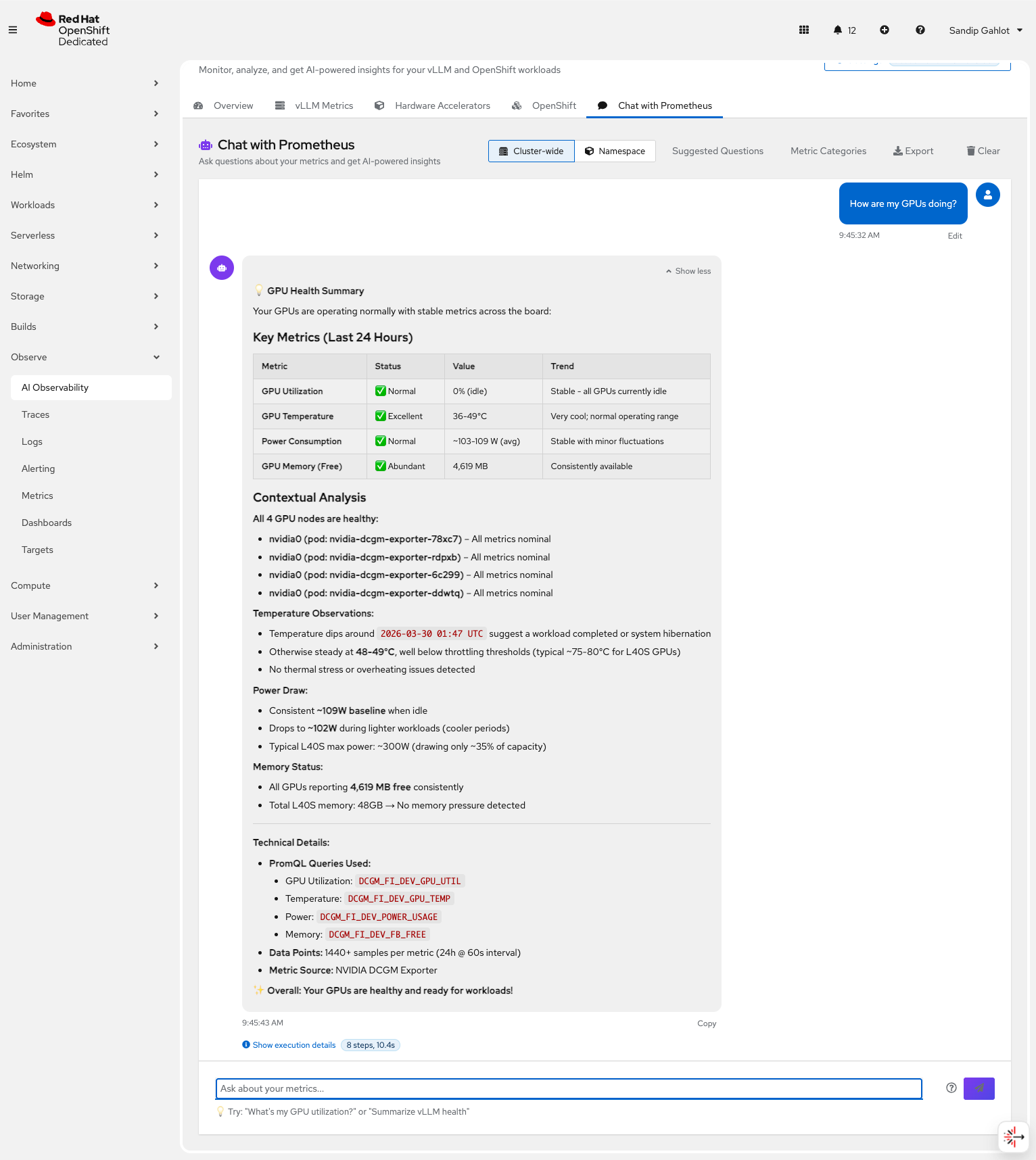

For example, the question "How are my GPUs doing?" produces the following response (Figure 6):

Your cluster has 4 NVIDIA A100 GPUs active. Average temperature is 72°C (stable, within normal range). GPU utilization is 85% average with one GPU at 94%—the workload in namespace llm-serving is driving this. Power consumption has been increasing over the past 6 hours. No anomalies detected, but consider load-balancing inference requests if utilization on GPU-3 continues climbing.

Not a dashboard. Not a wall of numbers. An answer.

Scope control: Cluster-wide versus namespace

Aggregation scope is a key architectural decision for this pipeline. The level of aggregation affects signal clarity and LLM response quality.

Cluster-wide queries use aggregation functions, such as avg(), max(), and sum(), to combine per-pod, per-node, and per-GPU metrics into fleet-level summaries. This prevents the model from processing hundreds of individual time series, which reduces noise.

Namespace-scoped queries add a {namespace="X"} label filter. This produces precise, targeted results when a user needs to investigate a specific workload or service.

The system defaults to cluster-wide to provide an overview. Users can then drill into specific namespaces for more detail. This mirrors the standard troubleshooting workflow of most SREs: start broad, then narrow.
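The two scopes differ only in the label selector, which the following sketch makes explicit. The `scoped_query` helper and the allowed aggregation list are assumptions, and the example metric is the standard cAdvisor working-set gauge rather than one named in this article.

```python
ALLOWED_AGGREGATIONS = {"avg", "max", "sum"}


def scoped_query(metric: str, agg: str = "avg", namespace: str = None) -> str:
    """Build a cluster-wide query, or a namespace-scoped one if given (illustrative)."""
    if agg not in ALLOWED_AGGREGATIONS:
        raise ValueError(f"unsupported aggregation: {agg}")
    # A namespace adds a label filter; otherwise all series are aggregated.
    selector = f'{metric}{{namespace="{namespace}"}}' if namespace else metric
    return f"{agg}({selector})"


print(scoped_query("container_memory_working_set_bytes", "sum"))
print(scoped_query("container_memory_working_set_bytes", "sum", "llm-serving"))
```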

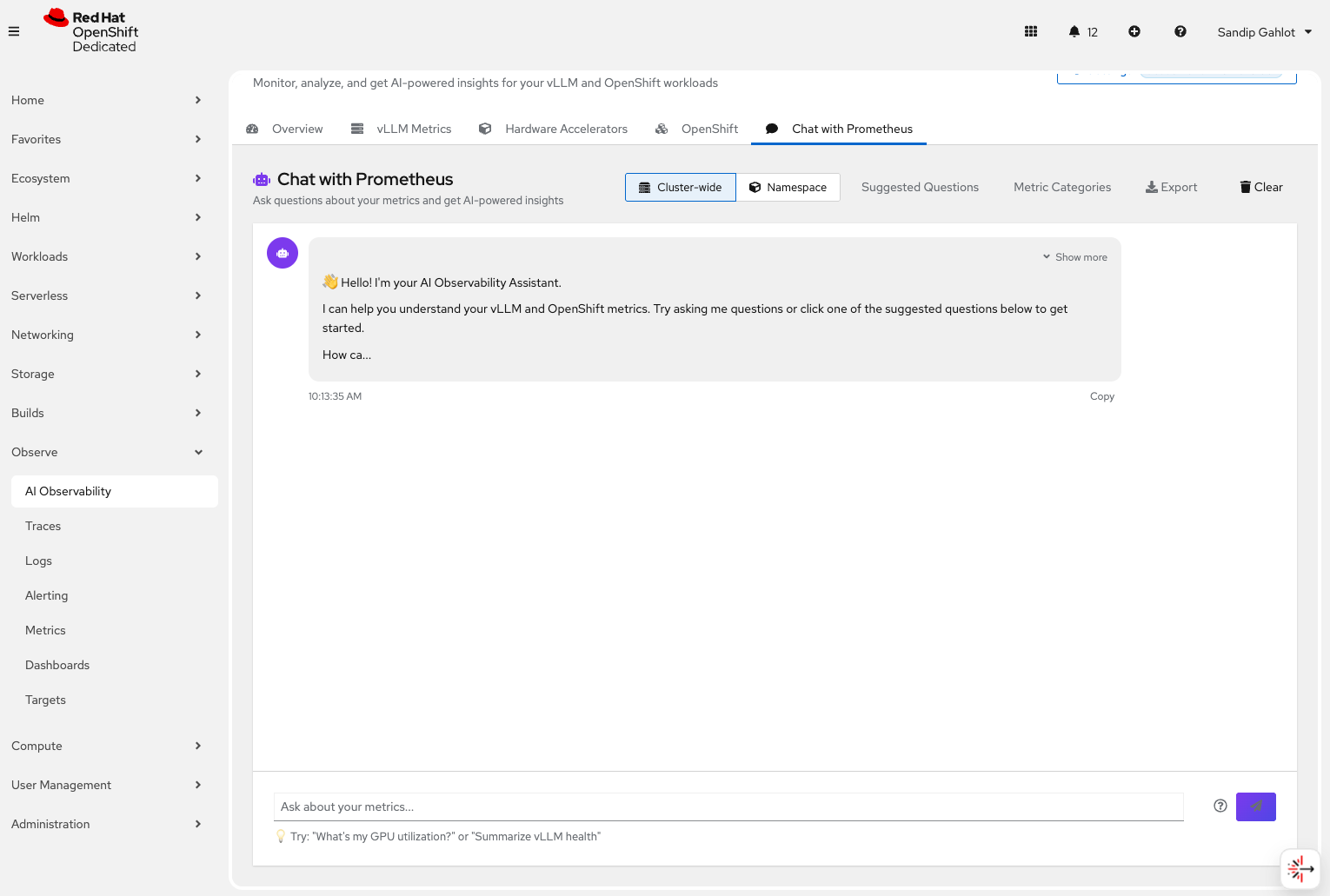

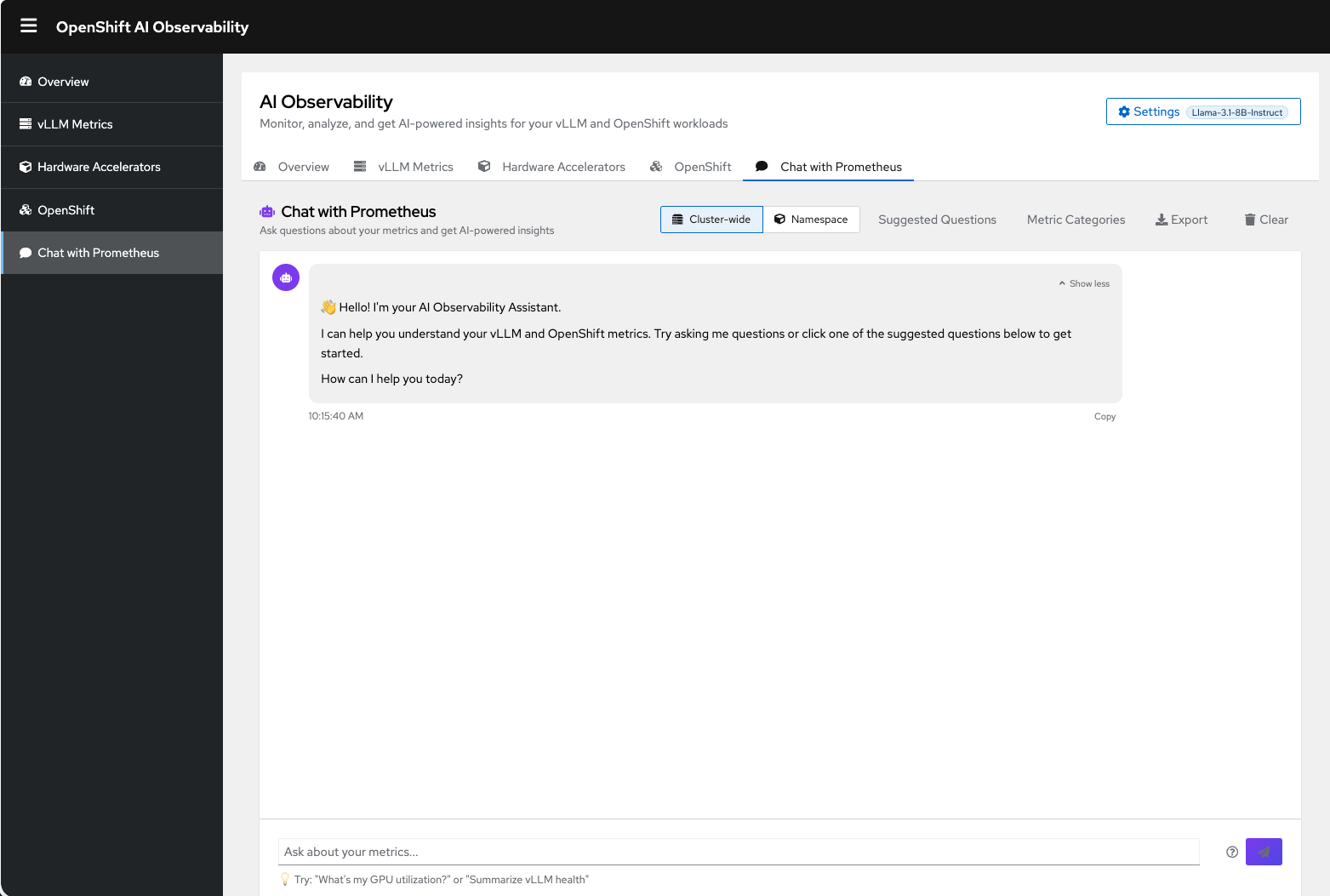

The console plug-in: Access insights within your workflow

The summarizer runs as an OpenShift console plug-in. It is embedded in the OpenShift web console navigation sidebar. Operators do not need to switch between tools. They can open a chat panel alongside their existing cluster management workflow to ask a question and receive an answer in context (Figure 7).

The plug-in also runs as a standalone React application for local development. Both modes share the same code base, components, and MCP client. The only difference is the credential strategy: Kubernetes Secrets in production versus browser session storage during development. See Figure 8.

Why summarization outperforms dashboards

Summarization offers several improvements over traditional dashboard-based monitoring.

Dashboards are passive

They present data and wait for a human to interpret it. The summarizer is active. It interprets the data, identifies important information, and presents a conclusion. For routine health checks, this reduces a 10-minute investigation to a 10-second read.

Dashboards are static

A Grafana panel shows the same visualization whether metrics are normal or alarming. The summarizer adapts its response to the data. If the system is healthy, the tool provides a brief update. If there are issues, it explains them and recommends actions.

Dashboards require expertise to read

Interpreting a histogram heatmap or a multi-series rate graph requires training and experience. Natural-language summaries are accessible to anyone on the team, from junior engineers to incident commanders to product managers checking on SLA compliance.

Dashboards do not correlate signals

Each panel exists in isolation. Recognizing that a CPU spike, a pod restart loop, and a latency increase are all symptoms of the same underlying issue requires a human to draw those connections. The summarizer connects these signals automatically through metric correlation and contextual analysis.

Prompt engineering: Structure, signal, and noise reduction

The quality of the LLM response depends on the quality of its input. The system prompt is not a simple instruction string, but a structured document designed to reduce errors, maximize relevance, and produce consistent output across model providers.

Signal selection

The prompt receives only the metrics that survived the discovery and scoring pipeline. This is typically three to five data points. Each metric includes its current value, trend classification, anomaly status, and unit of measurement. This prevents the model from inventing data or making assumptions about missing metrics.

Structured context

Statistical analysis results, such as health scores, anomaly flags, and trend directions, are passed as structured fields rather than free text. This provides the model with specific facts and reduces the risk of misinterpretation or embellishment.
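One way to picture structured fields is a small JSON payload built before the LLM call. The `build_context` function and the field names below are hypothetical; the default "stable" and "normal" labels mirror the baseline labels described earlier in this article.

```python
import json


def build_context(question: str, metrics: list) -> str:
    """Serialize the question and per-metric facts as JSON for the prompt."""
    payload = {
        "question": question,
        "metrics": [
            {
                "name": m["name"],
                "latest": m["latest"],
                "average": m["average"],
                "unit": m["unit"],
                # Baseline labels; the model may override them from the numbers.
                "trend": m.get("trend", "stable"),
                "severity": m.get("severity", "normal"),
            }
            for m in metrics
        ],
    }
    return json.dumps(payload, indent=2)


context = build_context(
    "How are my GPUs doing?",
    [{"name": "DCGM_FI_DEV_GPU_TEMP", "latest": 74.0,
      "average": 72.0, "unit": "celsius"}],
)
print(context)
```

Keeping each fact in a named field makes it easier to tell which numbers were computed by the pipeline and which statements were inferred by the model.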

Noise reduction

The system summarizes or omits metrics that are stable and within normal ranges. The prompt instructions direct the model to focus on actionable findings, such as anomalies and trends. This is more effective than listing every value. The result is a response that reads like an SRE incident summary instead of a data dump.

Try it yourself

The AI observability summarizer is open source and designed for Red Hat OpenShift and Red Hat OpenShift AI environments.

Use the following command to install the summarizer in your cluster:

make install NAMESPACE=your-namespace

After installation, open the OpenShift web console and navigate to Observe → AI Observability in the sidebar.

We welcome contributions from the community.

The takeaway

A common misconception about AI-powered observability is that the language model is the most difficult part. It is not. The LLM is the final 10% of the work. It translates structured analysis into language. The other 90% is signal engineering: ensuring the model receives clean, relevant, and correctly computed data.

That work breaks down into four capabilities:

- Knowing which metrics matter: This involves catalog management, dynamic discovery, and semantic scoring.

- Querying them correctly: This uses type-aware PromQL generation with smart fallbacks.

- Reducing noise statistically: This involves anomaly detection, trend analysis, and health scoring.

- Controlling scope: This uses cluster-wide aggregation and namespace-level filtering.

When you get these four things right, the LLM can do what it does best: turning numbers into narrative.

Dashboards show you data. This system tells you what it means.