Build trusted Python containers with Project Hummingbird and Calunga

Learn how to use the Project Hummingbird and Calunga open source projects to build application containers with Red Hat Hardened Images and trusted libraries.

Learn how to use Red Hat Ansible Automation Platform and a Configuration-as-Code (CaC) approach to automate Infoblox DDI operations at scale.

Learn how to use the OpenViking context database instead of traditional flat vector storage to provide AI agents with persistent, structured memory.

This article describes how to onboard a project to two tools, CodeCov and CodeRabbit, and the results of doing so.

Learn how Fromager, an open source project, helps protect Python dependencies by rebuilding entire dependency trees from source, providing network-isolated builds, and managing dependencies as a verifiable map. Discover how Fromager ensures supply chain verifiability, ABI compatibility, and customization.

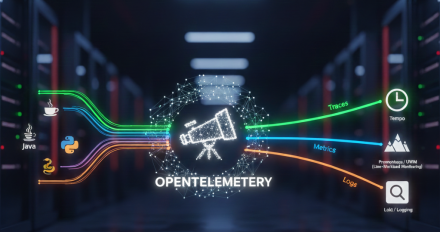

Learn how to set up distributed tracing for an agentic workflow based on lessons learned while developing the it-self-service-agent AI quickstart. This post covers configuring OpenTelemetry to track requests end-to-end across application workloads, MCP servers, and Llama Stack.

Follow this 4-step process using Training Hub and OpenShift AI to transition LLM fine-tuning from local experiments to repeatable, production-grade workflows.

Learn how to set up and run local AI audio transcription using a Red Hat open source model.

Learn how to build reliable AI agents with our 8-stage evaluation framework. We explore DeepEval, multi-turn testing, and CI/CD integration for Red Hat AI.

Discover how to optimize training of MoE models with fms-hf-tuning, an open source tuning library for PyTorch FSDP and Hugging Face libraries. Learn about preprocessing data, throughput and memory efficiency features, distributed training, and expert parallelism. Improve your AI and agentic applications on domain-specific enterprise tasks.

Learn how to run high-performance computing workloads managed by Slurm within a containerized OpenShift environment using the Slinky operator.

Learn how the Responses API in Llama Stack automates complex tool calling while maintaining granular control over conversation flow for AI agents. Discover the benefits and implementation details.

Discover how I used an AI assistant to develop a production-grade Ansible Playbook to audit RHEL versions across a fleet of servers, generating a clean report.

Learn how to estimate memory requirements for your LLM fine-tuning experiments using Red Hat Training Hub's memory_estimator.py API. This guide covers the memory components, adjusting training setups for specific GPU specifications, and using the memory estimator in your code. Streamline your model fine-tuning process with runtime estimates and automated hyperparameter suggestions.

Understand the PyTorch autograd engine internals to debug gradient flows. Learn about computational graphs, saved tensors, and performance optimization techniques.

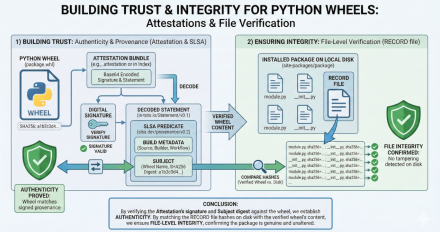

In this blog post, we're looking at how the Tech Preview of Red Hat Trusted

Learn about the Red Hat build of OpenTelemetry and its auto-instrumentation capabilities to achieve full-stack observability on OpenShift.

Explore big versus small prompting in AI agents. Learn how Red Hat's AI quickstart balances model capability, token costs, and task focus using LangGraph.

One conversation in Slack and email, real tickets in ServiceNow. Learn how the multichannel IT self-service agent ties them together with CloudEvents + Knative.

Learn how to fine-tune a RAG model using Feast and Kubeflow Trainer. This guide covers preprocessing and scaling training on Red Hat OpenShift AI.

Learn how to implement retrieval-augmented generation (RAG) with Feast on Red Hat OpenShift AI to create highly efficient and intelligent retrieval systems.

Learn about Fedora Rawhide testing a solution that embeds SBOM metadata directly into Python wheels, allowing scanners to recognize backported security fixes.

Learn how to migrate from Llama Stack’s deprecated Agent APIs to the modern, OpenAI-compatible Responses API without rebuilding from scratch.

What RHEL 8 and 9 users need to know about Python 3.9 reaching the end-of-life phase upstream.

Optimize AI scheduling. Discover 3 workflows to automate RayCluster lifecycles using KubeRay and Kueue on Red Hat OpenShift AI 3.