Although containers, Kubernetes, and microservices seem to come up in every conversation, there's a big chasm between talking about a technology, demonstrating it, and actually using it in production. Anyone can discuss containers, many people can demo them, but far fewer are successfully using containers and Kubernetes in a microservices architecture.

Why? There are likely many reasons, but a simple one may be that developers don't know where to start.

Consider this series of articles your starting point. Relax, read on, and get ready to enter the exciting world of containers, Kubernetes, and microservices.

Parlez-vous C#?

To begin, let's be inclusive. Your favorite programming language is available in Linux containers. Whether you're a .NET veteran or a Swift beginner, you'll find that you can use containers. Get this: You can even create containers to run COBOL programs. You don't have to give up your favorite coding language to enter the world of Linux containers; put that excuse aside.

What are containers?

Containers are the result of executing an image. Yeah, that's not the most helpful definition. It's accurate, but not helpful.

Let's start with understanding an image, because an image runs in a container.

What is an image?

An image is a snapshot of an environment that can be executed—started, powered on, etc. Years ago, before the cloud, when all we had were PCs, a common way to duplicate a PC was to use a software tool that would make a perfect copy of a hard drive—an "image" of that drive. You could then take that hard drive, put it in another PC, and you'd have a duplicate of the original machine. This approach was very popular in corporate environments to ensure everyone had the same PC configuration.

So, think about that in terms of virtual computing. Instead of copying from one hard drive to another, you can copy a virtual machine (VM) to a file, which can then be sent around the network. That file can be copied to another host and booted, and there you have a copy of the first VM. That was standard operating procedure circa 2013, when scaling up an application meant spinning up more VMs to handle the workload.

Enter LXC

Unix containers date back to 1979 (surprised? I know I was), but Linux containers really got their foundation in 2008 with the introduction of LinuX Containers (LXC). Even so, that technology didn't really take off until 2013, when Docker exploded in popularity.

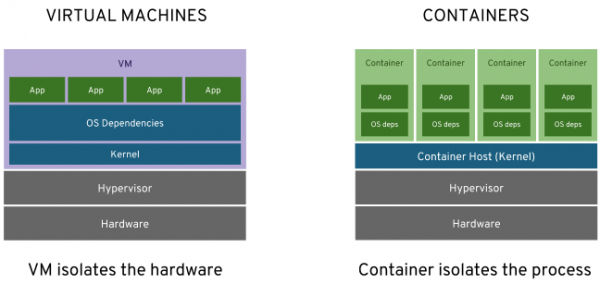

Imagine that, instead of cloning an entire VM, you could copy one executable program and put that into an image. And, imagine that the image didn't need a complete operating system (OS) inside of it. Instead, it simply has to conform to the kernel of the underlying operating system (i.e., all the system calls are compatible with, say, the Linux kernel). That means the image can be run on practically any Linux system, because it's only using the kernel. Now you have a portable application, which, by the way, starts incredibly quickly because the OS is already running on the host system.
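If you'd like to see the shared kernel for yourself, here is a minimal sketch, assuming Python 3 is available both on a Linux host and inside a container running on it. The function name `kernel_summary` is my own invention for illustration. Run it in both places: on a Linux host, the container should report the same kernel release as the host, because there is no second kernel. (On macOS or Windows, Linux containers run inside a Linux VM, so you'd see that VM's kernel instead.)

```python
import platform


def kernel_summary() -> str:
    """Describe the kernel this process is talking to."""
    # platform.release() returns the kernel release string, the same
    # value that `uname -r` prints on Linux.
    return f"{platform.system()} kernel {platform.release()}"


print(kernel_summary())
```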

That's a Linux image. When it's actually running, it's a container. You may hear the two terms used interchangeably. Although that's not technically correct, you'll know what is meant, so just go with it.
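If it helps to keep the two terms straight, think of an image as a class and a container as an instance of it. The toy model below is only an analogy, not how any container engine is implemented; `Image`, `Container`, `run`, and `stop` are hypothetical names I made up for this sketch.

```python
from dataclasses import dataclass
from itertools import count

_next_id = count(1)  # simple counter to give each container its own ID


@dataclass(frozen=True)
class Image:
    """An immutable template: a name plus the command to execute."""
    name: str
    command: tuple

    def run(self) -> "Container":
        # Every run yields a new, independent container from the same image.
        return Container(image=self, container_id=next(_next_id))


@dataclass
class Container:
    """A running (or stopped) instance created from an image."""
    image: Image
    container_id: int
    running: bool = True

    def stop(self) -> None:
        self.running = False


hello = Image(name="hello-world", command=("/hello",))
first = hello.run()
second = hello.run()
first.stop()  # stopping one container leaves the image and the other container alone
```

One image, many containers: stopping `first` changes nothing about `hello` or `second`.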

These images are smaller than comparable VMs; they can be shared and passed around as files, and they start up quickly. Because it's standard practice to make an image as small as possible, a lot of work goes into making smaller components to go inside them. For example, you need some part of an operating system in order to talk to the Linux kernel, so vendors (e.g., Red Hat, with Red Hat Enterprise Linux CoreOS) are doing what they can to make things more compact. Even .NET Core plays well because it is now shipped and installed in parts only as needed. That means a web site that formerly ran in Internet Information Services (IIS) using .NET Framework and took several gigabytes of space could now occupy, for example, 700 megabytes. Figure 1 shows a comparison of VMs and containers.

How can I get started?

Where you go to get started depends on your machine, specifically on which operating system you're running. macOS, Windows, and Linux have different requirements for running Linux containers.

macOS

The instructions can be found on this installation page.

Windows

Microsoft has instructions on their page for Linux Containers on Windows 10.

Fedora

This is my favorite. There are two tools that you can use on Fedora: Buildah and Podman. To install them, simply type buildah at a terminal command line and follow the prompts to install. Likewise, type podman at the terminal command line and follow the prompts.

Red Hat Enterprise Linux

One quick command, yum install buildah podman, and you're up and running.

Other Linux distros

You can find the necessary steps starting with this installation page.
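Whichever route you took, a quick sanity check is to see whether the tools ended up on your PATH. This is a minimal sketch, assuming Python 3 is installed; the helper name `find_container_tools` and the default list of tool names are my own choices for illustration.

```python
import shutil


def find_container_tools(names=("podman", "buildah", "docker")):
    """Map each tool name to its full path on PATH, or None if it isn't found."""
    return {name: shutil.which(name) for name in names}


for tool, path in find_container_tools().items():
    print(f"{tool}: {path or 'not found'}")
```

A tool showing up here only means the executable is installed; it doesn't prove the tool is configured correctly.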

What is this daemon?

If you are using Docker as your container engine, note that it requires a daemon to be running. This process will be transparent to you, but it does consume CPU cycles and you may occasionally see messages about or from it. The daemon must be running in order to build an image.

If you're using Buildah and Podman, you don't need any daemons. They were architected from the start with this in mind. Like a lot of software projects, they benefited from the past and made improvements.
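To make the difference concrete, here is a rough sketch that looks for the Unix socket the Docker daemon listens on. It assumes a Linux machine and Docker's default socket path, `/var/run/docker.sock`; the function name `docker_socket_present` is hypothetical. Finding the socket is only a hint, since it doesn't prove the daemon is healthy. With Buildah and Podman there is simply no equivalent socket to look for.

```python
import os
import stat

# Assumption: Docker's default Unix socket location on Linux.
DOCKER_SOCKET = "/var/run/docker.sock"


def docker_socket_present(path: str = DOCKER_SOCKET) -> bool:
    """Return True if a Unix socket exists at the given path."""
    try:
        return stat.S_ISSOCK(os.stat(path).st_mode)
    except OSError:
        # Missing path or no permission to inspect it: treat as absent.
        return False


if docker_socket_present():
    print("Found a Docker daemon socket.")
else:
    print("No Docker daemon socket found.")
```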

What's next?

In the next article, I'll show how to build and run a "Hello World" app in the language of your choice.

Last updated: September 3, 2019